Halfway through the semester, when deadlines stack up and revision notes start to blur together, a familiar question tends to surface. Is there a smarter way to prepare without cutting corners?

That question sits at the heart of the conversation around AI exam helpers. You hear the term everywhere, often bundled with anxiety, curiosity, and no small amount of confusion.

This article unpacks what an AI exam helper actually is, how it works, and where the lines are clearly drawn. You will see how these tools fit into exam preparation, where they help, and where they cross into territory that most schools explicitly prohibit.

Understanding that distinction matters. Used well, AI exam helpers support learning. Used poorly, they undermine it. Let’s start with the basics before moving into how these tools really function behind the scenes.

What is an AI Exam Helper, Really?

An AI exam helper is an AI-powered tool designed to support students during exam preparation, review, and, in some cases, assessment-related workflows. At its core, it assists with understanding, not substitution. That distinction is important. As of 2026, AI exam helpers are widely framed as learning aids, not exam shortcuts.

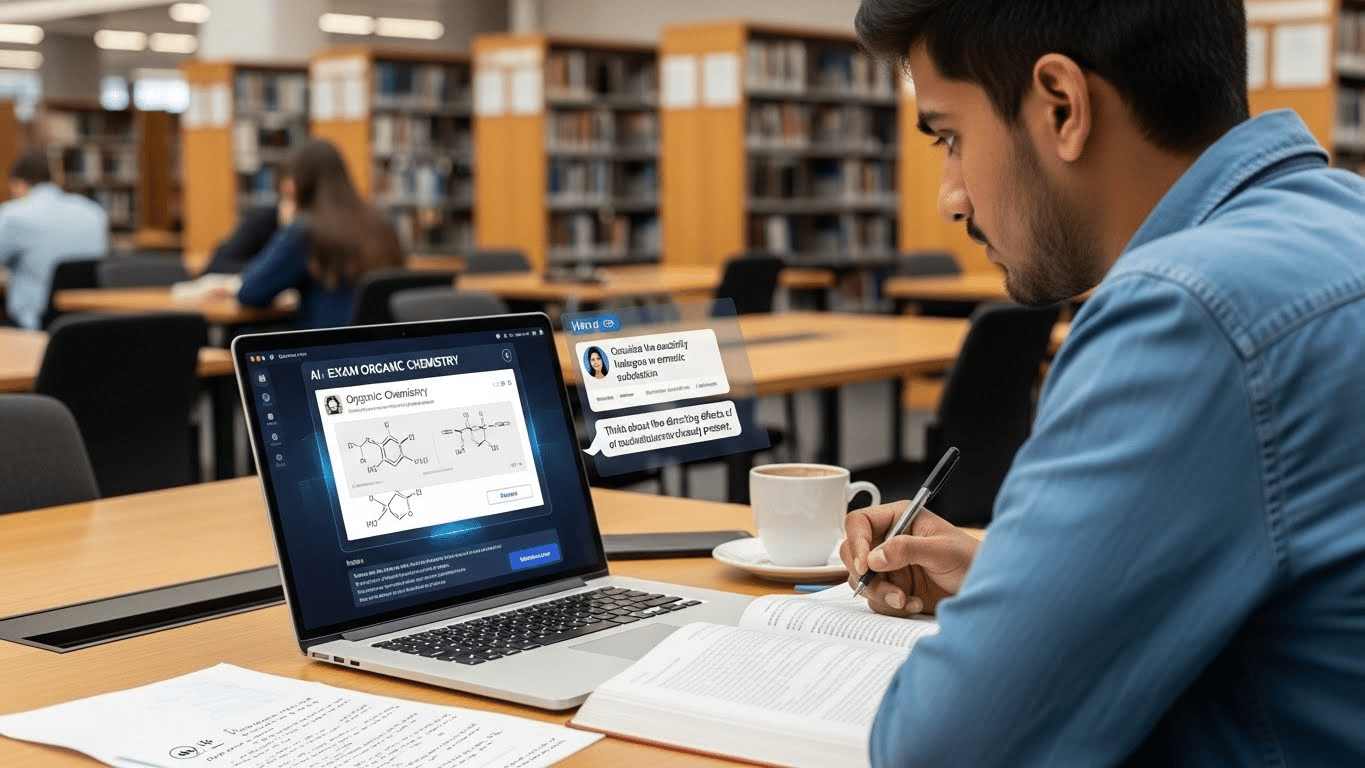

These tools are often described as 24/7 digital tutors because they are available whenever you study. They help explain concepts, generate practice questions, summarize materials, and respond quickly when you are stuck.

You will find them used across subjects, from computer science and organic chemistry to broader general education courses where revision demands can feel relentless.

What an AI exam helper is not matters just as much. It is not the same as hiring someone to take an exam on your behalf. That practice violates academic integrity outright. AI exam helpers are meant to support the learning process, not replace it.

Understanding that boundary sets the stage for everything that follows, especially when you start asking how these tools actually work.

How Do AI Exam Helpers Actually Work Behind the Scenes?

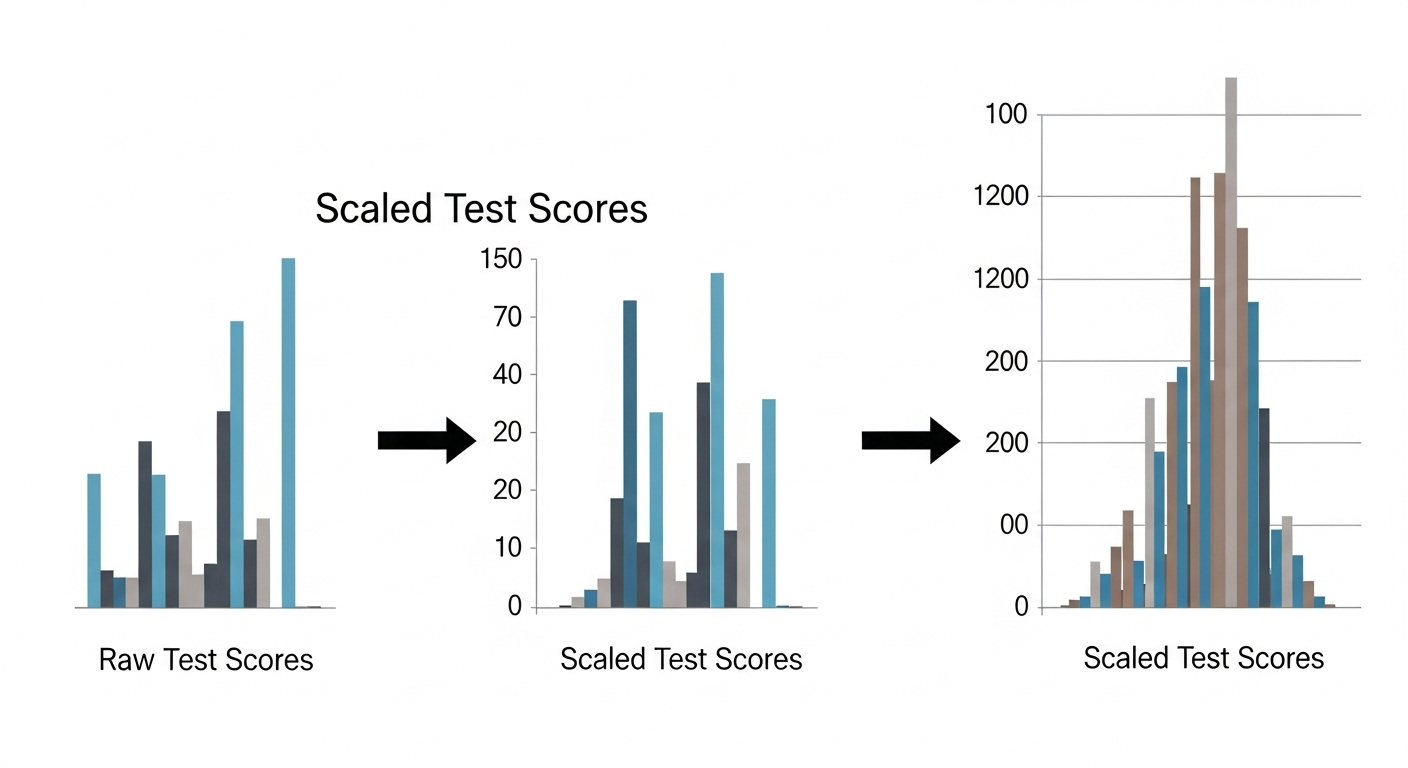

The mechanics are less mysterious than they sound. AI exam helpers rely on a combination of natural language processing and machine learning to function. Together, these technologies allow the tool to interpret content, respond meaningfully, and adapt over time.

Most AI exam helpers analyze uploaded materials such as PDFs, lecture slides, textbook photos, and past exams. Large language models interpret question types, intent, and difficulty rather than just matching keywords.

That is why explanations often feel contextual instead of canned. Systems generate summaries, step-by-step solutions, and clarifications designed to support understanding, not just completion.

Equally important, AI exam helpers track progress and performance over time. Patterns emerge. Weak areas become visible. Support adjusts.

Behind the scenes, this typically involves:

- Natural language processing, used to understand exam questions and written answers

- Machine learning, which adapts explanations to learning pace and topic difficulty

- Data analytics, helping track readiness, gaps, and overall progress
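To make the analytics step concrete, here is a minimal Python sketch of how a tool might turn practice results into a list of weak areas. It is an illustration only, not how any particular product works. The function names, the sample topics, and the 60 percent threshold are all assumptions chosen for the example.

```python
from collections import defaultdict


def topic_accuracy(attempts):
    """Return {topic: fraction correct} from (topic, is_correct) pairs."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for topic, is_correct in attempts:
        totals[topic] += 1
        if is_correct:
            correct[topic] += 1
    return {topic: correct[topic] / totals[topic] for topic in totals}


def weak_topics(attempts, threshold=0.6):
    """List topics below the threshold, weakest first.

    The 0.6 default is an assumed cutoff, not a standard value.
    """
    accuracy = topic_accuracy(attempts)
    below = [topic for topic, score in accuracy.items() if score < threshold]
    return sorted(below, key=lambda topic: accuracy[topic])


# Hypothetical practice log: (topic, answered correctly?)
attempts = [
    ("stoichiometry", True), ("stoichiometry", False), ("stoichiometry", False),
    ("acid-base", True), ("acid-base", True),
    ("kinetics", False), ("kinetics", True),
]

print(weak_topics(attempts))  # ['stoichiometry', 'kinetics']
```

A real system would likely weight recent attempts more heavily and account for question difficulty, but the basic idea is the same: aggregate results by topic, then surface the gaps.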

Once you see how these systems operate, it becomes clearer what they can and cannot do during study time.

What Can an AI Exam Helper Help You Do While Studying?

Used responsibly, an AI exam helper acts like a structured study partner that never gets tired. It can generate practice exam questions tailored to your course material and create dynamic quizzes based on past exams or uploaded content. That repetition helps reinforce knowledge without turning study sessions into guesswork.

AI exam helpers also explain important points and break down complex concepts when textbooks or notes feel impenetrable. Instead of rereading the same paragraph, you can ask for clarification, examples, or alternative explanations. Many tools also summarize readings and help organize notes, which saves time during high-pressure weeks.

Support tends to be practical and concrete:

- Practice short-answer questions similar to real exams

- Review different topics within a single course

- Get explanations instead of just answers

- Track study progress and time spent

Because these tools adapt to your pace, you study at your own speed rather than rushing to keep up with an external schedule. That flexibility is helpful. But it also raises an obvious question about boundaries. What happens when studying turns into testing?

Can AI Exam Helpers Give You Answers During an Exam?

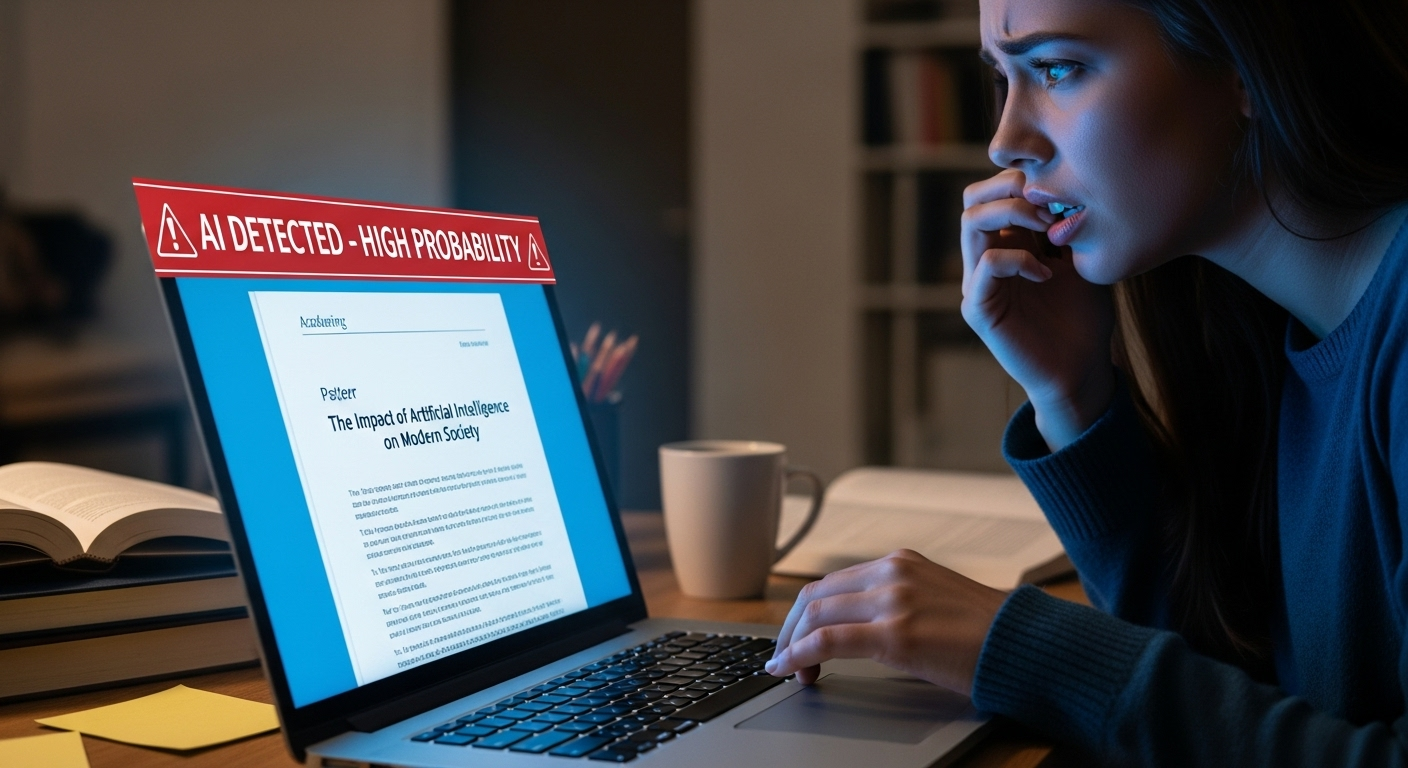

Technically, yes. AI can generate answers and explanations almost instantly. But context matters more than capability. Using an AI exam helper during a live or proctored exam is considered cheating in most educational institutions. There is no gray area here.

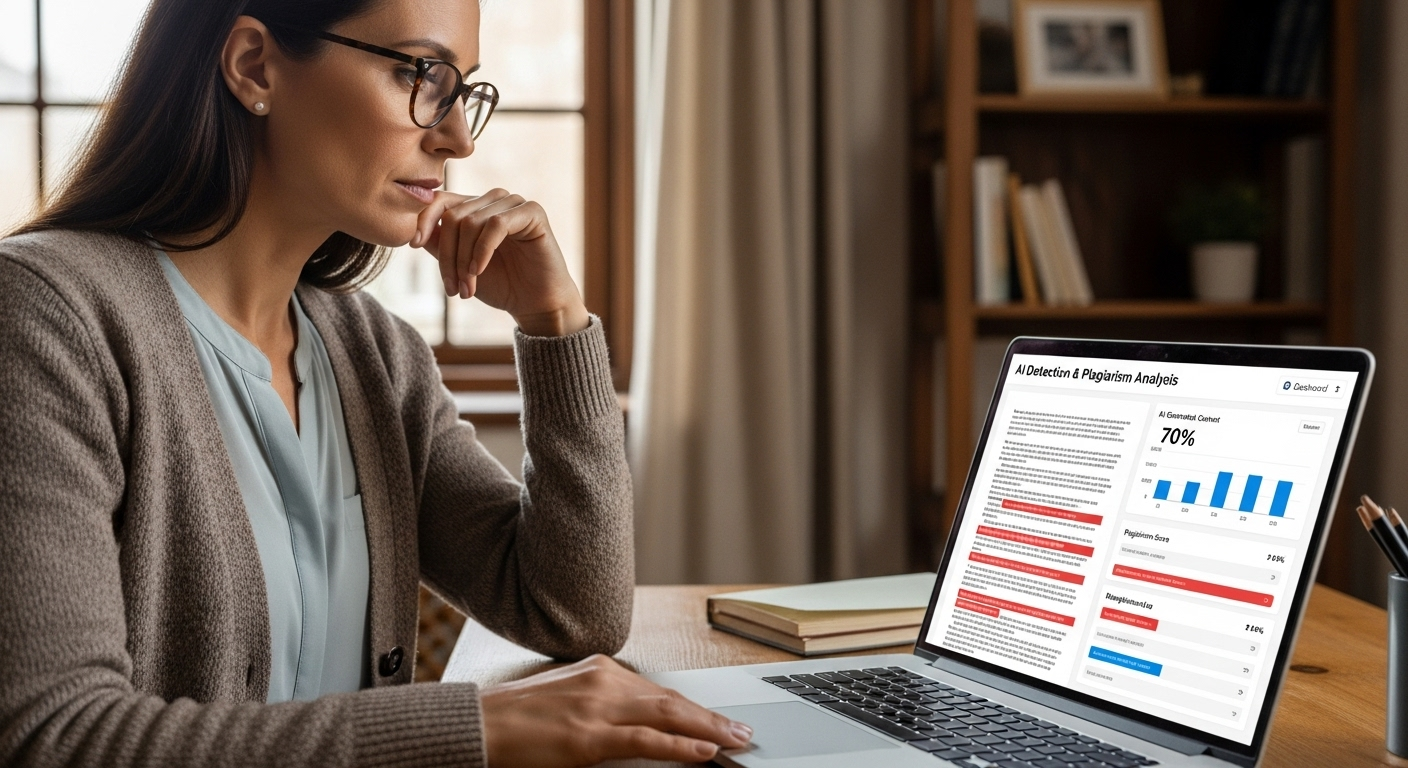

AI exam helper services that take exams on behalf of students violate academic integrity outright. In 2026, 53 percent of students believe AI-based plagiarism is more prevalent than in previous years, which has pushed schools to tighten policies and monitoring. The expectation is clear. Submitted work must reflect the student’s own thinking, even when AI supported the preparation.

Preparation is allowed. Live assistance during an exam is not. That distinction protects fairness and learning outcomes. Understanding where that line sits is essential before relying on any AI-powered tool.

From here, it becomes important to explore how exam helpers differ from homework tools, and why that difference shapes how they should be used.

Are AI Exam Helpers the Same as Homework Helpers?

They look similar on the surface, which is where the confusion starts. AI exam helpers and homework helpers both rely on artificial intelligence, both respond quickly, and both can support students across multiple assignments. But their purpose and timing differ in important ways.

Homework helpers focus on assignments and practice. They assist during the learning process by helping you work through problems, understand concepts, and complete tasks that are meant to be formative. The goal is repetition and skill-building. Exam helpers, by contrast, focus on review, readiness, and exam strategies. They help you prepare, not submit.

Both tools can save time on tasks that would otherwise feel time-consuming, such as organizing notes or reviewing different topics before a test. And both carry misuse risks if they replace thinking instead of supporting it. The distinction matters because policies often treat homework support differently from exam-related assistance.

Understanding that difference helps you use the right tool at the right moment, without crossing lines that institutions take seriously.

How Do AI Exam Helpers Personalize Learning for Each Student?

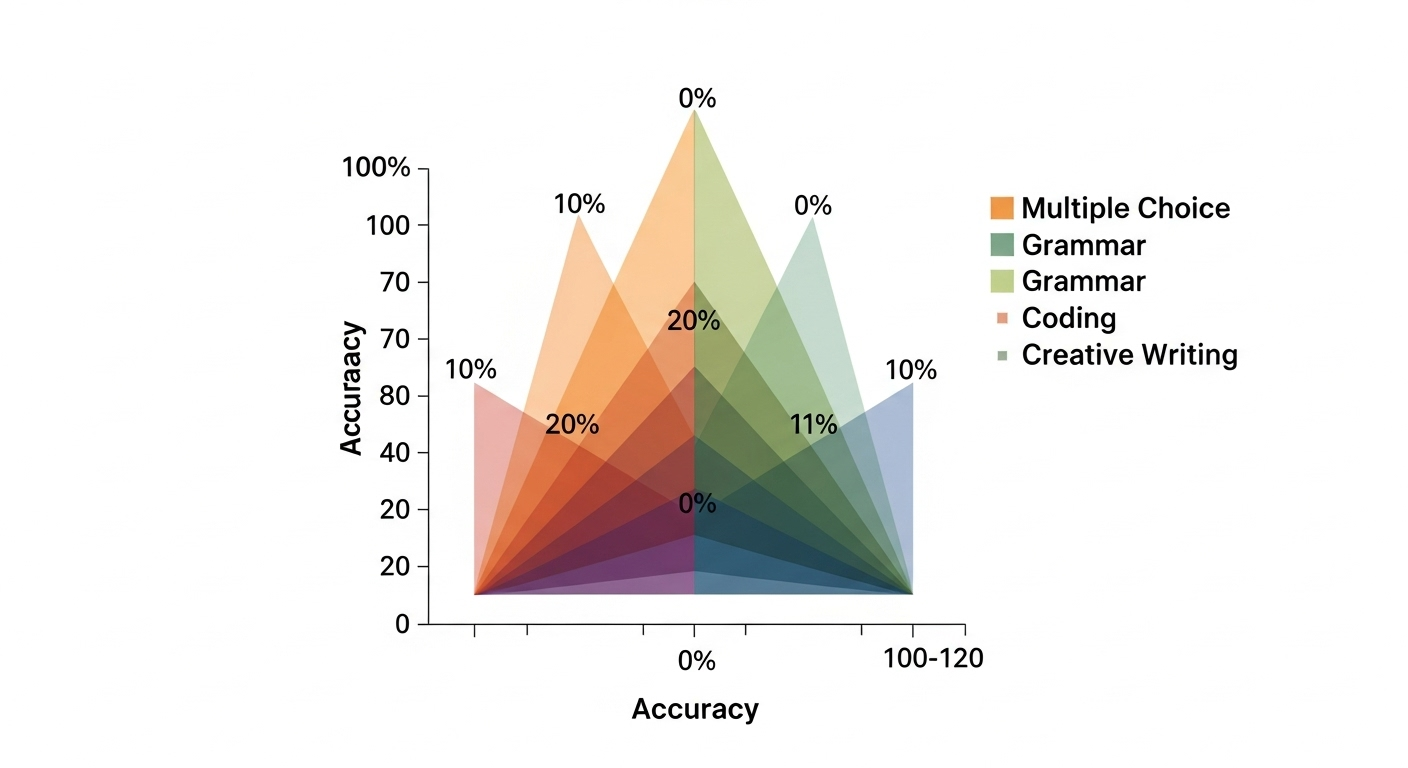

Personalization is where AI exam helpers tend to shine, when used as intended. These tools track study time, accuracy, and topic mastery as you work. Over time, patterns emerge. Strong areas become obvious. Weak spots stop hiding.

Based on performance, explanations adjust. If you struggle with one concept, the tool slows down and reframes it. If you move quickly, it shifts difficulty rather than repeating what you already know. Practice tests are generated dynamically, pulling from different question types to match where you are in the learning process.

Support also adapts to learning styles. Some students benefit from step-by-step breakdowns. Others prefer summaries or comparisons. AI exam helpers adjust accordingly.

Common personalization features include:

- Personalized study plans built around course material

- Adaptive question difficulty that responds to progress

- Progress tracking dashboards showing readiness and gaps
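Adaptive difficulty can be pictured as a simple rule that steps questions up or down based on recent answers. The sketch below is one assumed approach: a basic "staircase" with difficulty levels from 1 to 5. The function name, the level range, and the two-in-a-row rule are hypothetical, and real tools may use far more sophisticated models.

```python
def next_difficulty(level, recent_results, min_level=1, max_level=5):
    """Pick the next question difficulty from recent answers.

    recent_results is a list of booleans, newest last.
    """
    if recent_results and not recent_results[-1]:
        # Step down after a miss so the concept can be reframed.
        return max(min_level, level - 1)
    if len(recent_results) >= 2 and all(recent_results[-2:]):
        # Step up after two correct answers in a row.
        return min(max_level, level + 1)
    return level


# Hypothetical session: start at level 3 and answer four questions.
level = 3
history = []
for answer in [True, True, False, True]:
    history.append(answer)
    level = next_difficulty(level, history)

print(level)  # 3
```

In this run the level rises to 4 after two correct answers, drops back to 3 after the miss, and stays there until another streak builds. The point is that the tool reacts to how you are doing, instead of repeating what you already know.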

This level of tailoring can deepen understanding, but it also raises expectations. Personalization only helps if it leads to active learning, not passive dependence.

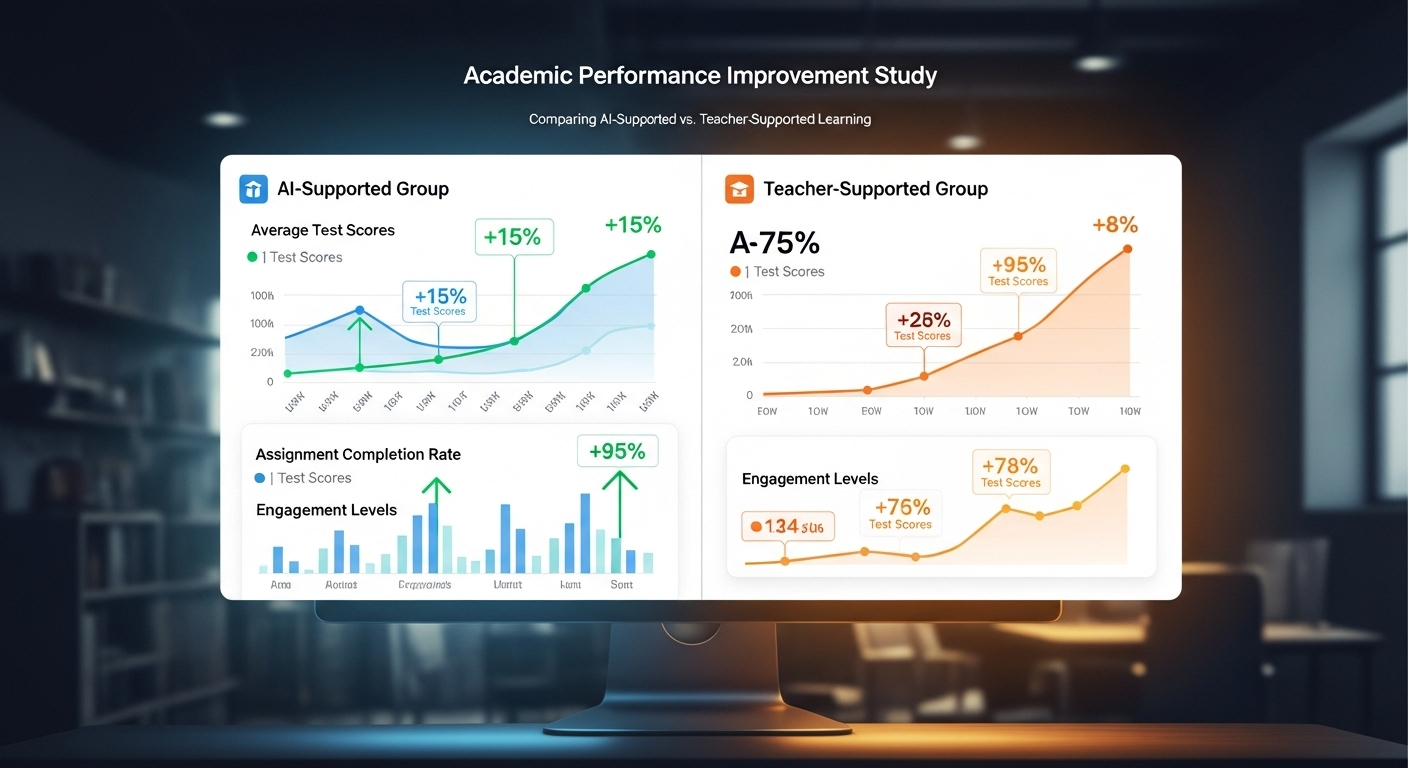

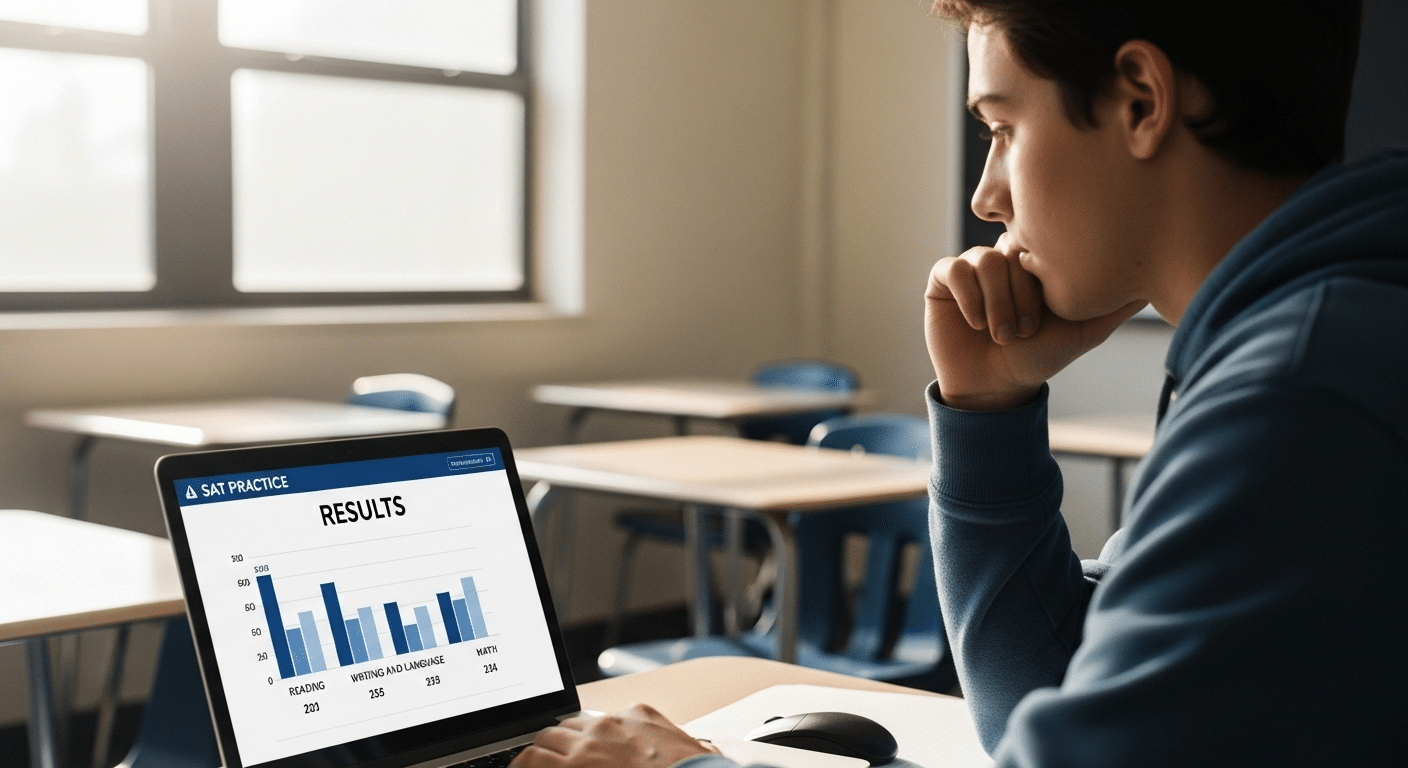

Do AI Exam Helpers Actually Improve Exam Performance?

For many students, the short answer is yes, with conditions. Students often report improved confidence when using AI exam helpers because uncertainty drops. You know what you’ve covered. You know what still needs work.

These tools can also reduce exam-related stress by helping manage time and focus. Instead of cramming blindly, study sessions become structured. That structure matters. When AI is used for preparation rather than shortcuts, performance tends to improve because understanding improves.

However, there is a tradeoff. Over-reliance can reduce long-term retention. When answers appear too quickly, effort shrinks. Learning becomes shallow. That is why improvement depends on how the tool is used, not simply whether it is used.

AI exam helpers support progress when they guide thinking. They undermine it when they replace thinking. The difference shows up most clearly over time, not just on one test.

What Are the Risks of Using an AI Exam Helper?

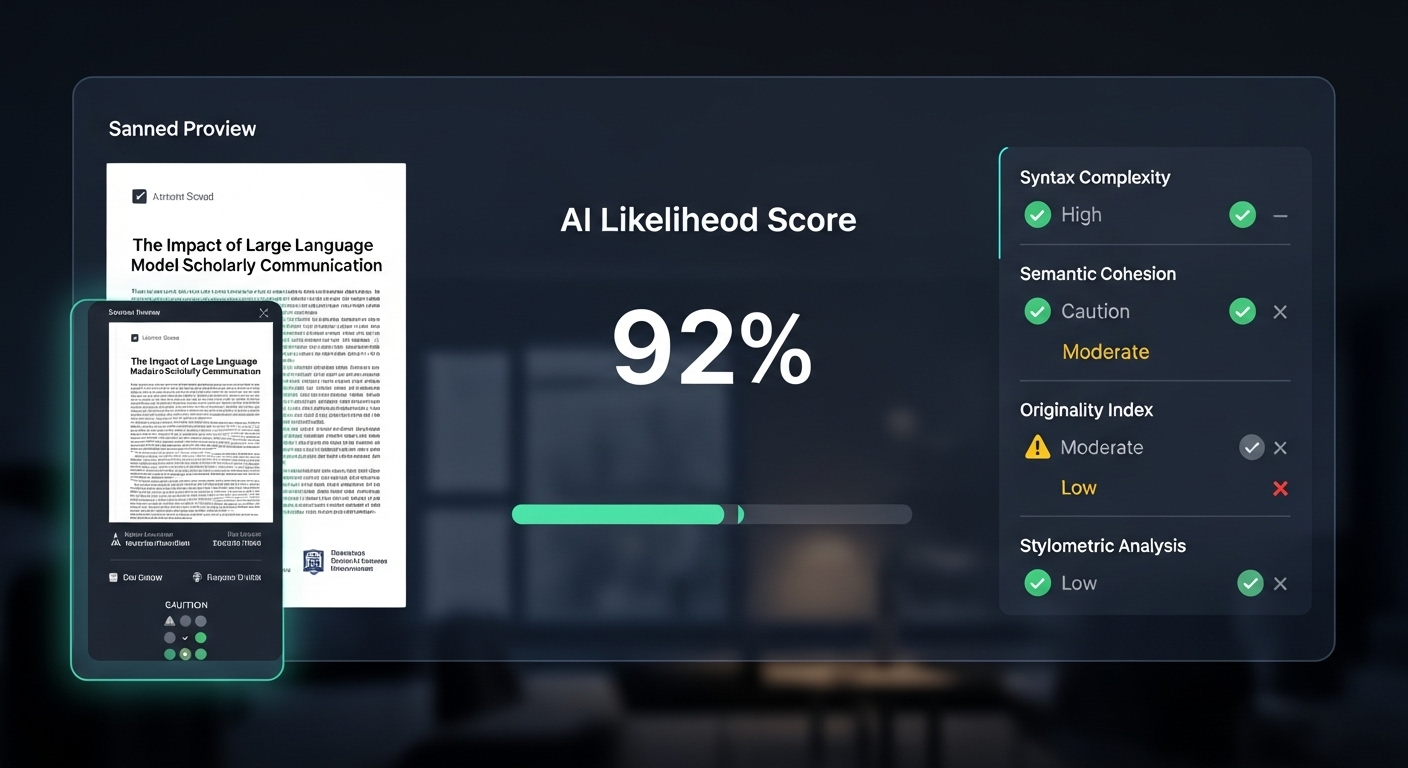

No tool is neutral. AI exam helpers carry real risks that are easy to overlook when convenience takes center stage. Academic misconduct and plagiarism risks sit at the top of the list. Generating answers without understanding invites violations that institutions increasingly monitor.

There is also a cognitive cost. Over-reliance can lead to disengagement, where effort drops and critical thinking erodes. When struggle disappears entirely, learning often follows it out the door.

Other concerns are structural:

- Integrity violations, especially during restricted assessments

- Privacy risks, tied to data collection and storage

- Loss of critical thinking, from habitual shortcutting

- Ethical concerns, around fairness and access

Reduced student-teacher interaction is another risk. When AI becomes the default source of help, mentorship fades. These risks do not mean AI exam helpers should be avoided. They mean boundaries matter.

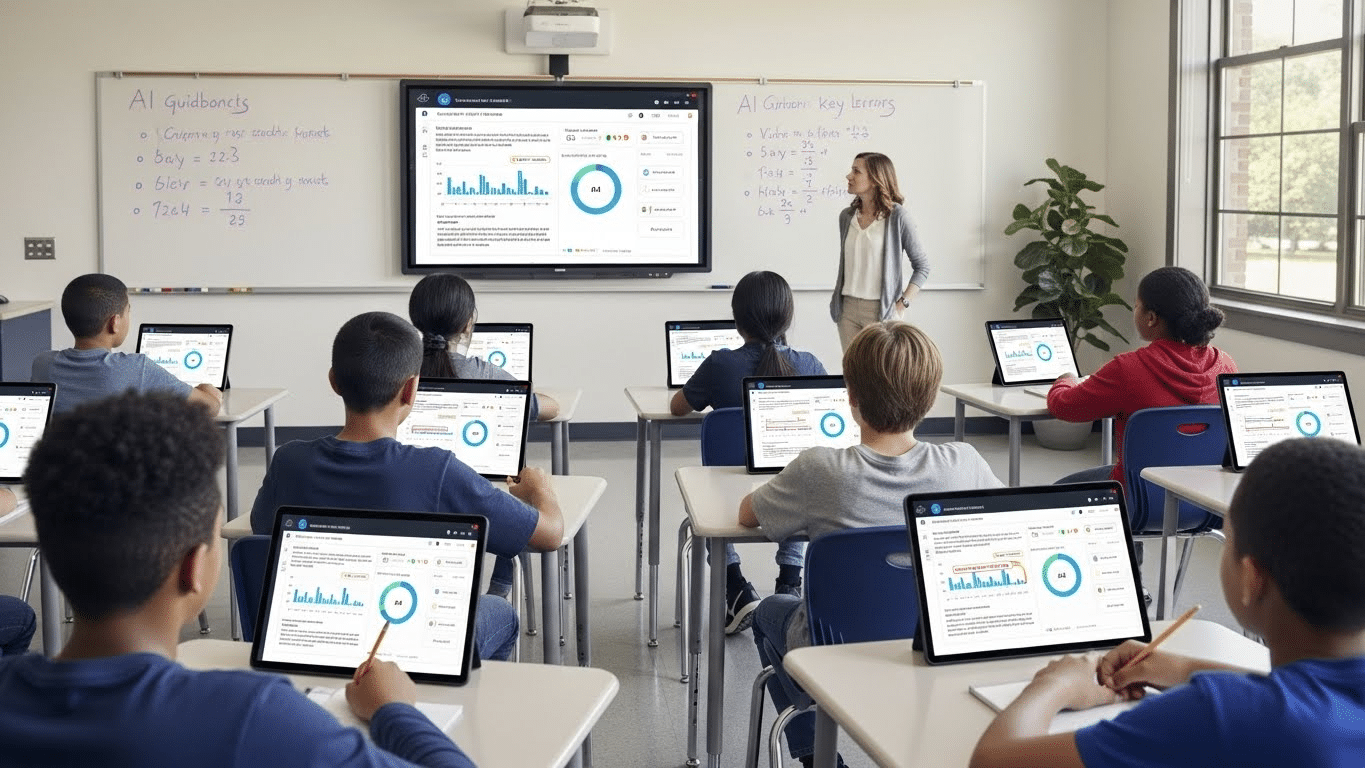

How Do Schools and Universities Use AI in Online Exams?

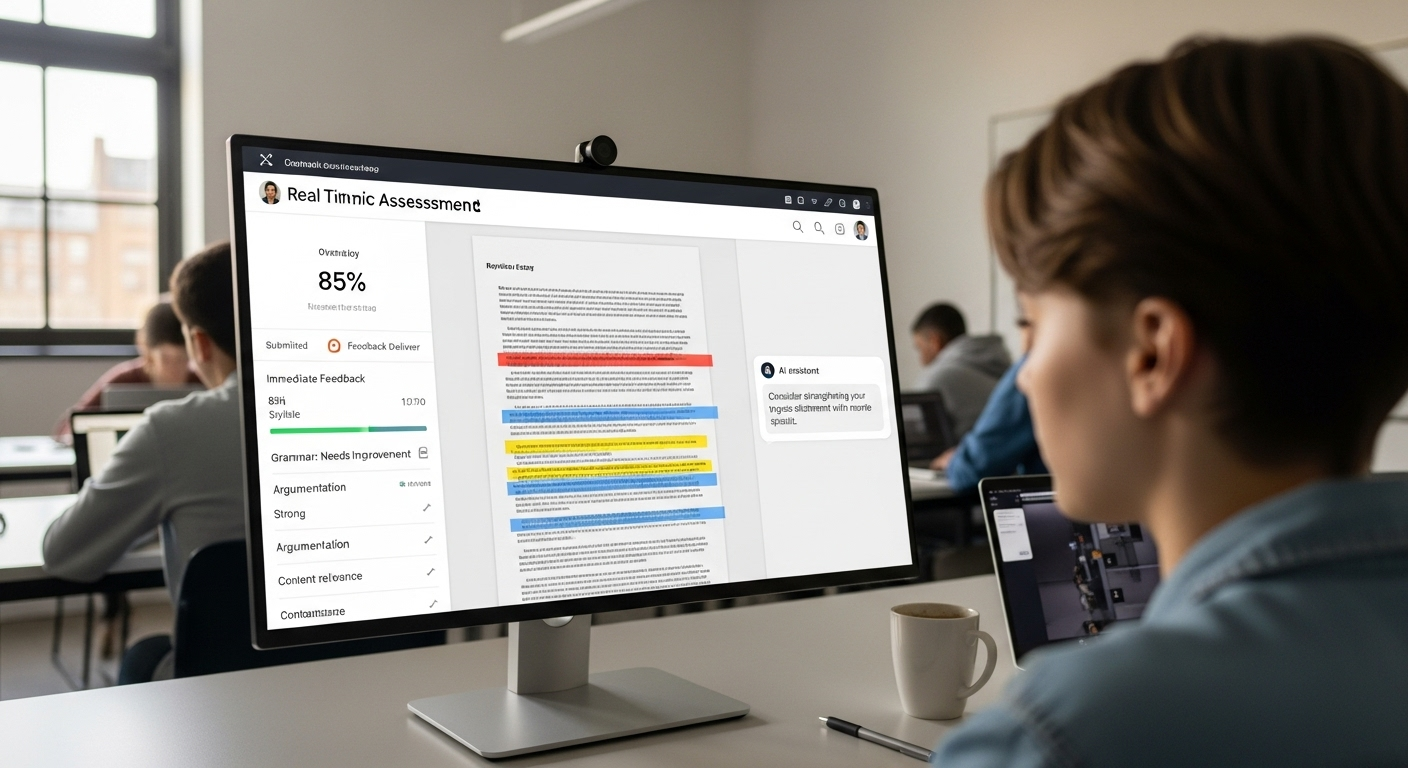

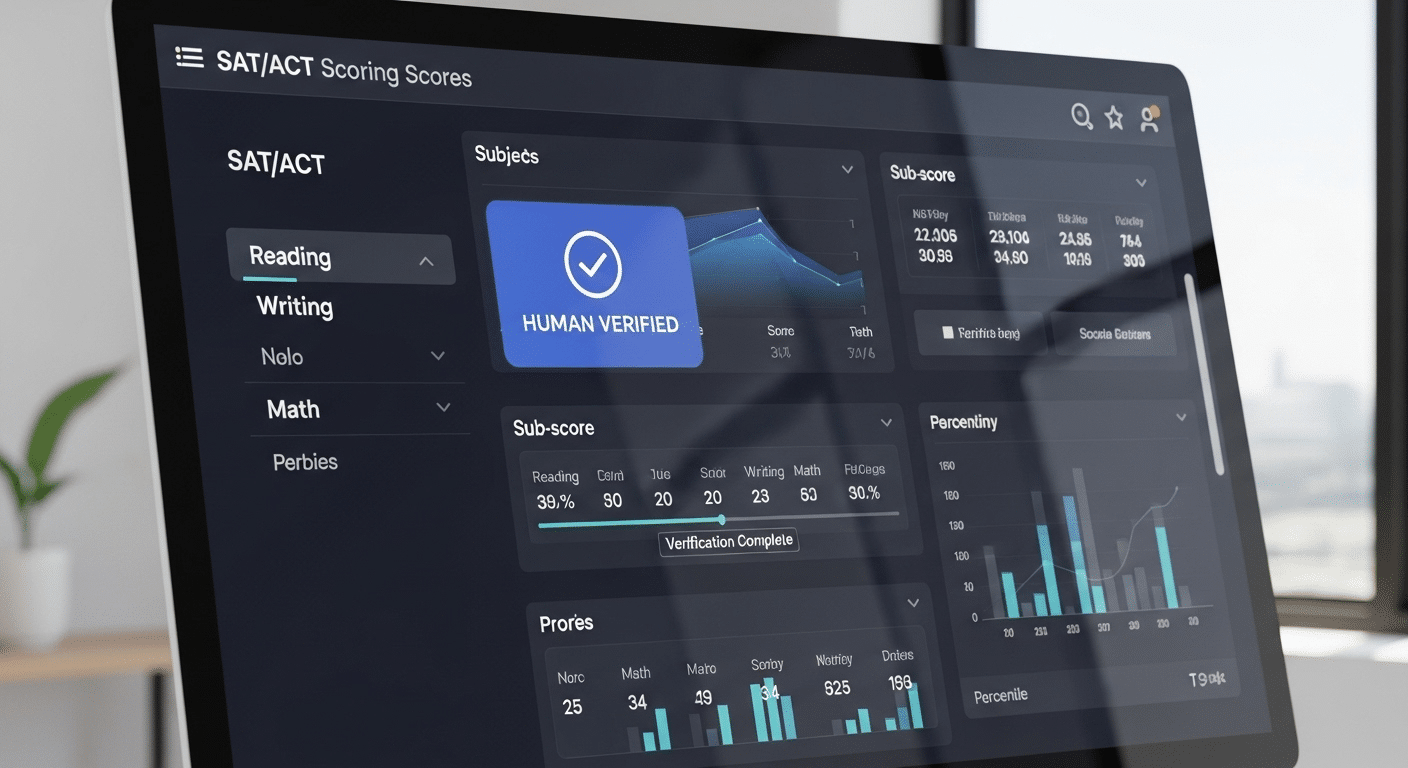

Institutions approach AI from the opposite angle. While students use AI exam helpers to prepare, schools use AI to secure and manage online exams. AI-powered proctoring tools monitor exams in real time, flagging unusual behavior and enforcing rules at scale.

Identity verification may include facial recognition or biometric analysis, particularly in proctored exam environments. AI analyzes patterns rather than isolated actions, which helps reduce false positives. Automated grading also plays a role, improving efficiency and accuracy for objective question types.
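The idea of analyzing patterns rather than isolated actions can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the logic of any real proctoring product. The function name, the 60-second window, and the three-event minimum are all hypothetical.

```python
def should_flag(event_times, window_seconds=60, min_events=3):
    """Return True if at least min_events anomalies fall within one window.

    event_times holds the timestamps (in seconds) of unusual events,
    such as a student looking away from the screen.
    """
    times = sorted(event_times)
    start = 0
    for end in range(len(times)):
        # Shrink the window until it spans at most window_seconds.
        while times[end] - times[start] > window_seconds:
            start += 1
        if end - start + 1 >= min_events:
            return True
    return False


print(should_flag([10, 400, 900]))    # False: isolated events, far apart
print(should_flag([100, 120, 150]))   # True: a cluster within one minute
```

A single glance away is ignored, while a cluster of events in a short span gets surfaced for review. Requiring a cluster is one way a pattern-based rule can cut down on false positives.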

Beyond monitoring, AI streamlines exam creation and management. Question banks grow faster. Scheduling becomes simpler. Educators spend less time administering exams and more time teaching.

The same technology that supports learning can also enforce integrity. Context determines which side you see.

Is Using an AI Exam Helper Ethical or Allowed?

The answer depends on policy, timing, and intent. Most institutions allow AI exam helpers for exam preparation. Reviewing content, practicing questions, and clarifying concepts typically fall within acceptable use.

Using AI during a live or proctored exam is usually prohibited. That line is rarely ambiguous. Ethical use emphasizes learning, not outsourcing thinking. Transparency matters. If you are unsure, institutional guidelines are the authority, not the tool’s marketing language.

Ethics here are practical, not abstract. AI should support understanding. Once it replaces it, the relationship breaks down. Knowing where your institution draws that boundary is part of responsible use.

How Can Educators Use AI Exam Helper Technology Responsibly?

From an educator’s perspective, AI exam helper technology offers leverage when applied thoughtfully. AI can automate grading and assist with exam creation, saving time that would otherwise be consumed by repetitive tasks.

That time matters. When administrative load shrinks, educators focus more on teaching, mentoring, and curriculum design. AI also supports exam integrity by helping detect irregular patterns and enforce consistent assessment criteria.

Responsible use requires structure. Clear policies, training, and transparency are essential. Educators must understand not only what AI can do, but what it should not do. When that balance is in place, AI supports assessment without undermining trust.

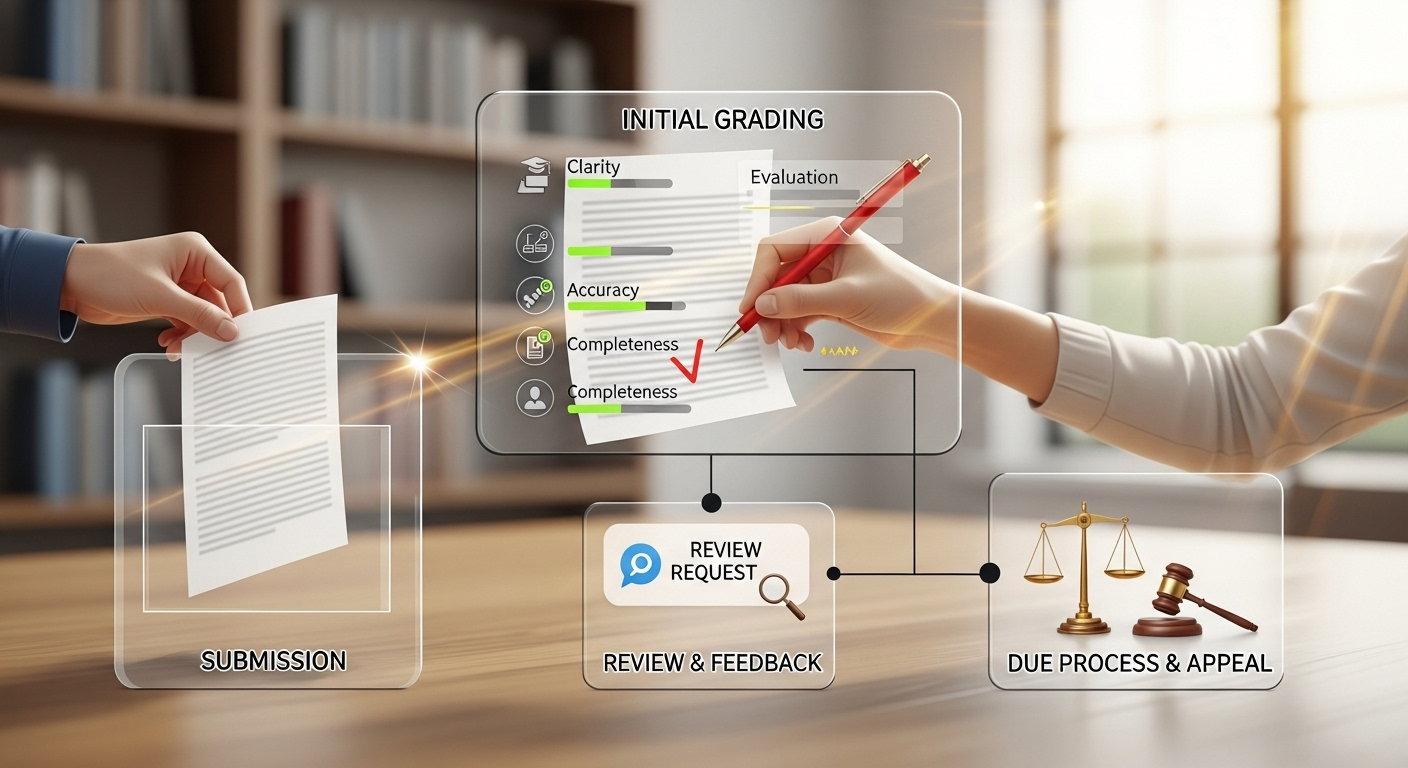

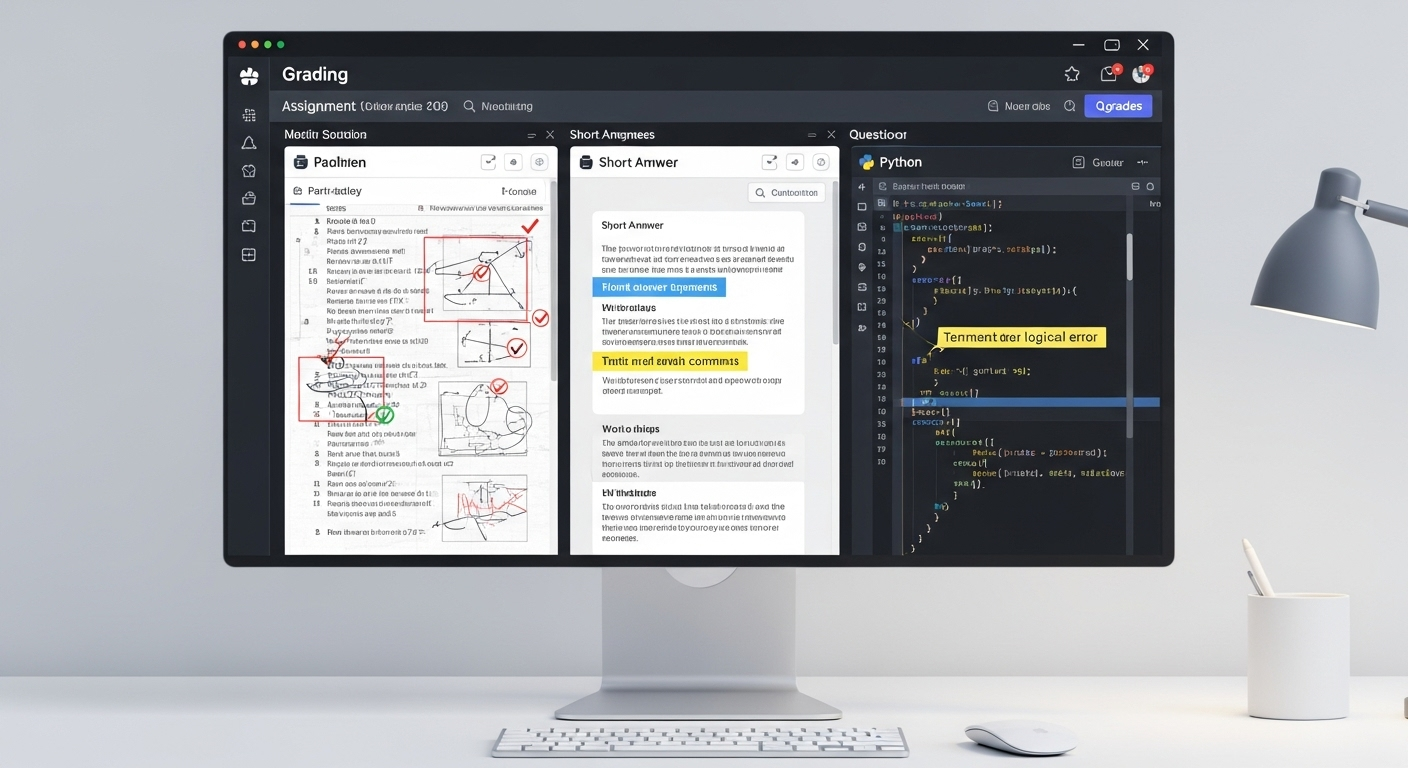

How Can PowerGrader Support Ethical, Scalable Exam Assessment?

Ethical assessment becomes harder as scale increases. PowerGrader is designed to address that challenge without removing educators from control. It provides instructor-controlled AI feedback, ensuring assessment criteria are defined by humans and applied consistently.

Pattern detection across cohorts helps surface common issues early, rather than after final grades. At the same time, PowerGrader reduces workload without lowering rigor, allowing educators to focus on instruction rather than repetitive grading.

Most importantly, the platform follows a human-in-the-loop governance model. Educators can review, adjust, or override AI outputs at any stage. This design keeps accountability where it belongs while still delivering efficiency at scale.

That balance makes ethical, institution-ready assessment practical, not theoretical. Try Apporto’s AI PowerGrader today!

Conclusion

AI exam helpers are evolving away from shortcuts and toward structured learning tools. The trend is clear: stronger emphasis on ethics, clearer boundaries, and better alignment with educational goals.

Human judgment remains essential. No system replaces mentorship, curiosity, or accountability. The future lies in balance. AI supports learning, educators guide it, and students remain responsible for their own progress.

When support and accountability coexist, AI exam helpers become what they were meant to be. Tools. Not substitutes.

Frequently Asked Questions (FAQs)

1. What is an AI exam helper?

An AI exam helper is an AI-powered tool that supports exam preparation by explaining concepts, generating practice questions, and helping students review material responsibly.

2. Can AI exam helpers be used during exams?

Using AI exam helpers during live or proctored exams is generally prohibited and considered a violation of academic integrity policies.

3. Are AI exam helpers considered cheating?

AI exam helpers are not cheating when used for preparation, but generating answers during restricted exams is widely classified as academic misconduct.

4. Do AI exam helpers replace studying?

No. They support studying by organizing materials and explaining concepts, but effective learning still requires effort, reflection, and practice.

5. Are AI exam helpers safe to use?

Safety depends on the tool. Risks include data privacy concerns, over-reliance, and misuse if institutional guidelines are ignored.

6. How do schools detect AI misuse during exams?

Schools use AI-powered proctoring, behavior analysis, and identity verification to monitor exams and flag irregular activity.