Marking used to be invisible work. Late nights, quiet weekends, stacks that never quite disappeared. Lately, though, it has become a headline issue.

Across education and professional training, marking workloads are rising faster than time or staffing can realistically absorb.

That pressure explains the shift. Institutions are moving away from traditional automated marking systems and toward AI marking tools that promise scale, speed, and consistency.

But the language has become messy. AI marking, AI grading, generative AI. They are often used interchangeably, even though they mean very different things.

What’s changing now is the focus. The conversation is no longer just about grades. It is about learning-focused feedback processes and how feedback actually helps students improve.

Quality assurance bodies are beginning to address AI marking explicitly, not as a novelty, but as a practice that needs definition, limits, and accountability.

That is why the question “What is AI marking?” suddenly matters more than it did before.

What Is AI Marking, in Plain and Honest Terms?

At its simplest, AI marking is the use of artificial intelligence to mark assessments and assignments. Not to judge students as people. Not to replace educators. To assist with the work of evaluating student responses at scale.

AI marking systems rely on machine learning and natural language processing to evaluate students’ written responses, not just right-or-wrong answers. That means they can look at structure, relevance, and alignment with marking criteria, then draft feedback based on those patterns. In many cases, this feedback is aligned to existing rubrics provided by educators.

What AI marking does not do is remove humans from the process. Human markers remain essential, especially where context, creativity, or consequences matter.

In practice, AI marking is:

- Not just grades, but draft feedback and insights

- Support for learning-focused feedback, not one-off scoring

- Humans making final decisions, always

- Designed to support human judgement, not override it

Used honestly, AI marking is an assistant. Not an authority.

How Is AI Marking Different From Traditional Automated Marking Systems?

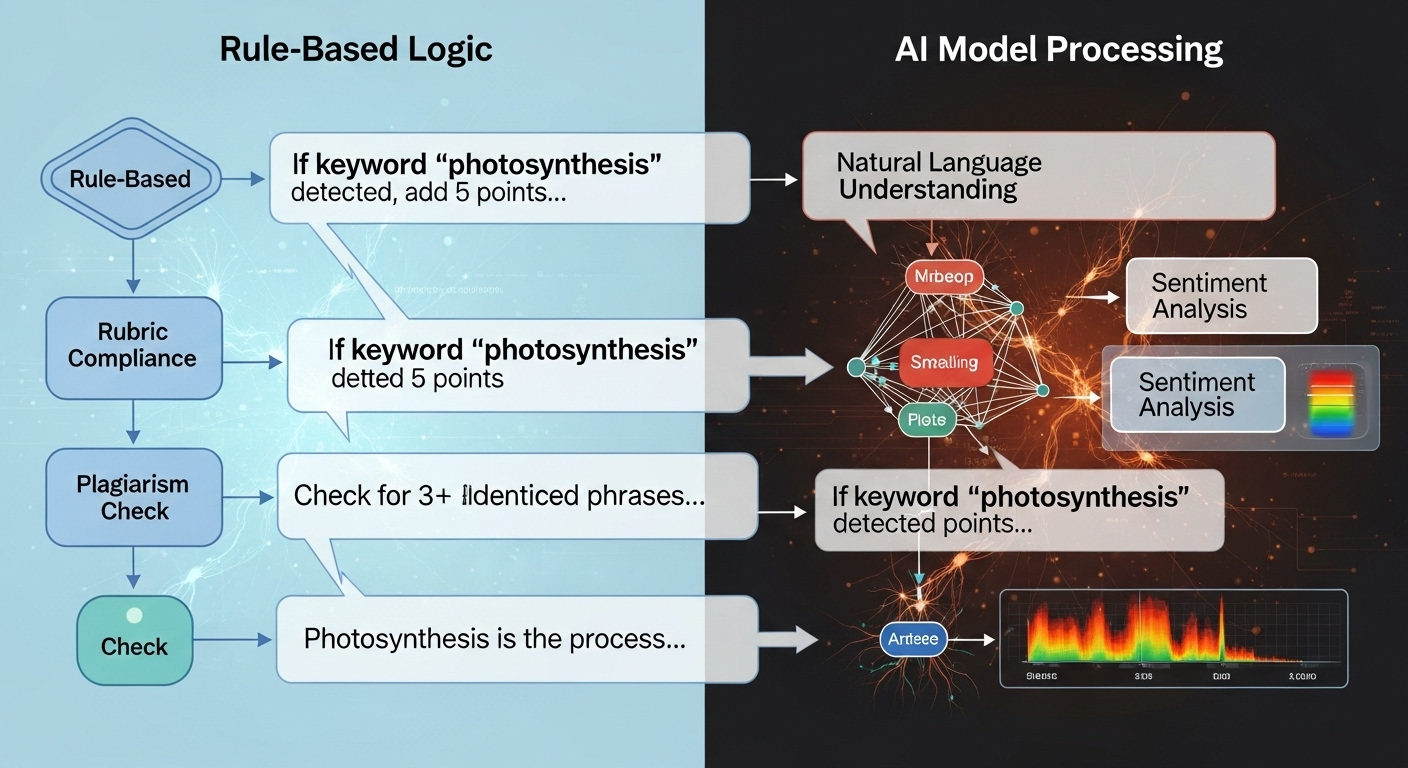

Traditional automated marking systems rely on fixed rules. If an answer matches a predefined pattern, it passes. If it doesn’t, it fails. The logic is deterministic. Same input, same output. Every time. That approach works for tightly constrained tasks, but it collapses the moment responses become more nuanced.
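Here is a minimal Python sketch of a rule-based marker that makes the contrast concrete. The answer key, question IDs and patterns are hypothetical, and real systems vary. The second call shows the brittleness described above: a correct answer phrased as a full sentence scores zero.

```python
import re

# Hypothetical answer key: each question maps to a regular expression
# that a correct answer must match in full (after normalization).
ANSWER_KEY = {
    "q1": r"paris",
    "q2": r"h2o|water",
}

def rule_based_mark(question_id: str, answer: str) -> int:
    """Return 1 if the answer matches the predefined pattern, else 0.
    Deterministic: the same input always produces the same output."""
    pattern = ANSWER_KEY[question_id]
    # Normalize case and whitespace so trivial formatting differences don't matter.
    cleaned = " ".join(answer.split()).lower()
    return 1 if re.fullmatch(pattern, cleaned) else 0

print(rule_based_mark("q1", "  Paris "))              # 1
print(rule_based_mark("q1", "The capital is Paris"))  # 0, correct but not an exact match
```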

AI marking works differently. It uses machine learning models that learn from large sets of previously scored work. Instead of checking for exact matches, the system compares student answers against learned patterns of quality. The result is probabilistic, not absolute. The same input may vary slightly over time, especially as models update or context shifts.

Here’s the contrast, plainly:

| Traditional Automated Marking | AI Marking |
|---|---|
| Fixed rules | Machine learning models |
| Deterministic output | Probabilistic output |
| Same input → same output | Same input may vary |
| Right/wrong only | Quality-based evaluation |
| No feedback | Draft and summary feedback |

Because AI marking evaluates meaning rather than exact matches, it can handle open-ended responses. That flexibility is its strength, and its probabilistic nature is also why human oversight remains essential.

How Does AI Mark Student Work Behind the Scenes?

Behind the interface, AI marking is less mysterious than it sounds. These systems are trained on thousands of pre-scored answers, learning the signals that distinguish strong responses from weak ones. Over time, they internalize patterns linked to clarity, coherence, relevance, and alignment with criteria.

Natural language processing allows the system to read student writing as language, not just text. It evaluates structure and meaning, not only grammar.

Machine learning models then compare new submissions to what they have learned, assessing how closely each response resembles, or diverges from, previously scored answers to the same task.
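A deliberately simple stand-in can illustrate the comparison step. Real systems use trained language models. This sketch swaps in bag-of-words cosine similarity and a similarity-weighted average over the k most similar pre-scored answers (a k-nearest-neighbours approach). The exemplar answers and scores, and the names `bag_of_words`, `cosine` and `suggest_score`, are hypothetical.

```python
import math
from collections import Counter

def bag_of_words(text: str) -> Counter:
    """Crude tokenizer: lowercase, turn punctuation into spaces, count words."""
    cleaned = "".join(ch if ch.isalnum() or ch.isspace() else " " for ch in text.lower())
    return Counter(cleaned.split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors (0 = nothing shared)."""
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def suggest_score(answer: str, exemplars: list[tuple[str, float]], k: int = 3) -> float:
    """Similarity-weighted average score of the k most similar pre-scored answers."""
    vec = bag_of_words(answer)
    nearest = sorted(((cosine(vec, bag_of_words(text)), score) for text, score in exemplars),
                     reverse=True)[:k]
    total = sum(sim for sim, _ in nearest)
    if total == 0:
        return float("nan")  # nothing comparable: leave this one to a human marker
    return sum(sim * score for sim, score in nearest) / total

# Hypothetical pre-scored answers (text, mark out of 3) for one question.
EXEMPLARS = [
    ("Photosynthesis converts light energy into chemical energy stored in glucose", 3.0),
    ("Plants use sunlight to make glucose from carbon dioxide and water", 3.0),
    ("Plants eat soil to grow", 0.0),
    ("Photosynthesis is when plants breathe", 1.0),
]

print(suggest_score("Plants use sunlight to make glucose", EXEMPLARS))
print(suggest_score("Plants eat soil", EXEMPLARS))
```

With these exemplars, the first answer sits closer to a full-credit exemplar and gets a higher suggested score than the second. An answer that shares no words with any exemplar returns `nan`, which this sketch treats as the signal to hand it to a human marker.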

AI marking can also handle extended written responses and many coding tasks. It can flag identical student work, cluster similar answers, and surface patterns that would be easy to miss manually.
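Flagging identical work and clustering similar answers can be sketched with the Python standard library alone. The sketch assumes "identical" means identical after lowercasing and collapsing whitespace. It uses `difflib.SequenceMatcher` as a simple text-similarity measure with an arbitrary 0.8 threshold. Production tools would likely use richer representations. The function names are hypothetical.

```python
import hashlib
from collections import defaultdict
from difflib import SequenceMatcher

def normalize(text: str) -> str:
    # Lowercase and collapse whitespace so cosmetic differences don't hide copies.
    return " ".join(text.lower().split())

def find_identical(submissions: dict[str, str]) -> list[list[str]]:
    """Group student IDs whose answers are identical after normalization."""
    groups = defaultdict(list)
    for student, text in submissions.items():
        digest = hashlib.sha256(normalize(text).encode("utf-8")).hexdigest()
        groups[digest].append(student)
    return [sorted(ids) for ids in groups.values() if len(ids) > 1]

def cluster_similar(submissions: dict[str, str], threshold: float = 0.8) -> list[list[str]]:
    """Greedy single pass: each answer joins the first cluster whose representative
    it resembles closely enough; otherwise it starts a new cluster."""
    clusters: list[tuple[str, list[str]]] = []  # (representative text, member IDs)
    for student, text in submissions.items():
        norm = normalize(text)
        for rep, members in clusters:
            if SequenceMatcher(None, rep, norm).ratio() >= threshold:
                members.append(student)
                break
        else:
            clusters.append((norm, [student]))
    return [members for _, members in clusters]

subs = {
    "s1": "The mitochondria is the powerhouse of the cell.",
    "s2": "the mitochondria  is the powerhouse of the cell.",
    "s3": "The mitochondrion is the powerhouse of a cell.",
    "s4": "Ribosomes build proteins from amino acids.",
}
print(find_identical(subs))   # [['s1', 's2']]
print(cluster_similar(subs))  # [['s1', 's2', 's3'], ['s4']]
```

Clustering lets a marker review one representative answer per group. The decision about each student's work still rests with a human.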

Under the hood, this usually involves:

- Natural language processing for written responses

- Machine learning for pattern detection across cohorts

- Comparison of answers to the same task, so that identical answers receive the same mark

- Drafting inline comments and summary feedback for educator review

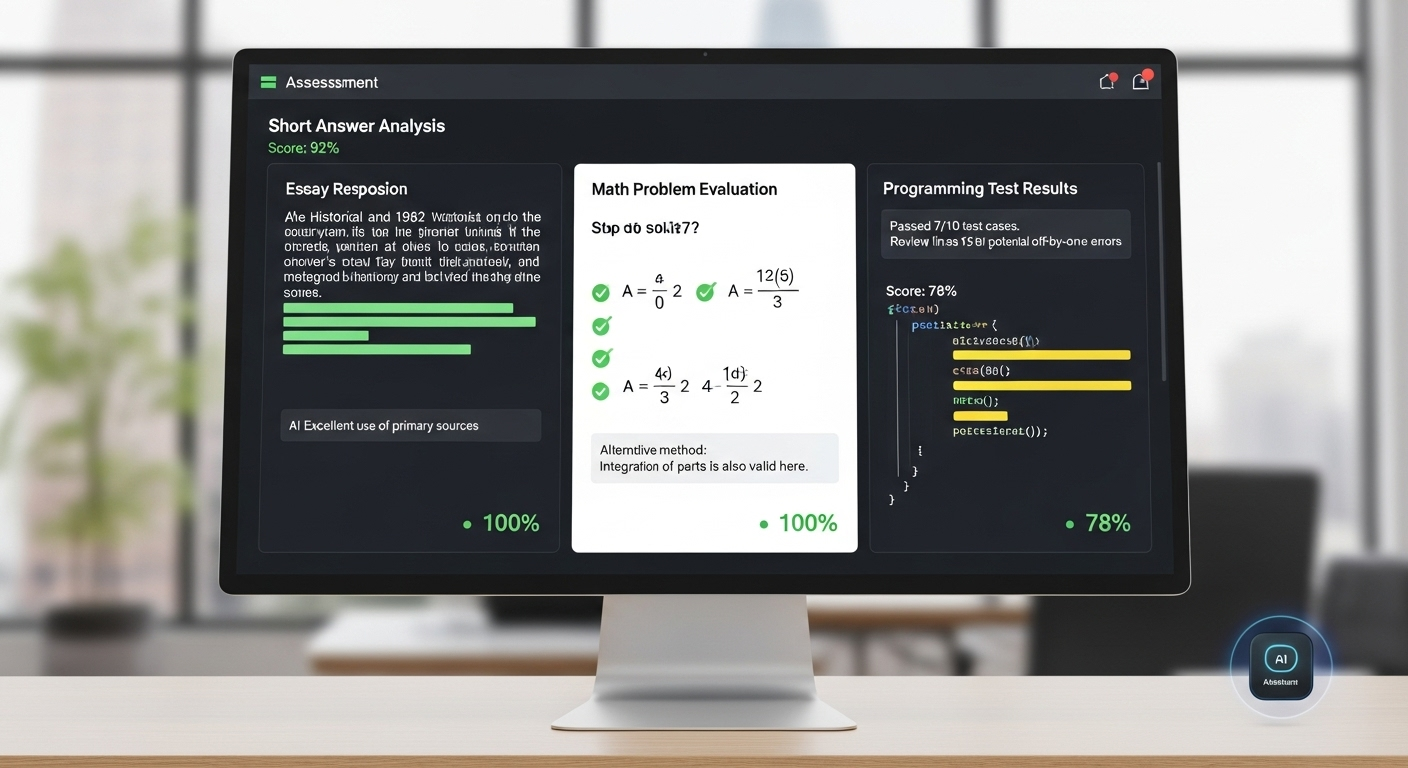

What Types of Assessments Does AI Mark Most Accurately?

Accuracy improves as structure increases. That pattern shows up repeatedly in empirical work.

AI marking reaches its highest accuracy on assessments where expectations are clear and variation is limited. In some studies, agreement with human markers exceeds 90 percent for these task types.
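"Agreement with human markers" is usually measured in a few standard ways. This sketch shows two of them. Exact agreement is the share of scripts given the same mark. Quadratic weighted kappa discounts agreement expected by chance and penalizes large disagreements more than near-misses. The mark lists are made-up illustrative data, not results from any study, and the scale must satisfy `min_score < max_score`.

```python
from collections import Counter

def exact_agreement(ai: list[int], human: list[int]) -> float:
    """Share of scripts where the AI and the human gave exactly the same mark."""
    return sum(a == h for a, h in zip(ai, human)) / len(ai)

def quadratic_weighted_kappa(ai: list[int], human: list[int], min_score: int, max_score: int) -> float:
    """Cohen's kappa with quadratic weights (1.0 = perfect, 0.0 = chance level).
    Near-misses (3 vs 2) cost far less than big disagreements (3 vs 0)."""
    span = max_score - min_score
    n = len(ai)
    observed = Counter(zip(ai, human))  # how often each (AI, human) pair occurs
    ai_counts, human_counts = Counter(ai), Counter(human)
    num = den = 0.0
    for i in range(min_score, max_score + 1):
        for j in range(min_score, max_score + 1):
            weight = (i - j) ** 2 / span ** 2
            num += weight * observed[(i, j)]                     # observed disagreement
            den += weight * ai_counts[i] * human_counts[j] / n   # disagreement expected by chance
    if den == 0:
        raise ValueError("kappa is undefined when both raters give every script one identical mark")
    return 1.0 - num / den

ai_marks = [0, 1, 2, 3, 3, 2, 1, 0]
human_marks = [0, 1, 2, 3, 2, 2, 1, 1]
print(exact_agreement(ai_marks, human_marks))                 # 0.75
print(quadratic_weighted_kappa(ai_marks, human_marks, 0, 3))  # ≈ 0.875
```

The two measures can tell different stories. In this example the AI and human marks differ on a quarter of the scripts, but every disagreement is off by a single mark, so the weighted kappa stays high.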

As subjectivity rises, accuracy drops. Not because the system fails outright, but because interpretation becomes harder.

AI performs best on:

- Tightly constrained short answers with clear criteria

- Numeric or algebraic results

- Coding tasks and programming tests

- Right-or-wrong questions

These formats limit ambiguity. The same answer should receive the same mark, and AI handles that consistency well.
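"Same answer, same mark" can be enforced even when the underlying scorer is probabilistic. One approach is to canonicalize each answer and reuse the first mark given to it. A minimal sketch follows. `ConsistentMarker` and `canonical` are hypothetical names, and treating `0.5`, `1/2` and `.50` as the same answer is an assumption a given rubric might reject.

```python
from fractions import Fraction
from typing import Callable

def canonical(answer: str) -> str:
    """Map equivalent numeric answers ('0.5', '1/2', ' .50 ') to one key;
    fall back to lowercase, whitespace-collapsed text for anything else."""
    text = answer.strip()
    try:
        return str(Fraction(text))
    except (ValueError, ZeroDivisionError):
        return " ".join(text.lower().split())

class ConsistentMarker:
    """Wrap any scoring function so identical (canonical) answers always get the same mark."""

    def __init__(self, score_fn: Callable[[str], float]):
        self.score_fn = score_fn
        self.cache: dict[str, float] = {}

    def mark(self, answer: str) -> float:
        key = canonical(answer)
        if key not in self.cache:
            self.cache[key] = self.score_fn(answer)  # scored once, reused afterwards
        return self.cache[key]
```

The cache calls the wrapped scorer only once per canonical answer, so later submissions of an equivalent answer cannot drift. A task that demands a specific form, such as a fraction in lowest terms, would need a stricter `canonical`.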

Once creativity, voice, or novel reasoning enters the picture, accuracy depends increasingly on human judgement layered on top of the system’s suggestions.

Where AI Marking Becomes Unreliable or Risky

AI marking has edges. Clear ones. And pretending otherwise does more harm than good.

The biggest problems appear when responses stop following familiar paths. Creative writing, reflective essays, and open reasoning tasks push beyond patterns the system has seen before.

Current large language models are stochastic by design, which means their outputs are probabilistic, not fixed. The same prompt, with the same settings, can produce slightly different results at different times.
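One practical response to this variability is to mark the same answer several times and treat disagreement between runs as a signal for human review. A minimal sketch follows. `make_noisy_model` is a simulated stand-in for a sampled model call, and no real API is used. The five runs and the 0.5-mark spread threshold are arbitrary assumptions.

```python
import random
import statistics
from typing import Callable

def mark_with_stability_check(answer: str, score_fn: Callable[[str], float],
                              runs: int = 5, max_spread: float = 0.5) -> dict:
    """Score the same answer several times and report the median mark, the
    spread between runs, and whether that spread is large enough to need a human."""
    scores = [score_fn(answer) for _ in range(runs)]
    spread = max(scores) - min(scores)
    return {
        "median": statistics.median(scores),
        "spread": spread,
        "needs_human_review": spread > max_spread,
    }

def make_noisy_model(noise: float, seed: int = 0) -> Callable[[str], float]:
    """Simulated scorer: a fixed 'true' mark of 3 plus Gaussian noise.
    The numbers are invented for illustration; no real model is called."""
    rng = random.Random(seed)
    return lambda answer: round(3 + rng.gauss(0, noise), 1)

print(mark_with_stability_check("essay text", make_noisy_model(noise=0.6)))
```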

That variability is compounded by model updates. Frequent, sometimes silent, version changes can affect consistency, which becomes a real issue when assessments are compared across cohorts or semesters. What counted as a strong response last term may be interpreted differently now, even if the work is identical.
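One safeguard against drift is to store the model version alongside every AI-suggested mark. Cross-cohort comparisons can then be checked rather than assumed. This sketch uses hypothetical record fields and version strings. It assumes the marking service reports a version identifier, which not every service does.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class MarkRecord:
    student_id: str
    cohort: str
    score: float
    model_version: str  # hypothetical: whatever version identifier the service reports

def versions_by_cohort(records: list[MarkRecord]) -> dict[str, set[str]]:
    """Collect every model version that contributed marks to each cohort."""
    versions = defaultdict(set)
    for record in records:
        versions[record.cohort].add(record.model_version)
    return dict(versions)

def directly_comparable(records: list[MarkRecord], cohort_a: str, cohort_b: str) -> bool:
    """Treat two cohorts' AI marks as directly comparable only if every mark in
    both cohorts came from one and the same model version."""
    versions = versions_by_cohort(records)
    a, b = versions.get(cohort_a, set()), versions.get(cohort_b, set())
    return len(a) == 1 and a == b

records = [
    MarkRecord("s1", "2023", 3.0, "model-2023-06"),
    MarkRecord("s2", "2024", 3.0, "model-2024-01"),
    MarkRecord("s3", "2024-resit", 2.0, "model-2024-01"),
]
print(directly_comparable(records, "2023", "2024"))        # False
print(directly_comparable(records, "2024", "2024-resit"))  # True
```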

AI marking becomes risky when it encounters:

- Open-ended creative responses with unconventional structure

- Novel reasoning paths that do not match learned patterns

- Model drift across versions, changing outputs over time

- Non-deterministic behavior, even with the same prompt

These limits don’t make AI marking useless. They make boundaries essential.

Why Human Oversight Is Essential in AI Marking

AI marking must never operate autonomously. Not in education. Not where outcomes carry real weight.

At its best, AI acts as an assistant or a second marker. It drafts, flags, and suggests. Humans decide. That division of labor is not optional.

It is required for fairness, accountability, and legal defensibility, especially in high-stakes assessments where marks influence progression, certification, or employment.

Human oversight ensures context is not lost. Effort is recognized. Unusual but valid reasoning is protected. Without that layer, AI marking risks becoming efficient but unjust.

In responsible systems:

- Human markers retain authority over final outcomes

- The AI sandwich approach (human → AI → human) supports review and refinement, with a human making the final call

- The serious consequences of misuse are actively mitigated

- Support, not replacement, remains the guiding principle
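This division of labor can be enforced in software as well as in policy. A minimal sketch follows, using hypothetical class and field names. The AI fills only the draft fields, a named human confirms or overrides them, and nothing is released without that review.

```python
from dataclasses import dataclass
from typing import Optional

class NotReviewedError(Exception):
    """Raised when something tries to release a mark no human has reviewed."""

@dataclass
class MarkedSubmission:
    student_id: str
    ai_suggested_mark: float  # AI output: a suggestion only
    ai_draft_feedback: str    # AI output: a draft only
    final_mark: Optional[float] = None
    final_feedback: Optional[str] = None
    reviewed_by: Optional[str] = None

    def approve(self, marker: str, mark: Optional[float] = None,
                feedback: Optional[str] = None) -> None:
        """A named human confirms the AI draft or overrides it. Only this sets final values."""
        self.final_mark = self.ai_suggested_mark if mark is None else mark
        self.final_feedback = self.ai_draft_feedback if feedback is None else feedback
        self.reviewed_by = marker

    @property
    def overridden(self) -> bool:
        return self.reviewed_by is not None and self.final_mark != self.ai_suggested_mark

def release(sub: MarkedSubmission) -> tuple[float, str]:
    """Release the final mark and feedback, refusing anything without human review."""
    if sub.reviewed_by is None:
        raise NotReviewedError(f"{sub.student_id}: no human review recorded")
    return sub.final_mark, sub.final_feedback

sub = MarkedSubmission("s1", 6.0, "Clear argument; support claim 2 with evidence.")
sub.approve("marker_a", mark=7.0)  # the human raises the AI's suggested mark
print(release(sub))  # (7.0, 'Clear argument; support claim 2 with evidence.')
```

Recording who approved each mark, and whether the AI suggestion was overridden, also gives an audit trail for accountability.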

Accuracy in marking is inseparable from responsibility. Humans carry that responsibility.

How AI Marking Improves Feedback Quality (Not Just Speed)

Speed gets the headlines, but feedback quality is where AI marking quietly changes the game.

AI can draft feedback aligned directly to rubrics, linking comments to specific criteria instead of vague impressions. It can suggest alternative explanations when a student’s reasoning goes astray, helping them see not just that something is wrong, but why.
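Rubric-linked drafting can be as simple as mapping each criterion's level to the educator's own descriptor. This sketch assumes a model has already suggested a level for each criterion, a step not shown here. The rubric, its wording and the function name are hypothetical. Quoting the educator's descriptors, rather than freely generated text, keeps each comment tied to a stated criterion.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Criterion:
    name: str
    max_level: int
    descriptors: dict[int, str]  # level -> descriptor written by the educator

# Hypothetical two-criterion rubric for a short argumentative answer.
RUBRIC = [
    Criterion("Argument", 3, {
        1: "The main claim is unclear.",
        2: "The claim is clear but only partly supported.",
        3: "The claim is clear and well supported.",
    }),
    Criterion("Evidence", 3, {
        1: "Little relevant evidence is cited.",
        2: "Evidence is relevant but not always explained.",
        3: "Evidence is relevant and clearly explained.",
    }),
]

def draft_feedback(levels: dict[str, int], rubric: list[Criterion]) -> str:
    """Turn per-criterion levels (e.g. suggested by a model) into draft comments
    that quote the educator's descriptors, plus the next level to aim for."""
    lines = ["DRAFT - for educator review"]
    for criterion in rubric:
        level = levels[criterion.name]
        lines.append(f"{criterion.name} ({level}/{criterion.max_level}): {criterion.descriptors[level]}")
        if level < criterion.max_level:
            lines.append(f"  To reach level {level + 1}: {criterion.descriptors[level + 1]}")
    return "\n".join(lines)

print(draft_feedback({"Argument": 2, "Evidence": 3}, RUBRIC))
```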

Across large cohorts, it maintains a consistent tone, avoiding the accidental harshness or inconsistency that creeps in when humans are exhausted.

Perhaps most importantly, AI makes feedback much faster. Students don’t wait weeks. They can respond while the work is still fresh in mind. And when educators review and refine that feedback before release, quality improves rather than declines.

In practice, this looks like:

- Draft feedback for review, not automatic release

- Rubric-linked explanations that clarify expectations

- Consistent tone across all submissions

- Faster feedback that supports learning momentum

Used this way, AI marking supports a learning-focused approach. Not just faster grading. Better guidance.

Is AI Marking Accurate Enough to Trust?

Short answer: yes, in the right places. Longer, more honest answer: accuracy depends on how and where you use it.

AI marking shows high accuracy when tasks are structured. Short answers. Coding problems. Clearly defined criteria. In these cases, studies repeatedly show the same finding: AI outputs align with human markers at very high rates, often above 90 percent. That’s not hype. That’s data.

But AI marking is probabilistic, not exact. It does not “know” in the human sense. It estimates. It predicts. Which means clarity matters. The clearer the rubric, the more accurate the marking. Vague criteria produce vague outcomes.

The realistic position is this: AI marking works best as an assistant. An accelerator. A consistency checker. Not a final judge. Used that way, it earns trust. Used alone, it overreaches.

Accuracy is real. Certainty is not. And that distinction matters.

Ethical and Practical Risks of AI Marking

Used well, AI marking improves feedback quality. Used carelessly, it introduces new risks that institutions cannot ignore.

The first issue is bias. Generative AI poses challenges when training data reflects historical inequities. Without careful oversight, those patterns can quietly shape outcomes. Then there’s privacy. Student work is sensitive data, and systems must comply with GDPR, FERPA, and local regulations. Anything less is unacceptable.
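On the privacy side, one common safeguard is pseudonymization: replacing student identifiers with keyed hashes before work is sent to an external marking service. Below is a minimal sketch using HMAC-SHA256 from the standard library. The key handling is simplified and hypothetical. Pseudonymization alone does not make a system GDPR- or FERPA-compliant; names inside the submitted text, for example, would still need handling.

```python
import hashlib
import hmac

def pseudonymize(student_id: str, secret_key: bytes) -> str:
    """Replace a student ID with a keyed hash. The same ID always maps to the
    same token, so marks can be re-linked in-house, but without the key the
    token cannot feasibly be traced back to the student."""
    return hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256).hexdigest()[:16]

def prepare_for_marking(submissions: dict[str, str],
                        secret_key: bytes) -> tuple[dict[str, str], dict[str, str]]:
    """Return (pseudonymized submissions to send out, token -> real ID lookup kept in-house)."""
    outbound, lookup = {}, {}
    for student_id, text in submissions.items():
        token = pseudonymize(student_id, secret_key)
        outbound[token] = text
        lookup[token] = student_id
    return outbound, lookup

# Illustration only: a real key would come from a secrets store, never source code.
key = b"example-key-not-for-production"
outbound, lookup = prepare_for_marking({"20231234": "essay text"}, key)
```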

Over-automation is another risk. When educators defer too much to the system, human judgement weakens. Feedback becomes technically correct but educationally thin. Governance gaps widen. Confusion creeps in. Effort shifts from helping the learner to maintaining the system.

Key risks include:

- Bias and fairness issues rooted in training data

- Data confidentiality and regulatory compliance failures

- Over-reliance on automated decisions

- Governance gaps that reduce accountability
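A basic bias check compares AI marks with human marks separately for each group of students. A persistent gap for one group but not others is a reason to investigate, not proof of bias. The group labels, the 0.25-mark tolerance and the function name are hypothetical. Small groups make averages noisy, and collecting group data raises its own privacy questions.

```python
from collections import defaultdict
from statistics import mean

def score_gap_by_group(rows: list[tuple[str, float, float]],
                       tolerance: float = 0.25) -> dict[str, dict]:
    """rows: (group label, AI mark, human mark).
    For each group, report the mean of (AI - human) and flag groups whose
    average gap exceeds the tolerance in either direction."""
    gaps = defaultdict(list)
    for group, ai, human in rows:
        gaps[group].append(ai - human)
    report = {}
    for group, diffs in gaps.items():
        avg = mean(diffs)
        report[group] = {"n": len(diffs), "mean_gap": avg, "flag": abs(avg) > tolerance}
    return report

rows = [("A", 3.0, 3.0), ("A", 2.0, 2.0), ("B", 2.0, 3.0), ("B", 1.0, 2.0)]
print(score_gap_by_group(rows))
```

Here group B's AI marks sit a full mark below the human marks on average, so B is flagged for a closer look.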

AI marking demands restraint as much as adoption.

How AI Marking Fits Into Real Educational and Institutional Workflows

AI marking doesn’t sit off to the side. It lives inside real systems, doing unglamorous but necessary work.

Most tools integrate directly into Learning Management Systems. That matters. It allows educators to apply the same model, the same criteria, across large cohorts without reinventing workflows. Supporting admin tasks becomes the quiet win. Sorting. Flagging. Drafting. Pattern detection across hundreds or thousands of submissions.
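Triage is a typical example of this unglamorous admin work. The sketch below assumes the marking tool reports a confidence value between 0 and 1 for each suggestion. Not every tool does, and the 0.7 cut-off is arbitrary. Low-confidence suggestions go to the front of the review queue, but every item is still reviewed by a person. The class and function names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Suggestion:
    submission_id: str
    suggested_mark: float
    confidence: float  # hypothetical 0-1 value reported by the marking tool

def triage(suggestions: list[Suggestion],
           review_below: float = 0.7) -> tuple[list[Suggestion], list[Suggestion]]:
    """Split into (priority queue, routine queue). Priority items are ordered
    least-confident first. Triage only sets the order; humans review both queues."""
    priority = sorted((s for s in suggestions if s.confidence < review_below),
                      key=lambda s: s.confidence)
    routine = [s for s in suggestions if s.confidence >= review_below]
    return priority, routine

items = [Suggestion("a", 5, 0.9), Suggestion("b", 3, 0.4), Suggestion("c", 4, 0.6)]
priority, routine = triage(items)
print([s.submission_id for s in priority])  # ['b', 'c']
print([s.submission_id for s in routine])   # ['a']
```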

In universities, this scales marking during peak periods. In professional and workplace assessment, it enables consistent evaluation across regions and time zones. The goal is not novelty. It’s reliability.

Common uses include:

- LMS integration for seamless workflows

- Supporting admin tasks like triage and review

- Same model applied consistently across cohorts

- Scalable evaluation without losing oversight

Used this way, AI marking surfaces local patterns without dictating outcomes.

How AI PowerGrader Enables Responsible AI Marking

AI PowerGrader is designed around a simple principle: humans stay in charge.

It uses a rubric-first design, meaning marking criteria come from educators, not the model. AI assists by drafting feedback, identifying patterns, and surfacing inconsistencies. It does not assign final marks. Humans do that. Always.

The system operates with human-in-the-loop AI marking, ensuring every output can be reviewed, adjusted, or overridden. Pattern detection happens with oversight, not automation for its own sake. Governance is built in, not bolted on later.

Equally important, AI PowerGrader is institution-ready. It is designed with FERPA- and GDPR-conscious safeguards, recognizing that trust depends on privacy and accountability, not just performance.

This is AI marking that supports judgement instead of pretending to replace it. Quiet. Careful. And deliberate.

The Bottom Line

AI marking is not here to replace human markers. That’s the honest answer.

Empirical work is still evolving, but the evidence so far points in one direction: learning-focused feedback processes outperform blind automation. AI helps scale. Humans provide judgement. Together, they work. Separately, both fall short.

The future isn’t about choosing speed over fairness or efficiency over care. It’s about balance. Using AI to handle volume while protecting meaning. Letting systems suggest while people decide.

A realistic position matters here. AI marking is powerful. It is also limited. When those limits are respected, it becomes a tool worth trusting.

Frequently Asked Questions (FAQs)

1. Is AI marking the same as traditional automated marking?

No. Traditional systems follow fixed rules. AI marking uses machine learning to evaluate quality and meaning, especially in written responses, with human oversight required.

2. Can AI marking replace human markers?

No. AI marking supports human judgement by drafting feedback and flagging patterns, but final decisions must always remain with human markers.

3. How accurate is AI marking?

AI marking is highly accurate for structured tasks when clear rubrics are used, but it remains probabilistic and should not be treated as infallible.

4. Is AI marking fairer than human marking?

It can improve consistency, but fairness depends on training data, rubric quality, and strong human oversight to prevent bias.

5. Does AI marking work for creative assignments?

It struggles with highly creative or novel responses. Human review is essential for these tasks.

6. Is student data safe in AI marking systems?

Only if platforms are designed with strong governance and comply with regulations like GDPR and FERPA.

7. Should AI marking be used in high-stakes assessments?

Only as a support tool. High-stakes decisions require human judgement and accountability.