It starts as a passing thought. Then it sticks. If artificial intelligence can write essays, solve equations, and analyze massive datasets in seconds, it’s reasonable to wonder whether it’s also deciding something as consequential as standardized test scores.

Parents ask. Students worry. Counselors field the same question again and again: is AI grading the SAT and ACT now?

That uncertainty didn’t appear out of nowhere. The educational landscape has shifted quickly, and the rules feel less visible than they used to. This article walks through what’s actually happening, what isn’t, and why so many people are suddenly paying attention.

The goal isn’t to speculate. It’s to clarify, step by step, how scoring works today and where AI fits into the picture.

Why Are People Asking If AI Is Grading the SAT and ACT Now?

The timing isn’t accidental. Generative AI moved from novelty to everyday tool almost overnight, and assessment was always going to be part of the conversation. When AI tools became visible in classrooms, homework platforms, and admissions workflows, questions about grading followed naturally.

Standardized tests already feel opaque. You take the exam, wait, and receive a number with little explanation. That distance leaves room for doubt.

The rollout of the Digital SAT added fuel to that uncertainty. Adaptive testing, algorithmic routing, and faster score delivery sound technical enough to blur the line between machine assistance and machine control.

Test-optional policies made things even murkier. Some colleges downplayed scores, others doubled down on them, and families were left trying to interpret mixed signals.

Against that backdrop, the idea that AI might be grading the SAT and ACT doesn’t sound far-fetched. It sounds plausible. That’s why a clear answer matters before assumptions take root.

Short Answer: Is AI Actually Grading the SAT and ACT?

The short answer is no, not in the way many people imagine. The SAT and ACT are not fully AI-graded from start to finish. There is no single algorithm deciding a student’s fate.

Multiple-choice sections are machine scored, and they have been for decades. That part isn’t new. The controversy usually centers on writing. Where written responses are scored, the system is hybrid: AI assists with efficiency and consistency, but it does not act alone.

For sections that involve scoring essays or written responses, automated systems are paired with human graders. AI helps apply scoring rubrics consistently and flags patterns, but final authority does not rest with a machine. Human graders remain part of the scoring process, especially for responses that fall outside typical patterns.

In practice, AI acts as support, not judge. It speeds things up and reduces fatigue, but it does not replace human oversight. That distinction is easy to miss if you only hear the word “algorithm” without context.

How Has AI Been Used in Standardized Testing Before the SAT and ACT?

AI in testing didn’t arrive suddenly. It crept in, quietly, over more than a decade. Long before today’s generative tools, standardized exams were already experimenting with automated scoring to handle scale.

The GMAT is often cited as an early example. It introduced automated essay scoring systems to reduce grader fatigue and improve consistency across large volumes of responses.

These systems were never meant to operate alone. They were designed to apply scoring rubrics uniformly, then work alongside human review.

Machine learning made that process more reliable over time. Instead of rigid rule-based checks, systems began identifying patterns across thousands of essays. That evolution happened gradually, with continuous adjustment and oversight.

What matters is this: AI wasn’t dropped into testing overnight. It was layered in cautiously, tested repeatedly, and kept within defined boundaries. The SAT and ACT followed that same trajectory rather than breaking from it.

How Does the Digital SAT Scoring System Actually Work?

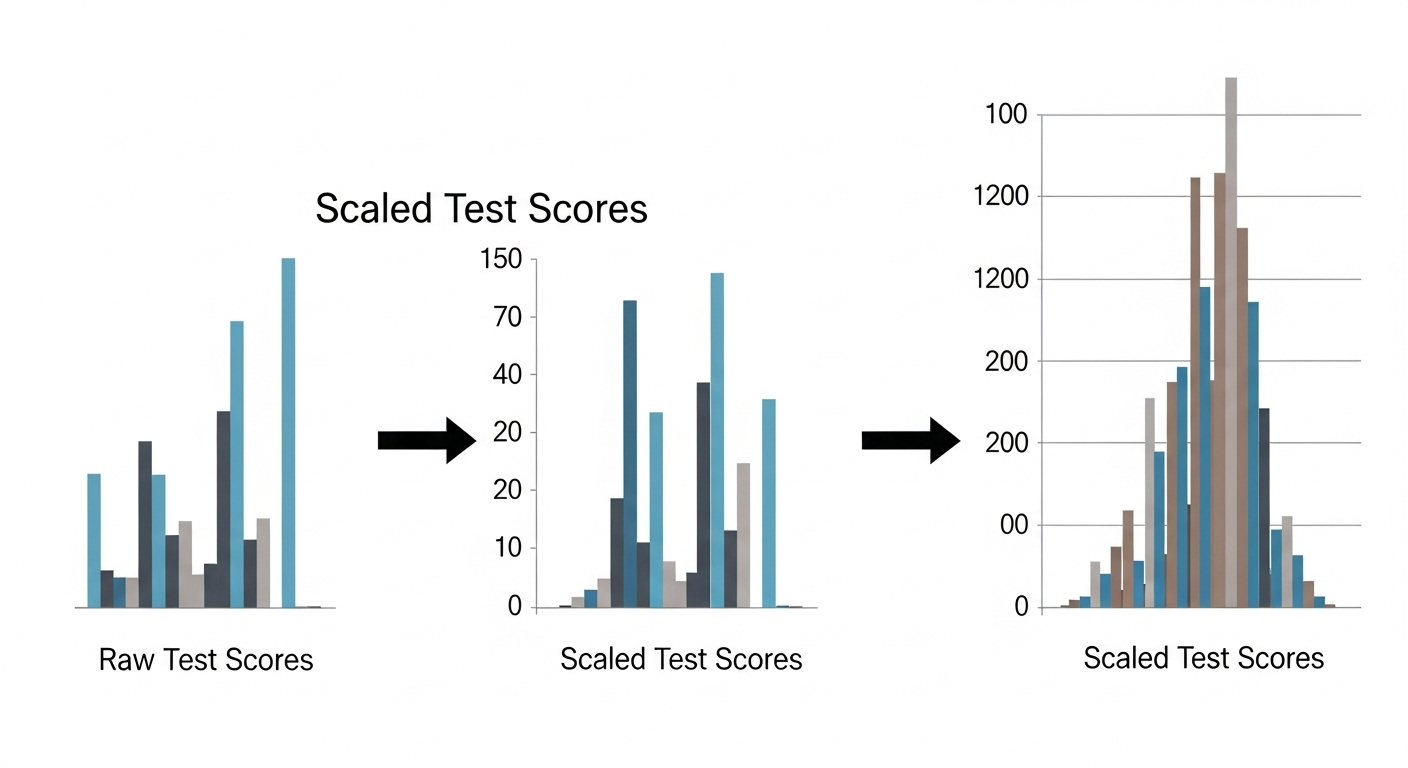

The Digital SAT changed the testing experience, but not in the way many people assume. Its most noticeable feature is adaptive testing. The exam adjusts difficulty based on performance, rather than giving every student the same fixed set of questions.

Here’s how it works in practice. You start with Module 1. Your performance there determines which version of Module 2 you receive.

Strong performance routes you to a more challenging second module, which carries higher scoring potential. Weaker performance leads to a less difficult path, with a lower ceiling.

Several elements are always in play:

- Module 1 performance determines Module 2 difficulty

- A harder second module allows for higher scaled scores

- Reading and Writing and Math sections are scored separately

- Raw scores are converted into scaled scores

The algorithm considers correct answers and question difficulty together. What it does not do is assess creativity or intent. The full scoring logic isn’t publicly disclosed by the College Board, but it is designed to ensure accuracy, consistency, and equity across test-takers.
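
The routing idea described above can be sketched in a few lines of Python. This is a toy illustration, not the College Board's method: the routing threshold, the lower ceiling on the easier path, and the linear conversion are all assumptions made up for the example, since the real scoring logic is not public.

```python
from dataclasses import dataclass

# All constants below are hypothetical. The College Board does not publish
# its routing cutoff or its raw-to-scaled conversion.
ROUTING_THRESHOLD = 0.6                          # assumed share correct in Module 1
SCORE_FLOOR = 200                                # bottom of the 200-800 section scale
SCORE_CEILING = {"harder": 800, "easier": 600}   # assumed ceilings per path


@dataclass
class ModuleResult:
    correct: int
    total: int


def route_second_module(module1: ModuleResult) -> str:
    """Choose the Module 2 difficulty from Module 1 performance."""
    fraction = module1.correct / module1.total
    return "harder" if fraction >= ROUTING_THRESHOLD else "easier"


def scaled_score(module1: ModuleResult, module2: ModuleResult, path: str) -> int:
    """Toy raw-to-scaled conversion: the harder path has a higher ceiling."""
    raw_fraction = (module1.correct + module2.correct) / (module1.total + module2.total)
    span = SCORE_CEILING[path] - SCORE_FLOOR
    # Round to the nearest 10, as section scores are reported in tens.
    return round((SCORE_FLOOR + raw_fraction * span) / 10) * 10


strong = ModuleResult(correct=16, total=20)
path = route_second_module(strong)               # "harder"
score = scaled_score(strong, ModuleResult(14, 20), path)
```

Nothing in this sketch reads or judges a response. It only counts correct answers and picks a path, which is the point of the distinction between adaptive routing and automated judgment.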

Understanding this structure helps separate adaptive design from automated judgment. The system routes questions. Humans still stand behind the standards.

Is AI Scoring the SAT Writing Section?

This question comes up a lot, mostly because “writing” sounds like the kind of thing AI would naturally handle. But the structure of the SAT matters here. The SAT no longer includes a required standalone essay. That change alone removes the idea of a single, AI-graded writing task deciding a score.

Instead, writing skills are woven into the Reading and Writing section. Grammar, clarity, sentence structure, and comprehension are tested through multiple-choice questions rather than free-form written responses.

AI assists behind the scenes by evaluating patterns and consistency across large volumes of responses, helping ensure scoring stability. But it does not independently judge creativity or intent.

There is no moment where an AI system reads a free-form essay and assigns a final SAT score. Human oversight remains central to the process.

The technology supports quality control and efficiency, not authority. Understanding that distinction helps separate the mechanics of scoring from the assumptions people often make when they hear the word “AI.”

How Does the ACT Use AI in Scoring?

The ACT takes a slightly different approach, especially when it comes to writing. Automated scoring engines are used to handle scale and speed, particularly for objective sections. This allows scores to be processed efficiently and consistently across millions of test-takers.

The optional Writing section is where nuance enters. Here, AI-assisted scoring is paired with human graders. The goal is balance.

AI helps apply rubrics consistently and flags patterns, while human graders review responses that fall outside typical ranges. This hybrid approach reduces grader fatigue without removing professional judgment.

In practical terms, ACT scoring looks like this:

- Machine scoring for multiple-choice sections

- AI-assisted essay scoring to support consistency

- Human review for edge cases and unusual responses
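
A hybrid flow like the one in this list can be sketched as a simple routing rule. The example below is a hypothetical illustration, not ACT's actual system: the `AutomatedScore` type, the confidence cutoff, and the 2 to 12 rubric range used as defaults are assumptions chosen for the sketch.

```python
from dataclasses import dataclass
from typing import Optional

CONFIDENCE_FLOOR = 0.8  # hypothetical cutoff below which a human must review


@dataclass
class AutomatedScore:
    score: int         # rubric score proposed by the automated engine
    confidence: float  # engine's self-reported confidence, 0.0 to 1.0


def needs_human_review(auto: AutomatedScore, min_score: int = 2, max_score: int = 12) -> bool:
    """Flag edge cases: out-of-range scores or low engine confidence."""
    out_of_range = not (min_score <= auto.score <= max_score)
    return out_of_range or auto.confidence < CONFIDENCE_FLOOR


def final_score(auto: AutomatedScore, human_score: Optional[int] = None) -> int:
    """A human score, when present, always takes precedence."""
    if human_score is not None:
        return human_score
    if needs_human_review(auto):
        # Flagged responses are never released on the machine score alone.
        raise ValueError("human review required before a score is released")
    return auto.score


routine = final_score(AutomatedScore(score=8, confidence=0.95))
reviewed = final_score(AutomatedScore(score=8, confidence=0.5), human_score=10)
```

The design choice worth noticing is that the automated score is only a proposal. A flagged response cannot be released until a human score exists, and a human score overrides the engine whenever one is supplied.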

As the ACT moves toward greater automation in 2026, that hybrid model remains intact. Speed improves. Oversight stays.

Are AI Systems Fair When Grading Writing?

Fairness is the hardest question in automated scoring, and it doesn’t have an easy answer. AI systems rely on natural language processing trained on large sets of past student essays. They assess grammar, coherence, structure, and organization. Those elements are measurable. Creativity is not.

That gap matters. Unconventional writing, unexpected structures, or culturally influenced expression may score lower simply because they don’t resemble dominant patterns in the training data. Bilingual students and those learning English can be disadvantaged if their writing style diverges from the norm.

Bias in training data is a known risk. If most examples reflect a narrow range of voices, the system learns that narrow range.

Human graders can recognize intent, originality, and context. AI struggles there. That limitation doesn’t mean AI has no place. It means fairness depends on how heavily automated judgments are weighted and how consistently humans stay involved.
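
One way to check for this kind of bias is to compare automated scores with human scores on the same essays, group by group. The sketch below assumes such paired scores are available. The group labels and numbers are invented purely to show the calculation and do not come from any real test.

```python
from collections import defaultdict
from statistics import mean


def score_gap_by_group(records):
    """Mean (automated - human) score difference for each group.

    `records` is an iterable of (group, automated_score, human_score).
    A gap well below zero for one group suggests the automated engine
    scores that group lower than human graders do.
    """
    gaps = defaultdict(list)
    for group, automated, human in records:
        gaps[group].append(automated - human)
    return {group: mean(values) for group, values in gaps.items()}


# Invented sample data for illustration only.
sample = [
    ("A", 4, 4), ("A", 5, 5), ("A", 3, 4),
    ("B", 2, 4), ("B", 3, 4), ("B", 3, 3),
]
gaps = score_gap_by_group(sample)
```

In this made-up sample, group B's automated scores trail the human scores by a full point on average, while group A's trail by about a third of a point. A gap like that is a signal to send more of that group's responses to human review, not proof of bias on its own.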

What Happened in Texas With AI-Graded Writing Tests?

Texas became a flashpoint in the AI grading debate when the Texas Education Agency began using automated scoring for written responses on statewide assessments. The goal was efficiency and consistency. The outcome sparked controversy.

Reports surfaced of a sharp increase in zero scores on written sections. That raised immediate alarms. Educators questioned whether valid responses were being misread. Parents worried about equity. Students felt blindsided by results that didn’t match classroom performance.

The concerns went beyond individual scores. Transparency became a central issue. How were responses evaluated? What safeguards existed for unusual but valid writing? Accountability felt distant when decisions were tied to opaque systems.

The backlash didn’t come from opposition to technology itself. It came from uncertainty about accuracy, fairness, and oversight. The episode remains a cautionary example of what happens when automation moves faster than trust.

Can AI Penalize Students for Thinking Differently?

Yes. And that possibility sits at the core of ongoing skepticism.

AI favors patterns. It learns from what it sees most often. When a student’s response follows an unexpected structure, uses an unusual argument flow, or challenges assumptions creatively, the system may misinterpret strength as weakness. A strong idea can look disorganized if it doesn’t resemble prior examples.

Human graders can pause. They can infer intent. They can recognize originality. AI cannot do that reliably yet. It identifies patterns, not purpose.

This tension explains why many educators insist on human involvement in scoring.

The risk isn’t that AI makes mistakes. Humans do too. The risk is that mistakes become systematic, quietly penalizing students whose thinking doesn’t fit the mold. That concern, more than speed or efficiency, drives resistance and caution around AI grading in high-stakes testing.

Are Colleges Using AI Beyond Test Scoring?

Yes. And it’s happening quietly, mostly behind the scenes. As application volumes climb, colleges are turning to AI tools to assist with admissions essay review, not to decide outcomes, but to manage scale. Surveys show that 48 percent of institutions plan to use AI in admissions, often as a screening aid rather than a final judge.

These systems flag writing level, surface potential red flags, and help admissions officers prioritize where human attention is most needed. The goal is triage. Not replacement. One visible example is the University of Miami, which has piloted AI support to streamline essay reading during peak cycles.

In practice, AI assists in a few specific ways:

- Essay coherence checks to spot structural issues quickly

- Pattern detection across applications to highlight similarities or anomalies

- Triage support for admissions officers so deeper reads happen where they matter

This use of AI doesn’t remove judgment. It reallocates it. Human readers still make decisions, but with better signal amid the noise.

If AI Exists, Why Do Colleges Still Care About SAT and ACT Scores?

Because AI hasn’t replaced the need for a common yardstick. Standardized tests still provide a shared benchmark across wildly different schools, grading systems, and curricula. That comparability matters, especially when GPA alone can’t tell the full story.

Tests also measure reasoning under pressure. Not just recall. Colleges argue that SAT and ACT scores capture aspects of academic readiness that coursework sometimes masks. That belief hasn’t faded with AI’s rise. If anything, it’s sharpened.

Several elite institutions have said this out loud. MIT and Georgetown University have reaffirmed testing as a useful signal. Even as test-optional policies spread, scores remain important for scholarships and merit-based aid administered through bodies like the College Board.

AI tools change preparation. They don’t erase the value of an objective measure.

Does AI Change How Students Should Prepare for the SAT and ACT?

It changes the how, not the why. AI tutoring tools now offer personalized prep paths, instant feedback, and adaptive practice. That can make studying more efficient. Gaps surface faster. Weak spots get targeted attention.

But AI doesn’t replace critical thinking. It can coach, not compete. Overreliance dulls problem-solving skills and creates a false sense of readiness. Students who let tools do the heavy lifting often struggle on test day, when synthesis and judgment matter.

Human practice still matters. Timed sections. Paper-and-pencil habits. Reviewing mistakes without shortcuts. AI works best as a guide alongside disciplined study, not a crutch. Used that way, it supports learning rather than hollowing it out.

How Can AI Improve Feedback Without Replacing Human Judgment?

Speed is AI’s advantage. Meaning is human territory. When feedback arrives immediately, learning sticks. AI can provide that speed at scale, flagging errors and patterns while the material is still fresh.

What it can’t provide is nuance. Humans deliver emotional support, encouragement, and context. They read intention. They notice growth. Hybrid systems work best because they combine immediacy with understanding.

In classrooms and assessments alike, timely feedback improves outcomes. AI accelerates the loop. Teachers complete it. That division of labor isn’t a compromise. It’s a design choice that keeps judgment human.

What Role Could Tools Like PowerGrader Play in Ethical Assessment?

Ethical assessment hinges on control. PowerGrader is built around that principle. It offers instructor-controlled grading logic, ensuring rubrics come from educators and stay aligned with course goals.

Pattern detection helps surface trends without penalizing originality. Consistent rubric application reduces fatigue and bias. And a human-in-the-loop governance model keeps accountability where it belongs, with teachers.

The result is efficiency without erasure. Fairness without opacity. Technology supports assessment, but doesn’t overrule it. That balance is what ethical scaling looks like. Try it today.

Conclusion

AI assists, but it does not fully replace humans. Risks around bias, transparency, and equity are real, and they demand oversight. At the same time, standardized tests remain relevant because they measure skills AI can’t stand in for.

The future isn’t human versus machine. It’s collaboration. When technology handles volume and humans handle meaning, assessment stays credible. That balance, maintained carefully, is what keeps trust intact.

Frequently Asked Questions (FAQs)

1. Is AI grading the SAT and ACT by itself?

No. Multiple-choice sections are machine scored, but writing-related evaluations use hybrid systems with human graders retaining final authority.

2. Does the Digital SAT use AI to decide scores?

The Digital SAT uses adaptive algorithms to route questions, not to judge creativity or intent. Humans still define standards and oversight.

3. Are colleges using AI to read admissions essays?

Yes, as a screening aid. AI flags patterns and writing levels, but admissions officers make final decisions.

4. Can AI grading be biased?

Yes. Bias can appear if training data is narrow. That’s why human review and transparency are essential safeguards.

5. Do selective colleges still value SAT and ACT scores?

Many do. Institutions like MIT and Georgetown view standardized tests as useful indicators of academic readiness.

6. Should students rely on AI for test prep?

AI helps with practice and feedback, but it shouldn’t replace critical thinking or timed, independent study.

7. Will AI replace human graders in the future?

Unlikely. High-stakes assessments still rely on human judgment to ensure fairness, nuance, and accountability.