If you’re exploring how to create an AI tutor, you’ve probably noticed something unsettling. There are plenty of tools that look impressive. Few actually teach.

Artificial intelligence has moved quickly into education. Apps promise instant explanations, automated grading, and personalized support at scale. On the surface, it feels like progress. And in some ways, it is. But many AI tutors fail for a simple reason: they prioritize speed over depth. They provide answers instead of building understanding.

When a student asks a question, the system responds immediately. Efficient, yes. Educational, not always. Students learn by grappling with material, by working through confusion, by making and correcting mistakes. If an AI tutor removes that struggle entirely, it removes growth with it.

So the real question is not just how to create an AI tutor, but how to create one that helps students solve problems rather than bypass them. That requires more than clever code. It demands pedagogy, guardrails, and design decisions that respect how learning actually works.

In this post, you’ll learn how to create an AI tutor that strengthens understanding, supports real education, and prepares students for the future rather than just delivering quick answers.

What Learning Problem Are You Trying to Solve?

Before you write a single line of code, pause. Ask the uncomfortable question. What problem are you actually trying to fix?

Educational technology has a habit of racing ahead of reflection. The tools get built first, and the pedagogy gets patched in later. That order rarely ends well. If you want to understand how to create an AI tutor that truly helps, you must begin with the learning experience itself.

Look closely at prior knowledge. Where are students getting stuck? Which key concepts create friction? One main point of friction is often not the material itself, but the gap between what the student already knows and what the course assumes they know. That gap matters.

Context matters too. In higher education, learners may need support with analytical thinking and complex material. In K–12, cognitive load and developmental readiness shape how students learn. An AI tutor should adapt to those realities. And it should support teachers, not replace them. The goal is to extend human guidance, not compete with it.

What Pedagogical Framework Should Guide Your AI Tutor?

Technology without pedagogy is just noise. Polished noise, perhaps, but noise all the same. If you are serious about how to create an AI tutor that actually teaches, you need a framework that respects how humans learn.

Start with the Zone of Proximal Development: the range of tasks a learner cannot yet do alone but can manage with guidance. Students learn best when the material feels slightly out of reach, not impossible, not trivial. That delicate edge is where growth happens. Too easy, and attention drifts. Too hard, and motivation collapses.

Then consider Bloom’s Taxonomy. Memorizing facts sits at the bottom. Analysis, evaluation, and creation require deeper cognitive effort. Your AI tutor should not stop at recall. It should push thinking upward.

Active engagement matters as well. Passive consumption rarely builds durable skills. Constructive and interactive learning, where the learner responds, reflects, corrects error, and refines understanding, produces stronger outcomes.

Socratic questioning ties it together. Instead of supplying an explanation immediately, the system can ask probing questions that nudge the learner toward insight.

- Target the Zone of Proximal Development by challenging learners just beyond current ability

- Scaffold learning through hints rather than direct solutions

- Use probing questions to deepen understanding

- Encourage students to explain answers in their own words

- Move learners from passive to active to constructive interaction

When you design around these principles, the AI becomes a guide, not a shortcut.

How Do You Design the Intelligence Layer of an AI Tutor?

Now you move beneath the surface. The visible interface, the friendly responses, the smooth conversation flow, all of that sits on top of something quieter. The intelligence layer.

Most AI tutors begin with a base model, often a large language model trained on vast amounts of text. That model can generate responses, follow instructions, and simulate conversation. Impressive, yes. But raw capability is not enough. If you stop there, your tutor may sound fluent yet drift into unreliable territory.

You need to fine-tune it. Not with random internet scraps, but with curated, pedagogically rich datasets built around real content and actual learning objectives. Training should reflect research, structured material, and instructor-approved knowledge. Otherwise the system may respond confidently while being wrong, which is far worse than saying “I don’t know.”

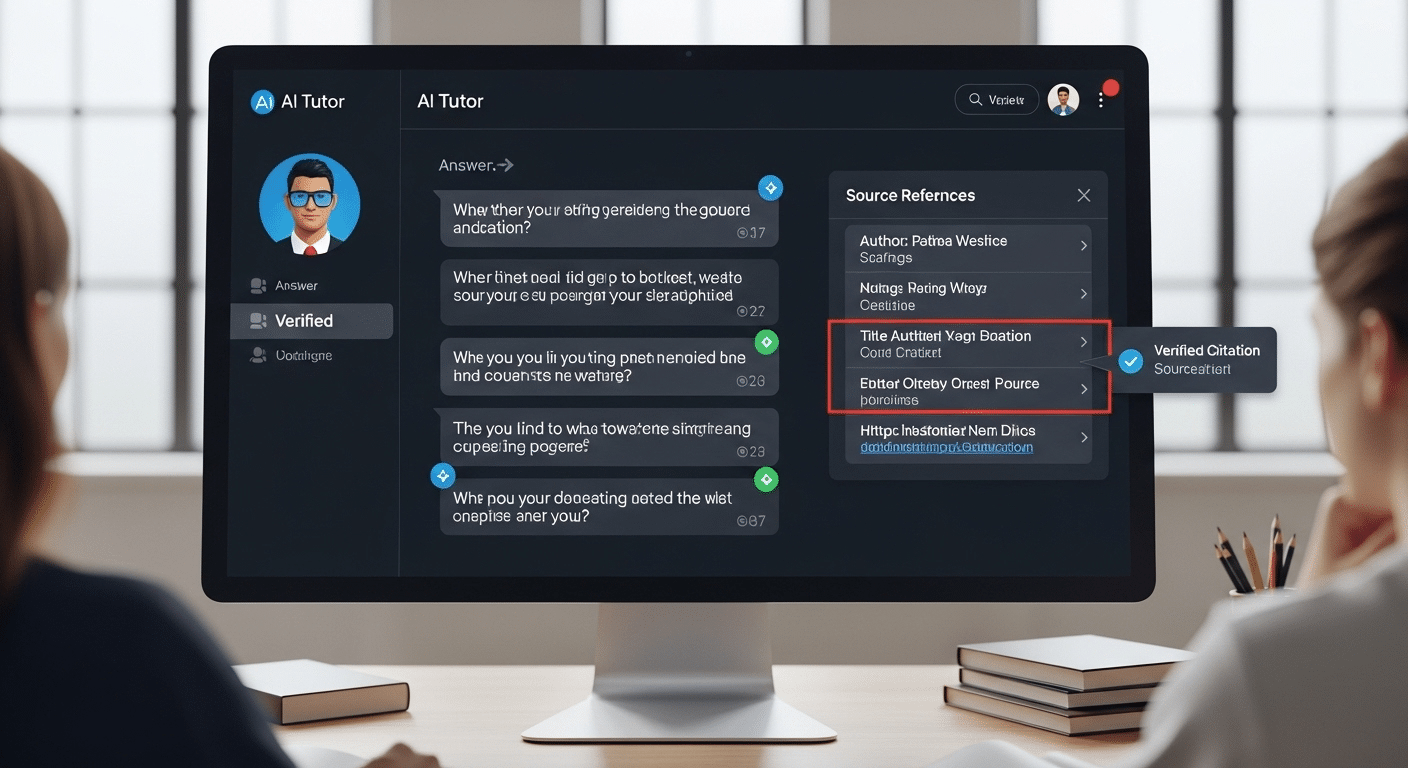

Ground the model using Retrieval Augmented Generation, often called RAG. In simple terms, this means the AI pulls from vetted documents before it answers, staying anchored in context rather than improvising freely.
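
The retrieval step can be illustrated with a minimal sketch. It assumes a tiny in-memory corpus and plain word-overlap scoring, standing in for the embedding search a production system would more likely use. The passages and the names `tokenize`, `retrieve`, and `build_prompt` are hypothetical, chosen only for illustration.

```python
import re

# Hypothetical vetted passages; in practice these would come from
# instructor-approved documents.
PASSAGES = [
    "Photosynthesis converts light energy into chemical energy stored in glucose.",
    "Cellular respiration releases energy from glucose to produce ATP.",
    "Mitosis is the process by which a cell divides into two identical cells.",
]

def tokenize(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z]+", text.lower()))

def retrieve(question, passages, k=2):
    """Return up to k passages sharing at least one word with the question,
    ranked by how many words they share."""
    q = tokenize(question)
    scored = [(len(q & tokenize(p)), p) for p in passages]
    scored = [(s, p) for s, p in scored if s > 0]
    scored.sort(key=lambda sp: sp[0], reverse=True)
    return [p for _, p in scored[:k]]

def build_prompt(question, passages):
    """Place the retrieved context ahead of the student's question."""
    context = "\n".join(f"- {p}" for p in retrieve(question, passages))
    return f"Use only this context:\n{context}\n\nStudent question: {question}"
```

The prompt returned by `build_prompt` is what would be sent to the language model, so the model sees the vetted passages before it sees the question.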

Use prompts strategically. Clear instructions guide how the program responds, how it phrases explanations, how it manages conversation flow. Good code matters. But disciplined design matters more.
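
As a rough sketch of what such instructions might look like, the function below assembles a system prompt from a few tutoring rules. The function name, parameters, and the wording of each rule are assumptions for illustration; real prompts need testing with actual learners.

```python
def build_system_prompt(course, level, max_hints=3):
    """Compose tutoring instructions. The wording is illustrative, not a tested prompt."""
    rules = [
        f"You are a tutor for {course}, working with {level} students.",
        "Ask one guiding question before offering any explanation.",
        f"Offer at most {max_hints} hints before showing a worked solution.",
        "Ask the student to restate the idea in their own words.",
        "If the course material does not cover the question, say so.",
    ]
    return "\n".join(rules)
```

Keeping the rules in a list makes it easier to change one instruction at a time and observe how the tutor's behavior shifts.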

How Can You Ensure Accuracy and Prevent Hallucinations?

Hallucinations are not mystical. They are predictable. When a model lacks reliable grounding, it fills the gap with text that is statistically plausible rather than verified. The result can sound polished, even authoritative, yet quietly wrong.

If you want to understand how to create an AI tutor that educators can trust, accuracy cannot be optional. Students will assume the system is correct. That assumption carries weight.

Start by narrowing the knowledge boundary. Do not allow the AI to roam freely across the open internet. Anchor it to a defined body of research and course material. Confirm that every response can be traced back to vetted sources. Then test it, repeatedly, under challenging conditions.

Reinforcement Learning from Human Feedback, often shortened to RLHF, helps refine behavior. Human reviewers rate responses and flag error patterns, and those ratings are used to steer the model toward more reliable output over time.

- Use one solid core document as a source of truth

- Add 1–3 additional content documents and FAQs

- Design the tutor to refuse answers outside uploaded material

- Use multiple evaluators to improve response consistency

- Audit for bias and misinformation

Trust grows from disciplined limits. Not from unlimited answers.
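
The refusal rule from the list above could be sketched as a simple coverage check: if too few of the question's content words appear in any uploaded document, the tutor declines. The stop-word list, the 0.5 threshold, and the function names are all assumptions for illustration; a real system would likely rely on semantic similarity rather than word matching.

```python
import re

# A small, hypothetical stop-word list; real lists are much longer.
STOP_WORDS = {"a", "an", "the", "is", "are", "what", "how", "does",
              "do", "of", "in", "to", "and", "why"}

def content_words(text):
    """Return the lowercase words in the text, minus common stop words."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOP_WORDS

def coverage(question, document):
    """Fraction of the question's content words that appear in the document."""
    q = content_words(question)
    if not q:
        return 0.0
    return len(q & content_words(document)) / len(q)

def answer_or_refuse(question, documents, threshold=0.5):
    """Return the best-supporting document, or None to signal a refusal."""
    best = max(documents, key=lambda d: coverage(question, d), default=None)
    if best is None or coverage(question, best) < threshold:
        return None
    return best
```

When `answer_or_refuse` returns `None`, the tutor can tell the student the question falls outside the course material instead of improvising an answer.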

How Should an AI Tutor Provide Feedback That Builds Understanding?

Feedback is where an AI tutor either earns its place or quietly undermines it. When students work through assignments or practice problems, timing matters. Immediate feedback helps anchor learning while the material is still fresh.

If correction comes days later, the connection weakens. But speed alone is not enough. The feedback must carry substance.

High-information feedback includes verification and elaboration. In other words, the system should confirm whether an answer is correct, then provide an explanation that clarifies why.

That explanation should strengthen understanding, not overwhelm the learner with excess detail. Cognitive overload is real. Too much information at once, and even capable students disengage.

Correction should be precise. Not vague encouragement, not robotic repetition. When mistakes appear, identify them clearly. When reasoning is strong, say so. Reinforce what works.

- Highlight mistakes clearly and explain why

- Provide hint-based support rather than full solutions

- Adapt feedback in real time

- Encourage learners to reflect before responding

- Confirm understanding before moving forward

Good feedback turns error into progress. Poor feedback just delivers answers and moves on.
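
A minimal sketch of verification plus elaboration with hint-based support might look like the following. The `Problem` structure, the exact-match answer check, and the message wording are assumptions for illustration; real answer checking usually needs to be more forgiving.

```python
from dataclasses import dataclass, field

@dataclass
class Problem:
    prompt: str
    answer: str
    hints: list = field(default_factory=list)  # ordered from gentle to specific
    explanation: str = ""

def give_feedback(problem, response, attempts_so_far):
    """Verify the response, then elaborate: explain on success, hint on failure."""
    correct = response.strip().lower() == problem.answer.strip().lower()
    if correct:
        return {"correct": True, "message": f"Correct. {problem.explanation}"}
    if attempts_so_far < len(problem.hints):
        hint = problem.hints[attempts_so_far]
        return {"correct": False, "message": f"Not yet. Hint: {hint}"}
    # Hints are exhausted, so show the reasoning rather than leave the learner stuck.
    return {"correct": False,
            "message": f"The answer is {problem.answer}. {problem.explanation}"}
```

Each wrong attempt unlocks the next hint, and the full solution appears only once the hints run out, which keeps the learner doing as much of the work as possible.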

How Do You Personalize the Learning Experience Without Overcomplicating It?

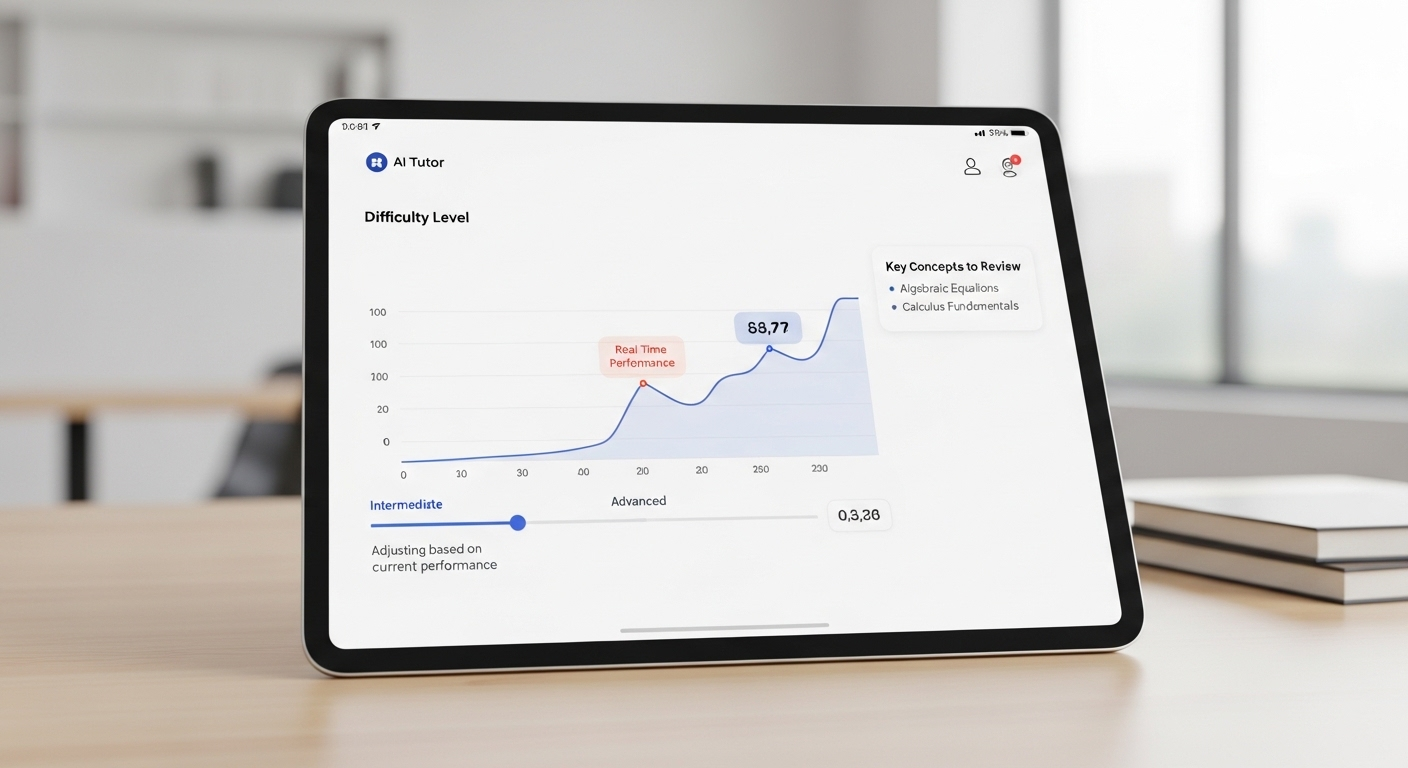

To create a personalized AI tutor, you do not need an elaborate maze of features. You need clarity. Start with adaptive learning paths driven by analytics. As the learner interacts with the app, the system tracks performance, response time, recurring mistakes, and depth of understanding. Based on that data, it adjusts difficulty and pacing in quiet, almost invisible ways.

If a student demonstrates strong ability with key concepts, increase the challenge. If confusion appears, slow down and provide structured support. The goal is balance, not constant escalation.
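
One way to sketch this adjustment is a rule that looks at a learner's most recent answers. The five-answer window, the 80% and 40% thresholds, and the 1 to 5 difficulty scale are assumptions for illustration, not recommended values.

```python
def next_difficulty(current, recent_results, low=1, high=5, window=5):
    """Raise difficulty after sustained success, lower it after sustained struggle.

    recent_results is a list of booleans, oldest first, one per answered question.
    """
    recent = recent_results[-window:]
    if len(recent) < window:
        return current  # not enough evidence yet
    accuracy = sum(recent) / len(recent)
    if accuracy >= 0.8:
        return min(current + 1, high)
    if accuracy <= 0.4:
        return max(current - 1, low)
    return current
```

Because the level changes by at most one step and only after a full window of answers, difficulty shifts gradually rather than swinging on a single mistake.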

Support multimodal interaction whenever possible. Some learners respond best to text. Others benefit from voice input or short video explanations. Offering multiple formats increases accessibility without adding unnecessary friction.

Above all, manage cognitive load. Keep the interface clean. Keep instructions clear. Personalization should feel natural, not overwhelming. When done well, it becomes engaging rather than distracting, tailored to the learner without becoming complicated for its own sake.

How Do You Keep Teachers in the Loop?

An AI tutor should never operate in isolation. Education does not exist in a vacuum, and technology should not quietly replace the judgment of teachers. If you are serious about how to create an AI tutor that works in higher education or any structured learning environment, you must design for human oversight from the beginning.

Teachers need visibility. They need to understand how students are performing, where confusion is clustering, which key concepts are sticking and which are not. Dashboards and clear insights make this possible. Data, when presented responsibly, becomes a lens rather than a burden.

AI can surface patterns quickly. A teacher still interprets them.

Without that loop, the system risks drifting away from classroom goals. With it, the tutor becomes a form of structured support rather than an invisible authority.

- Share performance data with educators

- Allow teachers to review AI responses

- Use AI as a supplement, not a replacement

- Maintain contact between the student and a real person
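
The kind of summary a teacher dashboard might display can be sketched as a per-concept roll-up of student attempts. The record fields (`concept`, `correct`) and the 60% flag threshold are assumptions for illustration.

```python
from collections import defaultdict

def concept_summary(attempts, flag_below=0.6):
    """Aggregate attempts into per-concept accuracy and flag weak concepts."""
    totals = defaultdict(lambda: [0, 0])  # concept -> [correct, total]
    for a in attempts:
        totals[a["concept"]][0] += int(a["correct"])
        totals[a["concept"]][1] += 1
    summary = {}
    for concept, (correct, total) in totals.items():
        accuracy = correct / total
        summary[concept] = {
            "accuracy": round(accuracy, 2),
            "attempts": total,
            "flagged": accuracy < flag_below,
        }
    return summary
```

A flagged concept is a prompt for the teacher to look closer, not a verdict; the interpretation stays with the human.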

What Ethical and Security Guardrails Must You Implement?

An AI tutor deals with something fragile: student data, academic records, patterns of behavior, even mistakes that reveal how someone thinks. That responsibility is not abstract. It is immediate.

If you want your system to be reliable in the real world, compliance is non-negotiable. In the United States, FERPA protects student records. In the European Union, GDPR governs personal data. Similar regulations exist globally. Your design must account for them from day one, not as an afterthought.

Responsibility also extends beyond privacy. AI models trained on open internet material can inherit bias or produce subtle error patterns that affect certain learners unfairly. Without careful auditing, those issues persist quietly.

Ethical guardrails protect both the learner and the institution. They shape how the system behaves now and in the future.

- Protect student records with robust encryption

- Audit algorithmic bias using diverse datasets

- Implement guardrails against harmful or inaccurate responses

- Ensure equitable access to prevent disparities

A well-designed AI tutor does not just teach content. It operates within boundaries that safeguard trust.
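
One small, concrete guardrail is to strip direct identifiers before learning data reaches analytics. The sketch below uses keyed hashing (HMAC-SHA256 from the Python standard library) to pseudonymize student IDs. Note that this is pseudonymization, a complement to encryption at rest and in transit rather than a substitute for it, and it does not establish FERPA or GDPR compliance on its own. The record fields and function names are assumptions for illustration.

```python
import hashlib
import hmac

def pseudonymize(student_id, secret_key):
    """Derive a stable pseudonym from a student ID using keyed HMAC-SHA256.

    secret_key must be bytes and should be stored separately from the data.
    """
    digest = hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]

def scrub_record(record, secret_key):
    """Return a copy of the record with the ID replaced and the name dropped."""
    cleaned = {k: v for k, v in record.items() if k not in ("student_id", "name")}
    cleaned["pseudonym"] = pseudonymize(record["student_id"], secret_key)
    return cleaned
```

The same student always maps to the same pseudonym under a given key, so progress can still be tracked over time without exposing who the student is.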

How Do You Test and Refine an AI Tutor Before Launch?

You do not release an AI tutor and hope for the best. You test it, break it, and test it again. Iterative testing should involve both students and teachers. Let real learners interact with the system in authentic classroom conditions.

Observe where confusion arises, where conversation flow feels unnatural, where responses drift away from the intended material. Small friction points matter more than you think.

Collect structured feedback after each test cycle. Ask what felt engaging. Ask what felt mechanical. Measure learning outcomes, not just user satisfaction. Did understanding improve? Did performance on assignments shift in measurable ways? Research-backed evaluation keeps you grounded.
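
One common way to quantify whether understanding improved is the normalized gain, the share of the available improvement a learner actually achieved between a pre-test and a post-test:

$$g = \frac{\text{post} - \text{pre}}{\text{max} - \text{pre}}$$

A minimal sketch, assuming scores on a 0 to 100 scale and hypothetical function names:

```python
def normalized_gain(pre, post, max_score=100):
    """Fraction of the available improvement a learner actually achieved."""
    if pre >= max_score:
        return 0.0  # no room to improve
    return (post - pre) / (max_score - pre)

def mean_gain(pairs, max_score=100):
    """Average normalized gain across (pre, post) score pairs."""
    gains = [normalized_gain(pre, post, max_score) for pre, post in pairs]
    return sum(gains) / len(gains) if gains else 0.0
```

A learner who moves from 40 to 70 has closed half of the 60-point gap to a perfect score, so the gain is 0.5. Comparing gains between test cycles gives a measure that is independent of how satisfied users say they are.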

Refine prompts carefully. Slight adjustments in instructions can dramatically improve how the AI responds. Monitor cognitive load as well. If learners appear overwhelmed, simplify.

After each test round, adjust based on data. Then repeat. Launch should feel earned, not rushed. Continuous refinement is part of responsible design, not a postscript.

What Does a Future-Ready AI Tutor Look Like?

A future-ready AI tutor does more than respond quickly. It promotes critical thinking, nudging students to analyze, compare, question, and justify rather than simply repeat. It scales quality instruction without flattening it, preserving rigor even as access expands.

Active engagement sits at the center. The learner interacts, reflects, revises, practices. The system adapts across disciplines, from quantitative problem sets to conceptual discussions, without losing coherence. In higher education especially, scale matters, but so does depth.

The real test is this: can the tutor support thousands of students while still respecting individual ability and context?

That is the standard emerging tools must meet. And it is where platforms like CoTutor begin to enter the conversation.

Why CoTutor Represents a Smarter Way to Create an AI Tutor

If you have followed the thread so far, a pattern should be clear. Creating an AI tutor that truly teaches requires structure, restraint, and educational intent. CoTutor reflects that philosophy.

Rather than improvising from the open internet, CoTutor is grounded in vetted, instructor-approved content. Its intelligence layer is built around pedagogical scaffolding, encouraging students to think in their own words, not simply extract answers. The design prioritizes institutions, particularly in higher education, where accountability and measurable outcomes matter.

Human oversight is not an afterthought. Teachers remain in the loop, able to monitor progress and intervene when necessary. The goal is support, not substitution.

- Curriculum-aligned conversation flow

- High-information feedback mechanisms

- Instructor visibility dashboards

- Secure, compliant infrastructure

To ensure easy access for students and educators, institutions can post the CoTutor link on channels people already use, such as the LMS, email, or an intranet.

CoTutor embodies what this guide has outlined: a disciplined, research-informed approach to building an AI tutor that strengthens learning rather than shortcuts it.

Conclusion

If you step back, the path becomes clearer. Creating an AI tutor is not a question of adding more features or louder marketing claims. It begins with pedagogy. It requires defined learning goals, structured scaffolding, accurate content, human oversight, and disciplined guardrails.

Many AI tutors fail because they chase speed and convenience. Effective ones slow down just enough to foster understanding.

Design intentionally. Test rigorously. Keep teachers involved. Prioritize learning over automation.

When you build with those principles in mind, artificial intelligence becomes a meaningful support system rather than a shortcut. Explore how CoTutor can help your institution build AI tutoring the right way.

Frequently Asked Questions (FAQs)

1. What is the first step in creating an AI tutor?

The first step is defining the learning problem you want to solve. Identify key concepts, prior knowledge gaps, and clear objectives. Before writing code or selecting a model, clarify how students learn and what outcomes the tutor should improve.

2. Why do many AI tutors fail in education?

Many AI tutors fail because they focus on providing answers instead of fostering understanding. When a system prioritizes speed over critical thinking, students may complete tasks but fail to develop problem-solving skills or long-term retention.

3. Do you need to train an AI tutor on internet data?

No. In fact, relying heavily on open internet data can reduce reliability. A stronger approach uses curated, instructor-approved content as the foundation, ensuring the tutor responds within a defined academic context rather than generating loosely sourced material.

4. How can you prevent hallucinations in an AI tutor?

You can greatly reduce hallucinations by grounding the model in vetted documents, restricting responses to approved materials, and testing outputs rigorously. Human review and structured prompt design further reduce error and improve consistency.

5. Should AI tutors replace teachers?

AI tutors should not replace teachers. They work best as supplemental support tools, offering practice and feedback while educators provide judgment, context, and human guidance that technology alone cannot replicate.

6. How do you personalize an AI tutor for different learners?

Personalization comes from adaptive learning paths that adjust difficulty, pacing, and feedback based on performance data. The system should respond to learner ability without overwhelming them, offering targeted support where needed.

7. Can you build an AI tutor using only one document?

Yes, you can begin with one strong, up-to-date source document as a foundation. For deeper expertise, add a few additional materials and FAQs to strengthen coverage while maintaining clear boundaries.