It starts quietly. A student opens a blank document, toggles between their thoughts and a blinking cursor, then—almost without thinking—opens one of the many AI writing tools now baked into everyday life. Grammarly. ChatGPT. A sidebar suggestion. Nothing dramatic. Just help. Or so it seems.

College application essays, though, sit on a different fault line. They are meant to show judgment, voice, growth. So when AI-generated content enters the picture, nerves kick in on both sides.

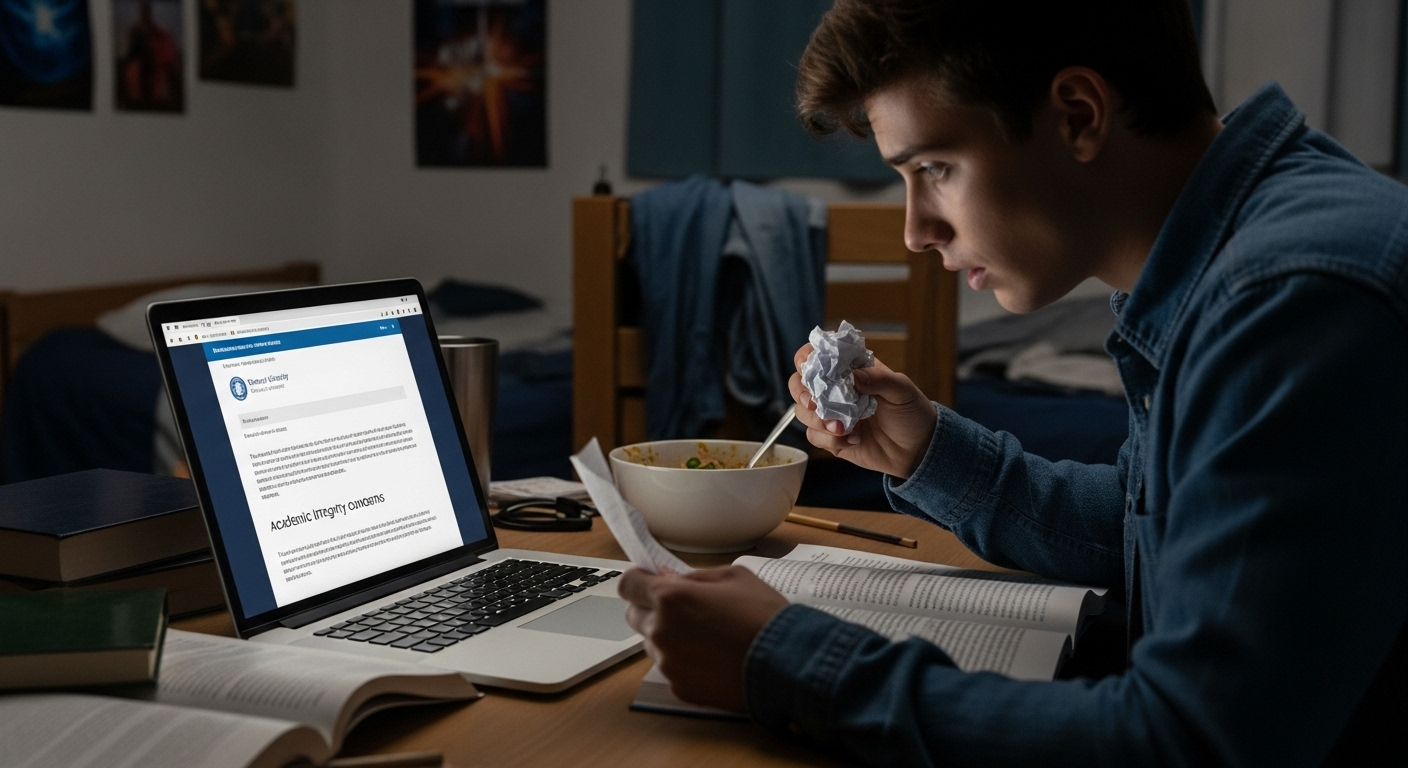

Students worry about crossing an invisible line and triggering consequences they didn’t intend. Colleges worry about fraud, fairness, and whether the admissions process still measures what it claims to measure.

Meanwhile, policies are changing in real time. Detection tools improve, then misfire. Enforcement varies by institution. The question—do colleges check for AI in application essays—keeps resurfacing because the ground underneath it keeps shifting.

Do Colleges Actually Check for AI in Application Essays?

Short answer? Yes. Sometimes. And not in the same way everywhere. Many colleges now do check for AI, but practices vary widely across the college application process.

By some estimates, roughly 40 to 50 percent of institutions are testing or actively using AI detection tools, especially at large admissions offices handling thousands of essays. That said, detection software is rarely a final judge. More often, it’s a signal. A nudge. A reason to look closer.

Admissions officers don’t auto-reject essays because a tool throws a number on a screen. Instead, AI detection is folded into a broader review process that includes human judgment, contextual reading, and comparison against the rest of a student’s application. Voice. Consistency. Plausibility.

It’s also worth noting that the absence of a published AI policy doesn’t mean AI use is allowed. Some colleges expect restraint by default, others rely on honor codes tied to academic integrity. In practice, checking for AI is less about catching students out and more about protecting trust in admissions decisions—something colleges can’t afford to lose.

How the Common App and Major Platforms Treat AI Use

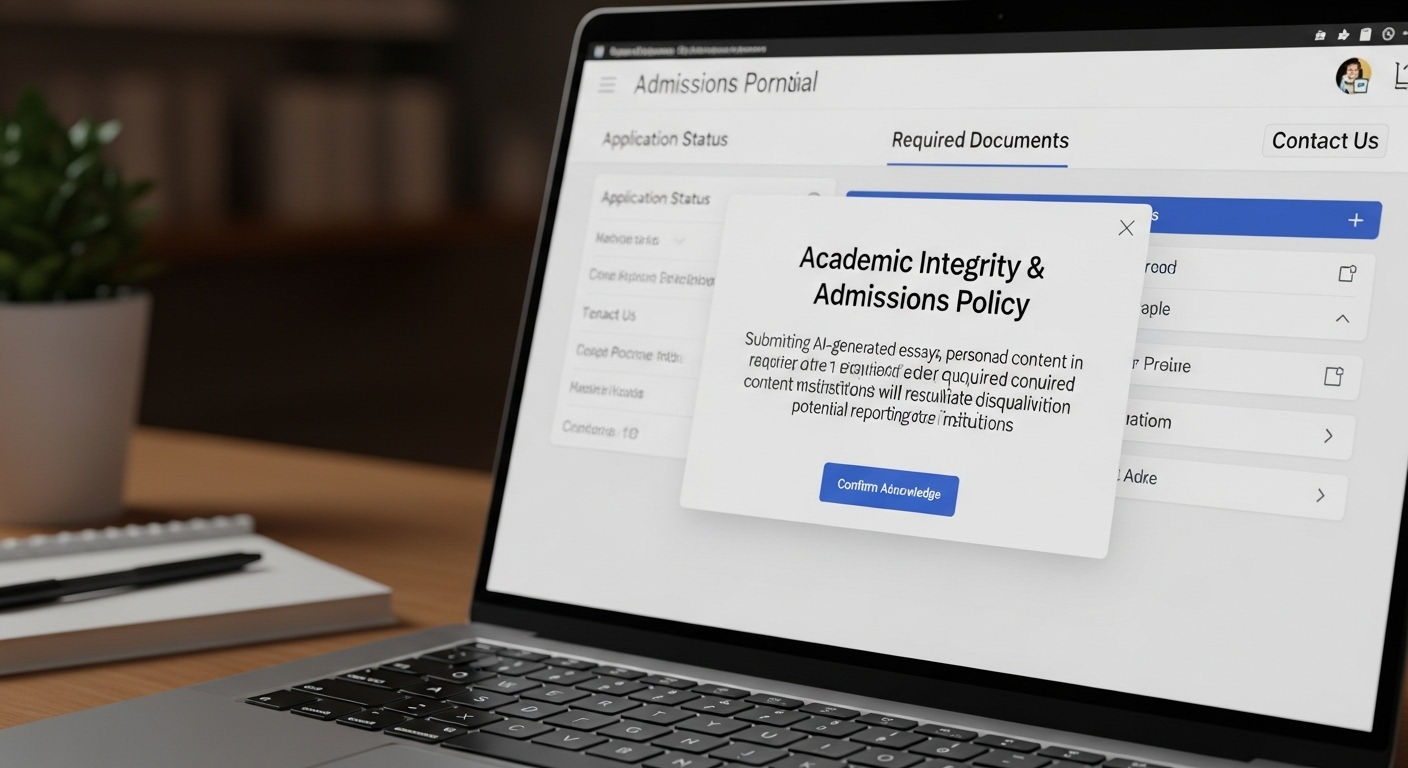

This part tends to surprise people. The Common App doesn’t dance around the issue. Its fraud policy is blunt: submitting substantive AI-generated content as part of an application is considered fraud. Full stop.

And because the Common App sits upstream of hundreds of colleges, that rule applies across all member institutions, even if individual schools phrase their guidance a little differently.

Where it gets tricky is what counts as substantive. Brainstorming? Generally tolerated. Asking an AI tool to help organize ideas, tighten clarity, or catch obvious grammar slips? Often acceptable.

Letting AI generate the essay, or large chunks of it? That crosses into authorship delegation, which the Common App explicitly prohibits.

Disclosure expectations are strict. If an application raises red flags, the investigation doesn’t stay local. A single AI-generated essay can trigger reviews across multiple colleges using the same platform. In other words, what feels like a small shortcut can ripple through the entire college admissions process. Quietly. And not in a good way.

What College Admissions Officers Are Really Looking For

Here’s the part that gets lost in the tech talk. Admissions readers aren’t chasing perfect prose. They’re chasing you. Or at least the closest thing to you that fits on a page.

They read thousands of essays. Patterns jump out fast. What they value isn’t polish, but presence. A sense that the writer actually lived the moment they’re describing, wrestled with it, maybe stumbled a bit, then thought something through.

What tends to land well:

- Personal growth that unfolds, not just gets declared

- Emotional depth rooted in specific moments

- Clear ownership of ideas and opinions

- Consistency with recommendation letters and transcripts

And the subtler signals matter too:

- Personal stories tied to lived experience

- Natural imperfections that sound human, not sloppy

- Details only the student would know

- A voice that stays consistent across all materials

An essay can be grammatically flawless and still feel hollow. Admissions officers notice that. Quickly.

How Colleges Use AI Detection Tools (and Their Limits)

Yes, colleges are using AI detection software. Tools like Turnitin, GPTZero, Copyleaks, and Originality.ai show up frequently in admissions workflows, especially at larger institutions, where they are used to screen college essays. These tools are often praised for their accuracy in identifying AI-generated content, but here’s the nuance that gets missed in online chatter.

These tools don’t “catch” AI the way plagiarism checkers catch copied sources. They analyze linguistic patterns, sentence structure, predictability, and statistical markers that might suggest machine generation. What they produce is a probability score. Not proof. Not authorship verification.
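To make “statistical markers” a little more concrete, here is a toy sketch of one such marker: how much sentence length varies across a passage. This is an illustration only, not how Turnitin, GPTZero, or any other real detector works. The function names (`sentence_lengths`, `length_variation`) and the crude punctuation-based sentence splitting are assumptions made up for this example.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Count words per sentence, splitting crudely on '.', '!' and '?'.

    Hypothetical helper for illustration; real tools use far richer models.
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def length_variation(text: str) -> float:
    """Return stdev / mean of sentence length (coefficient of variation).

    Lower values mean more uniform sentences. This is a single weak signal,
    not evidence of who wrote the text.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "I went home. She ran fast. We ate lunch."
varied = "No. I spent the whole summer rebuilding my grandfather's boat. It sank."

print(length_variation(uniform))  # 0.0, every sentence has three words
print(length_variation(varied))   # noticeably higher
```

Even in this toy version, the output is just a number describing the text. A careful human writer can produce uniform sentences, and a machine can be prompted to vary them, which is why a score like this says nothing definitive about authorship.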

And the limits are real:

- Edited or hybrid essays confuse detectors

- Non-native English speakers are disproportionately flagged, which makes false positives a significant fairness concern

- High-achieving writers trigger false positives more often than you’d expect

Because of that, detection tools are rarely used in isolation. Admissions officers typically pair them with human review: a flagged essay usually prompts a closer read, comparison with other application components, and sometimes follow-up questions. Think of detectors as smoke alarms. Sensitive ones. Useful, but not judges.

What Triggers Red Flags in Application Essays

Red flags don’t mean guilt. They mean pause. Admissions teams look for inconsistencies that don’t line up with the rest of an application.

Common warning signs include writing that feels overly polished but oddly shallow. Essays that say a lot, yet reveal very little. Conclusions that restate the prompt without adding insight. Or language that sounds impressive but detached, like it came from nowhere in particular.

Patterns that raise eyebrows:

- Uniform sentence lengths with predictable rhythm

- Formulaic transitions that feel pre-packaged

- Vague evidence instead of concrete moments

- No emotional risk-taking at all

Another quiet signal? Vocabulary that doesn’t match prior writing or academic context. Perfect grammar paired with zero personality can be just as suspicious as obvious errors.

Admissions officers aren’t hunting mistakes. They’re scanning for authenticity. When the voice disappears, that’s when questions start.

When Using AI Becomes a Serious Admissions Risk

This is where the line hardens. In the college admissions process, using AI to generate an essay is often treated not as a gray area, but as a breach of academic integrity.

Many institutions explicitly equate AI-generated text with contract cheating, the same category as paying someone else to write for you. Different tool. Same outcome.

The consequences can be severe. Rejection is the obvious one. Less obvious, but very real, are rescinded offers, flagged application files, or requests for additional verification.

Some students are asked to complete monitored writing exercises. Others are invited to interviews where they’re expected to explain ideas from their own essays, on the spot. Awkward. Stressful. And usually avoidable.

Here’s the distinction admissions teams care about most: assistance is not the same as authorship delegation. Getting help shaping ideas is one thing.

Handing over the thinking, the wording, the voice—that’s when AI use turns into misconduct, even if the text is technically “original.”

What Types of AI Use Are Usually Allowed (and Why)

Most colleges aren’t anti-technology. They’re anti-misrepresentation. That’s why limited AI assistance is often allowed, sometimes even encouraged, as long as the student remains the actual author.

Commonly accepted uses include:

- Brainstorming essay topics or angles

- Organizing scattered ideas into a clearer outline

- Checking grammar, spelling, or basic readability

- Clarifying sentence structure without changing meaning

A few guardrails tend to matter more than the tool itself:

- AI acts as a planning partner, not a writer

- The student keeps their own voice and phrasing

- No AI-written paragraphs are submitted as final work

When used this way, AI supports thinking rather than replacing it. And that’s the point. Admissions officers aren’t grading software skills.

They’re trying to understand who you are, in your own essays, using your own words—even if they’re a little imperfect.

Why AI Detection Alone Can’t Decide Admissions Outcomes

Here’s the uncomfortable truth many admissions offices have already learned the hard way: AI detection tools don’t deliver certainty. They deliver probabilities. Educated guesses. Signals that something might be off, not proof that it is.

Detection methods analyze linguistic patterns, predictability, and statistical markers. Useful? Sometimes. Decisive? No. A high score doesn’t mean misconduct, and a low score doesn’t mean authenticity.

False accusations carry real consequences, from legal exposure to reputational damage, and colleges know it. That’s why many institutions explicitly prohibit making admissions decisions based on detector output alone.

Admissions outcomes demand defensible evidence, not algorithmic hunches. Academic integrity frameworks increasingly emphasize fairness, due process, and context.

An automated flag without supporting review simply doesn’t meet that bar. Especially when students’ futures are on the line.

So yes, detection tools may open a door to closer review. But they cannot, and should not, close the case by themselves.
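For admissions teams that wire a detector into their workflow, the “open a door, never close the case” principle can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any institution’s actual process: the `DetectorResult` type, the `triage` function, and the `0.8` threshold are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    # Deliberately no "reject" option: a score alone never decides an outcome.
    STANDARD_REVIEW = "standard review"
    CLOSER_HUMAN_REVIEW = "closer human review"


@dataclass
class DetectorResult:
    score: float  # hypothetical probability-like score between 0 and 1


def triage(result: DetectorResult, threshold: float = 0.8) -> Action:
    """Route an essay based on a detector score.

    A high score only escalates the essay to a human reader; a low score
    does not certify authenticity, it just follows the normal path.
    The threshold value is an assumption chosen for illustration.
    """
    if not 0.0 <= result.score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if result.score >= threshold:
        return Action.CLOSER_HUMAN_REVIEW
    return Action.STANDARD_REVIEW


print(triage(DetectorResult(score=0.95)).value)  # closer human review
print(triage(DetectorResult(score=0.10)).value)  # standard review
```

The design choice worth noticing is what the code cannot do. Because the set of possible actions contains no automatic rejection, any adverse decision has to come from a person weighing the rest of the file.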

How Admissions Teams Verify Authenticity Without Guessing

Instead of playing algorithm roulette, admissions teams rely on comparative, human-centered verification. It’s quieter. Slower. And far more reliable.

What does that look like in practice?

- Comparing application essays with recommendation letters and transcripts

- Watching for voice consistency across short answers, supplements, and activities

- Using follow-up interviews or timed writing prompts when questions arise

- Reviewing context, background, and growth, not isolated text samples

In other words, they look sideways, not just straight at the essay.

Key elements admissions teams weigh:

- Cross-document consistency in tone, maturity, and perspective

- Human judgment from experienced readers who know what authentic writing feels like

- Contextual evaluation, especially for nontraditional or multilingual applicants

This approach doesn’t assume guilt. It asks better questions. And it protects both applicants and institutions from overreach.

Where TrustEd Fits in College Admissions Integrity

This is exactly the gap TrustEd was built to address. Rather than guessing whether text “looks AI-written,” TrustEd focuses on authorship verification.

It brings together writing history, evidence trails, and structured human review to support decisions that are fair, explainable, and defensible. No black boxes. No single-score verdicts.

With TrustEd, admissions teams can:

- Reduce false positives that unfairly penalize students

- Resolve concerns without escalating unnecessary disputes

- Preserve trust while still protecting institutional integrity

- Rely on human-led decisions, supported by evidence, not replaced by software

The philosophy is simple but powerful: verification over detection. Fairness over fear. Trust over shortcuts.

As AI becomes part of the admissions landscape, TrustEd helps ensure that integrity doesn’t come at the expense of students—or common sense.

The Bottom Line

So, yes. Many colleges do check for AI use. That part is no longer speculative. But here’s the quieter truth that tends to get lost in the noise: software rarely decides anything on its own.

What actually carries weight is authenticity. Voice. Ownership. The sense that a real person wrestled with real ideas and put them on the page, imperfectly perhaps, but honestly.

Policies vary, sometimes wildly, from one institution to the next. Authenticity doesn’t. Essays that lean too hard on AI often end up sounding smooth yet hollow, polished but strangely generic, like a suit bought off the rack and never tailored.

Transparency and clear ownership remain the safest path for students navigating this shifting ground.

If you’re wondering how institutions can protect integrity without punishing the wrong people, it’s worth seeing how TrustEd helps admissions teams verify authorship, reduce false accusations, and maintain trust in an AI-shaped admissions landscape.

Frequently Asked Questions (FAQs)

1. Do colleges automatically reject essays flagged as AI-generated?

No. A flag from AI detection software is rarely treated as a final verdict. Most admissions offices use it as a signal for closer review, followed by human evaluation and contextual checks before any decision is made.

2. Can AI detectors really tell who wrote an essay?

Not definitively. AI detectors estimate the probability that text was produced by a large language model, based on linguistic patterns. They cannot see intent, drafting history, or personal context, so they cannot establish authorship, which is why colleges avoid relying on them alone.

3. Is using AI for grammar checking allowed?

Often, yes, but it depends on the institution. Many colleges allow AI for grammar, spelling, or readability checks, as long as the ideas, structure, and final wording clearly reflect the student’s own work.

4. What happens if an essay is falsely flagged?

Typically, nothing automatic. Flagged essays usually trigger additional human review. In some cases, students may be asked for clarification, context, or to complete a short writing exercise to confirm authorship.

5. Do colleges interview students if AI use is suspected?

Sometimes. Interviews, follow-up questions, or monitored writing prompts are used by some admissions teams to resolve uncertainty. These steps are meant to verify authenticity, not to punish by default.

6. How can students protect themselves from accusations?

The best protection is transparency and consistency. Write in your natural voice, keep drafts, follow each school’s AI policy closely, and avoid letting AI generate substantive content you plan to submit as your own.