Artificial intelligence has expanded what you can produce in a matter of seconds. Essays, summaries, code, even polished arguments can now be generated with a prompt. That capability is impressive. It is also unsettling. In the age of AI, the boundaries of academic work are no longer as clear as they once seemed.

Academic integrity has traditionally rested on the expectation that the work you submit reflects your own thinking and understanding. Now, AI-generated content can closely resemble human writing, which complicates that expectation. When technology can draft ideas faster than you can outline them, questions naturally follow.

So how does AI affect academic integrity? It challenges long-standing assumptions about authorship, effort, and originality. To answer that fully, you first need to clarify what academic integrity actually means today, and who defines its standards.

What Has AI Changed About Academic Work?

Generative AI has altered the writing process in ways that are difficult to ignore. You can now generate essays, solve coding problems, or summarize research articles in seconds.

What once required hours of drafting and revision can appear almost fully formed with a single prompt. That efficiency changes how academic work is produced and, more importantly, how it is evaluated.

AI-generated text can blur the line between your own reasoning and automated output. When students submit work that is partly or fully AI-generated, questions arise about authorship and originality. The issue is not only plagiarism in its traditional sense.

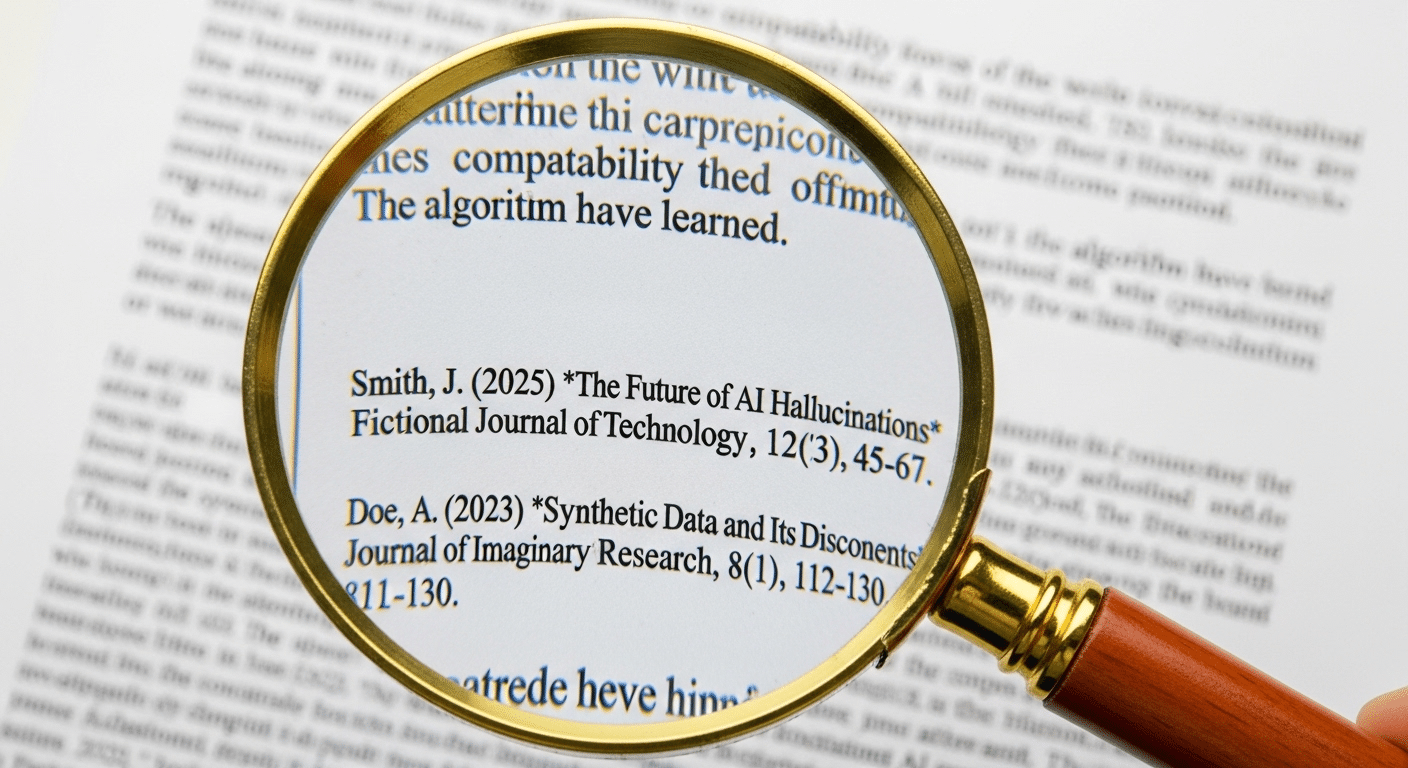

AI can fabricate research data, invent citations, or create entirely fictitious datasets that look credible at first glance. That undermines scholarly trust.

Over time, over-reliance on such tools can weaken critical thinking and the development of original thought. Authenticity becomes harder to verify, and the integrity of student work becomes more fragile.

AI now enables:

- Full assignment generation

- Paraphrasing at scale

- Automated code completion

- Fabricated references or datasets

- Realistic but false research output

These capabilities expand possibility, but they also complicate integrity in ways universities are still learning to address.

Where Does Ethical Use End and Academic Misconduct Begin?

The tension around AI use rarely centers on the tool itself. It centers on intent and disclosure. Ethical use of AI can support learning.

There is a meaningful distinction between using AI for brainstorming ideas and submitting AI output as if you authored it independently. When you rely on generative tools to clarify concepts, outline arguments, or refine sentence structure, you are still responsible for shaping the intellectual direction.

However, failing to disclose substantial AI assistance, especially when it produces significant portions of an assignment, becomes misrepresentation.

Proper citation and proper attribution remain core expectations. If AI contributes meaningfully to your academic work, transparency matters.

Responsible AI use requires human vetting of all output. AI systems can generate convincing text, but they can also introduce errors, bias, or fabricated claims.

Many students struggle with these nuances. The boundary is not always obvious. Yet the principle is consistent: academic integrity requires that the work you submit genuinely reflects your understanding, judgment, and effort.

Why Is Detection Alone Not Enough?

It may seem reasonable to respond to AI-related academic dishonesty with stronger detection tools. In practice, that approach quickly reveals its limits.

Current detection tools struggle to reliably identify AI-generated text. Generative models evolve rapidly, often faster than the systems designed to detect them. What works today may fail tomorrow.

False positives are a serious concern. When authentic student work is flagged incorrectly, trust erodes. Students feel accused rather than supported.

Faculty feel uncertain about the reliability of the tools they are expected to use. Traditional plagiarism detection methods, which compare text against existing sources, are becoming less effective against AI-generated content that is technically original but not genuinely authored.

Relying only on policing students creates a narrow model of AI academic integrity, one focused more on suspicion than learning.

Limitations of AI detection tools:

- Significant margins of error

- False positives that can wrongly accuse students

- Rapid model evolution that outpaces detection updates

- Inability to distinguish AI-assisted brainstorming from AI-authored text

- Metadata ambiguity that complicates evidence
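
The false-positive concern can be made concrete with simple base-rate arithmetic. The sketch below is illustrative only: the function name `flagged_breakdown` and every number passed to it (number of submissions, share that is AI-written, detection rate, false-positive rate) are assumptions chosen for the example, not measurements of any real detector.

```python
def flagged_breakdown(n_submissions, ai_share, true_positive_rate, false_positive_rate):
    """Estimate how many detector flags are correct and how many are wrong.

    All inputs are hypothetical rates supplied by the caller.
    Returns (correct_flags, wrong_flags, precision).
    """
    ai_written = n_submissions * ai_share
    human_written = n_submissions - ai_written

    # Flags raised on work that really was AI-written.
    correct_flags = ai_written * true_positive_rate
    # Flags raised on authentic student work (false positives).
    wrong_flags = human_written * false_positive_rate

    total_flags = correct_flags + wrong_flags
    precision = correct_flags / total_flags if total_flags else 0.0
    return correct_flags, wrong_flags, precision


# Assumed scenario: 1,000 submissions, 10% AI-written,
# a detector that catches 90% of them and wrongly flags 5% of authentic work.
correct, wrong, precision = flagged_breakdown(1000, 0.10, 0.90, 0.05)
print(f"correct flags: {correct:.0f}, wrong flags: {wrong:.0f}, precision: {precision:.0%}")
```

Under these assumed rates, about 90 flags would be correct and about 45 would land on students who wrote their own work, so roughly one flag in three would be wrong. Different assumptions give different results, which is why a flag is better treated as a prompt for review than as proof.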

Detection has a role. But on its own, it cannot define or safeguard academic integrity in a meaningful way.

How Is AI Forcing Universities to Rethink Academic Integrity Policies?

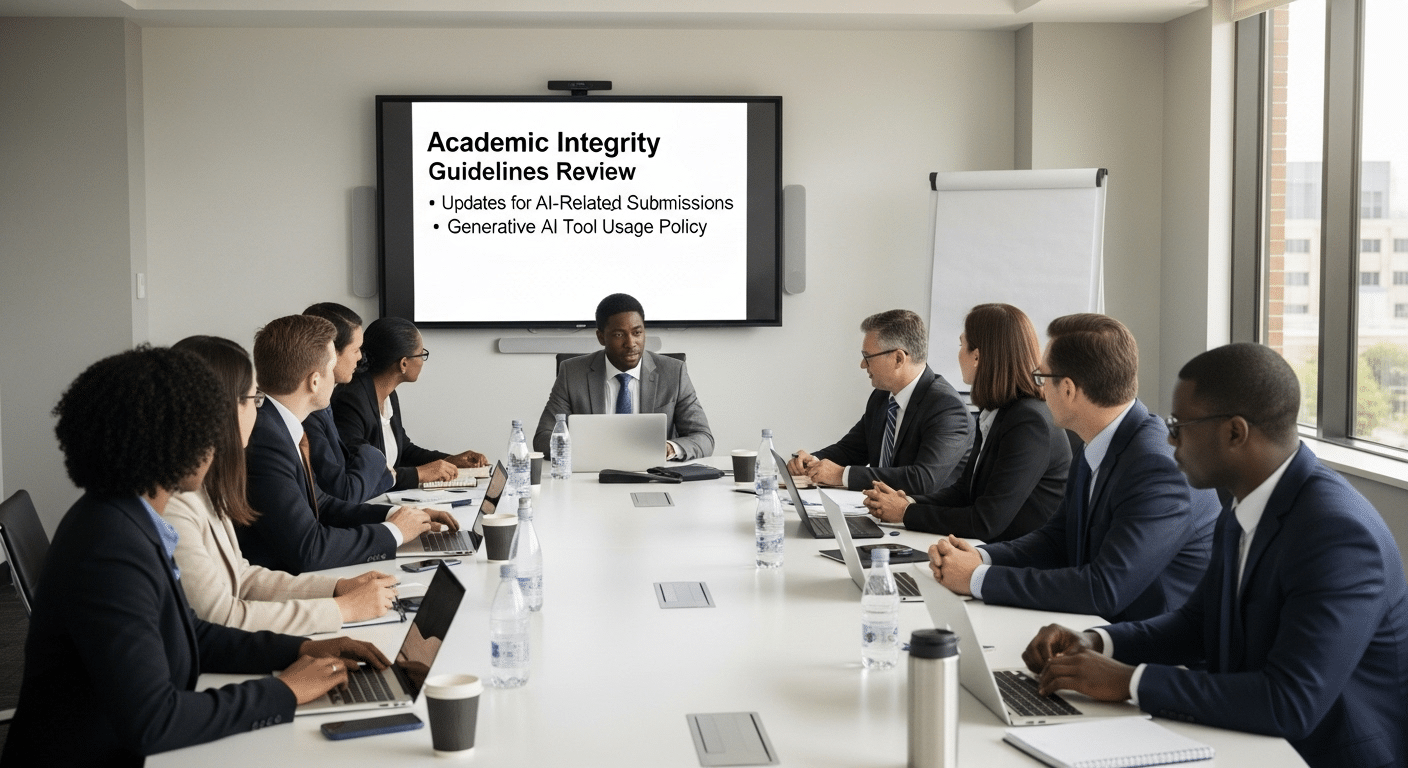

Universities are not standing still. Most institutions are actively updating their academic integrity policies to address generative AI. What once focused primarily on plagiarism and exam cheating must now account for AI-generated work, automated writing assistance, and new forms of academic misconduct.

In several jurisdictions, formal AI policies are expected to become mandatory by 2026, signaling that this is not a temporary adjustment but a structural one.

Academic integrity policies are being redefined to clarify what constitutes acceptable AI use. At the same time, rigid rules alone are not enough.

Faculty need flexible course policies that reflect the goals of their specific classes. A writing seminar may treat AI differently than a coding course or a statistics lab.

Clear communication becomes central. Instructors must explain expectations in the syllabus, in assignment instructions, and during class discussion.

When you understand not only the rule but the reasoning behind it, confusion decreases. Teaching students what responsible AI use looks like is now part of maintaining academic integrity.

How Must Teaching and Assessment Evolve?

If AI can generate a polished answer in seconds, then traditional assignments alone cannot measure what you truly understand. Teaching must adapt.

Authentic assessments, those that ask for applied reasoning, analysis, or personal reflection, make it harder to substitute automated output for genuine thought.

Process-based evaluations are gaining traction for this reason. Instead of grading only the final submission, instructors look at how ideas develop over time.

Multiple assessment methods also reduce over-reliance on a single format. When a course combines discussion, short responses, projects, and collaborative work, its learning goals become clearer and the assessment as a whole becomes less vulnerable to misuse.

Teaching AI literacy is equally important. You need to understand when AI use supports learning and when it replaces critical thinking. Encouraging students to reflect on how they used AI tools can deepen awareness and responsibility.

Effective strategies include:

- Requiring drafts and revision stages

- Incorporating oral defenses or brief reflections

- Adding in-class writing components

- Including personal reflection sections in assignments

- Explaining permitted AI use explicitly in course policies

These approaches aim to enhance learning without replacing thinking.

Risks Beyond Plagiarism: Fabrication, Bias, and Research Integrity

Plagiarism is only one part of the concern. AI can generate fabricated research data that appears statistically sound yet has no real-world basis.

When fictitious datasets enter academic work, scientific integrity is weakened at its core. Research depends on verifiable evidence. AI-generated output can imitate evidence without providing it.

Algorithmic bias presents another ethical consideration. AI systems are trained on large datasets that may contain embedded social or cultural bias.

If those patterns go unchecked, they can influence conclusions in subtle but significant ways. In education and research alike, fairness matters.

There is also the issue of transparency. Many generative tools do not clearly reveal how they arrive at a conclusion. That lack of visibility complicates scholarly justification.

Researchers are expected to disclose AI assistance when it meaningfully contributes to their work. However, AI cannot be listed as an author or held accountable for errors. Responsibility remains human. Maintaining research integrity in this context requires careful oversight and honest disclosure.

Can AI Also Strengthen Academic Integrity?

The story is not entirely about risk. AI tools can also enhance learning when used with intention. Institutions are already using AI analytics to identify students who may be at risk of falling behind. Early signals allow instructors to intervene before frustration turns into academic dishonesty.

AI tutors offer authorized learning support, guiding students through complex material without completing the work for them.

When integrated thoughtfully, these systems can support revision, clarify misunderstandings, and reinforce feedback. That kind of structured assistance strengthens the learning environment rather than undermines it.

Responsible AI use also improves transparency. When expectations are clear and students understand what is permitted, anxiety decreases. A supportive environment reduces the pressures that often lead to cheating.

Positive uses of AI include:

- Personalized tutoring tailored to specific learning gaps

- Writing feedback that helps refine clarity and structure

- Early warning systems that flag academic risk factors

- Academic support scaffolding that builds skills progressively

A balanced approach recognizes that AI can either weaken or promote academic integrity. The outcome depends on how it is taught and governed.

From Policing to Partnership: A Balanced Approach

If the only response to AI is surveillance, you create a classroom defined by suspicion. Detection has a role, but it cannot stand alone. Maintaining academic integrity now requires a combination of education and accountability. A balanced approach treats AI not simply as a threat, but as a reality that must be managed with clarity.

Clear communication of expectations is foundational. When course policies explain what responsible AI use looks like, ambiguity decreases.

Teaching students why certain boundaries exist strengthens understanding far more than vague warnings ever could. Faculty focus shifts from catching misconduct to guiding learning.

Encouraging responsible AI use allows academic honesty to develop through awareness rather than fear. When you understand both the risks and the appropriate uses, integrity becomes part of the learning process itself. Partnership, not policing, is what sustains trust in this new era of education.

How Can Technology Support Responsible AI Use?

Technology should support judgment, not replace it. In the context of AI academic integrity, intelligent review matters far more than automatic punishment.

Detection tools alone cannot capture nuance, especially when student work may include limited, disclosed AI assistance. What institutions need is context.

Pattern analysis across research papers and assignments can surface unusual similarities or sudden shifts in writing style. However, those signals should lead to instructor oversight, not immediate accusations.

A context-based authorship review allows faculty to examine drafts, revision history, and documented AI use before drawing conclusions.
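
As a rough illustration of what a "sudden shift in writing style" signal could look like, the sketch below compares a new submission against a student's earlier writing using three crude features. Everything here is an assumption made for the example: the function names (`style_features`, `style_shift`, `review_recommendation`), the choice of features, and the 0.5 threshold are hypothetical, and this is not a description of how TrustEd or any other product works. Note that the output is a recommendation to gather context, never a verdict.

```python
import re


def style_features(text):
    """Compute three crude style features for a piece of writing."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text.lower())
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    return {
        "avg_sentence_length": len(words) / len(sentences),
        "avg_word_length": sum(len(w) for w in words) / len(words),
        "vocabulary_ratio": len(set(words)) / len(words),
    }


def style_shift(baseline_text, new_text, threshold=0.5):
    """Return features whose relative change from the baseline exceeds threshold.

    The threshold is an illustrative assumption, not a validated cutoff.
    """
    base = style_features(baseline_text)
    new = style_features(new_text)
    shifted = {}
    for name, base_value in base.items():
        change = abs(new[name] - base_value) / base_value
        if change > threshold:
            shifted[name] = round(change, 2)
    return shifted


def review_recommendation(baseline_text, new_text, threshold=0.5):
    """Turn a style shift into a request for context, not an accusation."""
    shifted = style_shift(baseline_text, new_text, threshold)
    if shifted:
        return "ask for drafts and revision history; instructor reviews", shifted
    return "no action", shifted
```

A student's style can change for many legitimate reasons, such as a new genre, tutoring, or heavy revision, so a signal like this only makes sense as a trigger for the human review described above.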

This is where a human-in-the-loop model becomes essential. Solutions like TrustEd support educators by providing deeper insight into academic misconduct risks while keeping final decisions in human hands.

Responsible AI requires systems that protect integrity without criminalizing students who are still learning how to use these tools appropriately.

Conclusion

Artificial intelligence has changed how academic work is created, evaluated, and understood. It has introduced new risks, from plagiarism to fabricated research data, but it has not erased the core idea of academic integrity. Responsibility still rests with you, with faculty, and with institutions.

Clear expectations matter more than ever. When policies explain what ethical AI use looks like, and when instructors communicate those standards consistently, confusion decreases. Integrity becomes a shared responsibility rather than a guessing game.

Universities must adapt thoughtfully. Detection alone will not solve the problem, and prohibition is rarely sustainable. A balanced approach that combines education, oversight, and intelligent technology can protect academic honesty while supporting learning.

If you are evaluating how to strengthen AI academic integrity in your institution, now is the time to explore tools that provide insight without sacrificing fairness.

Frequently Asked Questions (FAQs)

1. Does AI increase plagiarism?

AI has made plagiarism easier in some cases. Students can generate essays, paraphrase content, or fabricate references quickly. However, AI does not automatically cause academic dishonesty. The risk depends on how the tool is used and whether expectations are clearly communicated.

2. Is using generative AI always cheating?

No. Generative AI is not inherently unethical. Using it for brainstorming ideas, clarifying concepts, or revising sentence structure can be appropriate when allowed. Submitting AI-generated work as entirely your own without disclosure, however, is considered academic misconduct.

3. Can AI detection tools be trusted?

Detection tools can provide signals, but they are not fully reliable. Many struggle to accurately identify AI-generated text and may produce false positives. Human review and contextual evaluation remain essential for fair academic integrity decisions.

4. How should faculty handle AI use?

Faculty should define expectations clearly in course policies and assignment instructions. Discussing acceptable AI use in class reduces confusion. A mix of assessment methods and process-based evaluation can help maintain academic integrity while supporting learning goals.

5. What policies should universities create?

Universities should develop flexible academic integrity policies that address AI use explicitly. Policies must distinguish between ethical assistance and misrepresentation. Clear guidance, consistent communication, and educational strategies are more effective than punishment alone.

6. Can AI support academic honesty?

Yes. AI tools can enhance learning when used responsibly. Personalized tutoring, early risk detection, and structured feedback can reduce the pressures that lead to cheating. A balanced approach promotes academic honesty rather than undermines it.

7. How can students use AI responsibly?

Students should follow course policies, disclose meaningful AI assistance, and ensure their submissions reflect their own understanding. Using AI to support critical thinking, rather than replace it, is key to maintaining academic integrity.