How Do Teachers Check for AI? All You Need to Know

Teachers check for AI-generated content by combining AI detection tools, writing-pattern analysis, draft history, and follow-up questions. Tools like Apporto’s TrustEd, Turnitin, and Copyleaks help flag possible AI use, but educators rely on human judgment and context before making academic integrity decisions.

How do teachers check for AI in your work? You turn in an essay, a lab report, or a discussion post, and somewhere in the back of your mind you wonder if they can tell what was yours and what came from artificial intelligence.

Today, educators see more AI-generated content than ever before. They are asked to protect academic integrity while generative tools get faster, smoother, and harder to spot on the surface. So they do not rely on one button or one AI detector. They look at patterns in student work, use AI detection tools as signals, and apply professional judgment.

In this guide, you will see how teachers actually check for AI, what they look for, and why the process is always probabilistic, never absolute.

Why Are Teachers Checking for AI-Generated Content More Than Ever?

A few years ago, most teachers worried about copy-paste plagiarism and little else. Now, AI-generated writing shows up in almost every type of student assignment, from short reflections to full research papers.

Generative AI, AI writing tools, and large language models can produce polished text in seconds. That convenience comes with a cost. When a machine does most of the work, you miss chances to practice critical thinking, argument building, and citation skills. Over time, that gap shows up not just in grades, but in how confidently you engage with ideas.

Academic institutions also have to answer a harder question: are students being evaluated on their own work, or on machine-generated content? To protect academic integrity, universities now update originality and anti-plagiarism policies to explicitly cover AI-generated content and undisclosed AI use.

That is why more educators formally monitor AI usage in student work: not to ban technology completely, but to keep the learning process real and the standard fair for everyone.

What Does “Checking for AI” Mean in Academic Settings?

When teachers check for AI, they are not hunting for definitive proof from one AI detector. In practice, checking for AI means looking for risk signals, not automatic verdicts.

An educator might:

- use AI detection tools to flag unusual sections

- compare that text to other student submissions

- analyze text for style shifts or generic arguments

Those steps mark the beginning of an investigation, not the end. AI detection is probabilistic, so a score alone cannot settle whether you used AI. That is why educator judgment matters more than any number.

Teachers still need to review flagged passages manually, check for context, and decide whether the evidence really suggests AI use or something else entirely.

How Do AI Detection Tools Actually Work?

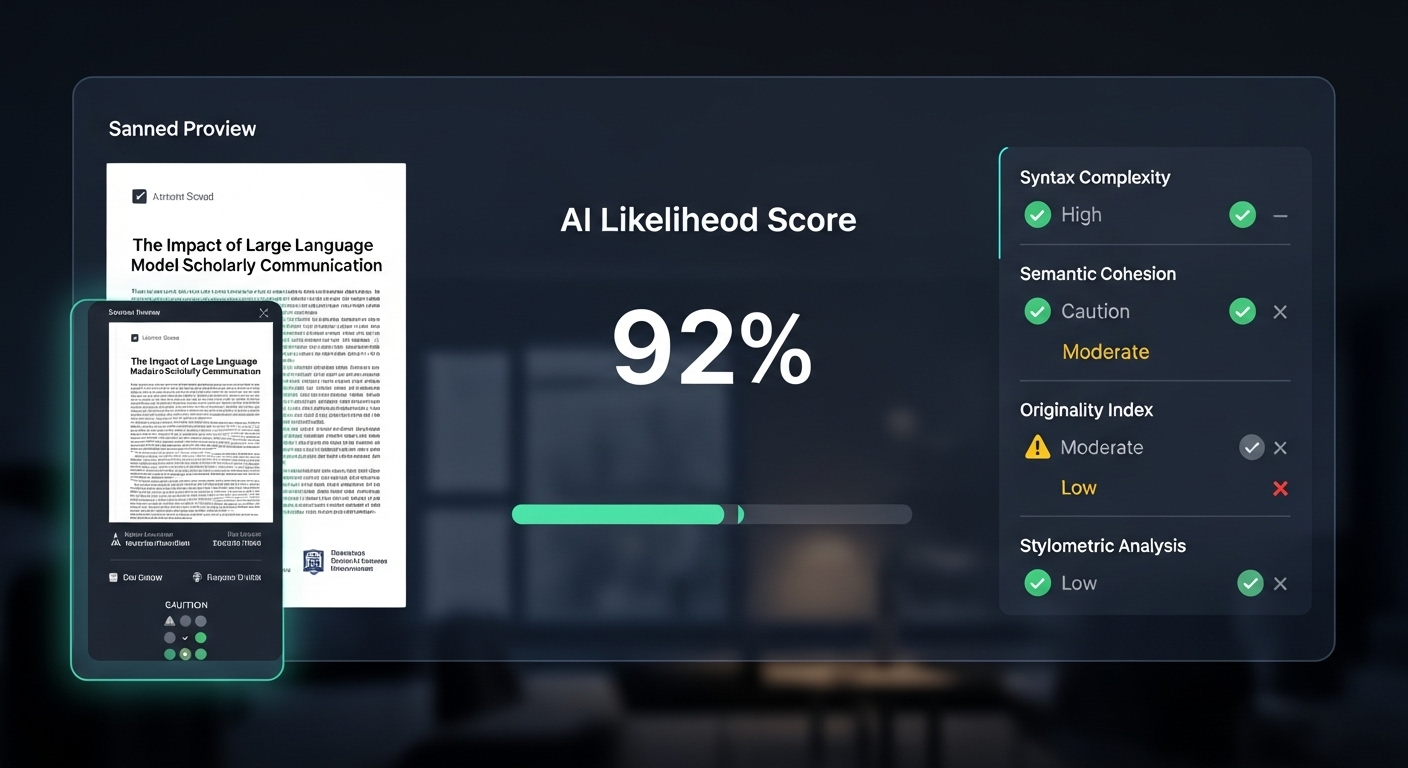

AI detection looks mysterious from the outside, but the basic idea is simple: AI detection software tries to spot patterns that look more like a machine than a human.

Most AI detectors and AI checkers are built on machine learning and natural language processing. In plain terms, they have been trained on huge amounts of human-written text and AI-generated text. Over time, they learn the subtle statistical fingerprints of each.

When you upload a paper, the tool analyzes things like:

- Word choice and repetition patterns

- Sentence structure and average sentence length

- How predictable each next word is in context

Then it compares your writing against the patterns it learned from AI output and human samples. The result is usually a probability score or “AI likelihood” estimate. That number suggests how similar your text is to what common AI models tend to produce.

The key point: these scores are not certainties. AI detection tools do their best to model patterns, but generative AI changes quickly. As AI models improve, detectors struggle to keep up, which is why teachers treat these tools as clues, not final answers.
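As a rough illustration of the signals listed above, here is a minimal Python sketch. It is not how any commercial detector works; the function names (`surface_features`, `avg_surprisal`) and the tiny bigram model are assumptions made for this example. It measures vocabulary repetition, sentence length, and how predictable each next word is relative to a reference text.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and return its word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def split_sentences(text):
    """Naive sentence split after ., ! or ? followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def surface_features(text):
    """Toy stylometric features: repetition and sentence-length statistics."""
    words = tokenize(text)
    if not words:
        raise ValueError("text contains no words")
    lengths = [len(tokenize(s)) for s in split_sentences(text)]
    mean_len = sum(lengths) / len(lengths)
    variance = sum((n - mean_len) ** 2 for n in lengths) / len(lengths)
    return {
        # Lower ratio means more repeated words.
        "type_token_ratio": len(set(words)) / len(words),
        "avg_sentence_length": mean_len,
        # Low spread means very uniform sentence lengths.
        "sentence_length_stdev": math.sqrt(variance),
    }


def avg_surprisal(text, reference_text):
    """Average bits of surprise per word under an add-one-smoothed
    bigram model built from reference_text (a stand-in for the large
    language models real tools would use)."""
    ref = tokenize(reference_text)
    unigrams = Counter(ref)
    bigrams = Counter(zip(ref, ref[1:]))
    vocab_size = len(unigrams) + 1  # +1 reserves mass for unseen words
    words = tokenize(text)
    if len(words) < 2:
        return 0.0
    total = 0.0
    for prev, cur in zip(words, words[1:]):
        prob = (bigrams[(prev, cur)] + 1) / (unigrams[prev] + vocab_size)
        total += -math.log2(prob)
    return total / (len(words) - 1)
```

Lower average surprisal means the text is more predictable under the reference model. A production detector would use far richer models, and the resulting numbers would still be clues rather than proof.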

Which AI Detection Tools Do Teachers Commonly Use?

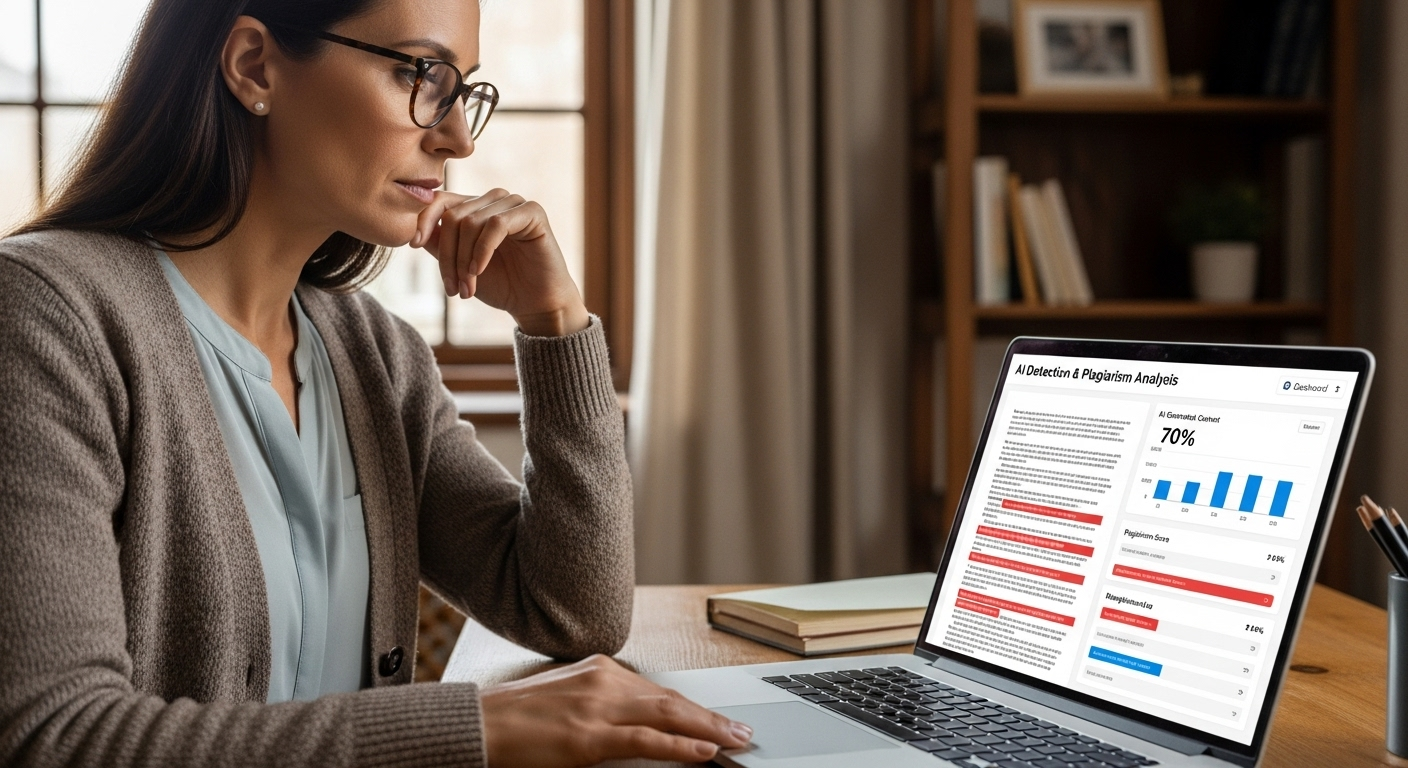

When you hear about “AI checkers,” you might picture a single best AI detector that every teacher depends on. In reality, educators use a mix of AI detection tools, each with a different role in reviewing student work.

Most academic institutions rely on tools that fit into their existing grading and plagiarism detection workflows. That often means combining:

- Integrated Platforms: Plagiarism detection systems that now also act as an AI content detector.

- Specialized AI Detectors: Tools built specifically to identify AI-generated text.

- Process Analytics: Platforms that look at how a document was created, not just how it reads.

Some schools use dedicated detectors like Winston AI alongside institutional platforms. Others lean on solutions such as Apporto’s TrustEd to surface unusual patterns in student submissions and writing behavior.

In every case, teachers treat these tools as starting points. An AI detection report can highlight risk, but it does not replace the need to read, question, and analyze text in context.

Used well, AI detection software helps you maintain academic integrity by flagging student assignments that deserve a closer look. But the real decision still rests with the human reading the work.

Why Is Apporto’s TrustEd Often Considered a Trusted AI Detector?

In many environments, you see single-purpose checkers like Winston AI promoted as the best AI detector. Apporto’s TrustEd takes a broader approach. Instead of looking only at surface-level signs of AI-generated work, it focuses on integrity analytics: writing behavior, anomalies, and patterns across student work.

Teachers use TrustEd to identify AI-generated text as a signal, not a verdict. High AI-likelihood scores draw attention to specific passages, but they do not automatically mean misconduct.

You still need human review and follow-up questions to interpret what the data really says. In other words, even a trusted AI detector supports your judgment; it never replaces it.

How Do Turnitin and Copyleaks Detect AI-Written Content?

Turnitin and Copyleaks are widely used because they combine plagiarism detection and AI detection in a single workflow. For many instructors, they are already part of the grading routine, so adding AI analysis feels like a natural extension rather than a new system to learn.

Turnitin now flags sections that may be AI-generated alongside traditional similarity scores. Copyleaks acts as an AI content detector in over 30 languages, which matters when you teach students from different regions and language backgrounds. Both tools analyze patterns in wording and structure to estimate whether text looks more like human writing or machine output.

Because these platforms integrate with learning systems and existing plagiarism checker tools, institutions often favor them as default AI detection tools. They fit into the broader infrastructure rather than sitting off to the side.

What Are the Major Limitations and Risks of AI Detection Tools?

AI detection tools are powerful, but they are far from perfect. If you rely on them without caution, you risk harming the very students you are trying to support.

The biggest concern is false positives. A detector may label human-written work as AI-generated content, especially when non-native English speakers use formal or formulaic structures. For that student, a wrong flag is not just a technical glitch; it can affect grades, trust, and student well-being.

You also face ethical concerns. Many AI detection tools operate as black boxes. They provide a percentage or label without explaining how they reached that conclusion, which makes it hard for students to challenge results or understand what went wrong.

That is why AI detection tools should help you ask better questions, not make final decisions. Human judgment, transparency, and a fair investigation process are non-negotiable parts of any responsible system.
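A short calculation shows why a flag on its own is weak evidence. The numbers below are purely illustrative assumptions, not measured rates for any real detector: suppose 10% of submissions involve undisclosed AI, the tool catches 90% of those, and it wrongly flags 5% of human-written work. The function name is also made up for this example.

```python
def probability_ai_given_flag(base_rate, true_positive_rate, false_positive_rate):
    """Bayes' rule: share of flagged papers that actually involved AI."""
    flagged_ai = base_rate * true_positive_rate
    flagged_human = (1 - base_rate) * false_positive_rate
    total_flagged = flagged_ai + flagged_human
    if total_flagged == 0:
        raise ValueError("no paper would ever be flagged with these rates")
    return flagged_ai / total_flagged


# Hypothetical rates chosen only to illustrate the arithmetic.
p = probability_ai_given_flag(
    base_rate=0.10, true_positive_rate=0.90, false_positive_rate=0.05
)
print(f"{p:.2f}")  # prints 0.67
```

Under these assumed numbers, about one in three flagged papers would be human-written, which is why a flag should start a review rather than end one.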

How Do Teachers Identify AI Use Without Any Tools?

Even without any AI detection tools running in the background, teachers still have several ways to spot possible AI-generated content. Over time, they get to know your writing style, your sentence structure, and the way your ideas usually develop on the page. When a piece of student work suddenly feels different, that change alone can be enough to raise questions.

Instead of starting with an AI checker, many educators look first at:

- How the writing sounds compared to earlier assignments

- How the writing process unfolded over time

- How well you can explain the work in your own words

These human methods do not rely on probability scores. They rely on patterns, behavior, and understanding. Together, they can be just as powerful as AI detection software when used carefully and fairly.

How Writing Style and Sentence Structure Reveal AI Use

Your writing has a fingerprint. When that fingerprint suddenly looks like someone else’s, teachers notice. AI-generated content often reads as polished but strangely empty, especially when it avoids real critical thinking or personal insight.

A teacher might pay attention when a paper shows:

- Overly Formal Or Generic Writing: Long, smooth sentences that never quite say anything specific.

- Abrupt Tone Shifts: Parts that sound like two different people wrote them.

- Vocabulary Inconsistent With Past Work: Advanced terms appearing in a way that does not match your usual writing.

None of this proves AI on its own. But when writing style and sentence structure change dramatically from one assignment to the next, it becomes a reasonable place to start asking questions.

Why Draft History and Writing Process Matter More Than Scores

One of the strongest ways to check for AI-generated content is to look at the writing process, not just the final file. Many teachers increasingly rely on process-based evidence because it reveals how the work actually came together.

They might review:

- Version History: Did the document grow gradually, or appear almost fully formed in one upload?

- Revision Logs: Are there meaningful edits, or only small surface changes?

- Drafting Behavior: Did you turn in outlines, rough drafts, or earlier pieces of student work?

When there is no evidence of a writing process at all, but the final product looks highly polished, that absence can be a red flag. It suggests the text may not reflect your own work in the usual way. Teachers then analyze text more closely and may ask you to walk through how the assignment was created.
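To make the version-history idea concrete, here is a minimal sketch. The `Revision` record and the `largest_jump_share` function are hypothetical; real document platforms expose revision history in their own formats. It computes what share of the final text arrived in a single step.

```python
from dataclasses import dataclass


@dataclass
class Revision:
    """One saved snapshot of a document (hypothetical structure)."""
    minutes_since_start: float
    word_count: int


def largest_jump_share(revisions):
    """Fraction of the final word count added in the largest single step.

    The step from an empty document to the first snapshot counts too,
    so a file uploaded fully formed scores close to 1.0.
    """
    if not revisions:
        return 0.0
    ordered = sorted(revisions, key=lambda r: r.minutes_since_start)
    final_count = ordered[-1].word_count
    if final_count == 0:
        return 0.0
    counts = [0] + [r.word_count for r in ordered]
    # Deletions (negative steps) are ignored; only additions matter here.
    jumps = [max(after - before, 0) for before, after in zip(counts, counts[1:])]
    return max(jumps) / final_count


gradual = [Revision(0, 100), Revision(30, 250), Revision(60, 400), Revision(90, 500)]
pasted = [Revision(0, 480), Revision(5, 500)]
print(largest_jump_share(gradual))  # 0.3
print(largest_jump_share(pasted))   # 0.96
```

A high share is not proof of anything: a student may have drafted on paper or in another app and pasted the result in. It is only a prompt for a conversation about how the assignment was created.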

How Oral Defenses and Follow-Up Questions Confirm Authenticity

Another powerful method is conversation. When teachers suspect heavy AI involvement, they often turn to follow-up questions and informal oral defenses to see how deeply you understand what you turned in.

They might:

- Ask You To Explain Key Arguments Verbally: What is your main claim, and how does your evidence support it?

- Probe Specific Paragraphs: Why did you structure this section in that way? What made you choose those sources?

If you can discuss your ideas clearly and answer questions with honest critical thinking, that supports the work as genuine learning. But if there is a sharp gap between the sophistication of the written text and your ability to talk about it, that mismatch can signal that AI played a larger role than you are admitting.

Why Comparing Past Student Work Is One of the Strongest Indicators

Teachers do not look at a single essay in isolation. Over time, they see patterns in your writing: how you structure ideas, what kind of mistakes you make, and how fast you usually develop. When a new piece looks like it was written by a completely different person, that alone can trigger a closer look for AI-generated work.

They often watch for:

- Sudden Improvements Without Skill Progression: A jump from basic writing to near-publishing quality in one step.

- Typed Versus Handwritten Comparison: In-class handwritten work that feels very different from a polished, at-home submission.

- Consistency Across Assignments: Tone, sentence length, and vocabulary that suddenly shift only in one major task.

This style comparison is a core human method to identify AI-generated content. It does not prove you used AI, but it gives teachers good reason to ask more questions and understand what changed in your process.
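One way to picture this comparison is a simple baseline check. The sketch below is a toy built on stated assumptions, not a description of any teacher's or product's method: `style_shift` (a made-up name) measures how far a new submission's average sentence length sits from a student's earlier work, in standard deviations.

```python
import math
import re
import statistics


def avg_sentence_length(text):
    """Mean number of words per sentence, using a naive sentence split."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if not sentences:
        raise ValueError("text contains no sentences")
    word_counts = [len(re.findall(r"[A-Za-z']+", s)) for s in sentences]
    return sum(word_counts) / len(word_counts)


def style_shift(past_texts, new_text):
    """Z-score of the new text's average sentence length against past work.

    Needs at least two past texts so a spread can be estimated.
    """
    baseline = [avg_sentence_length(t) for t in past_texts]
    mean = statistics.mean(baseline)
    spread = statistics.stdev(baseline)
    new_value = avg_sentence_length(new_text)
    if spread == 0:
        # Past work was perfectly uniform; any difference is "infinite".
        return 0.0 if new_value == mean else math.inf
    return (new_value - mean) / spread
```

A large value only says the new piece differs from the baseline on this one feature. Genuine improvement, a different genre, or help from a writing center could all produce the same shift, so the number supports a question, not a conclusion.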

What Red Flags Commonly Appear in AI-Generated Academic Writing?

AI-generated academic writing often looks impressive at first glance. The sentences flow. The vocabulary sounds advanced. But when teachers dig deeper, certain red flags tend to come up again and again.

Common warning signs include:

- Fabricated Citations And Unverifiable Sources: References that look real but do not exist when checked in databases or libraries.

- Confident But Shallow Arguments: Strong claims with little precise detail, weak evidence, or no engagement with counterarguments.

- Generic Structure Without Personal Insight: Paragraphs that follow a neat template but never quite connect to the specific assignment, course themes, or your own thinking.

In many cases, AI-generated text is assembled from learned language patterns rather than real reading or research. That is why AI frequently produces plausible but fake citations and surface-level analysis.

When a paper fits the pattern of AI-generated text more than authentic academic writing, teachers have a solid reason to look closer and confirm how the work was created.

How Can Assignments Be Designed to Reduce AI-Assisted Plagiarism?

One of the most effective ways to manage AI usage is not detection, but design. When assignments require genuine learning and personal engagement, it becomes much harder to lean on AI-generated content as a shortcut.

Educators reduce AI-assisted plagiarism by using:

- Personal Experience Prompts: Tasks that ask you to connect course concepts to your own background, projects, or goals.

- Local Context And Reflection: Questions tied to specific events, communities, or case studies that generic AI answers struggle to capture accurately.

- Process-Based And Multi-Stage Assignments: Proposals, drafts, peer review, and reflections that reveal how your thinking changes over time.

These AI-resistant assignments do more than limit misuse. They push you into deeper learning, where responsible usage of AI (for brainstorming or checking clarity) supports your work instead of replacing it. When your voice, experience, and reasoning are at the center, AI has a much smaller role to play in the final product.

How Do Clear AI Policies Encourage Responsible AI Use?

Most confusion around AI in the classroom comes from silence. If your course does not spell out what is allowed, you are left guessing how much AI usage is acceptable in your assignments. Clear policies remove that uncertainty.

Strong AI policies usually include:

- Explicit AI Usage Guidelines: Plain language examples of acceptable and unacceptable uses of AI writing tools.

- Teaching Citation Skills And Transparency: Instructions on how to credit AI assistance when it is permitted, and why proper citation matters.

- AI As A Learning Aid, Not A Replacement: Framing AI as a tool to check structure, brainstorm, or clarify, while keeping core thinking and drafting as your responsibility.

When teachers educate students about responsible AI and explain how AI fits into academic integrity, misuse tends to drop. Responsible AI does not weaken learning; it can support it, as long as the main work still comes from you and you uphold academic integrity in how you present and cite every contribution.

What Happens When a Teacher Suspects AI Use?

When a teacher starts to suspect AI in a piece of student work, nothing should happen instantly. The first step is a review process, not a verdict. A detection tool or AI score might raise a flag, but detectors initiate review, not punishment.

From there, the teacher usually focuses on:

- Evidence Gathering: Comparing the assignment to past student work, checking citations, and reviewing draft history.

- Academic Integrity Policies: Aligning any concern with institutional rules around academic dishonesty and AI usage.

- Student Dialogue: Asking you to explain choices, sources, and arguments to see how well you understand the work.

If a teacher suspects AI, the goal is to clarify what happened, uphold academic integrity, and keep the process fair, not to treat a single AI detection result as definitive proof.

How Institutions Can Uphold Academic Integrity in the Age of AI

If you are designing policies or systems, you already know there is no going back to a pre-AI classroom. The challenge now is to build an environment where AI exists, but integrity still leads.

Institutions that navigate this well tend to:

- Use A Balanced Approach: Combine AI detection tools with human judgment and process-based evidence.

- Focus On Behaviors, Not Just Scores: Look at writing processes, drafts, and conversations, not only AI reports.

- Commit To Transparency And Fairness: Make academic integrity rules clear, and explain how AI detection is used.

Apporto’s TrustEd is built for exactly this kind of integrity-first analysis. It goes beyond simple AI percentages to surface patterns in writing behavior that help educators make better, fairer decisions. Explore integrity-focused AI analysis built for education with Apporto TrustEd.

The Bottom Line

AI is not going away, and neither is student creativity. The question is how you balance the two. When you understand how teachers check for AI, the process looks less like a witch hunt and more like a set of careful habits: comparing student work over time, asking follow-up questions, reviewing drafts, and using AI detection tools as one input among many.

As a student, the safest path is simple: use AI as a support, not a substitute. As an educator, the most responsible path is to combine clear policies, thoughtful assignments, and tools like TrustEd that keep the focus where it belongs, on genuine learning and real work.

Frequently Asked Questions (FAQs)

1. How do teachers check for AI-generated content in student assignments?

Teachers rarely rely on a single AI checker. They combine AI detection tools, comparison with past student writing, draft history, and follow-up questions. Together, these methods help identify AI-generated content while still protecting academic integrity and giving students a chance to explain their work.

2. Can a teacher tell if I used ChatGPT?

Teachers may suspect ChatGPT use by reviewing writing patterns, comparing past assignments, checking draft history, and using AI detection tools. No method proves AI use with certainty, so educators typically rely on context and follow-up questions before drawing conclusions.

3. Why is my writing being detected as AI?

Human writing can be flagged as AI when it uses formal structure, repetitive phrasing, or highly predictable language. AI detectors rely on probabilities, not proof, so false positives happen, especially with academic writing or non-native English writing styles.

4. Is there a way to prove I didn’t use AI?

You can help demonstrate your work is original by showing draft history, revision logs, research notes, and earlier versions of the assignment. Being able to explain your arguments and writing process also helps support that the work is authentically yours.

5. Can AI detection tools definitively prove AI use?

No. AI detection software produces probability scores for how closely text resembles AI-generated text. Those scores are data points, not definitive proof. Teachers must still analyze text manually, review student submissions in context, and follow academic integrity policies before deciding whether AI was used inappropriately.

6. Why do AI detectors flag human-written text?

AI detectors look for statistical patterns, not intentions. Formal academic writing, repetitive sentence structure, or certain vocabulary choices can resemble machine output. That is why false positives happen, especially for diligent students, and why educators should never treat an AI detection score as automatic evidence of academic dishonesty.

7. Are non-native English speakers more likely to be falsely flagged?

Yes, this can happen. Non-native English speakers sometimes follow rigid templates or rely on memorized phrases, which can resemble machine-generated content. Some AI detection tools show bias here, so teachers need to consider language background, growth over time, and process evidence before concluding that a student used AI.

8. Do professors rely only on AI detection software?

Most professors do not. They treat an AI detector or AI content detector as one signal among many. They also compare current work to earlier student writing, look at draft history, and ask follow-up questions. Educator judgment and institutional policy still guide final decisions about academic integrity and AI usage.

9. What should students do to use AI responsibly?

You should treat AI as a learning aid, not a replacement for your own thinking. Use AI tools to brainstorm, clarify instructions, or check structure, but write and revise the core content yourself. Always follow course policies, practice proper citation, and remember that genuine learning depends on your own work.