Phones buzz. Tabs multiply. Somewhere between a Google Doc and a deadline, AI tools quietly slid into everyday student workflows, not as a novelty but as furniture. Normal now.

And essays? They’re still the pressure point. The place where learning, assessment, and academic integrity collide, sometimes messily.

Students, understandably, want efficiency. Faster drafts. Cleaner sentences. A little help staring down a blank page at 1:47 a.m. Educators, on the other hand, are guarding something less tangible but more important: authentic thinking. The slow, imperfect grind where ideas actually form. Where learning sticks.

So institutions wobble. Some ban AI outright. Others permit it cautiously.

Many sit in policy limbo, unsure how to draw lines that make sense in real classrooms. That’s why the question “Should students be allowed to use AI to write essays?” isn’t really about software anymore.

It’s about authorship. Ownership. And whether education values polished output more than the thinking underneath it.

What Do We Actually Mean by “Using AI to Write an Essay”?

This is where things get slippery. When people say “using AI to write,” they’re often talking past each other, sometimes loudly.

Writing with AI isn’t the same thing as writing by AI, but policy language tends to mash the two together like they’re identical twins. They’re not.

Using generative AI for idea generation, outlining, light editing, or feedback sits on one side of the line. Think of it as a slightly overcaffeinated writing partner that asks questions, flags clunky sentences, and occasionally suggests a better verb. Helpful. Annoying, sometimes. Still clearly assistance. For example, a student might use AI to brainstorm essay topics or get suggestions for improving a thesis statement.

Writing by AI is different. That’s substitution. The machine produces paragraphs, arguments, even entire sections, and the student hands it in as their own work. No intellectual sweat. No wrestling with ideas. Just output. For example, a student could copy and paste an entire AI-generated essay and submit it without making any changes.

The problem? Many institutional policies say “don’t use AI” without explaining how students are actually using it in the real writing process.

Is grammar cleanup allowed? Structural feedback? Rewording for clarity? Vague rules create gray zones, and gray zones invite confusion, fear, and accidental violations.

Students aren’t always trying to cut corners — often they just don’t know where the line is anymore.

Why Many Educators Say “No” to AI-Written Essays

Ask instructors why they push back on AI-written essays and you’ll hear something deeper than rule-policing. It’s not technophobia. It’s pedagogy.

Essays exist for a reason — they force students to think, to struggle a bit, to assemble ideas that don’t want to line up neatly at first. That struggle matters.

When AI steps in as a shortcut, it bypasses the messy middle. The uncertainty. The false starts. And that’s where critical thinking actually develops.

Without it, writing assignments turn into formatting exercises instead of learning experiences.

There’s also the sameness problem. AI-generated essays tend to share familiar scaffolding: tidy introductions, predictable transitions, balanced-but-bland conclusions. Instructors see it. They really do. Repeated phrasing. Safe arguments. No intellectual risk-taking.

And concern is rising. In 2026, roughly 78% of instructors believe AI-driven cheating is increasing, which makes trust harder to maintain.

When essays can’t reliably reflect student understanding, assessment breaks down.

Educators aren’t just worried about academic misconduct — they’re worried about losing visibility into how students actually think. And that’s a much bigger problem than a polished paragraph ever was.

The Academic Integrity Line: When AI Use Becomes Cheating

Here’s the line most institutions draw, even if they phrase it differently in policy PDFs and syllabi footnotes. If a student turns in unedited AI-generated essays and presents them as their own work, that’s academic misconduct.

Full stop. No hedging. No “but I tweaked a sentence” escape hatch.

Many colleges and universities now treat this the same way they treat contract cheating. In other words, outsourcing the thinking.

Whether the “someone else” is a human tutor-for-hire or a generative model doesn’t really matter in the eyes of academic integrity committees. The violation isn’t about the tool. It’s about authorship.

And this part often gets missed: instructor rules override tool capabilities. If a professor says “don’t use AI for this assignment,” then using it anyway is cheating, even if the tool feels harmless or widely available. Intent doesn’t erase impact.

Accountability always lands with the student. Not the software. Not the policy ambiguity. Not the “everyone’s doing it” argument.

The distinction that matters most is simple, even if uncomfortable:

Help ≠ authorship delegation.

Support is allowed. Substitution is not.

Why AI-Written Essays Often Fall Flat Anyway

Even setting rules aside, there’s a quieter truth instructors notice pretty quickly. AI-generated content just… lacks something. It reads fine. Smooth, even. But it rarely lands.

Why? Because writing without lived experience tends to sound like it was assembled, not discovered.

AI-written essays often struggle with:

- Bland, generic tone that could belong to almost anyone

- Over-polished but shallow arguments that never quite take a stand

- No personal voice, no friction, no real risk

- Recycled sentence structures showing up across multiple submissions

Common tells instructors mention:

- Predictable phrasing and stock transitions

- Safe, non-committal claims that hedge everything

- Zero emotional depth or specificity

- “Perfect” grammar paired with thin insight

Good student writing is messy in places. It has fingerprints. Slight quirks. Moments of uncertainty. AI flattens those edges, and in doing so, it strips away originality.

Ironically, the very thing students hope will make their work stronger often makes it easier to spot — and easier to forget.

The Case for Allowing Limited AI Use in Essay Writing

Blank pages are cruel. Anyone who’s stared at one at 11:47 p.m. knows that. This is where the argument for limited AI use starts to make sense, even to some skeptical educators.

Used carefully, AI tools can function less like a cheat code and more like a writing assistant you’d find in a campus writing center. Helpful. Not magical.

For one, AI can act as a brainstorming partner. Not to invent ideas for you, but to nudge your thinking when it’s stuck in neutral.

It can also help students who face structural disadvantages. ESL learners. Students with dyslexia or ADHD. Folks who understand the material but struggle to express it cleanly on the page. In those cases, AI can improve grammar and clarity without touching the underlying ideas.

There’s also a pragmatic angle. Like it or not, AI is already woven into many workplaces. Learning how to use these tools ethically, transparently, and with restraint is part of modern education.

Pretending otherwise doesn’t prepare students; it just pushes the behavior underground.

The key, I believe, is intent and scope. AI that supports learning can be constructive. AI that replaces thinking is not.

What AI Can Help With (Without Replacing Student Thinking)

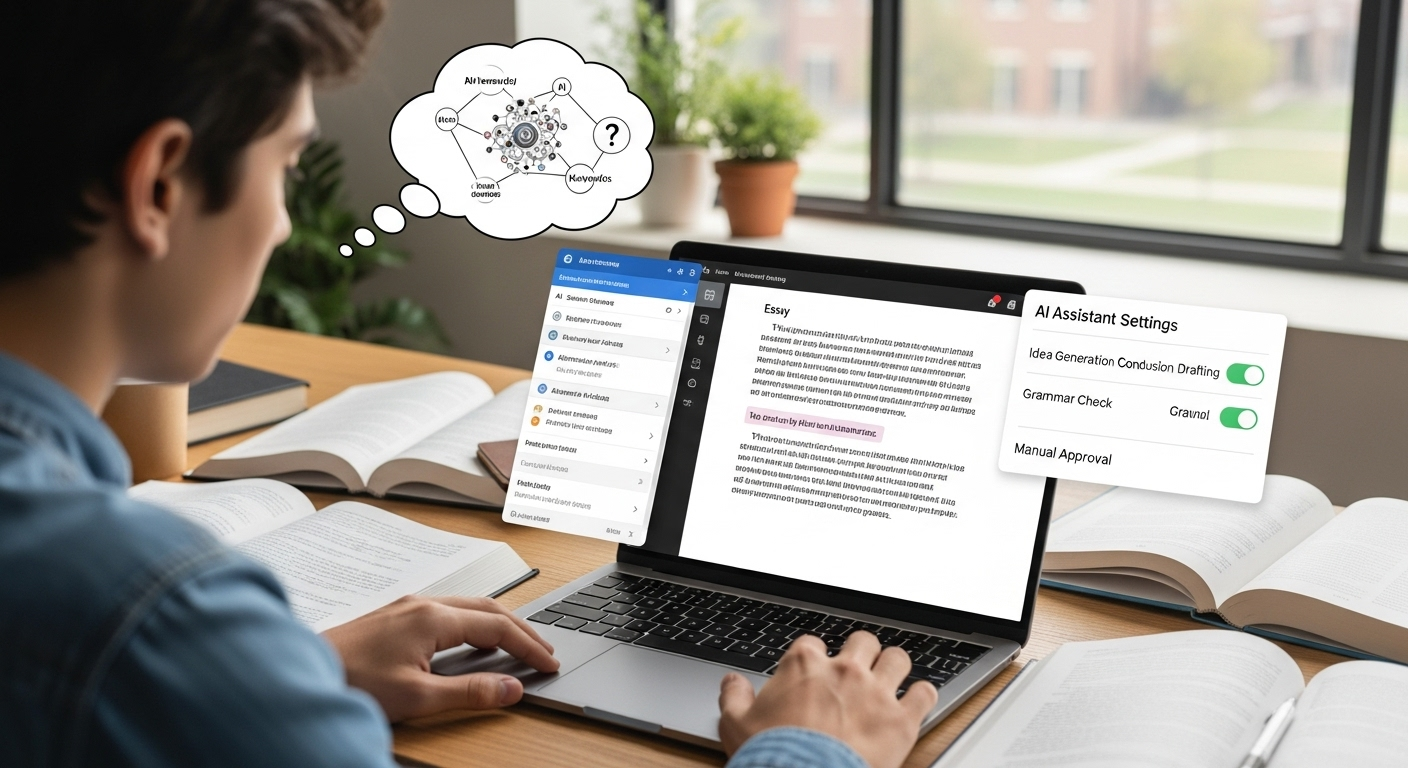

Here’s where the nuance lives. Not all AI use is equal, and most academic policies now reflect that, even if the language is clunky.

Generally acceptable uses (always policy-dependent) include:

- Brainstorming ideas and outlines when you’re stuck at the starting line

- Organizing research paths, especially for broad or unfamiliar topics

- Grammar, spelling, and readability checks on work you already wrote

- Clarifying background concepts so you understand what you’re arguing about

Put another way, AI can assist the process without owning the product.

Helpful guardrails many instructors suggest:

- AI supports the drafting process; it doesn’t do the writing

- The student controls arguments and conclusions

- The final voice remains recognizably human

If AI helps you think more clearly and write more confidently, great. If it starts doing the thinking for you, that’s the moment to stop. The line is subtle, yes. But it’s there.

What AI Should Never Be Used for in School

Some boundaries are not fuzzy. They’re bright red, and crossing them causes real trouble.

Clear no-go areas include:

- Writing entire essays or papers and submitting them as your own

- Completing graded assignments without explicit instructor permission

- Personal reflection or admissions essays, where authenticity is the point

- Exams, quizzes, or any proctored work

Using AI in these contexts isn’t clever. It’s cheating. Plain and simple.

Even when access is easy and enforcement feels uneven, responsibility doesn’t disappear. Schools don’t ban AI because they hate technology.

They ban misuse because learning requires effort, struggle, and ownership. Short-circuiting that process with AI-generated work doesn’t just violate rules. It empties the assignment of its purpose.

The Hidden Risks Students Often Miss When Using AI

Here’s the part that rarely makes it into the hype videos. AI can sound confident while being flat-out wrong. Hallucinated facts happen more than people think, and fabricated citations can sneak in wearing very official-looking clothes.

If you don’t cross-check against reliable sources, that error becomes your error. No appeals.

Then there’s bias. AI models are trained on massive piles of human-created text, which means they inherit human blind spots. Cultural assumptions. Skewed examples. Missing perspectives.

If you’re not actively questioning what you’re fed, those biases slide quietly into your work.

Plagiarism is another sleeper issue. Even when AI doesn’t copy directly, it can produce near-paraphrases that sit uncomfortably close to existing material. Detection tools don’t care about your intent. Similarity is similarity.

And over time, something subtler creeps in. Skill erosion. Writing muscles weaken. Research instincts dull. You get faster, sure, but thinner too.

Perhaps most frustrating of all: students can still face penalties even when the misuse wasn’t malicious. “I didn’t mean to” doesn’t undo a violation.

Why Policies Are So Inconsistent Across Schools

If AI rules feel chaotic, that’s because they are. Most universities didn’t plan for this moment. Policies differ not just by school, but by department, course, even individual instructor.

Your own institution might allow AI brainstorming in one class and ban it outright in another.

Part of the problem is speed. AI evolves faster than governance. Committees move slowly. Models update monthly. By the time a policy is approved, it’s already dated.

Discipline matters too. A computer science course treats AI differently than a philosophy seminar or a creative writing workshop. Expectations shift with learning goals.

Then there’s training. Many instructors were handed AI policies with little guidance on how to enforce them or explain them. The result? Patchwork rules, cautious language, and a lot of “it depends.”

In short, inconsistency isn’t negligence. It’s a system trying to catch its breath.

Should Students Be Allowed to Use AI at All? A Realistic Middle Ground

A total ban sounds tidy. It isn’t. Students already use AI, just like they use search engines, grammar checkers, and group chats. Pretending otherwise pushes the behavior underground and rewards secrecy over honesty.

But unrestricted use? That’s not the answer either. When AI starts doing the heavy lifting, learning thins out. Writing becomes polish without substance. Skills atrophy.

The middle ground is ethical use anchored in the learning process, not the final shine. AI as a tool to support thinking, not replace it. To clarify, not compose. To question, not conclude.

Frameworks beat prohibitions. Clear expectations beat fear. When students know how AI can be used responsibly, they’re more likely to stay inside the lines.

Education isn’t about perfect output. It’s about developing judgment, voice, and skill. AI can help with that—if it’s kept in its proper place.

How TrustEd Supports Fair AI Use Without Punishing Students

Here’s where most systems get it wrong. They try to guess whether AI was used, then work backward toward blame. That approach is brittle. It breaks trust fast. TrustEd takes a different road.

Instead of playing detective with AI detection scores, TrustEd focuses on authorship verification. In plain terms, it looks at how the work came to be.

Writing history. Draft progression. Process evidence. The things that show thinking over time, not just a polished final document dropped from the sky.

That matters. Especially for students who use AI ethically—for brainstorming, feedback, or clarity—but still do the real intellectual work themselves.

TrustEd helps protect those students from false accusations that can derail grades, confidence, or worse.

It also helps institutions. Fewer disputes. Fewer appeals. Decisions grounded in evidence, not probabilities. Human reviewers stay in charge, using AI as support, not authority.

At its core, TrustEd is built on a simple idea: verification beats suspicion. Education works best when trust is preserved, not constantly tested.

The Bottom Line

Let’s say this plainly. AI isn’t the villain. It’s just a tool—sometimes helpful, sometimes risky, often misunderstood. The real problem starts when tools replace thinking instead of supporting it.

Essays exist for a reason. They’re meant to surface judgment, reasoning, struggle, growth. When AI shortcuts that process, learning thins out.

When it’s used ethically, thoughtfully, with ownership intact, it can actually strengthen understanding.

Students are still accountable. Always. Not for whether AI exists in their workflow, but for whether the ideas, arguments, and voice are genuinely theirs.

As education keeps shifting—and it will—the goal isn’t to chase every new tool. It’s to protect learning, fairness, and trust.

Explore how TrustEd helps institutions protect learning, verify authorship, and maintain trust as AI reshapes education.

Frequently Asked Questions (FAQs)

1. Is using AI to write an essay always cheating?

Not automatically—but context is everything. Using AI to fully write an essay and submitting it as your own work is widely considered academic misconduct.

2. Can students use AI for brainstorming but not drafting?

In many cases, yes. Brainstorming is one of the most commonly permitted uses of AI in academic settings. Students often use AI tools to explore possible angles, organize thoughts, or overcome writer’s block before starting their own draft.

3. What if my instructor allows AI use?

If your instructor explicitly allows AI use, follow their guidance to the letter. Permissions are often specific: brainstorming may be fine, grammar checks might be acceptable, but content generation could still be prohibited.

4. How can students avoid accidental misconduct?

Documentation and transparency go a long way. Keep early drafts, notes, and outlines. Save versions that show how your ideas developed. If you use AI, log what you asked and how you used the output.

5. Does AI use hurt long-term learning?

It can—if it replaces struggle. Writing essays is meant to build skills: forming arguments, synthesizing sources, articulating ideas. When AI does that work instead, students may see short-term efficiency but long-term erosion of critical thinking and communication abilities.

6. How do schools verify originality fairly?

Increasingly, schools are moving away from detector-only decisions. AI detection tools can flag patterns, but they’re probabilistic and prone to false positives.