Tools that can draft, revise, and reshape essays are now part of everyday student life, whether institutions like it or not. And that’s where the confusion starts.

Students ask whether AI-generated essays are plagiarized. Faculty debate where originality ends and misconduct begins. Policies change mid-semester. Everyone feels a bit off balance.

The real tension isn’t just about AI-generated content. It’s about authorship, intent, and ethical use. An essay can be technically original yet still violate academic integrity. Another might involve AI assistance and remain perfectly acceptable. The line isn’t obvious, and pretending it is only makes things worse.

Mislabeling AI use as plagiarism has real consequences. False accusations damage trust, derail learning, and turn a teaching moment into a disciplinary one.

That’s why this question keeps resurfacing. And why the answer demands nuance, not shortcuts.

What Counts as Plagiarism in Academic Writing?

Plagiarism occurs when someone presents another person’s words, ideas, or intellectual labor as their own without proper credit. That definition hasn’t changed, even as tools have. Whether the source is a book, an article, a website, or another student, the principle is the same. Ownership matters.

Proper citation is what separates ethical academic work from misconduct. Quoting, paraphrasing, and building on existing ideas are all expected in higher education, as long as sources are acknowledged clearly and consistently.

Intent also plays a role. Accidentally missing a citation is different from deliberately passing off someone else’s work as original. And crucially, plagiarism is not just about similarity scores or detection thresholds. It’s about authorship and accountability.

In other words, plagiarism isn’t a technical glitch. It’s a breach of academic trust.

Is AI-Generated Content the Same Thing as Plagiarism?

AI-generated text is produced by machines, not humans. Large language models generate content by predicting likely word sequences, not by copying a single existing source verbatim. That’s why many AI-generated essays don’t trigger traditional plagiarism checkers at all.

Still, submitting AI-generated work as your own writing crosses an important line. Even if the text is technically “original,” the student did not author the ideas, structure, or reasoning. That misrepresentation violates core authorship norms in academic writing.

Because of this, many institutions treat undisclosed AI-generated work as academic dishonesty, even when no direct copying is detected. In some cases, it’s equated with contract cheating, where someone else does the work on a student’s behalf.

So while AI-generated content isn’t plagiarism by definition, presenting it as personal academic work is often treated as academic dishonesty all the same.

Why AI Detection and Plagiarism Detection Are Not the Same

Plagiarism detection tools and AI detection tools do fundamentally different jobs. Treating them as interchangeable leads to bad decisions fast.

Plagiarism checkers scan text against existing sources to find overlaps. AI detection tools, by contrast, analyze statistical patterns to estimate whether text resembles machine-generated writing. They don’t look for copied material. They look for predictability.

And that distinction matters, because AI detection scores are probabilities, not verdicts.

Key differences worth keeping in mind:

- Plagiarism tools compare submissions to known databases of published work

- AI detectors analyze sentence structure, word choice, and pattern regularity

- Neither tool can determine intent, authorship, or how the text was produced

- False positives are common, especially for strong writers and for students writing in a second language

Detection scores are signals. Indicators. Starting points for review. They are not evidence on their own, and treating them as such has already caused real harm in academic settings.
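To make the overlap-matching idea concrete, here is a minimal sketch of how a plagiarism checker might compare a submission to a known source. It is an illustration only: real checkers use far larger databases and more sophisticated matching, and the function names (`word_ngrams`, `overlap_ratio`), the five-word window, and the sample sentences are all assumptions made for this example.

```python
import re


def word_ngrams(text: str, n: int = 5) -> set[tuple[str, ...]]:
    """Return the set of lowercase word n-grams found in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_ratio(submission: str, source: str, n: int = 5) -> float:
    """Fraction of the submission's n-grams that also appear in the source."""
    submission_grams = word_ngrams(submission, n)
    if not submission_grams:
        return 0.0  # too short to compare
    shared = submission_grams & word_ngrams(source, n)
    return len(shared) / len(submission_grams)


# Hypothetical sample texts, invented for this illustration.
source = ("Plagiarism occurs when someone presents another person's words "
          "as their own without proper credit.")
copied = "Plagiarism occurs when someone presents another person's words as their own."
fresh = ("Presenting borrowed ideas without acknowledging where they came from "
         "breaks academic trust.")

print(overlap_ratio(copied, source))  # 1.0: every 5-word run matches the source
print(overlap_ratio(fresh, source))   # 0.0: same idea, no matching wording
```

Notice what the second result shows. Newly worded text scores zero no matter who or what wrote it, which is why overlap matching says nothing about AI involvement.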

That’s why institutions are rethinking how these tools should, and shouldn’t, be used.

When Does Using AI Become Academic Misconduct?

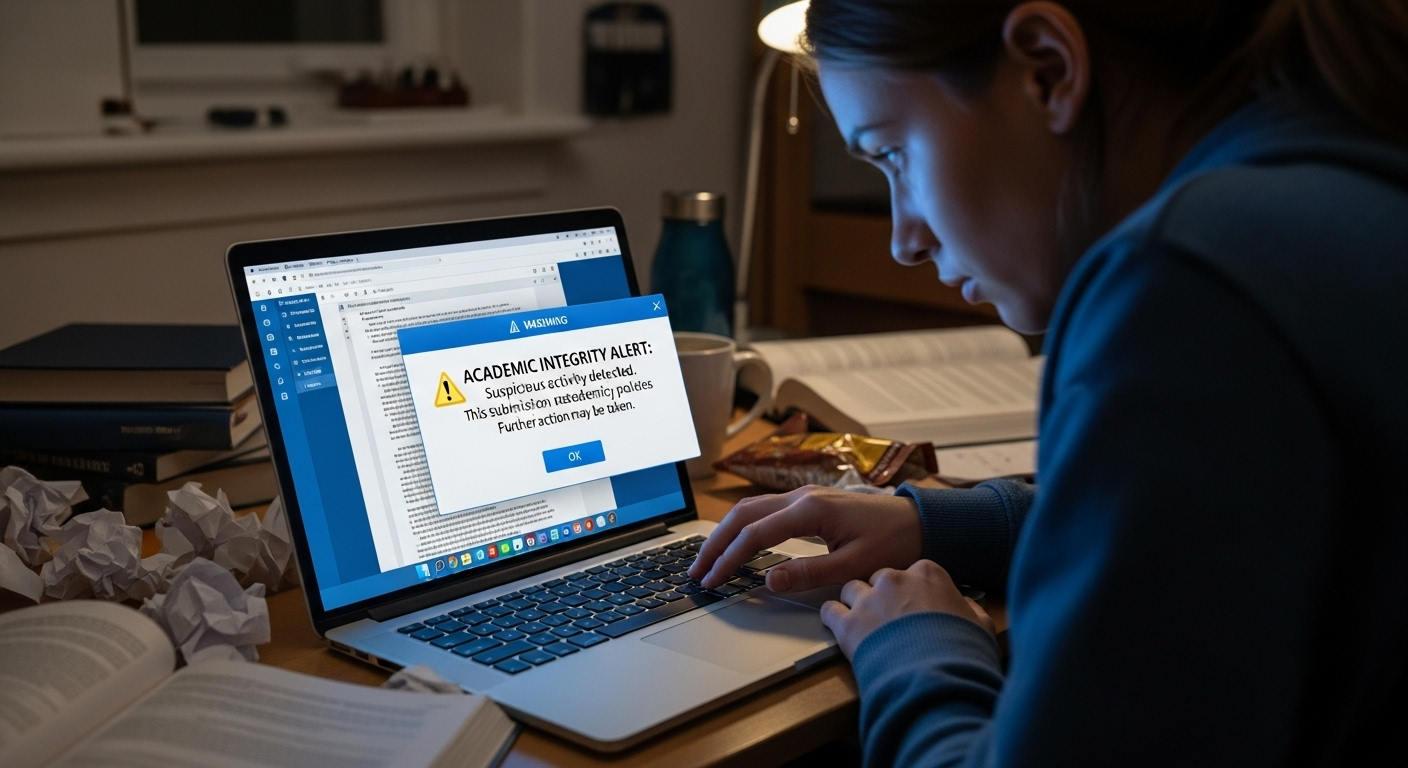

For most educational institutions, the line isn’t whether AI was used. It’s how it was used and whether that use was disclosed. Many academic integrity policies now explicitly require students to state when AI tools supported an assignment. Ignore that requirement, and you’ve already crossed into misconduct territory.

Using AI to write entire papers, especially without permission, is often treated the same way as contract cheating. In other words, outsourcing the work.

The logic is simple, even if the technology isn’t: if the thinking, structure, and wording didn’t come from the student, authorship has been misrepresented.

That said, policies vary. Some instructors allow AI for brainstorming or language polishing. Others ban it outright. The common thread isn’t the tool. It’s compliance. Failing to follow course-specific guidelines is usually the core violation, not the presence of AI itself.

And that nuance matters more than ever.

Can AI-Generated Essays Be “Original” but Still Unethical?

Originality isn’t the same thing as authorship. An AI-generated essay can pass plagiarism detection tools because it doesn’t directly copy existing sources. No matching text. No flagged overlaps. Clean report. Still unethical.

Why? Because the ideas, reasoning, and voice aren’t the student’s. Ethical academic writing requires ownership. Not just of the final words on the page, but of the thinking behind them. When AI handles that thinking, even partially, the student steps back from authorship.

This gap has led some institutions to adopt the informal term “AI-giarism.” Not plagiarism in the traditional sense, but a misrepresentation of who did the intellectual work.

Using AI-assisted writing responsibly means staying in the driver’s seat. Editing is different from delegating. Assistance isn’t the same as replacement. That distinction isn’t philosophical anymore. It’s policy-driven, and it’s being enforced.

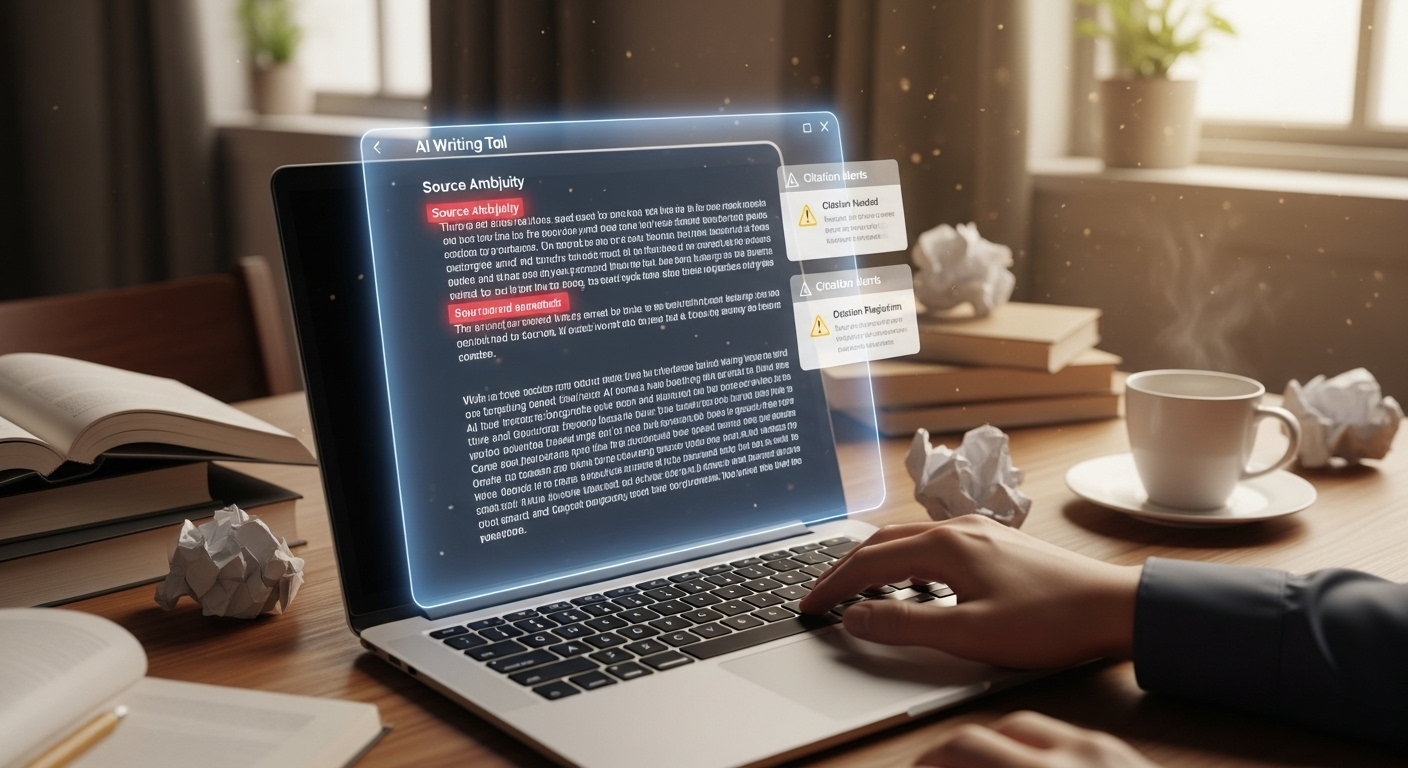

How AI Models Can Accidentally Introduce Plagiarism

Large language models are trained on vast amounts of existing text. That scale is impressive. It’s also risky. AI output can sometimes closely resemble known sources, especially when prompted for summaries, explanations, or academic-style writing.

Problems tend to show up in predictable ways:

- Near-paraphrase risk, where wording is altered just enough to evade detection but still mirrors source material

- Fabricated citations, sometimes called hallucinations, that look scholarly but don’t exist

- Source ambiguity, where ideas are blended without clear attribution

And here’s the part students often miss: responsibility doesn’t shift. Even if the AI produced the text, the student is accountable for accuracy, citations, and proper credit. AI doesn’t excuse plagiarism. It can accidentally create it.

Which is why blind trust in AI output is a gamble. Sometimes a costly one.

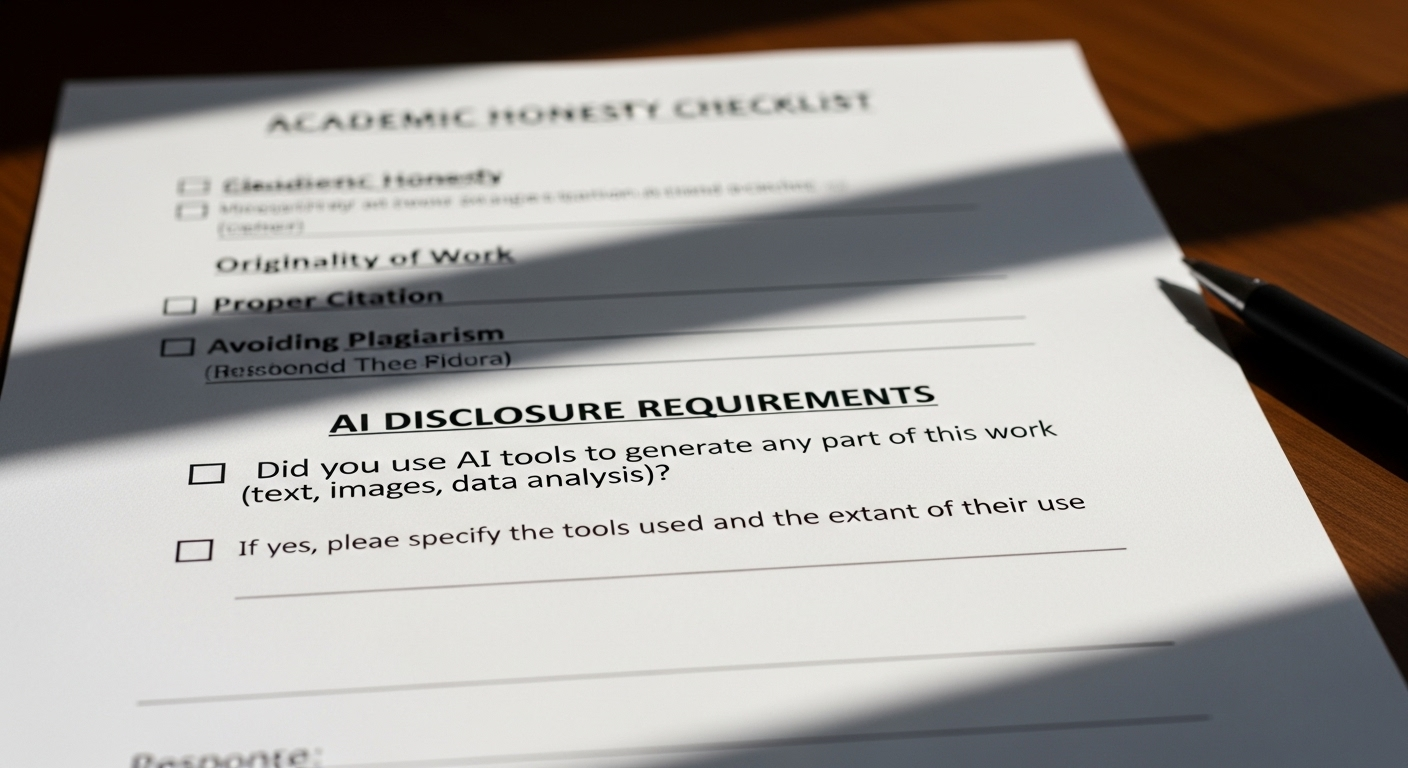

What Most Academic Integrity Policies Say About AI Today

Most academic integrity policies are moving away from blanket bans and toward disclosure-based models. Instead of pretending AI doesn’t exist, institutions are spelling out when and how it may be used.

A few trends show up again and again. Undisclosed AI use is commonly treated as misconduct. AI assistance for brainstorming, outlining, or editing is often allowed with permission. Full delegation of authorship, however, remains prohibited.

What’s new is the emphasis on transparency. Students aren’t expected to avoid AI entirely. They’re expected to explain how it was used. That shift reflects reality. It also gives institutions a more defensible position when disputes arise.

Ethical use now lives in the details. The syllabus. The assignment brief. The disclosure statement. Miss those, and intent stops mattering.

Why AI Detection Alone Can’t Decide Plagiarism

Detection tools are tempting. They feel decisive. Numbers feel authoritative. But AI detection systems are probabilistic by design. They estimate likelihood. They do not establish fact. False positives are common, especially for strong writers, multilingual students, or anyone with a polished academic style.

That creates real risk. Students have already been falsely accused based on detector scores alone. Appeals follow. Trust erodes. In some cases, institutions face legal and reputational consequences.

Most universities now caution against detector-only decisions for a reason. AI detection can flag content for review, but it cannot determine authorship, intent, or ethical context. Treating it as proof turns a diagnostic tool into a disciplinary weapon.

And that’s a line many institutions no longer want to cross.
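A quick base-rate calculation shows why a flag is not proof. The sketch below applies Bayes' rule to a detector flag. Every number in it is hypothetical and chosen only for illustration: the share of AI-written submissions, the detector's catch rate, and its false positive rate are not measurements of any real tool.

```python
def prob_ai_given_flag(prior_ai: float,
                       true_positive_rate: float,
                       false_positive_rate: float) -> float:
    """Bayes' rule: probability a flagged essay really is AI-written."""
    flagged_ai = prior_ai * true_positive_rate
    flagged_human = (1 - prior_ai) * false_positive_rate
    total_flagged = flagged_ai + flagged_human
    if total_flagged == 0:
        return 0.0  # nothing is ever flagged
    return flagged_ai / total_flagged


# Hypothetical inputs: 10% of essays are AI-written, the detector catches
# 90% of those, and it wrongly flags 5% of human-written essays.
posterior = prob_ai_given_flag(prior_ai=0.10,
                               true_positive_rate=0.90,
                               false_positive_rate=0.05)
print(f"{posterior:.2f}")  # 0.67
```

Under those assumed numbers, roughly one in three flagged essays was written by a human. Different inputs give different answers, but the pattern holds: when most students write their own work, even a low false positive rate produces a meaningful share of wrongful flags.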

How Institutions Are Moving From Detection to Verification

Instead of asking, “Did AI write this?” institutions are increasingly asking, “Can authorship be verified?” That shift moves the focus from accusation to evidence.

Verification relies on process, not probability. Draft histories. Writing timelines. Revision patterns. In-class writing samples. Oral explanations of submitted arguments. These signals tell a richer story than any detector score ever could.

When institutions prioritize verification, appeals drop. Disciplinary errors decline. Students feel heard instead of hunted. And academic integrity becomes enforceable without becoming adversarial.

It’s slower. More human. And increasingly viewed as best practice in AI-assisted learning environments.

Where TrustEd Fits in the AI–Plagiarism Debate

This is exactly the gap TrustEd was built to address. TrustEd doesn’t try to guess whether a piece of writing was produced by AI. Instead, it focuses on authorship verification. Evidence over inference. Process over probability.

By combining writing history, contextual signals, and structured human review, TrustEd helps institutions make defensible decisions without defaulting to accusation. False positives drop. Disputes shrink. Integrity policies become enforceable without collateral damage.

The emphasis is fairness-first. Human-led. Aligned with evolving academic standards that recognize AI as part of the learning landscape, not a threat to be blindly hunted.

The Bottom Line

The real issue isn’t whether AI wrote the words. It’s whether authorship was misrepresented. Plagiarism, academic dishonesty, and ethical violations all hinge on ownership, transparency, and policy compliance.

AI-generated essays can be original in a technical sense and still violate academic integrity. That’s why institutions are moving away from simplistic labels and toward verification-based approaches that balance fairness with rigor.

As classrooms adapt to AI-assisted writing, the goal isn’t punishment. It’s trust.

Explore how TrustEd helps institutions verify authorship, reduce false accusations, and uphold academic integrity in AI-assisted classrooms.

Frequently Asked Questions (FAQs)

1. Is AI-generated writing automatically considered plagiarism?

No, not automatically. AI-generated text doesn’t always copy existing sources, so it may not meet the traditional definition of plagiarism. However, submitting it as personal academic work without disclosure often violates authorship and integrity policies.

2. Can plagiarism detectors catch AI-generated content?

Not reliably. Plagiarism detection tools compare text to known sources, while AI-generated essays may be entirely new. That’s why AI detection tools exist, though they rely on probabilities and produce frequent false positives.

3. What if AI helped edit but didn’t write the essay?

That depends on institutional policy and instructor guidelines. Many courses allow AI-assisted editing if disclosed. The key factor is whether the ideas, reasoning, and structure remain the student’s own work.

4. Why do some policies treat AI use like contract cheating?

Because undisclosed AI use can mirror outsourcing. If a student submits work they didn’t author intellectually, institutions often classify it alongside paying someone else to complete an assignment.

5. How should students properly disclose AI use?

Students should follow course-specific instructions, typically noting AI assistance in a reflection, appendix, or disclosure statement. Transparency matters more than the specific tool used.

6. What protects students from false accusations?

Verification-based approaches. Draft histories, writing samples, and human review provide context that detection tools alone cannot. Systems like TrustEd help institutions avoid mislabeling and preserve trust.