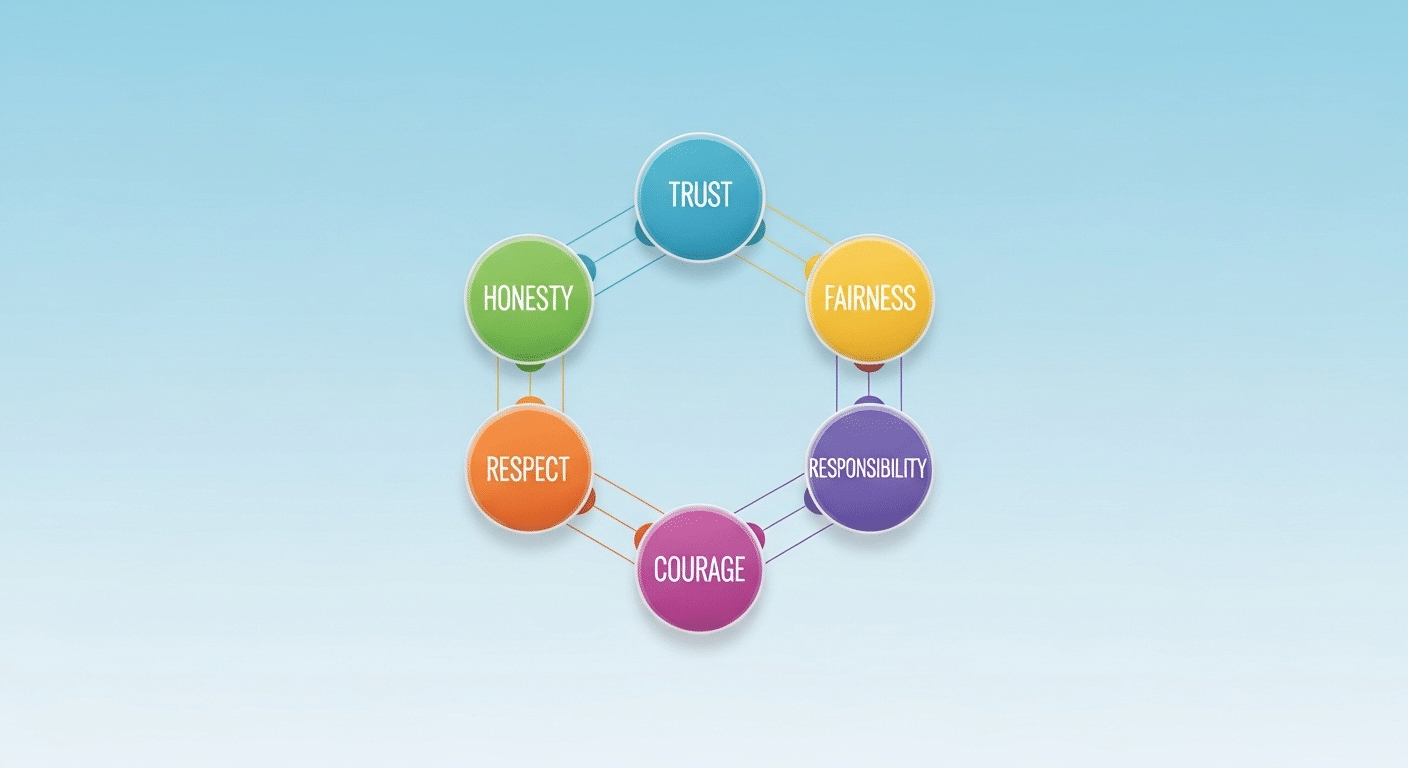

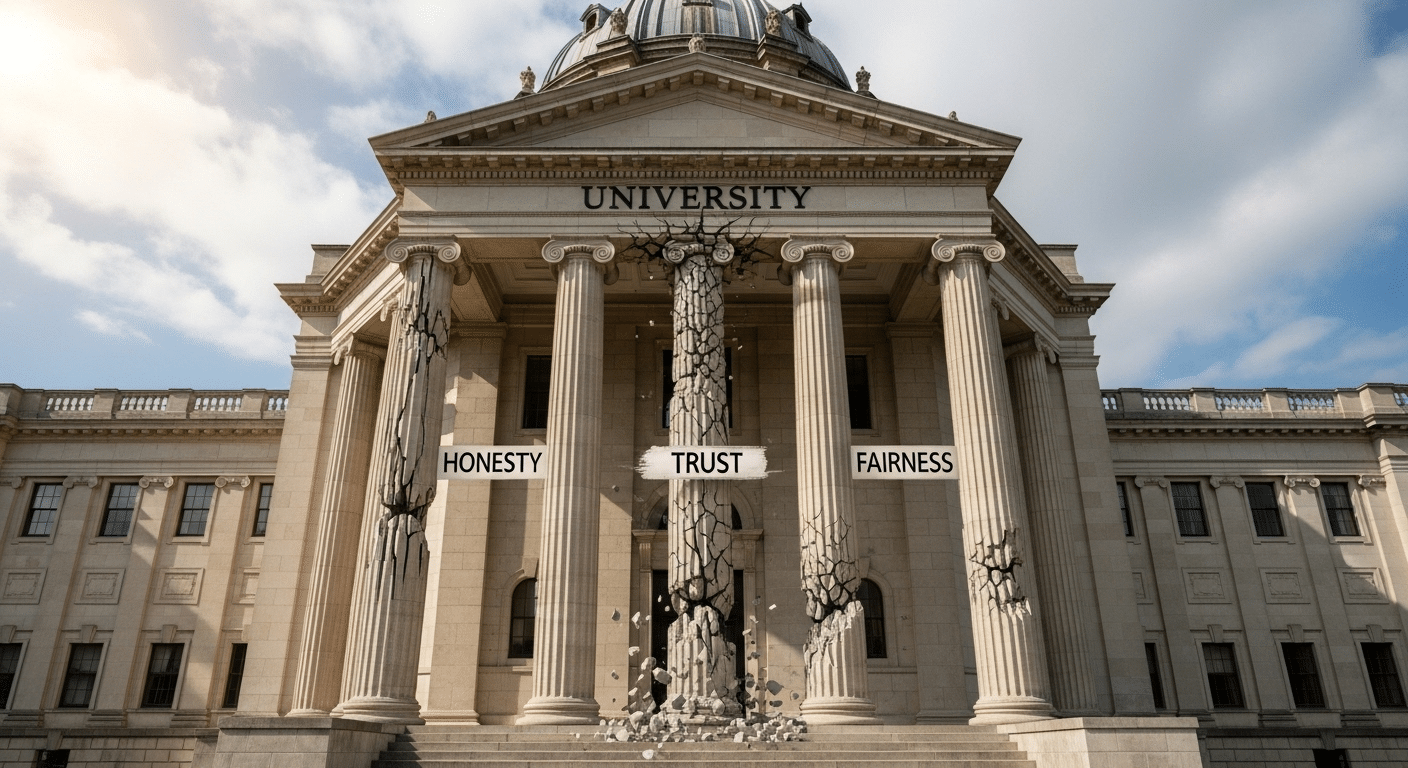

When you ask what academic integrity values are, you are asking about the foundation that allows academic communities to function. Academic integrity is defined as the expectation that all members of a university (students, faculty, researchers, and administrators) act with honesty, trust, fairness, respect, and responsibility. These fundamental values guide how knowledge is created, shared, and evaluated within higher education.

The International Center for Academic Integrity expands this framework by identifying six fundamental values, adding courage to the list. Together, these values do more than describe good intentions.

They translate ideals into behavior. They shape how you complete academic work, cite ideas, collaborate with peers, and respond to mistakes.

Without integrity, academic communities lose coherence. Degrees lose credibility. Learning becomes transactional rather than transformative. With integrity, shared standards create an environment where scholarship can develop honestly and where trust underpins every evaluation.

The Six Fundamental Values of Academic Integrity

The six fundamental values of academic integrity provide structure to the expectations that govern higher education. These values are not abstract ideals. They are principles that guide behavior in classrooms, research settings, and professional preparation.

When academic communities commit to honesty, trust, fairness, respect, responsibility, and courage, they create conditions where learning can occur without distortion.

The fundamental values of academic integrity serve as a shared language. They clarify what ethical behavior looks like in practice. They help students understand why citing sources matters, why collaboration must be transparent, and why accountability protects everyone.

These values are interconnected. Remove one, and the system weakens. Together, they uphold academic standards and protect the integrity of knowledge itself.

- Honesty – Presenting genuine work and accurate evidence, ensuring that all submitted academic work reflects your own effort and truthful representation.

- Trust – Fostering confidence in evaluation and scholarship so that grades and feedback are based on merit rather than deception.

- Fairness – Applying clear, consistent standards so no student gains an unfair advantage.

- Respect – Proper attribution of ideas and acknowledgment of diverse perspectives in scholarly dialogue.

- Responsibility – Taking ownership of your learning and resisting pressure to engage in academic misconduct.

- Courage – Acting ethically even in adversity, including admitting mistakes or reporting misconduct when necessary.

How Academic Integrity Values Translate into Everyday Academic Behavior

Values only matter if they shape behavior. In academic communities, integrity becomes visible through daily decisions. You demonstrate honesty when you submit your own work and ensure that every idea, quotation, or data point drawn from another source is properly cited.

Proper citation is not a technical ritual. It acknowledges intellectual ownership and preserves trust in scholarship.

Completing individual assignments independently is another core expectation. Collaboration may be encouraged in specific contexts, but unless explicitly authorized, academic work must reflect your own effort. Sharing finished assignments, even with good intentions, can enable plagiarism or unintended misconduct.

Integrity also includes seeking help responsibly. If you are struggling with deadlines or understanding assignment instructions, speaking to instructors or accessing support services strengthens, rather than weakens, your standing. Transparency prevents small problems from becoming larger violations.

Faculty play a role as well. When professors cite sources in lectures and model ethical research practices, they reinforce the behaviors that enable academic communities to translate ideals into consistent practice. Integrity becomes lived experience, not abstract policy.

Why Academic Integrity Is Essential for Future Professional Competence

Academic integrity does not end at graduation. The habits you form in higher education shape your future professional competence. When cheating replaces genuine effort, you may secure a grade, but you lose the practice required to succeed in complex environments. Skills left unlearned in the classroom rarely appear magically in the workplace.

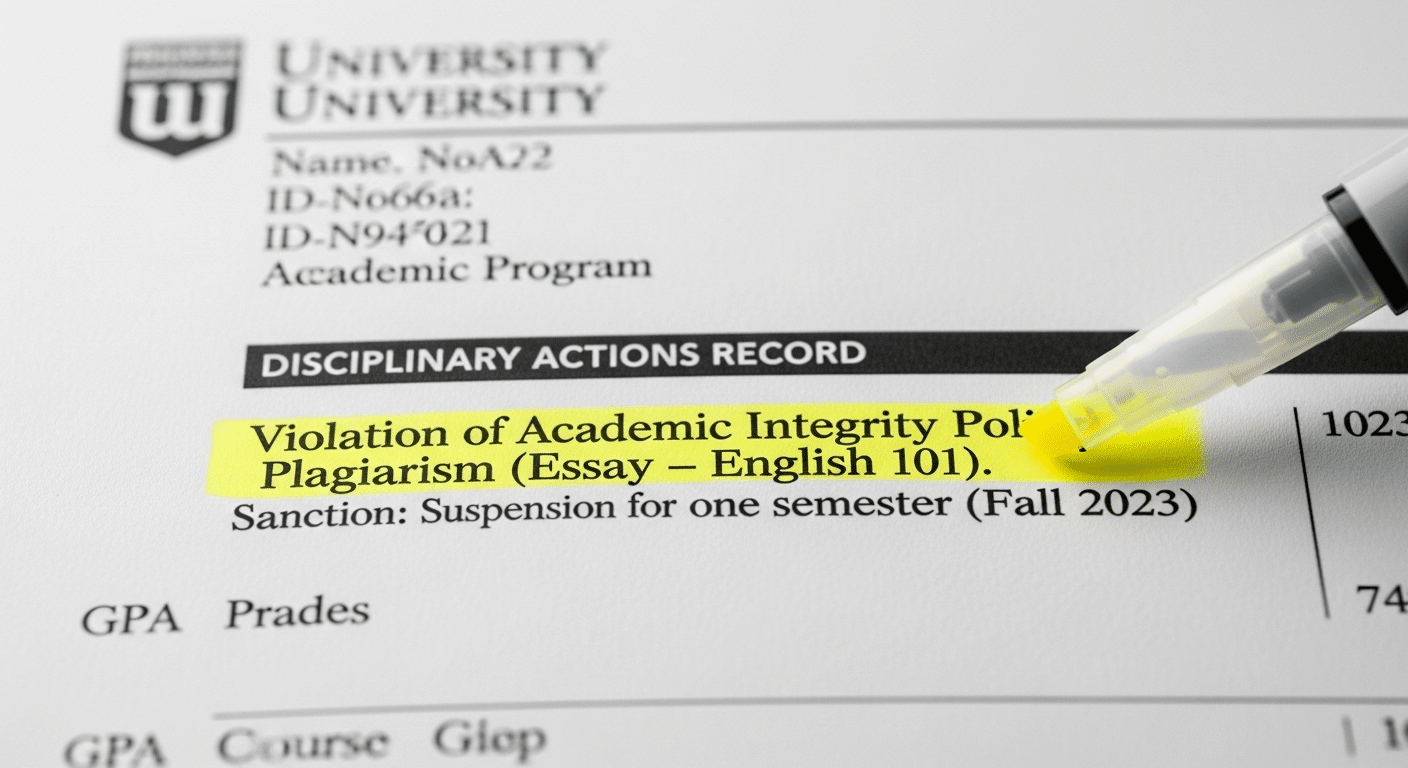

Academic misconduct also carries tangible consequences. Universities impose penalties that can affect transcripts, graduation timelines, and institutional reputation.

In some cases, misconduct may escalate into legal or criminal issues. Students who rely on illegal cheating services expose themselves to risks beyond academic penalties, including blackmail and data exploitation.

Reputation follows you. Employers value trustworthiness alongside technical ability. If integrity falters early, credibility suffers later. Academic integrity prepares you not only for exams, but also for business decisions, leadership responsibilities, and ethical judgment in your personal life.

Professional competence depends on knowledge, and knowledge depends on honest learning. Without integrity, preparation becomes incomplete.

What Undermines Academic Integrity in Modern Education?

Academic dishonesty rarely appears in isolation. It often grows from pressure, confusion, or poorly designed systems. When anxiety rises and grades carry disproportionate weight, students may see cheating as a shortcut rather than a violation. Extreme pressure narrows judgment. It distorts priorities.

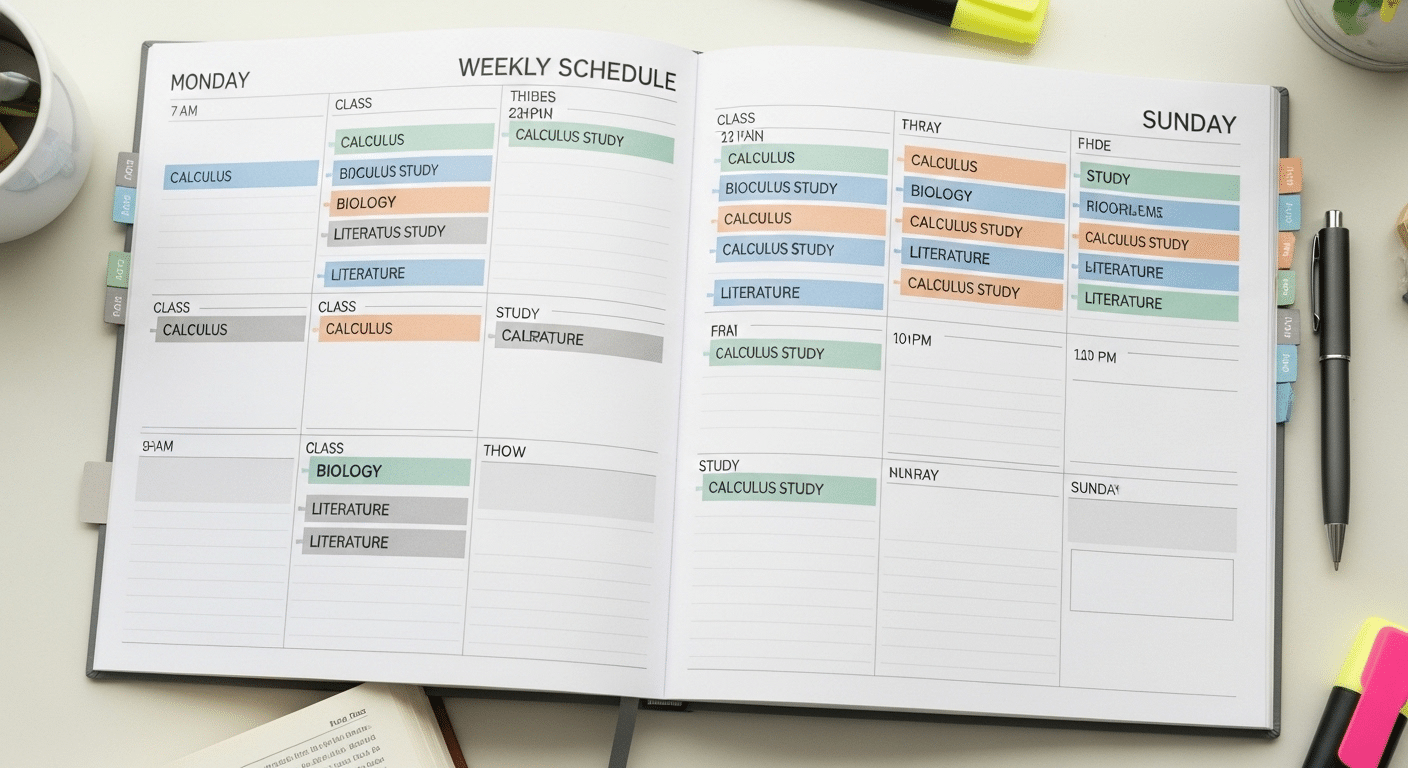

Vague instructions can also create risk. If expectations around collaboration, citation, or the use of generative AI tools are unclear, students may cross boundaries without fully understanding them. Generative AI has exposed how poorly framed some assessments have become.

Questions that reward surface-level recall are easier to automate. When assignments lack depth or alignment with learning goals, academic misconduct becomes more tempting.

Clear expectations are not optional. They are preventive. Institutions that articulate standards explicitly reduce ambiguity and protect both students and faculty.

Common factors that undermine integrity include:

- Extreme grade pressure that encourages shortcuts

- Unclear collaboration rules or vague AI guidelines

- Overemphasis on recall-based exams rather than higher-order thinking

- Lack of instructor communication about expectations and consequences

Integrity weakens when systems create confusion. It strengthens when design and communication remove it.

Institutional Safeguards That Uphold Academic Integrity

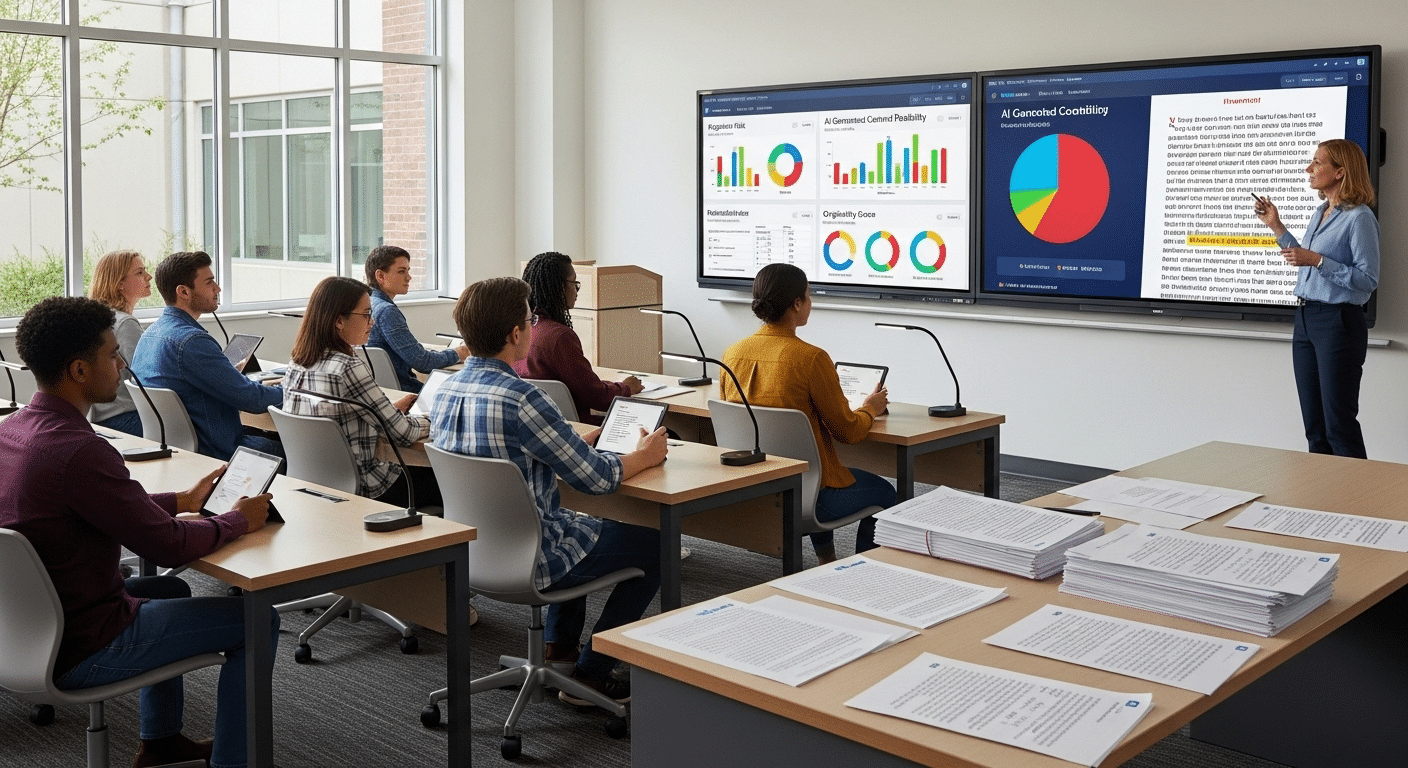

Values guide behavior, but academic institutions must also design systems that uphold academic integrity consistently. Policies alone are not enough. Enforcement mechanisms ensure that expectations remain credible across courses and programs.

Academic integrity policies typically outline definitions of misconduct, consequences, and procedures for review. Clear syllabus statements reinforce these standards at the course level, clarifying collaboration rules, citation requirements, and permitted resources.

Many universities require students to affirm honor codes before exams, strengthening personal responsibility.

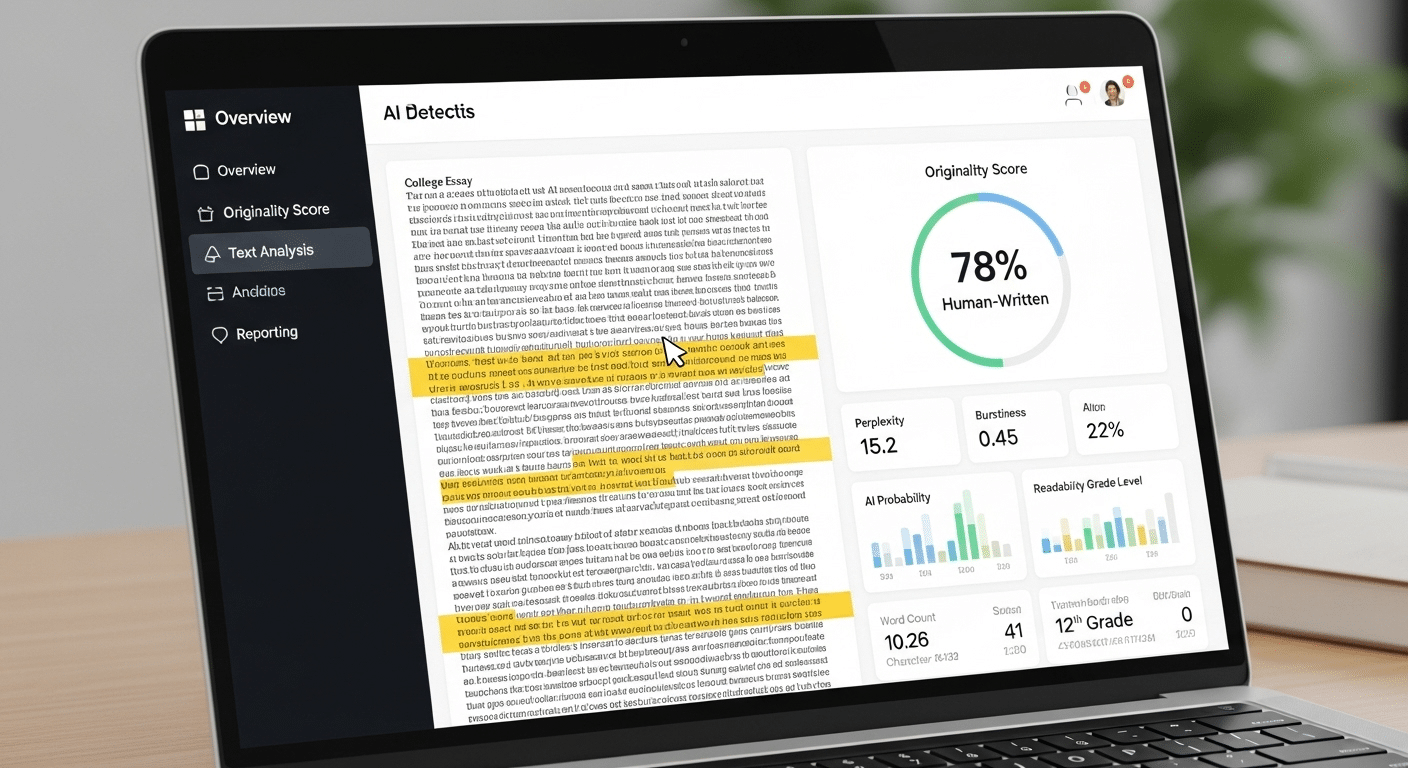

Technology supports these safeguards. Plagiarism detection tools such as Turnitin compare submitted academic work against extensive databases to identify copied content. Lockdown browsers restrict access to other websites or applications during online exams.
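The comparison step behind plagiarism detection can be illustrated with a very small sketch. This is not how Turnitin or any specific product works; it is a simplified illustration that assumes plain-text input and uses overlapping word sequences ("shingles") with Jaccard similarity. The function names `shingles` and `overlap_score` are hypothetical.

```python
def shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Return the set of n-word sequences (shingles) found in the text."""
    # Simplification: lowercase and split on whitespace; punctuation is not stripped.
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_score(submission: str, source: str, n: int = 3) -> float:
    """Jaccard similarity of the two texts' shingle sets, between 0.0 and 1.0."""
    a, b = shingles(submission, n), shingles(source, n)
    if not a or not b:
        # Texts shorter than n words produce no shingles to compare.
        return 0.0
    return len(a & b) / len(a | b)


if __name__ == "__main__":
    score = overlap_score("the quick brown fox", "the quick brown dog")
    print(f"overlap: {score:.2f}")
```

A high score only flags a passage for human review. Deciding whether the overlap is properly quoted and cited remains an instructor's judgment.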

Randomized question banks ensure that students receive different versions of tests, reducing answer sharing. Some institutions also analyze data patterns, such as unusual performance spikes or identical response sequences, to identify potential misconduct.
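As a rough illustration of question randomization, the sketch below draws a per-student selection from a question bank. It assumes the bank is a simple list of question texts and that each student and exam has a string identifier; `draw_exam`, `student_id`, and `exam_id` are hypothetical names, not part of any particular exam platform.

```python
import hashlib
import random


def draw_exam(bank: list[str], student_id: str, exam_id: str, k: int) -> list[str]:
    """Pick k distinct questions for one student, in a shuffled order.

    The seed is derived from the exam and student identifiers, so the same
    student always receives the same version if the exam is regenerated.
    """
    digest = hashlib.sha256(f"{exam_id}:{student_id}".encode()).digest()
    seed = int.from_bytes(digest[:8], "big")
    rng = random.Random(seed)
    # sample() selects without replacement and returns items in random order.
    return rng.sample(bank, k)


if __name__ == "__main__":
    bank = [f"Question {i}" for i in range(1, 21)]
    print(draw_exam(bank, student_id="s-001", exam_id="midterm", k=5))
```

Seeding from the identifiers is one possible design choice: it keeps each student's version reproducible for later review, which supports the fairness goal described above.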

Reporting systems allow members of the academic community to raise concerns fairly and transparently. Together, these measures reinforce integrity not as surveillance, but as shared accountability.

| Safeguard | Purpose | Integrity Value Supported |
|---|---|---|
| Plagiarism Detection | Identifies copied content | Honesty, Respect |
| Lockdown Browser | Restricts external access | Fairness |
| Question Randomization | Prevents answer sharing | Fairness |
| Honor Code Statements | Reinforces expectations | Responsibility |
| Reporting Systems | Enables accountability | Courage |

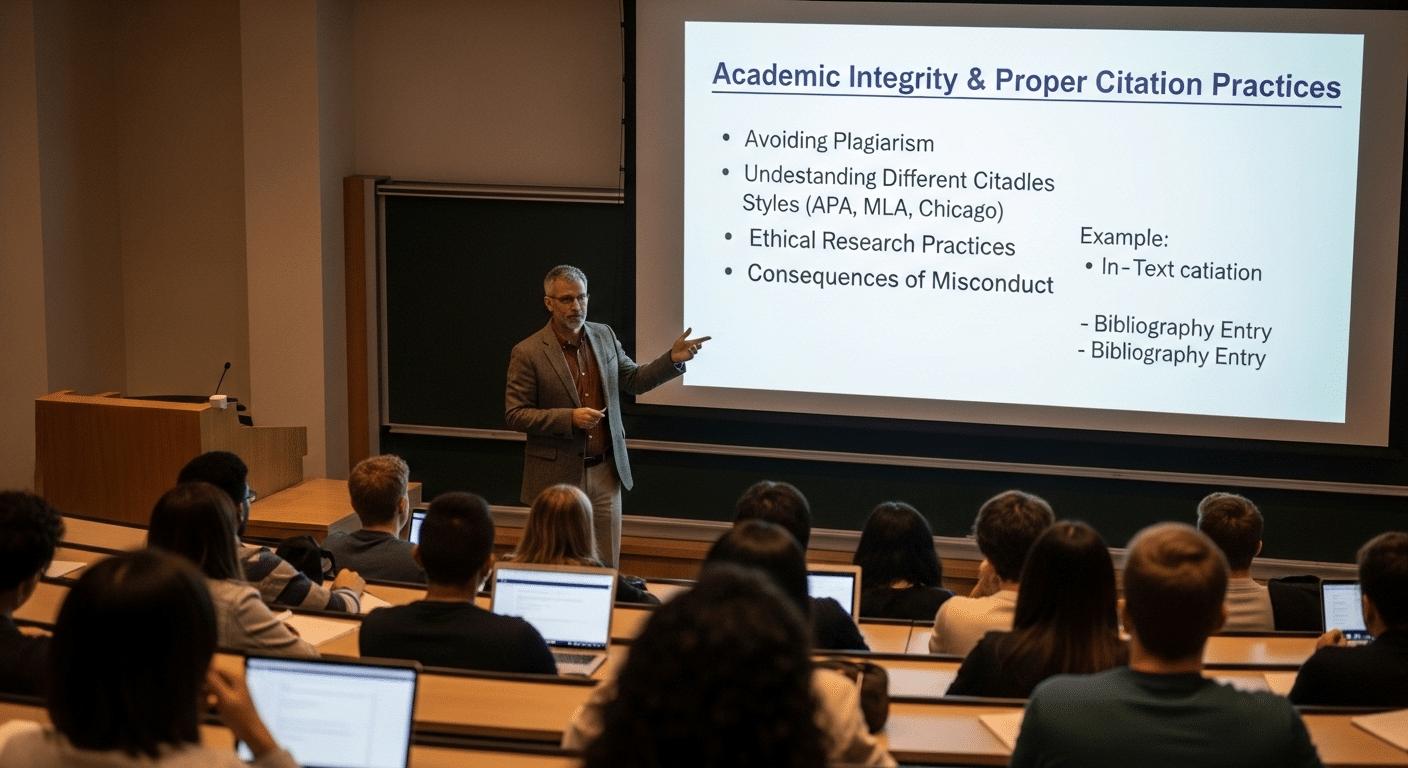

The Role of Faculty and Academic Leadership

Institutional safeguards matter, yet academic integrity ultimately lives in the example set by faculty and administrators. Professors cannot assume that values are absorbed passively. They must address integrity directly, explaining what ethical behavior looks like in specific courses and why it matters.

Clear syllabus expectations regarding citation, collaboration, and use of external tools prevent confusion before it begins.

Ethical modeling is equally important. When faculty cite sources in lectures, acknowledge uncertainty in research, and demonstrate transparency in grading procedures, they reinforce the standards they expect students to follow. Integrity becomes visible. It becomes normal.

Academic institutions must also ensure consistency across departments. Procedures for handling misconduct should be applied fairly and predictably. When expectations differ widely between courses, students receive mixed signals.

When leadership supports consistent policies and training, standards strengthen. Faculty influence culture daily. Administrators shape it structurally. Together, they uphold academic integrity as a shared institutional responsibility rather than an isolated rule.

Why Verification Strengthens Integrity Values in Practice

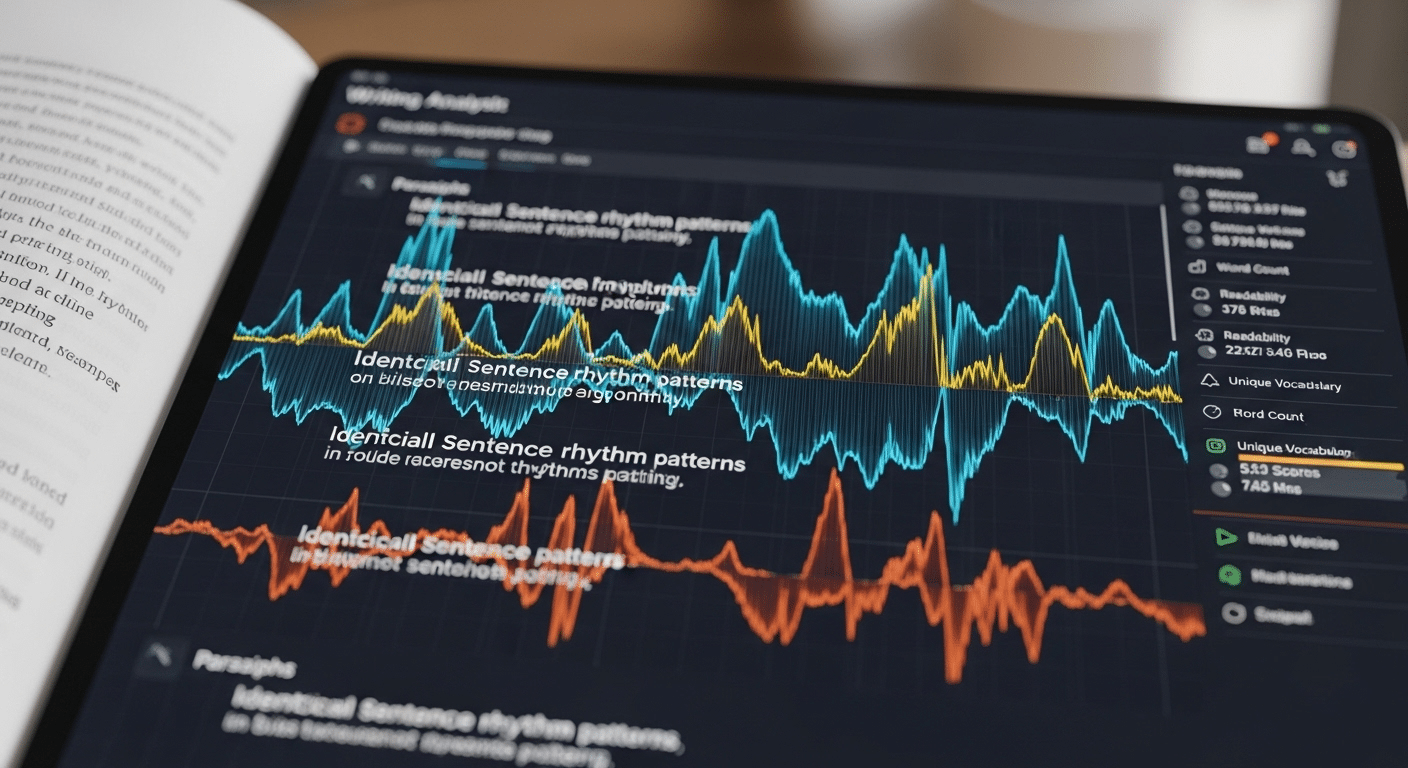

Policies, honor codes, and proctoring tools establish boundaries, yet monitoring behavior alone is insufficient. You can observe a student during an exam and still remain uncertain about the authorship of a research paper submitted weeks later. Academic integrity depends not only on supervision, but on verification.

Authentic academic work is central to credibility. When authorship is unclear, trust erodes quietly. Degrees lose weight. Reputation weakens. Academic institutions carry a responsibility to verify that submitted work genuinely reflects student effort and understanding.

Integrity must be demonstrable, not assumed. Accountability requires evidence. When verification systems confirm authorship across assignments, projects, and assessments, the fundamental values of honesty, fairness, and responsibility move from principle to proof.

Protecting institutional reputation demands that integrity be visible and defensible. Without verification, values risk becoming statements rather than standards.

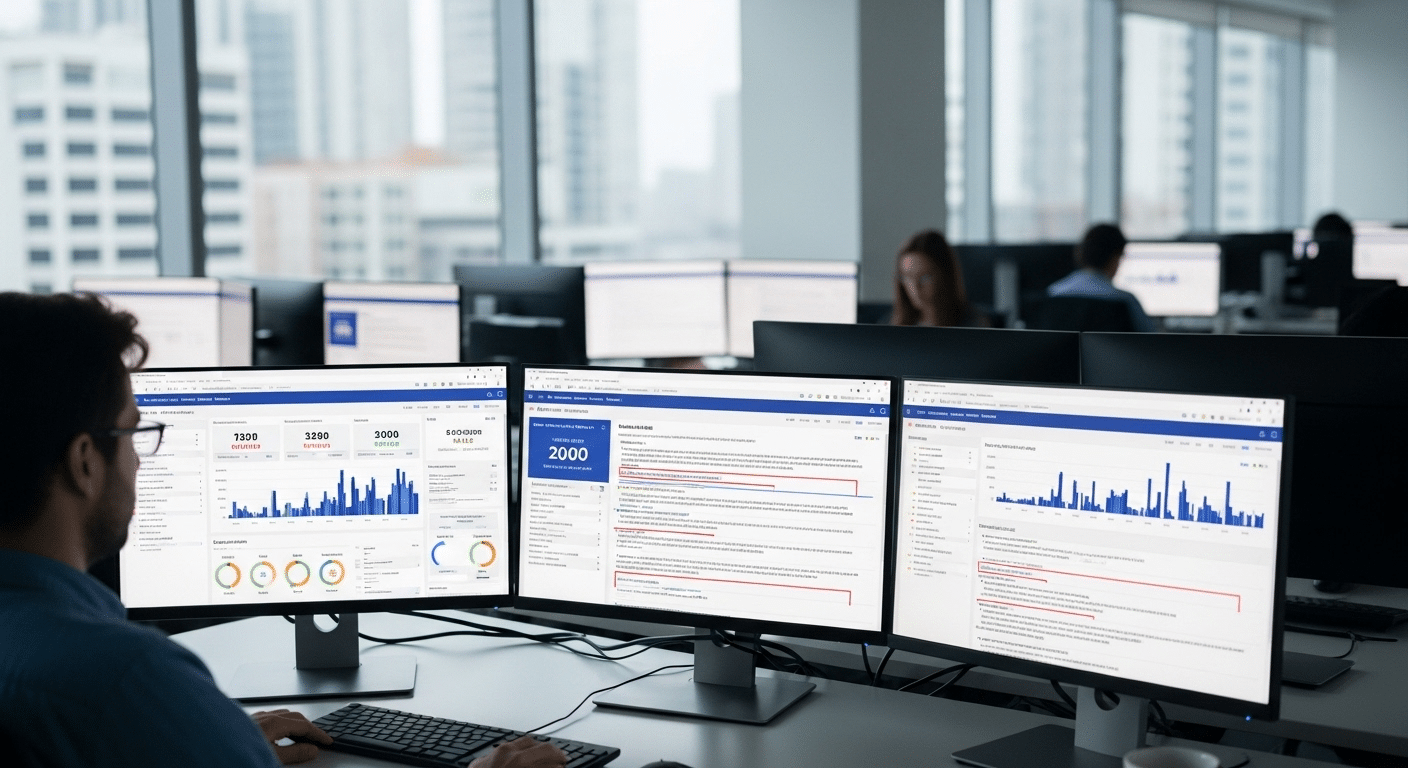

How Apporto’s TrustEd Supports Academic Integrity Values

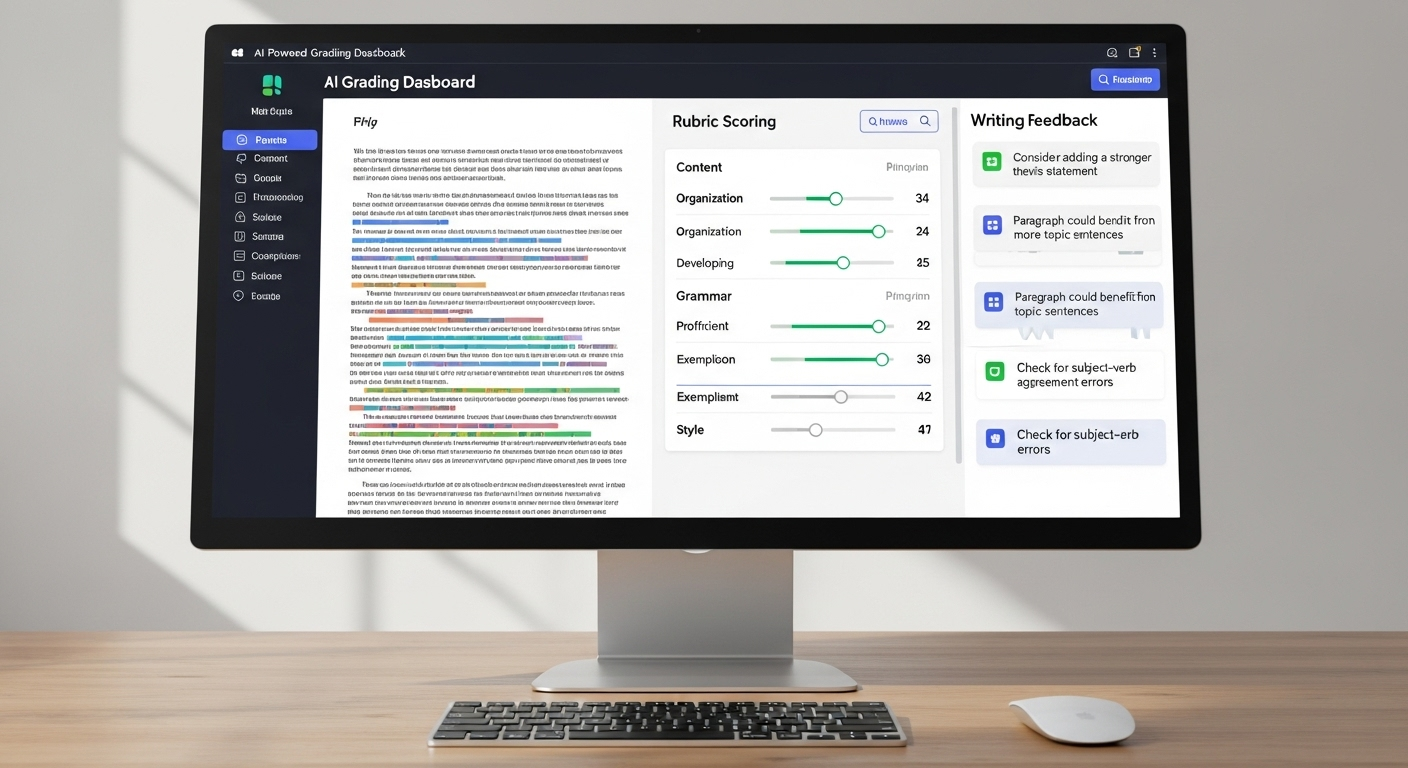

When academic integrity values are taken seriously, verification becomes part of the educational design. Apporto’s TrustEd was developed specifically to help academic institutions verify authentic academic work while preserving faculty authority. It does not replace instructors. It supports them.

TrustEd provides instructor-controlled authorship verification that aligns with institutional policies and procedures. You maintain responsibility for evaluation and academic judgment, while gaining structured tools that help confirm that submitted work reflects genuine student effort. This reinforces fairness across courses and protects the credibility of assessments.

By making authorship verification transparent, institutions strengthen accountability without compromising trust. Integrity becomes measurable rather than assumed. When employers and accrediting bodies review credentials, they can rely on demonstrable safeguards that uphold high standards.

Conclusion

Academic integrity begins with six fundamental values: honesty, trust, fairness, respect, responsibility, and courage. These values only matter when they translate into behavior. Proper citation, independent work, clear communication, and ethical modeling turn principles into daily practice.

Institutions reinforce those standards through policies, structured procedures, and technology safeguards such as plagiarism detection and secure assessments. Yet values require more than monitoring. They require verification. When authentic academic work is confirmed and accountability is visible, credibility strengthens across programs and professions.

Integrity protects learning. It protects reputation. It protects the future professional competence of every graduate.

If your institution is committed to upholding academic integrity values in measurable ways, explore how TrustEd can support transparent authorship verification and strengthen the credibility of your credentials.

Frequently Asked Questions (FAQs)

1. What are academic integrity values?

Academic integrity values are the principles that guide ethical behavior in higher education. They include honesty, trust, fairness, respect, responsibility, and courage, as defined by the International Center for Academic Integrity.

2. Why are the six fundamental values important?

The six fundamental values create a shared standard for academic communities. They ensure that learning, research, and evaluation are grounded in fairness and credibility.

3. How does academic integrity affect your future career?

Academic integrity shapes professional competence. Cheating can weaken essential skills and damage your reputation, while ethical behavior prepares you for responsible decision-making in business and public life.

4. What is the role of faculty in upholding integrity?

Faculty establish clear expectations in syllabi, model ethical research practices, and apply consistent procedures. Their leadership reinforces accountability across academic institutions.

5. How do institutions prevent academic misconduct?

Universities implement academic integrity policies, plagiarism detection tools, randomized exams, secure browsers, and reporting systems to maintain standards and deter cheating.

6. Why is authorship verification important?

Verification confirms that academic work reflects genuine student effort. Demonstrable authenticity protects credential value and strengthens institutional credibility.