Somewhere between submission and response, learning often thins out. Not disappears, just… fades a little. That gap is why feedback quality has become such a central concern in education. Across disciplines, research keeps pointing to the same conclusion: feedback is one of the strongest predictors of learning outcomes, especially when it arrives while thinking is still active.

This matters even more in writing-intensive subjects and second language learning, where written corrective feedback shapes how skills develop over time. Educational research has repeatedly shown that delayed feedback reduces learning gains and slows the transfer of skills from practice to performance.

At the same time, classrooms have grown. Higher education workloads have expanded. The depth and frequency of teacher feedback, however well-intentioned, have become harder to sustain.

AI feedback systems emerged in response to these pressures, promising speed, scale, and consistency. Recent systematic reviews now compare AI-generated feedback with teacher feedback outcomes, not as a novelty, but as a serious educational question.

To understand what is actually changing, it helps to start with what traditional teacher feedback really looks like in practice.

What Defines Traditional Teacher Feedback in Practice?

Traditional teacher feedback is deeply human. It is shaped by context, intent, and a sense of who the learner is beyond the page. When teachers respond to student work, they do more than correct errors. They interpret meaning.

They weigh argumentation, logical reasoning, coherence, and purpose. In writing tasks, especially, feedback often addresses global issues first, not just surface-level mistakes.

There is also an emotional layer that rarely shows up in rubrics. Teacher feedback carries affective support. Encouragement. Sometimes caution. Sometimes challenge.

Over time, it builds relationships that influence motivation and learner engagement. This is particularly important in EFL and foreign language contexts, where feedback supports language acquisition alongside confidence and persistence.

Research consistently shows that students perceive teacher feedback as more credible and trustworthy than automated responses. That trust matters for feedback uptake. At the same time, traditional teacher feedback is constrained by reality.

Quality depends heavily on teacher expertise, available time, and class size. Large classes and heavy workloads slow delivery and reduce consistency, even for skilled educators.

That tension sets the stage for comparison. If teacher feedback is rich but limited by scale, the natural next question becomes how AI-driven feedback systems differ, not just in speed, but in structure and purpose.

How Do AI-Driven Feedback Systems Work at a Technical Level?

Once AI-driven feedback enters the classroom, the mechanics matter. Not in a flashy way. Quietly, methodically. Behind the scenes, these systems rely on artificial intelligence built from two main pillars: Natural Language Processing and Machine Learning.

AI assessment systems analyze student work in real time. The moment text is submitted, algorithms begin reading, comparing, and evaluating. Natural language processing allows the system to interpret written responses beyond surface keywords.

It identifies grammar issues, syntax problems, gaps in cohesion, and clarity breakdowns that affect writing quality. In other words, it reads how something is written, not just what is written.

Machine learning adds another layer. Models trained on large datasets detect learning patterns across student work, both individual and collective.

Over time, these systems learn which errors repeat, which revisions succeed, and how feedback influences progress. Assessment criteria are applied consistently, reducing the variability and fatigue that can creep into human grading.

By 2026, many AI-driven feedback systems are designed to align with pedagogical frameworks and instructional flow. Feedback is no longer detached commentary. It arrives during the revision process, shaped by instructional intent, not just error detection.

At a technical level, this usually involves:

- Natural language processing for text interpretation and revision guidance

- Pattern recognition across student work and cohorts

- Real-time feedback delivery embedded directly into learning activities
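As a rough illustration of the pattern-recognition item above, the sketch below counts how error tags spread across a cohort and which ones a single student repeats. It assumes an upstream NLP step has already tagged each submission; the tag names, the `flags` data, and both function names are hypothetical, not taken from any particular product.

```python
from collections import Counter, defaultdict

# Hypothetical input: (student_id, error_tag) pairs that an upstream
# NLP step is assumed to have produced. The tags are illustrative only.
flags = [
    ("s1", "subject_verb_agreement"),
    ("s1", "subject_verb_agreement"),
    ("s1", "missing_transition"),
    ("s2", "missing_transition"),
    ("s3", "missing_transition"),
    ("s3", "comma_splice"),
]

def cohort_patterns(flags, min_share=0.5):
    """Return error tags seen in at least `min_share` of students."""
    students_by_tag = defaultdict(set)
    all_students = set()
    for student, tag in flags:
        students_by_tag[tag].add(student)
        all_students.add(student)
    return {
        tag: len(students) / len(all_students)
        for tag, students in students_by_tag.items()
        if len(students) / len(all_students) >= min_share
    }

def repeated_errors(flags, student):
    """Return tags the given student triggered more than once."""
    counts = Counter(tag for s, tag in flags if s == student)
    return [tag for tag, n in counts.items() if n > 1]

print(cohort_patterns(flags))        # cohort-wide issues
print(repeated_errors(flags, "s1"))  # one student's recurring issues
```

In this toy data, a missing transition shows up for every student, which is the kind of cohort-level signal an instructor might address in class rather than in individual comments.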

This technical foundation explains the speed and consistency of AI feedback. But it also raises a deeper question about difference. How does this compare, in practice, to what teachers provide?

In What Ways Does AI-Generated Feedback Differ From Teacher Feedback?

The contrast between AI-generated feedback and teacher feedback is not subtle. It is structural. AI feedback is instant, uniform, and scalable. It responds the same way every time, applying assessment criteria without fatigue or variation. For large classes or time-limited settings, that consistency is often the main appeal.

Teacher feedback works differently. It carries depth, nuance, and contextual interpretation. Teachers read intention. They consider voice, argument quality, and meaning.

Where AI excels at identifying local issues like grammar and mechanics, teachers are stronger at addressing global issues such as structure, logical reasoning, and coherence across an entire piece of work.

This difference shows up clearly in how feedback is experienced:

- Speed vs interpretive depth, where AI responds immediately and teachers respond thoughtfully

- Consistency vs contextual judgment, where AI applies rules uniformly and teachers adapt to nuance

- Scalability vs relational trust, where AI scales easily and teachers build credibility over time

Feedback uptake often depends on how students perceive these differences. They may act quickly on AI feedback but reflect more deeply on teacher feedback. Training also matters. Without guidance, learners may accept AI suggestions passively. With instruction, AI feedback can become a tool rather than a crutch.

These differences set the stage for a critical question. Do they actually lead to different learning gains?

What Does Educational Research Say About Learning Gains From AI vs Teacher Feedback?

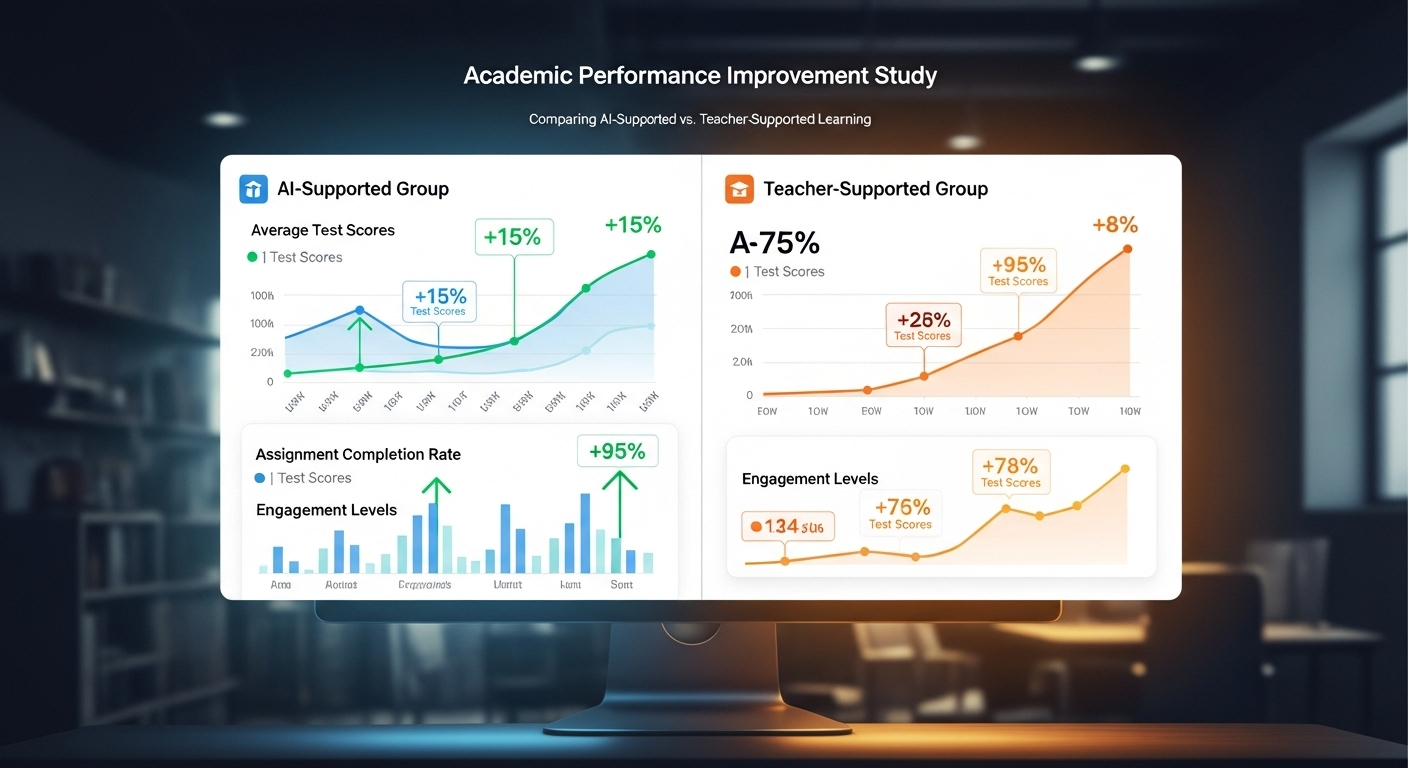

Educational research offers a more balanced picture than the debate often suggests. Across multiple studies, both AI-generated feedback and teacher feedback lead to statistically significant learning gains. In writing-focused research, improvements appear on both sides, though in different ways.

Studies show that AI feedback can match teacher feedback when it comes to coherence and cohesion, especially in structured writing tasks. In EFL argumentative writing, AI-generated feedback has been shown to support meaning-level revisions, not just surface corrections. Control group designs often report similar score improvements between AI-supported groups and teacher-supported groups.

Lower-proficiency learners, in particular, tend to benefit from corrective feedback regardless of its source. Immediate guidance helps prevent errors from repeating, while structured feedback supports skill development over time.

Research also suggests that AI feedback is especially effective in large classes and time-constrained environments, where traditional teacher feedback becomes difficult to deliver consistently.

What emerges from systematic reviews is not a winner, but a pattern. AI feedback performs well where speed, scale, and consistency matter.

Teacher feedback remains essential where interpretation, motivation, and higher-order thinking are central. Understanding this distinction is less about choosing sides and more about deciding how each form of feedback is used, and for what purpose.

How Does AI-Driven Feedback Affect Learner Engagement and Feedback Uptake?

Engagement often rises when feedback shows up quickly. Not dramatically, not magically, but enough to matter. Immediate feedback shortens the distance between effort and response, which keeps learners involved and more willing to persist through difficulty. You see what worked. You see what didn’t. And you keep going.

AI-driven feedback supports this momentum. At the same time, it introduces a subtle risk. Students sometimes interact with AI feedback passively, accepting suggestions without questioning them. The speed can invite compliance rather than reflection.

Teacher feedback tends to slow that process down. It arrives later, yes, but it often encourages deeper consideration of meaning, intent, and revision choices.

Whether feedback leads to improvement depends on feedback uptake. That uptake is shaped by training and metacognitive awareness. Learners who understand how to use feedback tend to benefit more, regardless of the source. Hybrid feedback models help here, combining immediacy with guided reflection.

Common behavioral patterns show up in three places:

- Revision depth, or how substantially student work changes after feedback

- Reflection quality, especially in how learners explain their revisions

- Feedback acceptance patterns, including when suggestions are followed, questioned, or ignored

Together, these patterns suggest that engagement improves most when speed and reflection are balanced.
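Revision depth, the first of the patterns listed above, can be approximated numerically. The sketch below is one simple proxy, assuming a word-level comparison of two drafts with Python's standard `difflib`; the function name `revision_depth` and the sample sentences are illustrative, and a real study would likely use a richer measure.

```python
from difflib import SequenceMatcher

def revision_depth(before: str, after: str) -> float:
    """Share of the draft that changed, from 0.0 (identical) to 1.0."""
    before_words = before.split()
    after_words = after.split()
    # ratio() is 1.0 for identical word sequences and 0.0 when nothing matches.
    similarity = SequenceMatcher(None, before_words, after_words).ratio()
    return 1.0 - similarity

draft = "The results shows that feedback help students"
surface_fix = "The results show that feedback helps students"
deep_rewrite = (
    "Timely feedback improves revision because students act "
    "while ideas are fresh"
)

# A grammar-only correction should score lower than a rewritten argument.
print(revision_depth(draft, surface_fix))
print(revision_depth(draft, deep_rewrite))
```

A score like this only captures how much text changed, not whether the change improved the writing, so it would complement rather than replace a reading of the learner's reflections.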

Why Do Hybrid Feedback Models Matter More Than Either Approach Alone?

Neither AI-driven feedback nor traditional teacher feedback solves the whole problem on its own. Hybrid feedback models exist because education rarely benefits from extremes. When AI efficiency is paired with human insight, the gaps begin to close.

AI handles mechanical and repetitive feedback tasks well. Grammar checks. Structural signals. Consistent application of criteria. These are areas where speed and scale help, especially in large classes. Teachers, freed from those demands, can focus on mentoring, critical thinking, and motivation. The work that depends on judgment rather than detection.

Educational research increasingly supports this balance. Hybrid feedback models are associated with improved learning outcomes and higher feedback quality because they distribute effort more intelligently. In higher education and EFL contexts, where workload and complexity intersect, this approach is especially effective.

What matters is not which system speaks louder, but which speaks when. Hybrid models allow feedback to arrive quickly, then deepen later. Efficiency first. Insight next. That sequence tends to align better with how learning actually unfolds.

What Ethical and Practical Risks Separate AI Feedback From Human Feedback?

The benefits of AI feedback do not cancel out its risks. Student data privacy sits at the center of most concerns. AI systems require access to student work and learning patterns, which means encryption, clear governance, and transparent policies are not optional.

Algorithmic bias presents another challenge. When datasets are narrow or incomplete, AI feedback can unintentionally reinforce inequality.

Regular bias audits and diverse training data help reduce this risk, but they require ongoing attention. Trust depends on visibility. Systems that cannot explain how feedback is generated invite skepticism.

Human override options remain essential. Educators must be able to intervene, adjust, or reject AI-generated feedback when context demands it. Overreliance on automation can also reduce human interaction, which plays a crucial role in motivation and social learning.
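One way to picture a human override is as a review state attached to every AI comment, so that nothing reaches a student until an educator has acted on it. The sketch below is a minimal illustration under that assumption; the `Review` states, the `FeedbackItem` class, and its methods are hypothetical and do not describe any specific system.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

class Review(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    EDITED = "edited"
    REJECTED = "rejected"

@dataclass
class FeedbackItem:
    ai_text: str                          # comment proposed by the AI system
    status: Review = Review.PENDING       # nothing is released by default
    educator_text: Optional[str] = None   # set only when the educator rewrites it

    def approve(self) -> None:
        self.status = Review.APPROVED

    def edit(self, new_text: str) -> None:
        self.educator_text = new_text
        self.status = Review.EDITED

    def reject(self) -> None:
        self.status = Review.REJECTED

    def released_text(self) -> Optional[str]:
        """Text the student sees, or None if nothing is released."""
        if self.status is Review.APPROVED:
            return self.ai_text
        if self.status is Review.EDITED:
            return self.educator_text
        return None

item = FeedbackItem(ai_text="Consider adding a counterargument in paragraph 2.")
item.edit("Your claim in paragraph 2 needs a counterargument; see our notes.")
print(item.released_text())
```

The design choice worth noting is the default: a pending or rejected item releases nothing, so the educator's decision, not the model's output, determines what the learner reads.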

Finally, AI literacy matters. Both students and educators need to understand how AI feedback works, where it helps, and where it falls short.

Without that understanding, even well-designed systems can be misused. Responsible adoption is not about limiting technology. It is about setting boundaries that keep learning human.

How Does AI-Driven Feedback Change the Role of Educators?

The shift does not feel dramatic at first. It shows up quietly, in calendars that open up and margins that look less crowded. AI-driven feedback changes the role of educators mainly by changing how time is spent.

When AI systems reduce grading workloads by approximately 70%, the impact is immediate and practical. Less time goes into repetitive human grading. More time becomes available for work that cannot be automated.

That change reshapes teaching priorities:

- More time for mentorship, where conversations focus on progress, goals, and confidence rather than surface errors

- Greater emphasis on higher-order feedback, such as argument quality, critical thinking, and reasoning

- Access to valuable insights, as AI surfaces learning patterns that are difficult to see assignment by assignment

- Retention of authority, since educators still define evaluation standards and make final judgments

Teaching gradually shifts from correction to coaching. AI handles detection and consistency. Educators handle meaning, context, and motivation. The role does not shrink. It sharpens.

How Can PowerGrader Support a Human-Centered Feedback Model at Scale?

Scale is where feedback systems often break down. PowerGrader is designed to keep feedback quality intact as volume grows. It supports instructor-controlled AI-generated feedback rather than automated decision-making.

PowerGrader delivers real-time written corrective feedback during the revision process, allowing students to respond while learning is still active. Assessment criteria are set by educators and applied consistently by AI, reducing variability without diluting rigor. Pattern detection across cohorts helps instructors see where learning stalls or clusters of confusion form.

What matters most is governance. PowerGrader follows a human-in-the-loop model. Educators can review, adjust, or override AI feedback at any point. Workloads decrease, but standards remain intact.

Feedback becomes faster, not looser. At scale, this balance allows institutions to expand access to high-quality feedback without sacrificing trust, accountability, or instructional intent.

What Should Institutions Consider Before Replacing or Augmenting Teacher Feedback With AI?

Replacement is rarely the right goal. Augmentation is. AI is most effective when it supplements teacher feedback rather than competes with it. Pedagogical context matters more than automation. Tools must align with how learning is taught, assessed, and supported.

Trust, training, and transparency determine whether AI improves or complicates outcomes. Educators and students need clarity about how feedback is generated and when human judgment takes priority.

Responsible implementation improves learning outcomes by strengthening feedback loops, not fragmenting them. Education evolves when technology supports focus and progress, but human judgment remains the foundation for performance and evaluation.

Frequently Asked Questions (FAQs)

1. How does AI-driven feedback differ from traditional teacher feedback?

AI-driven feedback is immediate, consistent, and scalable, while traditional teacher feedback provides deeper contextual understanding, interpretive judgment, and emotional support shaped by human experience.

2. Is AI-generated feedback as effective as teacher feedback?

Research shows both can lead to statistically significant learning gains, with AI matching teacher feedback in certain writing outcomes, especially coherence and cohesion in structured writing tasks.

3. Why do students often trust teacher feedback more than AI feedback?

Teacher feedback carries human intent, relational context, and credibility built through interaction, which influences how seriously students reflect on and apply the guidance.

4. Can AI-driven feedback replace teachers in large classes?

No. AI can support feedback delivery at scale, but teachers remain essential for evaluation, mentorship, motivation, and higher-order instructional decisions.

5. What risks come with relying too heavily on AI feedback?

Overreliance can reduce human interaction, introduce bias if data is limited, and weaken critical engagement if students accept feedback without reflection.

6. Why are hybrid feedback models widely recommended?

Hybrid models combine AI efficiency with human insight, improving feedback quality, learner engagement, and learning outcomes across diverse educational settings.

7. How does PowerGrader fit into a hybrid feedback approach?

PowerGrader provides instructor-controlled AI feedback, reducing workload while preserving human oversight, consistent standards, and academic rigor.