How Does AI Provide Real-Time Feedback to Students?

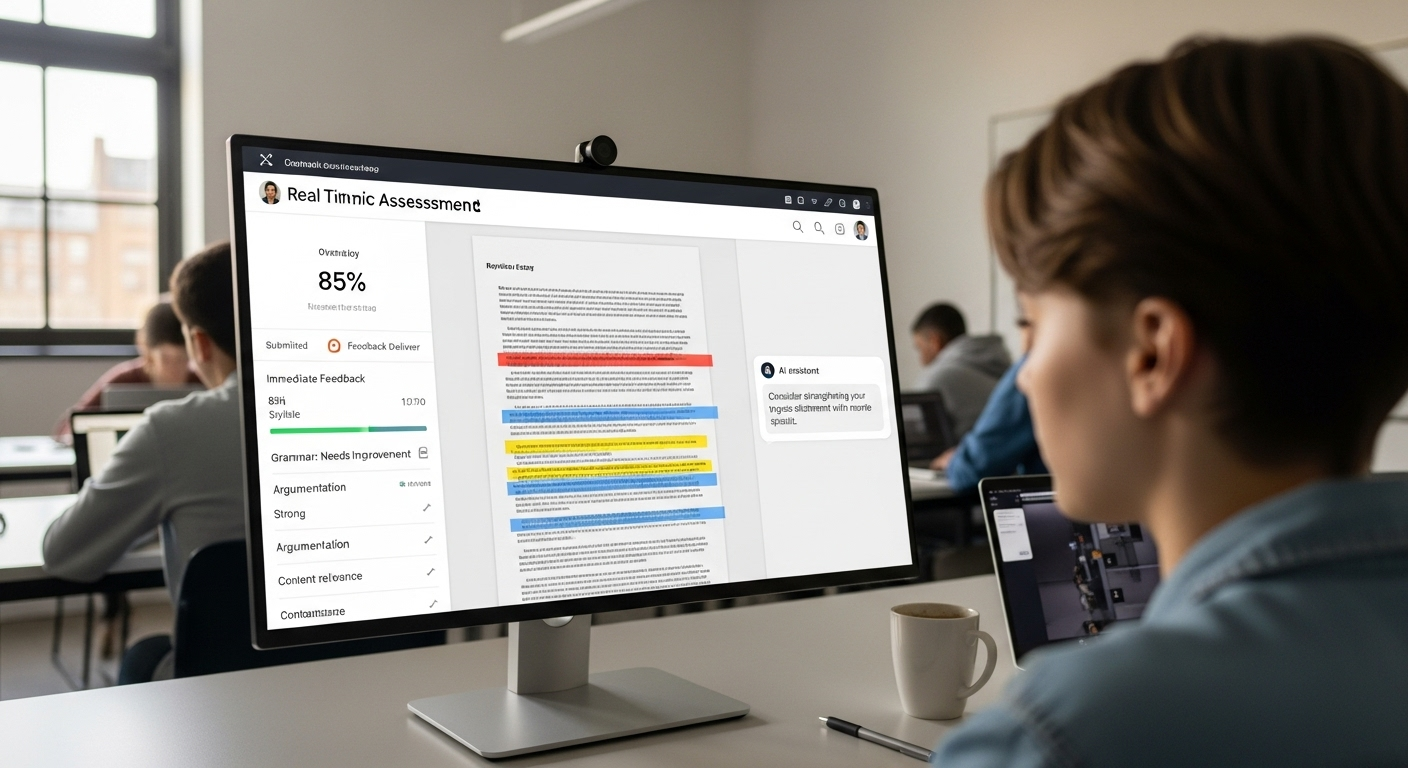

AI provides real-time feedback by analyzing student responses instantly and delivering guidance while learning is still in progress. This helps correct mistakes early, improve understanding, and keep learners engaged. Platforms like Apporto TrustEd enable scalable, in-context feedback that supports both students and educators.

For years, feedback in education has arrived late. Students complete an assignment, submit it, and wait. Days pass. Sometimes weeks. By the time feedback appears, the learning moment has already slipped away, and early misunderstandings have had time to settle.

This delay is built into many traditional teaching methods, but it comes at a cost. When feedback is separated from effort, retention drops and student progress slows.

Real-time feedback changes that relationship. With AI, guidance can appear while a student is still engaged with the task, still thinking through the problem, and still able to adjust.

That change raises an important question. If feedback now happens during learning rather than after it, what does “real-time feedback” actually mean in practice, and how does AI deliver it inside the learning process?

What Does “Real-Time Feedback” Actually Mean During the Learning Process?

Real-time feedback happens inside the learning moment. It does not wait for an assignment to close or grades to be released. Instead, feedback appears while a student is still working, still thinking, and still able to respond.

With AI, feedback delivery becomes immediate. A response, a hint, or a correction shows up as soon as a student submits an answer, writes a sentence, or makes a choice. That timing changes everything.

Immediate feedback has been shown to improve learning outcomes compared to delayed responses, largely because the brain is still focused on the task. When learners can act on feedback while they are cognitively engaged, the feedback becomes more useful. Guidance feels relevant, not abstract.

To understand how this is possible, it helps to look beneath the surface at what AI systems actually do when student work is submitted.

What Happens Inside AI Systems When a Student Submits Work?

The moment a student submits work, AI systems begin analyzing it in real time. This process is fast, but it is not shallow. AI assessment systems evaluate responses as they arrive, allowing feedback to surface almost instantly.

Several layers of artificial intelligence work together:

- Natural language processing allows the system to read and interpret written responses, not just scan for keywords.

- Machine learning models compare student answers against known patterns, including common mistakes and partial understanding.

- Automated feedback tools deliver immediate corrections for issues such as syntax, pronunciation, and citation style, especially in language and writing-heavy tasks.

This real-time analysis serves an important purpose. Early detection prevents misconceptions from becoming habits. Instead of repeating errors, students receive guidance while the lesson is still unfolding. That early intervention keeps learning aligned, efficient, and far more resilient as concepts become more complex.
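The idea of comparing an answer against known mistake patterns can be sketched in a few lines. This is a minimal illustration, not how any particular platform works: the question (1/2 + 1/3), the `KNOWN_MISTAKES` catalogue, and the `instant_feedback` function are all hypothetical, and a production system would likely use trained models rather than a hand-written lookup.

```python
from fractions import Fraction

# Hypothetical question: "What is 1/2 + 1/3?"
CORRECT = Fraction(5, 6)

# Hypothetical catalogue mapping known wrong answers to targeted hints.
KNOWN_MISTAKES = {
    Fraction(2, 5): "It looks like the numerators and denominators were "
                    "added separately. Try a common denominator first.",
    Fraction(1, 6): "It looks like the fractions were multiplied, not added.",
}


def instant_feedback(raw_answer: str) -> str:
    """Return feedback for one submitted answer, as soon as it arrives."""
    try:
        answer = Fraction(raw_answer.strip())
    except (ValueError, ZeroDivisionError):
        return "Please enter a number or a fraction such as 3/4."
    if answer == CORRECT:
        return "Correct."
    # Fall back to a generic nudge when the mistake is not a known pattern.
    return KNOWN_MISTAKES.get(
        answer, "Not quite. Check each step and try again."
    )


if __name__ == "__main__":
    for submitted in ["2/5", "10/12", "seven"]:
        print(submitted, "->", instant_feedback(submitted))
```

The lookup runs the moment an answer is submitted, which is what allows a recognized misconception to be named before it is repeated.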

In What Ways Does AI Adapt Feedback to Each Student Individually?

AI adapts feedback by watching how a student learns, not just what they submit. Over time, AI chatbots and tutoring tools recognize individual learning patterns and adjust accordingly.

That personalization shows up in several practical ways:

- Learning pace awareness, with feedback changing speed and depth based on how quickly a student progresses

- Prior knowledge recognition so explanations build on what the learner already understands

- Tone and detail adjustment with brief nudges for confident learners and clearer breakdowns for those who need more support

- Targeted guidance that focuses on specific gaps instead of repeating general advice

This is where personalized learning becomes real. Students are no longer pushed forward at the class average. They move at their own speed, guided by personalized feedback that responds in the moment.

Engagement improves because feedback feels relevant. Retention improves because learners stay aligned with material that matches where they actually are.
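One way to picture tone and detail adjustment is a rule that picks between a brief nudge and a fuller breakdown based on recent performance. The sketch below is an assumption-laden illustration: `LearnerState`, `choose_hint`, the five-answer window, and the 0.7 threshold are all hypothetical choices, not values taken from any real tutoring product.

```python
from dataclasses import dataclass, field


@dataclass
class LearnerState:
    """Tracks a learner's most recent answers (True means correct)."""
    recent_results: list = field(default_factory=list)

    def record(self, correct: bool, window: int = 5) -> None:
        self.recent_results.append(correct)
        # Keep only the last `window` results so old answers age out.
        del self.recent_results[:-window]

    def accuracy(self) -> float:
        if not self.recent_results:
            return 0.0  # No evidence yet: treat as needing more support.
        return sum(self.recent_results) / len(self.recent_results)


def choose_hint(state: LearnerState, brief: str, detailed: str,
                threshold: float = 0.7) -> str:
    """Pick a brief nudge for confident learners, else a fuller breakdown."""
    return brief if state.accuracy() >= threshold else detailed


if __name__ == "__main__":
    state = LearnerState()
    for result in [True, True, True, False, True, True]:
        state.record(result)
    print(choose_hint(state,
                      brief="Check your units.",
                      detailed="Convert minutes to hours before dividing."))
```

Using a rolling window rather than a lifetime average means the hint style follows where the learner is now, which is the point of adapting in the moment.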

Where Do Intelligent Tutoring Systems Fit Into Real-Time Learning?

Intelligent tutoring systems operate inside the learning process itself. They deliver feedback while students are actively solving problems, not after the session ends. That timing keeps mistakes visible and correctable.

These systems work by continuously assessing student behavior and performance:

- Real-time problem-solving feedback that appears during quizzes, exercises, or simulations

- Adaptive difficulty adjustment based on ongoing assessment rather than fixed levels

- Progress and learning-style analysis that shapes how content is presented

- Multiple learning paths that support diverse learners without forcing a single approach
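Adaptive difficulty adjustment, in its simplest form, can be sketched as a staircase rule. The version below is only an illustration under stated assumptions: the function name `next_difficulty`, the 1 to 5 level range, and the "two correct in a row to move up, one miss to move down" policy are hypothetical and not drawn from any specific tutoring system.

```python
def next_difficulty(level: int, streak: int, correct: bool,
                    min_level: int = 1, max_level: int = 5):
    """Return (new_level, new_streak) after one answer.

    Assumed policy: step down after any miss, step up after two
    consecutive correct answers, and stay within the level bounds.
    """
    if not correct:
        return max(min_level, level - 1), 0
    streak += 1
    if streak >= 2:
        return min(max_level, level + 1), 0
    return level, streak


if __name__ == "__main__":
    level, streak = 3, 0
    for answer in [True, True, False, True]:
        level, streak = next_difficulty(level, streak, answer)
        print(f"answer correct={answer}: level={level}, streak={streak}")
```

Because the level is recomputed after every answer, difficulty tracks ongoing performance instead of a level fixed at the start of the session.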

Platforms like Khan Academy already use GPT-based tutors to offer hints instead of answers. The same principle applies to Apporto’s AI-powered tutoring solution, CoTutor.

CoTutor delivers in-context guidance that helps students think through problems in real time, while instructors remain fully in control. It scales personalized support without turning learning into automation, which is exactly where intelligent tutoring systems add the most value.

Which Student Outcomes Improve Most With Immediate AI Feedback?

Immediate AI feedback has a direct and measurable impact on how students learn and how quickly they improve. When guidance arrives in the moment, it changes the learning dynamic in several important ways:

- Faster correction of mistakes because errors are addressed before they repeat across multiple attempts

- Deeper understanding of complex concepts since students receive direction while the problem is still active in their mind

- Stronger learner confidence built through continuous feedback instead of delayed judgment

- Higher engagement as students see a clear connection between effort and outcome

Together, these effects create rapid learning cycles. Students act, receive feedback, adjust, and move forward without long pauses. Over time, those tighter cycles lead to stronger learning outcomes and sustained improvement, not just short-term gains.

How Can AI Tools Identify Patterns and Support At-Risk Students Early?

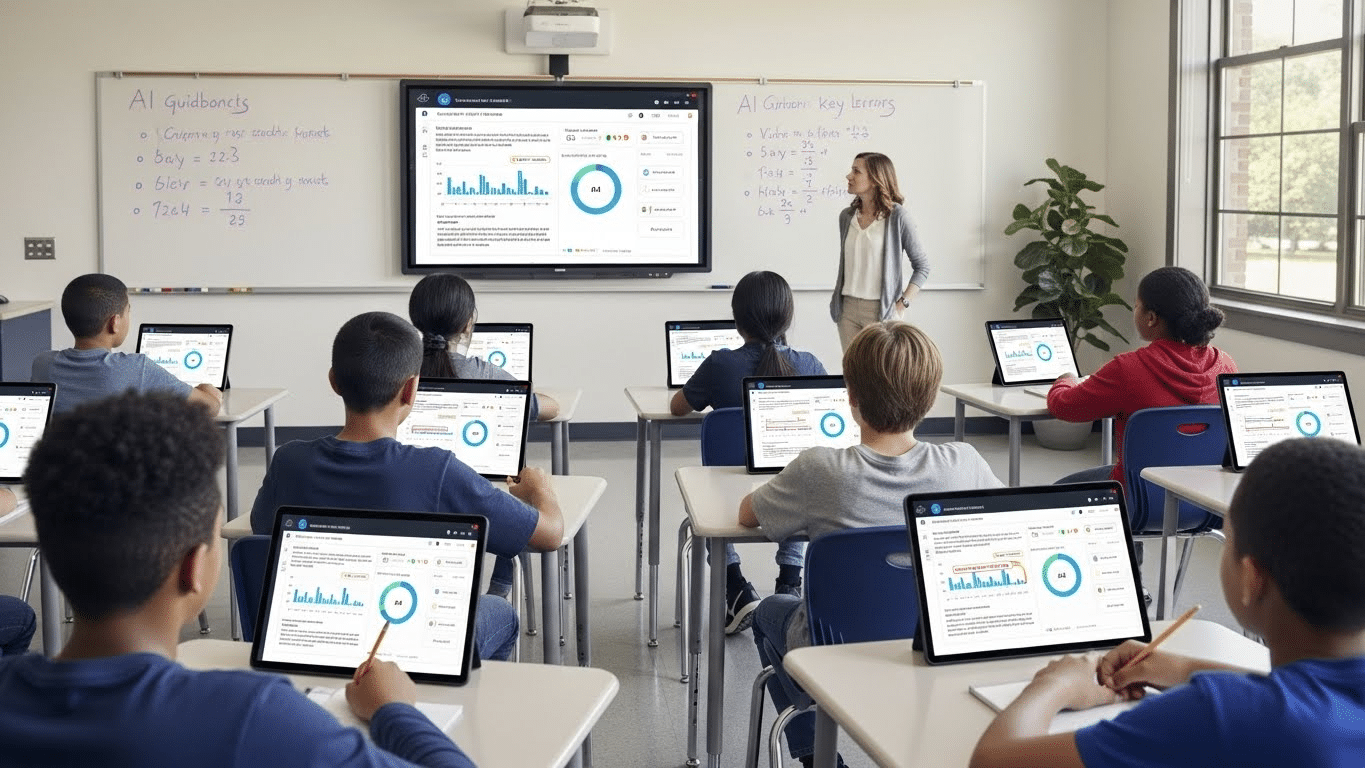

While real-time feedback helps individual students, AI tools also operate at a broader level. By analyzing performance across an entire classroom, AI can identify patterns that are difficult to see through manual review alone.

These systems look for trends in student responses, pacing, and accuracy. When many students struggle with the same concept, that signal becomes clear. When an individual begins to fall behind, that pattern surfaces early.

AI dashboards translate this data into actionable insights, giving educators a real-time view of student performance rather than a delayed summary.

This early visibility changes how support works. Instead of reacting after grades drop, teachers can intervene sooner, adjust materials, or refine teaching strategies based on real evidence. The result is proactive, data-driven support that helps at-risk students before small gaps grow into larger challenges.
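The two signals described in this section, concepts that many students miss and individuals who are falling behind, can be sketched as a simple aggregation over attempt records. Everything here is hypothetical: the `summarize` function, the `(student, concept, correct)` record shape, the sample data, and the 0.5 thresholds are assumptions for illustration, and a real dashboard would draw on far richer data.

```python
from collections import defaultdict


def summarize(attempts, concept_threshold=0.5, student_threshold=0.5):
    """Flag hard concepts and at-risk students from attempt records.

    `attempts` is an iterable of (student, concept, correct) tuples.
    Returns (hard_concepts, at_risk_students) as sorted lists.
    """
    by_concept = defaultdict(list)
    by_student = defaultdict(list)
    for student, concept, correct in attempts:
        by_concept[concept].append(correct)
        by_student[student].append(correct)

    def rate(results):
        return sum(results) / len(results)

    hard = sorted(c for c, r in by_concept.items()
                  if rate(r) < concept_threshold)
    at_risk = sorted(s for s, r in by_student.items()
                     if rate(r) < student_threshold)
    return hard, at_risk


if __name__ == "__main__":
    sample = [
        ("ana", "fractions", False), ("ana", "decimals", True),
        ("ana", "ratios", True),
        ("ben", "fractions", False), ("ben", "decimals", False),
        ("ben", "ratios", False),
        ("cho", "fractions", True), ("cho", "decimals", True),
        ("cho", "ratios", True),
    ]
    hard_concepts, at_risk_students = summarize(sample)
    print("Concepts to revisit:", hard_concepts)
    print("Students to check in with:", at_risk_students)
```

The output is a prompt for a teacher's judgment, not a verdict: a flagged name or concept signals where to look first.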

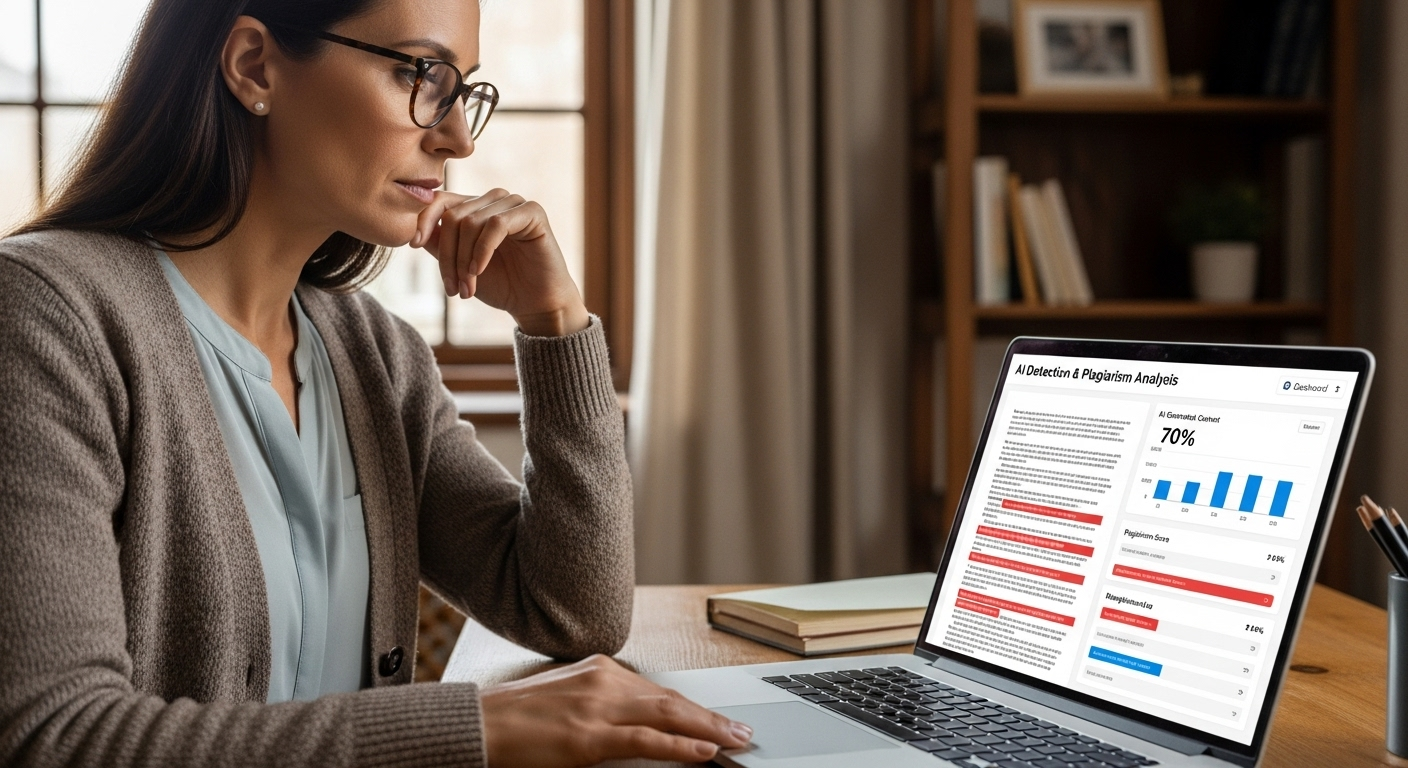

How Does AI Reduce Grading Workloads Without Lowering Feedback Quality?

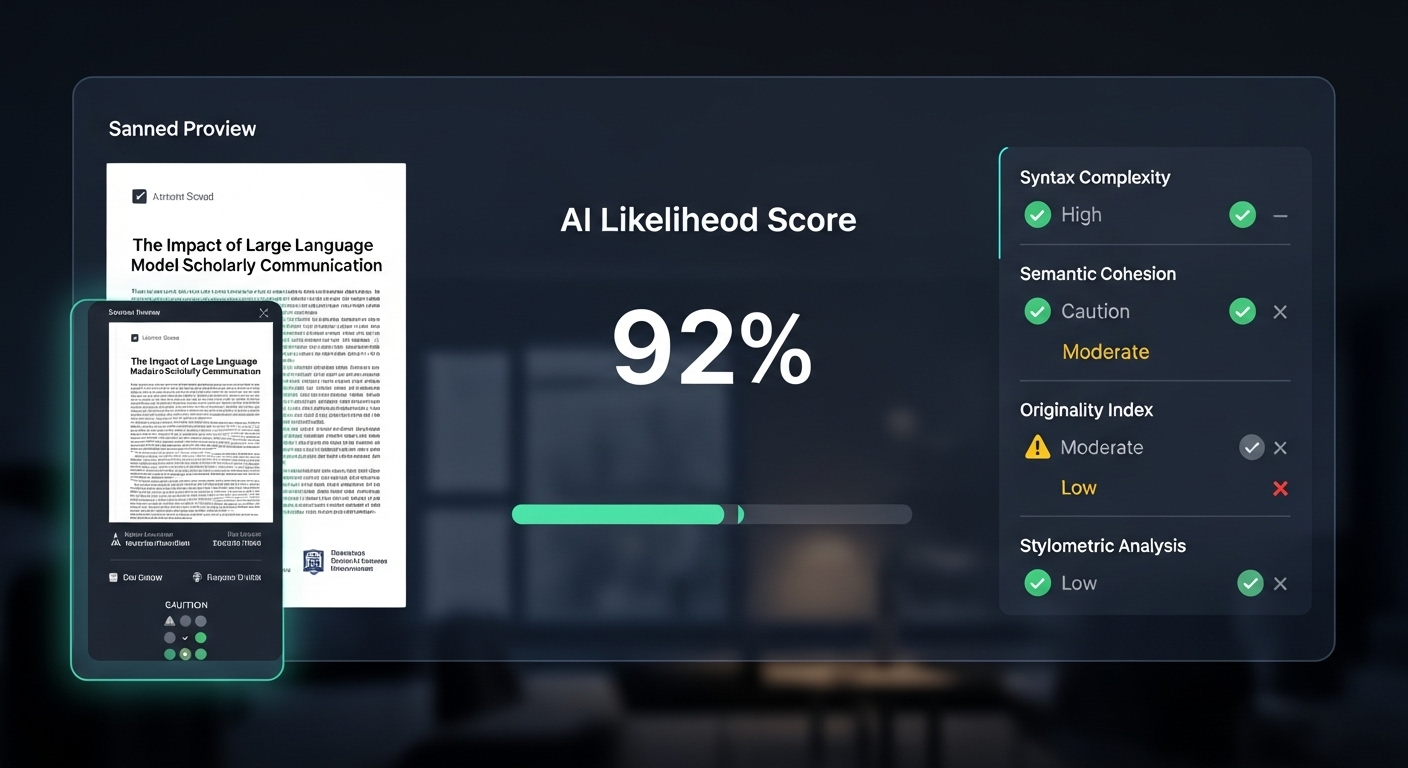

Grading has always carried a quiet tension: do it fast, or do it well. AI softens that tradeoff. By automating large parts of the grading process, AI-powered tools can reduce grading workloads by roughly 70%, which is not a small shift. It changes how time gets spent.

Consistency improves first. AI applies the same criteria every time, which reduces the subtle bias that can creep in when fatigue sets in. Accuracy improves too, especially in written work, where natural language processing helps catch issues in structure, clarity, and alignment with rubrics.

Less time spent on administrative tasks means more time for student support. And when educators are not rushing, feedback quality improves. Calm time tends to produce better thinking. That holds true here as well.

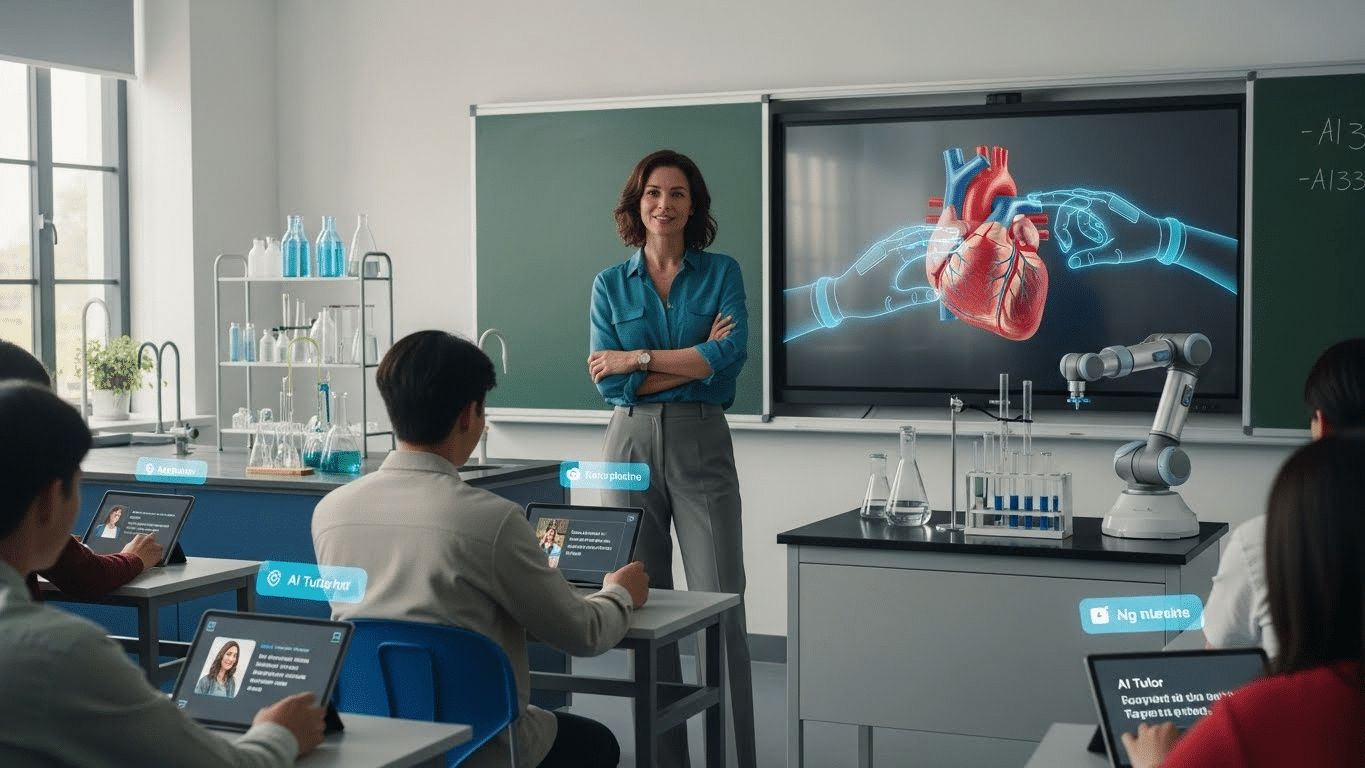

How Does AI Support Diverse Learners Across Different Educational Levels?

Learning does not look the same in every classroom, and AI reflects that reality. Today, AI is used across elementary schools, secondary education, and higher education, adapting its role as learners mature.

What makes this possible is flexibility. AI systems can adjust content to different learning styles, offering adaptive explanations, pacing, and formats. Visual learners see things differently. So do those who need repetition or a slower build.

At scale, AI can support large populations without flattening individuality. Personalized learning still exists, even in crowded classrooms.

Perhaps most importantly, feedback remains consistent. Regardless of class size or institution, students receive timely responses that reinforce understanding. That consistency helps learning experiences feel fair, predictable, and easier to trust.

What Ethical Safeguards Are Essential for AI-Generated Feedback?

Any system that touches student work carries responsibility. With AI-generated feedback, that responsibility grows sharper. Protecting student privacy is not optional. It is a significant concern that shapes every design choice.

Ethical systems begin with transparency. Clear AI policies help educators and students understand what the system does, and just as important, what it does not do. Bias audits matter too. They surface blind spots that training data alone cannot reveal. Diverse training data helps reduce systemic bias, but it is not enough on its own.

Human override must always remain available. Educator training is just as critical. AI works best when teachers understand how to guide it, question it, and step in when judgment, not automation, is required.

How Can Educators Integrate AI Feedback Without Losing the Human Element?

Integration works best when AI stays in its lane. AI augments human tutors; it does not replace them. That distinction matters. Emotional intelligence, nuance, and trust still live with people, not systems.

What AI does well is create space. By handling repetitive feedback and surface-level analysis, AI frees time for meaningful teacher-student interaction. Conversations deepen. Mentorship improves. Classrooms breathe a little easier.

Blended approaches tend to work best. AI provides steady, immediate guidance, while educators focus on context, motivation, and judgment. Together, they improve the classroom experience without making it feel automated. The technology fades into the background. The relationship stays front and center.

Why Does AI Support Teachers Instead of Replacing Them?

AI does not teach in isolation. It supports instructional decision-making by surfacing patterns, highlighting gaps, and offering timely signals. But authority remains with educators. Always.

Teachers still evaluate work, shape learning goals, and decide what matters. AI strengthens teaching practices by providing data insights that would otherwise take hours to assemble. It does not tell educators what to think. It gives them clearer information to think with.

Human judgment remains central to education because learning is not just technical. It is social, emotional, and contextual. AI can help manage complexity, but it does not replace wisdom.

How Can Apporto’s AI Solutions Enable Real-Time Feedback at Scale?

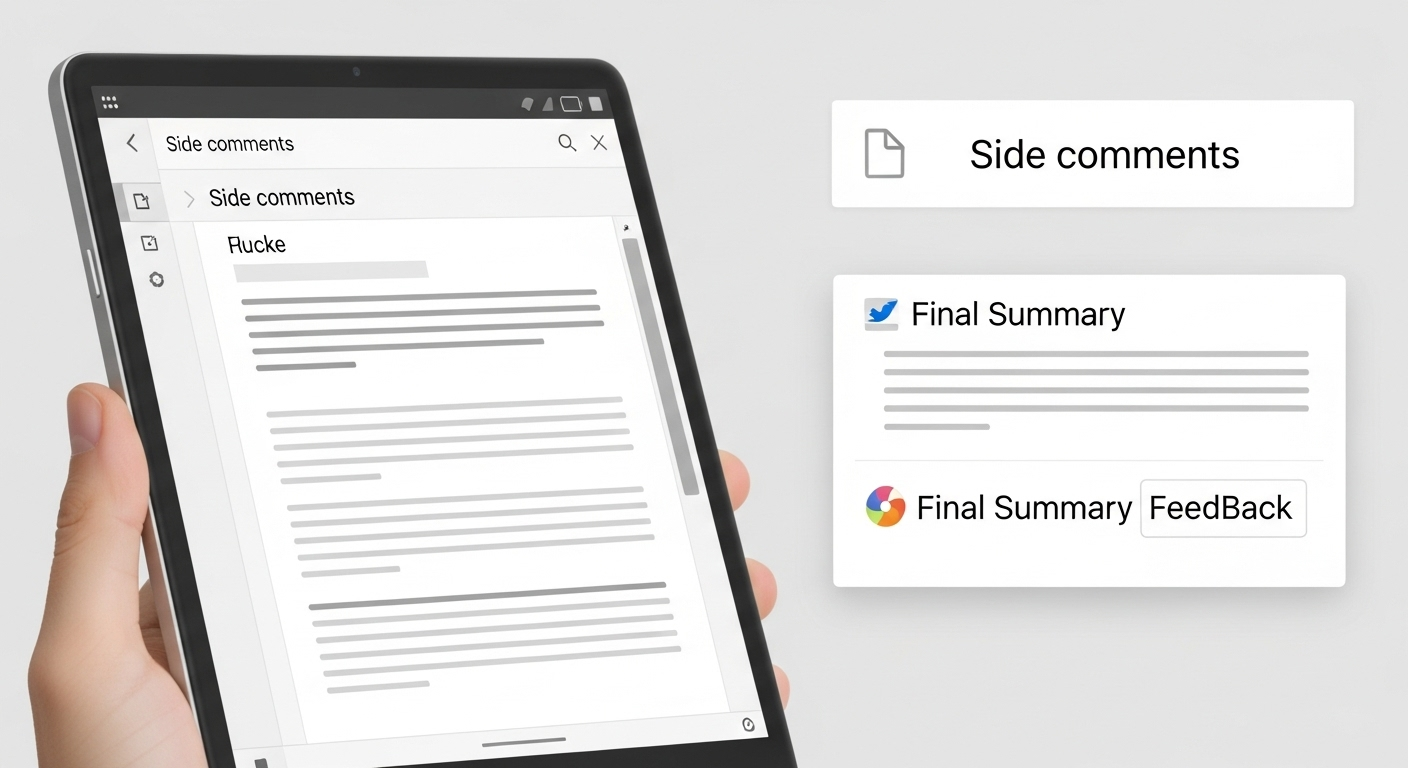

Real-time feedback only works if it can scale without losing trust. That’s where Apporto’s AI solutions fit. Tools like PowerGrader and CoTutor are designed around a simple idea: AI should assist educators, not take control away from them.

PowerGrader helps instructors deliver fast, consistent feedback on student work while keeping grading criteria firmly in human hands. CoTutor works alongside students, offering real-time, in-context guidance as they learn, without jumping straight to answers.

Both solutions surface patterns across cohorts, reduce workload without lowering rigor, and keep humans in the loop. Feedback stays timely, personal, and accountable.

That balance is what makes real-time feedback sustainable at scale.

Conclusion

The direction is clear. Feedback will keep getting faster, more accurate, and more personal. AI already helps educators respond in the moment, not after the fact. As these systems mature, real-time feedback will feel less like an intervention and more like a natural part of learning.

What matters most is how responsibly this integration happens. When AI is used thoughtfully, learning outcomes improve and teaching becomes more human, not less.

Frequently Asked Questions (FAQs)

1. How does AI provide real-time feedback to students during learning activities?

AI analyzes student responses as they are submitted and delivers guidance immediately, allowing learners to adjust their thinking while the task is still active and cognitively relevant.

2. Does real-time AI feedback actually improve learning outcomes?

Yes. Immediate feedback helps prevent misconceptions, supports faster correction of mistakes, and creates rapid learning cycles that lead to stronger understanding and long-term retention.

3. Can AI-generated feedback be personalized for individual students?

AI systems adapt feedback based on learning pace, prior knowledge, and response patterns, which allows students to receive targeted support instead of generic, one-size-fits-all comments.

4. How does AI help teachers manage large classes more effectively?

AI tools analyze patterns across classrooms, surface actionable insights, and reduce grading workloads, enabling educators to intervene earlier and focus more on student support.

5. Is AI feedback safe and ethical for educational use?

Responsible systems protect student privacy, use transparent policies, undergo bias audits, and include human override options to ensure feedback remains fair and accountable.

6. Does using AI for feedback replace teachers?

No. AI supports instructional decision-making and reduces administrative burden, but educators retain full authority over evaluation, teaching strategies, and human connection.

7. Can AI feedback work across different education levels?

Yes. AI is used from elementary schools through higher education, delivering consistent, timely feedback while adapting to diverse learners and institutional needs.