One of the clearest signs that higher education is moving into a more mature AI era is the decline of the old ban-or-allow policy debate. For a time, many institutions approached generative AI with a binary mindset. Ban it or allow it. Restrict it or embrace it. But that framing is proving far too simplistic for the realities campuses now face.

AI is not a single behavior. It is a category of capabilities being used in very different ways across writing, coding, research, design, feedback, tutoring, and study support. A policy model built around broad prohibition or broad permission cannot account for those differences. And when institutions rely on that kind of blunt framework, they push the real burden of interpretation onto faculty and students.

That is exactly what many leaders are now trying to fix.

Across higher education, students are encountering widely different expectations depending on the course, department, or instructor. In one class, AI-supported brainstorming may be encouraged. In another, similar use may be treated as misconduct. In one department, attribution may be expected but not clearly defined. In another, there may be no guidance at all. This inconsistency does more than create confusion. It weakens trust, increases enforcement challenges, and makes it harder for students to develop sound judgment.

The answer is not more restrictive language. It is better-structured guidance.

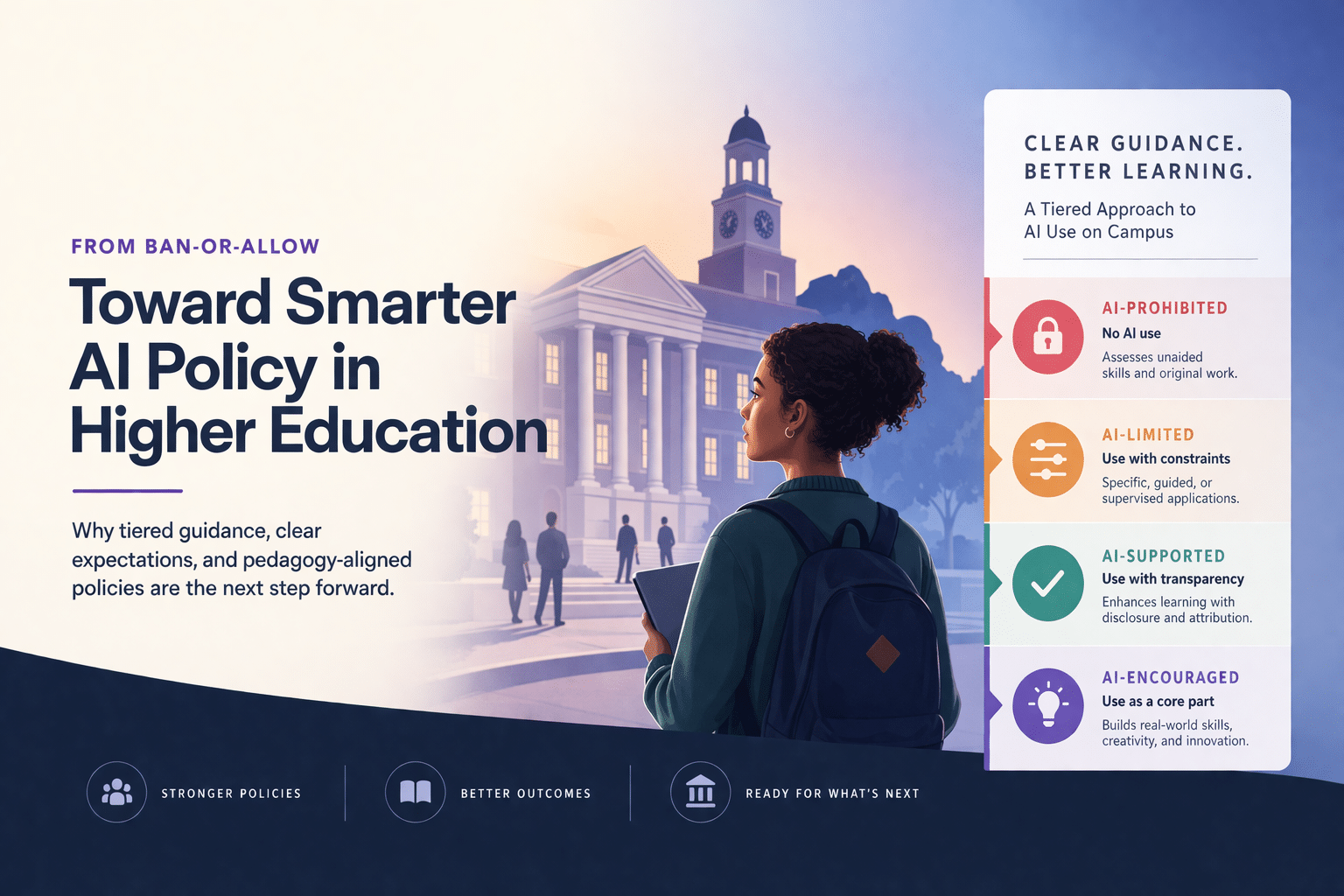

That is why tiered AI policy frameworks are becoming more important. Rather than trying to classify AI as simply allowed or prohibited, institutions can define categories such as AI-prohibited, AI-limited, AI-supported, and AI-encouraged. The point is not to create more bureaucracy. The point is to align AI use with learning objectives.

For example, if the goal of an assignment is to assess a student’s unaided writing fluency, AI-generated drafting may be inappropriate. If the goal is to evaluate editing, critique, or argument refinement, then guided AI support might be reasonable with disclosure. If the task is coding in a real-world workflow, use of AI may actually be part of the authentic skill being assessed. A tiered model gives institutions and faculty a practical way to reflect those differences. For leaders looking at how peers are navigating these questions at scale, the 2026 State of AI in Higher Education Leadership Survey offers a broader view of the policy, governance, and readiness challenges shaping the sector.

Policy becomes far more useful when it is connected to pedagogy.

Too many early AI policy conversations focused on enforcement before design. But durable policy only works when it reflects how learning is meant to happen. Faculty need support in translating broad institutional principles into assignment-level expectations. Students need examples that make the rules concrete. Advising, orientation, and teaching support teams need shared language that can be repeated consistently across the institution.

In other words, policy cannot stay at the level of abstract statements of principle.

This is a challenge many institutions are still working through. Leaders report that policies often exist as draft language, borrowed templates, or high-level values statements that have not yet been operationalized. That creates ambiguity at exactly the moment when clarity matters most. A campus may say it supports responsible AI use, but if students cannot tell what that means in a lab, seminar, take-home exam, or capstone, the policy has not done its job.

A more effective approach starts with three practical moves.

First, define a shared institution-wide vocabulary. Everyone should understand the differences among prohibited use, conditional use, and supported use. That vocabulary should appear not just in policy documents, but also in syllabus guidance, student resources, and faculty development materials.

Second, build discipline-specific interpretation on top of that shared language. AI expectations in computer science, business, nursing, and writing-intensive humanities courses will not be identical. That is fine. The goal is consistency in structure, not sameness in every rule.

Third, connect policy to real workflows. Faculty need model syllabus statements. Students need examples of disclosure and attribution. Academic integrity teams need procedures that reflect the realities of AI-related cases. Teaching and learning centers need resources that help instructors design assignments around the policy rather than bolt it on after the fact.

The institutions moving in this direction are not trying to eliminate judgment. They are trying to support it. They understand that responsible AI use is not just about restricting misuse. It is also about helping students learn how and when to use AI well.

That point matters because AI literacy is becoming a core institutional goal in its own right. If students are going to graduate into workplaces where AI is embedded in everyday tasks, then higher education cannot limit itself to warning them about AI. It has to teach them how to evaluate outputs, document use, recognize limitations, and apply judgment in context. Policy is part of that educational infrastructure.

The shift from ban-or-allow to tiered guidance is not a cosmetic change. It reflects a deeper evolution in how higher education understands AI. Institutions are beginning to recognize that the challenge is not just controlling a tool. It is creating coherent expectations across teaching, learning, and assessment.

That work is not simple. But it is far more sustainable than leaving every course to invent its own rules from scratch.

The campuses that get this right will not necessarily have the longest policy documents. They will have the clearest ones. Their policies will be understandable, actionable, and connected to actual learning design. And in an AI-enabled academic environment, that kind of clarity is quickly becoming one of the most valuable forms of institutional leadership. For a deeper look at how higher ed leaders across the market are approaching AI policy, readiness, and academic integrity, download the full leadership survey report.