Assessment has become one of the most powerful levers for raising student achievement. In many systems, high-stakes testing drives instructional priorities, curriculum pacing, and even classroom management decisions. The pressure is real. Scores matter. Accountability requirements shape the educational process in ways that are hard to ignore.

Yet authentic learning asks something different of students. It asks them to think, to apply, to solve problems grounded in real-world contexts. It asks for participation, not memorization. And that is where the tension begins.

If student learning now involves active inquiry, performance tasks, and meaningful projects, then traditional measures cannot fully capture it. High-stakes testing often measures recall under controlled conditions. Authentic learning unfolds in more complex environments. Measuring it requires tools that capture application, judgment, and transfer.

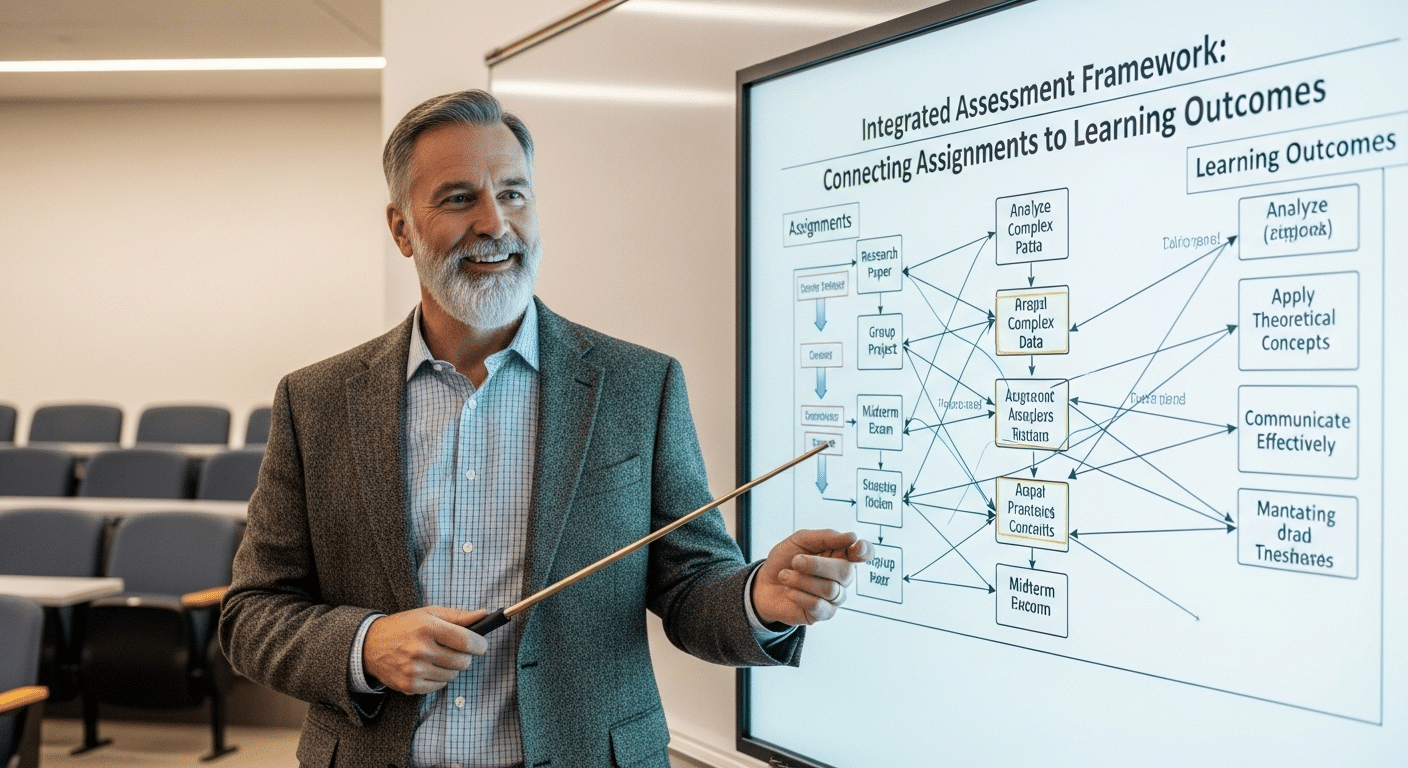

To understand how to assess authentic learning effectively, you must first recognize this complexity. What you measure shapes what students value. And what students value shapes how they learn.

What Does Authentic Learning Actually Require From Students?

Authentic learning asks more of students than simple recall. It places them inside real-world contexts, where problems are rarely tidy and answers are rarely singular.

Instead of repeating information, students confront situations that resemble professional practice, civic responsibility, or community challenges. The expectation changes. They must apply knowledge, not recite it.

In authentic learning environments, students construct understanding actively. They ask questions, test ideas, revise assumptions. The learning process becomes participatory rather than passive. Self-directed inquiry plays a central role.

Students follow lines of curiosity, gather evidence, and connect concepts across disciplines. Reflection is not an afterthought. It helps them examine what worked, what did not, and why.

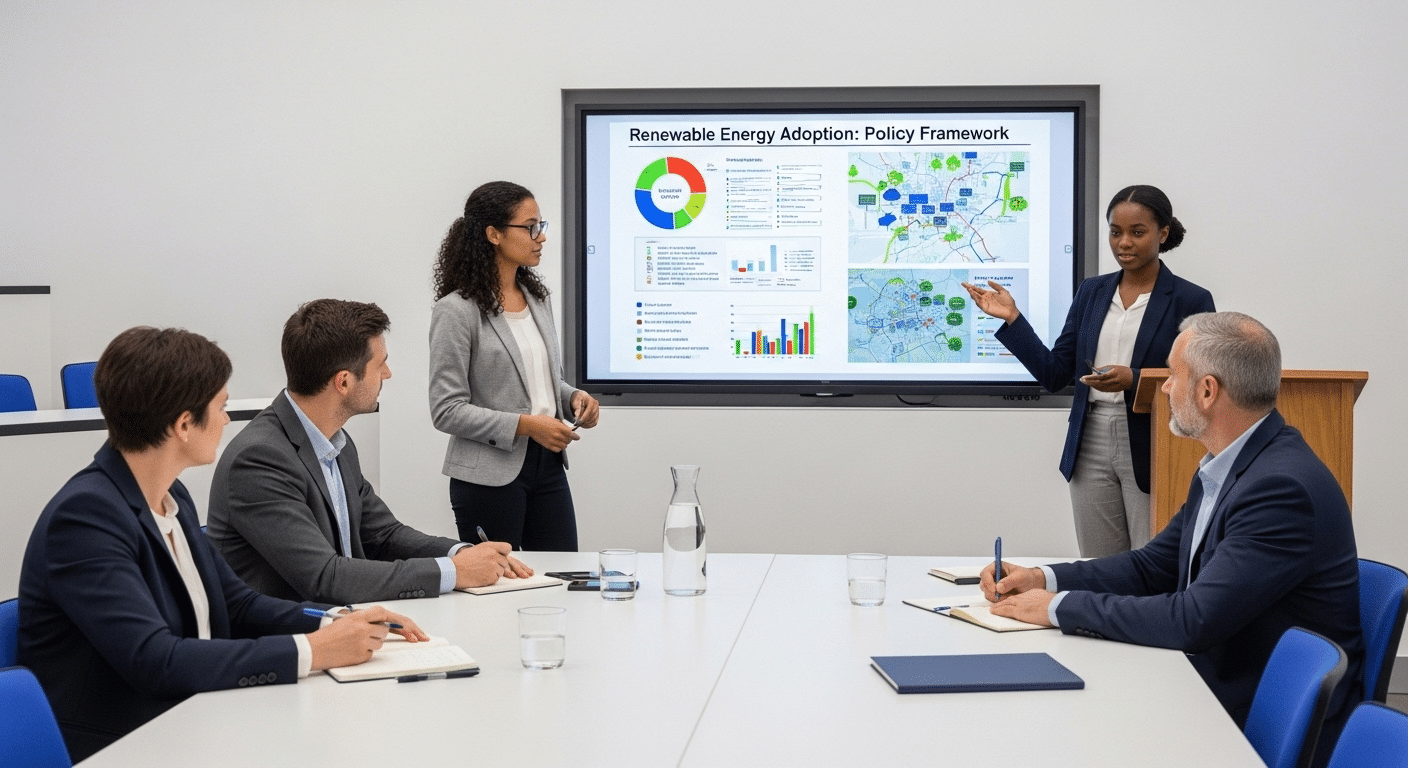

Tangible outcomes often emerge from this process. Students create prototypes, reports, presentations, or other useful products that extend beyond the classroom. These artifacts demonstrate higher-order thinking skills because they require analysis, synthesis, and judgment.

If authentic learning demands transfer across situations, then assessment must measure that transfer. It must capture how well students apply knowledge in unfamiliar conditions. Anything less reduces complexity to recall, and recall alone cannot represent authentic understanding.

What Does It Mean to Assess Authentic Learning, Not Just Activity?

Engagement alone is not evidence. A classroom can feel energetic, projects can look impressive, and students can appear deeply involved, yet assessment must still answer a harder question. What did they actually learn, and can they use it?

To assess authentic learning, you move beyond visible activity and examine transfer. Authentic assessment evaluates how well students apply knowledge and skills in situations that require judgment. It asks whether understanding travels, whether concepts hold when conditions change. This is different from checking completion or participation.

Alternative assessments provide a more comprehensive view of student achievement because they focus on performance rather than surface compliance. When you assess performance tasks, you are not merely observing effort. You are measuring how effectively students solve problems, justify decisions, and connect ideas across contexts.

Authentic learning assessment should measure:

- Application of knowledge in complex tasks

- Transfer across contexts, not isolated recall

- Decision-making under constraints and uncertainty

- Demonstrated proficiency aligned to clear criteria

- Metacognitive awareness, including reflection on strengths and gaps

Assessment tools must therefore capture evidence, not enthusiasm. When you assess authentic learning carefully, you align evaluation with what matters most: meaningful understanding that extends beyond the immediate assignment.

Which Assessment Strategies Capture Authentic Learning Most Effectively?

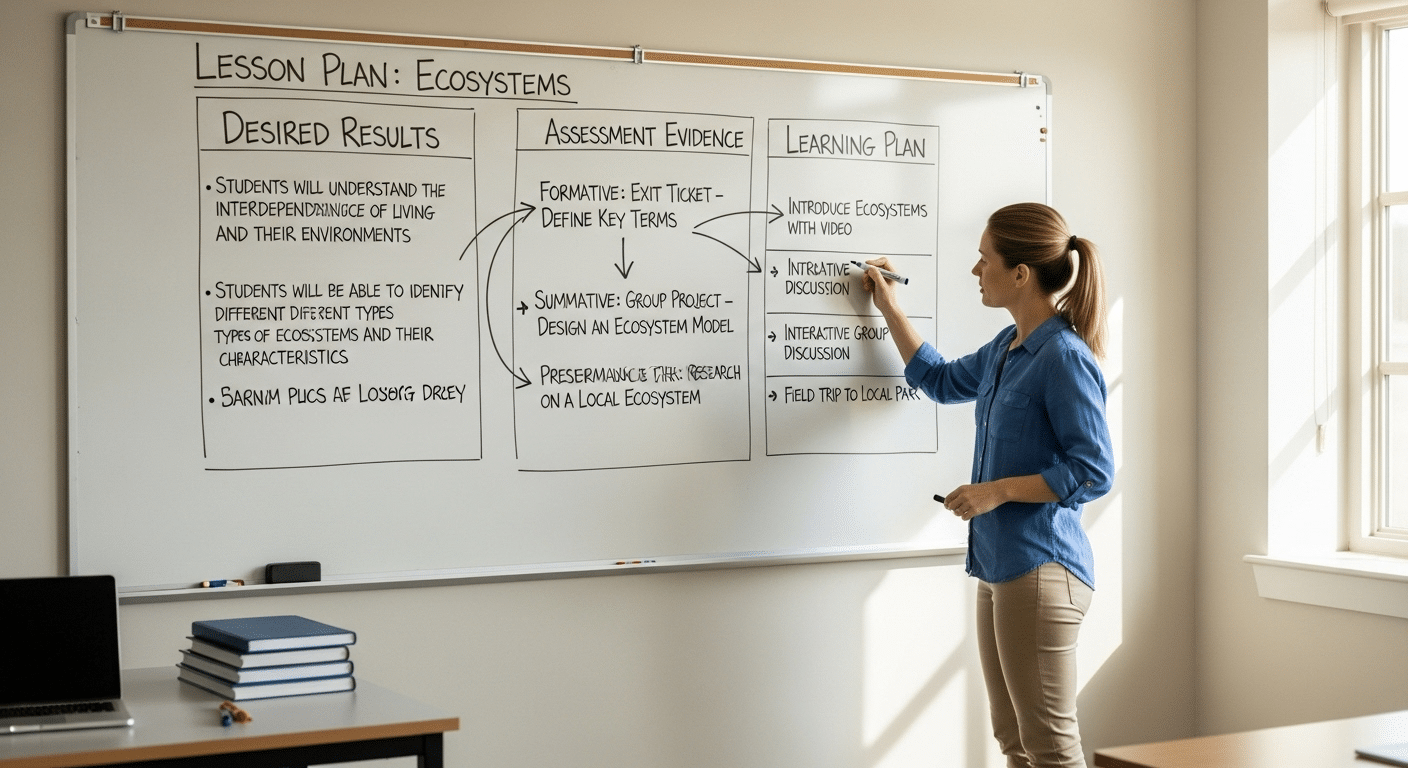

If authentic learning requires application and transfer, then your assessment strategies must make those demands visible. The goal is not to add novelty to the classroom. The goal is to gather credible evidence of understanding through performance assessment. That requires intentional design.

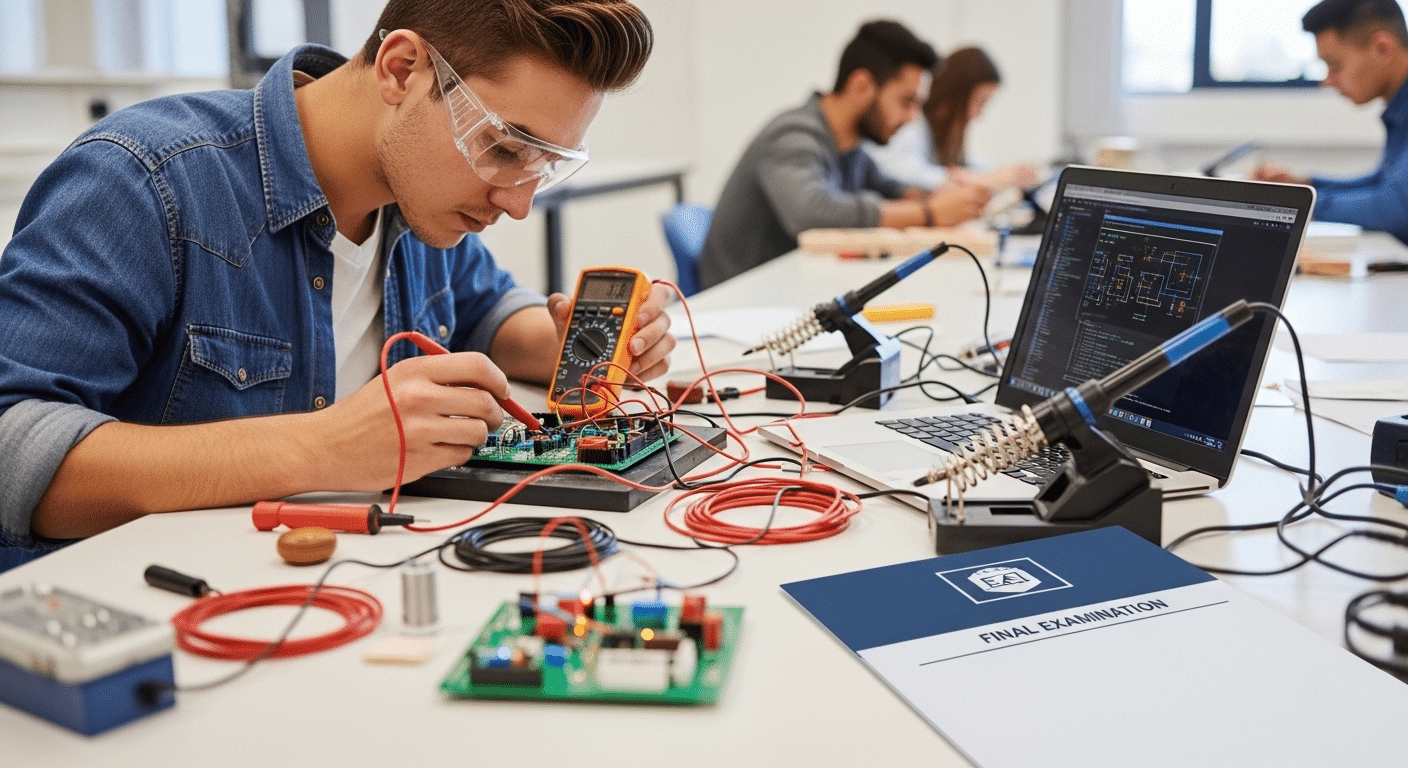

Performance tasks are foundational. When students conduct experiments, draft policy proposals, participate in debates, or design solutions to community problems, you see how well they integrate knowledge and skills. These complex tasks demonstrate understanding in action. Project-Based Learning extends this further. Extended projects encourage critical thinking, sustained inquiry, and revision over time.

Simulations and role-playing add another layer. By replicating real constraints, such as budget limits or competing priorities, they test decision-making under pressure. Case studies require analysis of genuine situations, pushing students to weigh evidence and propose reasoned solutions. Student portfolios then track growth across multiple attempts, making development visible rather than assumed.

Some strategies include:

- Performance tasks with clear criteria to assess proficiency

- Project-based assessments that unfold over time

- Case studies grounded in real scenarios

- Simulations and role-play that introduce constraints

- Student portfolios documenting progress and revision

- Learning logs that promote reflection and metacognitive insight

Together, these assessment tools create a balanced approach. They allow you to assess authentic learning with depth, while still maintaining structure and clarity.

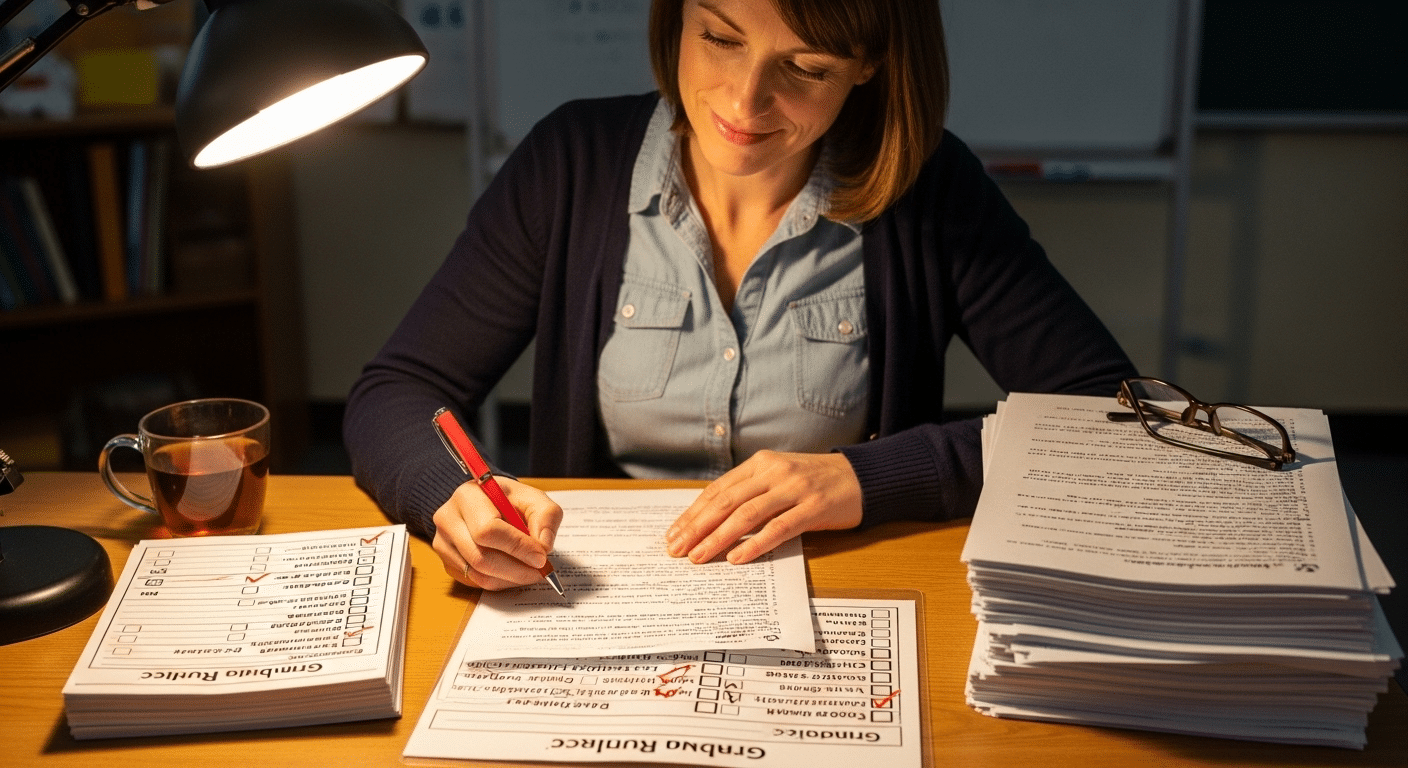

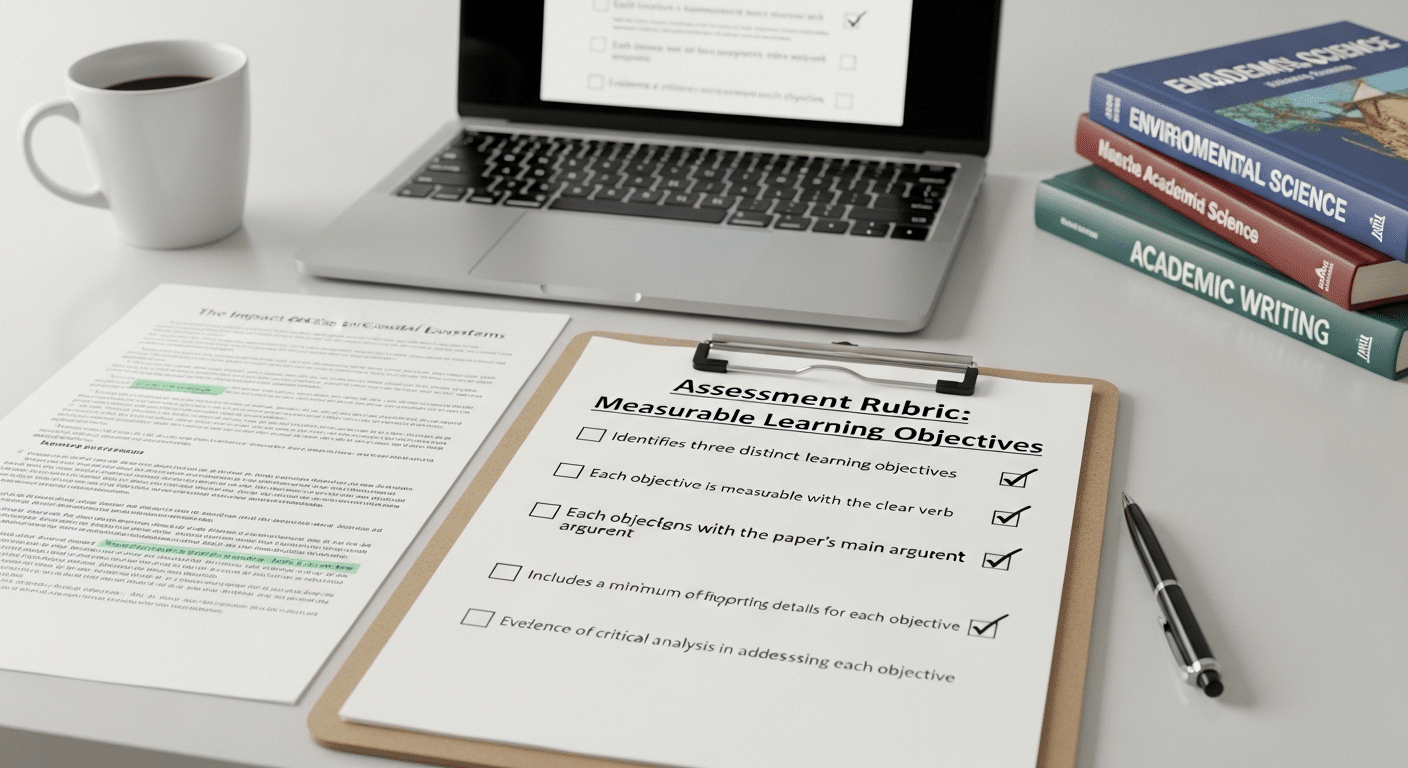

How Do Rubrics, Checklists, and Clear Criteria Strengthen Authentic Assessment?

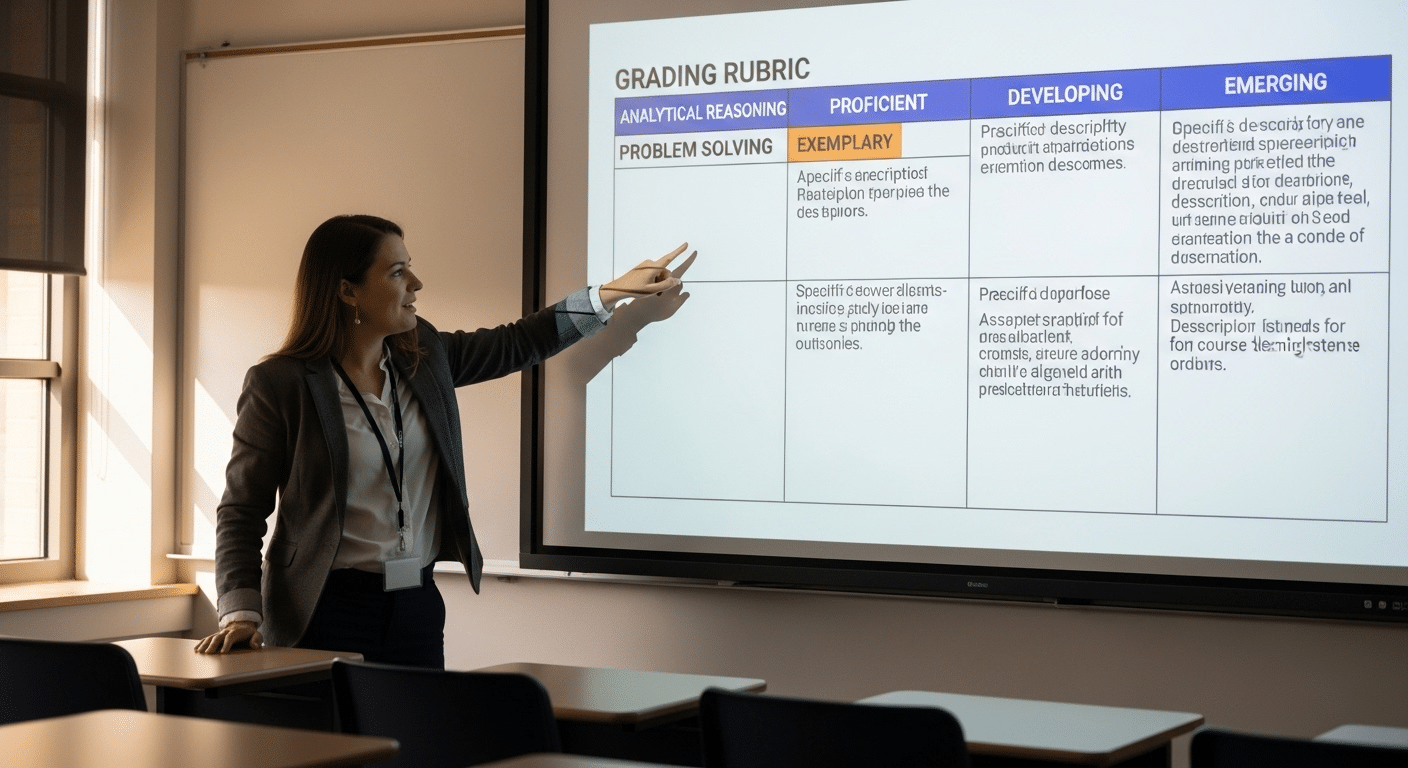

Authentic assessment asks students to complete complex tasks, but complexity without structure can create confusion. If expectations are unclear, scoring becomes inconsistent. Fairness begins to erode. That is where rubrics, checklists, and clear criteria become essential assessment tools.

When you construct rubrics carefully, you measure performance across multiple criteria rather than relying on a single overall impression. Quality, reasoning, accuracy, communication, and application can each be evaluated explicitly.

This produces valid and reliable data, especially when tasks are open-ended. Clear grading criteria provided in advance also increase transparency. Students understand what proficiency looks like before they begin, which reduces uncertainty and strengthens student confidence.
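As a rough illustration of scoring across multiple criteria, the Python sketch below turns per-criterion rubric levels into one weighted score. The five criteria are the ones named above. The weights, the four-level scale, and the names `RUBRIC_WEIGHTS`, `MAX_LEVEL`, and `score_submission` are assumptions made for this example, not a prescribed scheme.

```python
from __future__ import annotations

# Hypothetical weights (percentages that sum to 100), chosen only for illustration.
RUBRIC_WEIGHTS = {
    "quality": 20,
    "reasoning": 25,
    "accuracy": 20,
    "communication": 15,
    "application": 20,
}

# Assumed scale: four performance levels, 1 (beginning) to 4 (advanced).
MAX_LEVEL = 4


def score_submission(levels: dict[str, int]) -> float:
    """Return a weighted score from 0 to 100 for one piece of student work."""
    missing = set(RUBRIC_WEIGHTS) - set(levels)
    if missing:
        raise ValueError(f"Unscored criteria: {sorted(missing)}")
    extra = set(levels) - set(RUBRIC_WEIGHTS)
    if extra:
        raise ValueError(f"Unknown criteria: {sorted(extra)}")
    for criterion, level in levels.items():
        if not 1 <= level <= MAX_LEVEL:
            raise ValueError(f"{criterion}: level must be 1 to {MAX_LEVEL}")
    # Each criterion contributes its weight scaled by the level achieved.
    return sum(RUBRIC_WEIGHTS[c] * levels[c] for c in RUBRIC_WEIGHTS) / MAX_LEVEL


example = {
    "quality": 3,
    "reasoning": 4,
    "accuracy": 3,
    "communication": 2,
    "application": 4,
}
print(score_submission(example))  # 82.5
```

Keeping the per-criterion levels alongside the total, rather than recording only the final number, preserves the detail that a single overall impression would hide.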

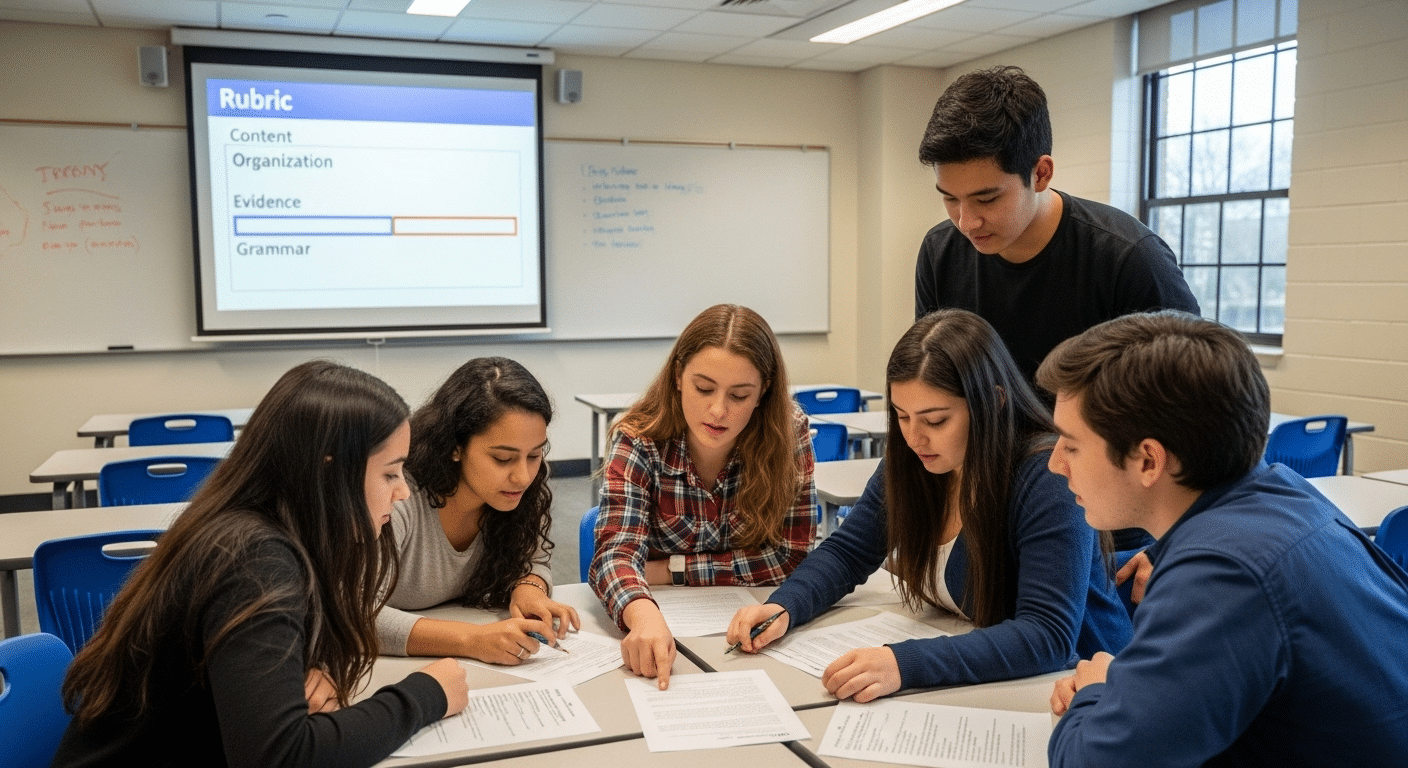

Checklists serve a different but equally important function. They guide students during the learning process. Instead of guessing what matters, students can monitor their own progress against visible standards. This supports balanced assessment, where evaluation and instruction work together rather than against each other.

Effective tools should:

- Define performance levels clearly and consistently

- Align with mandated academic standards

- Provide diagnostic feedback that informs next steps

- Support differentiated instruction for diverse learners

Reliable scoring is not accidental. It is designed. When criteria are explicit and aligned to standards, authentic assessment becomes both rigorous and fair.

How Does Differentiated Assessment Support Diverse Learners?

Authentic learning assumes diversity. Students arrive with different readiness levels, interests, cultural backgrounds, and prior knowledge. A single pathway rarely serves all of them well. Differentiated instruction recognizes this reality and tailors learning experiences to match varied strengths and needs.

Assessment must follow the same logic. When you build differentiation into assessment design, you create multiple ways for students to demonstrate understanding.

Performance tasks can vary in format. Reflection prompts can invite different perspectives. Alternative assessments broaden the range of evidence you collect, which improves learning because students are not confined to one narrow expression of competence.

Formative assessments play a central role here. They help you target students' learning needs in real time. Instead of waiting for a final evaluation, you adjust support, pacing, and feedback as patterns emerge.

Struggling students benefit from scaffolding and clearer milestones. Advanced learners benefit from deeper challenges and expanded criteria.

A balanced assessment system combines structure with flexibility. It maintains high expectations while allowing for varied routes toward mastery. Equity does not mean lowering standards. It means ensuring that each student has a fair opportunity to meet them. Differentiated assessment makes authentic learning accessible without reducing its rigor.

Why Are Formative and Iterative Processes Essential for Authentic Learning?

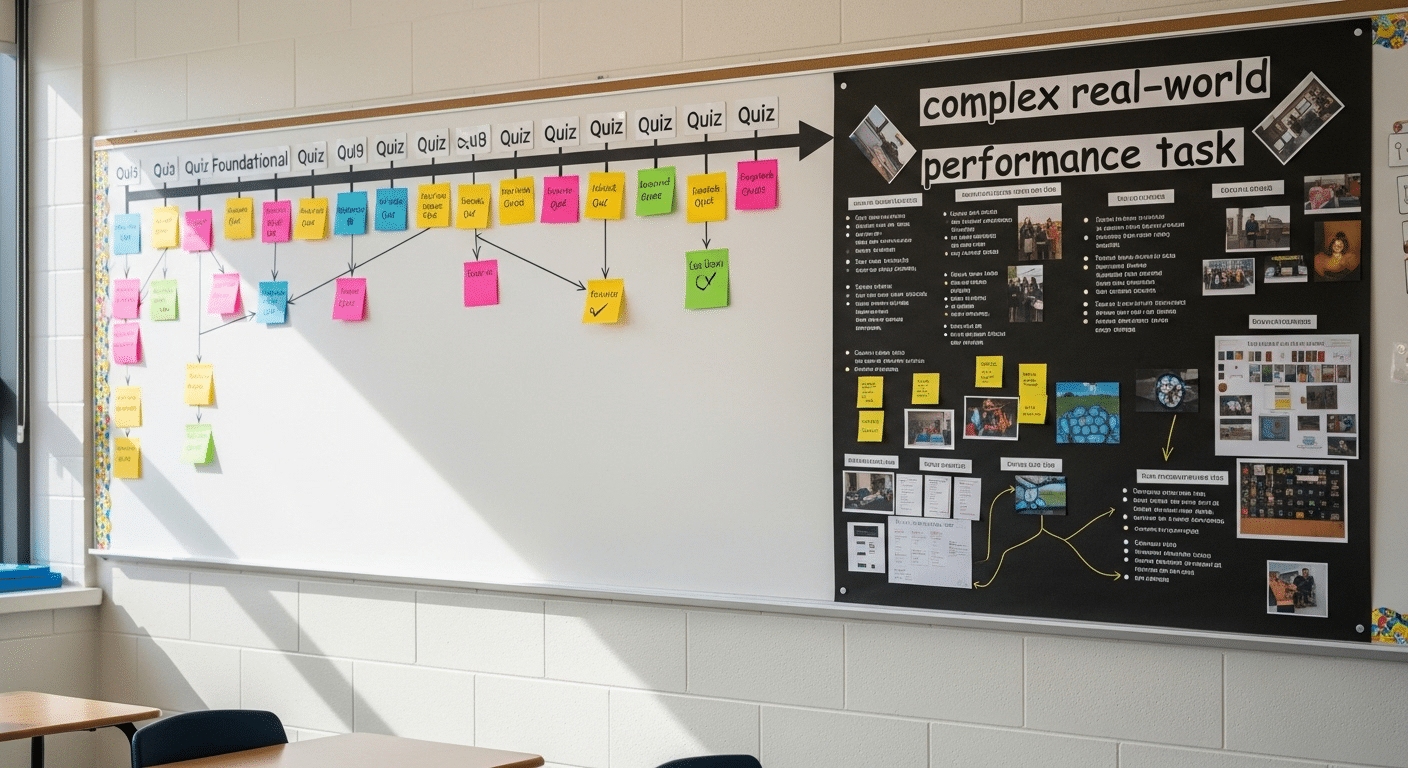

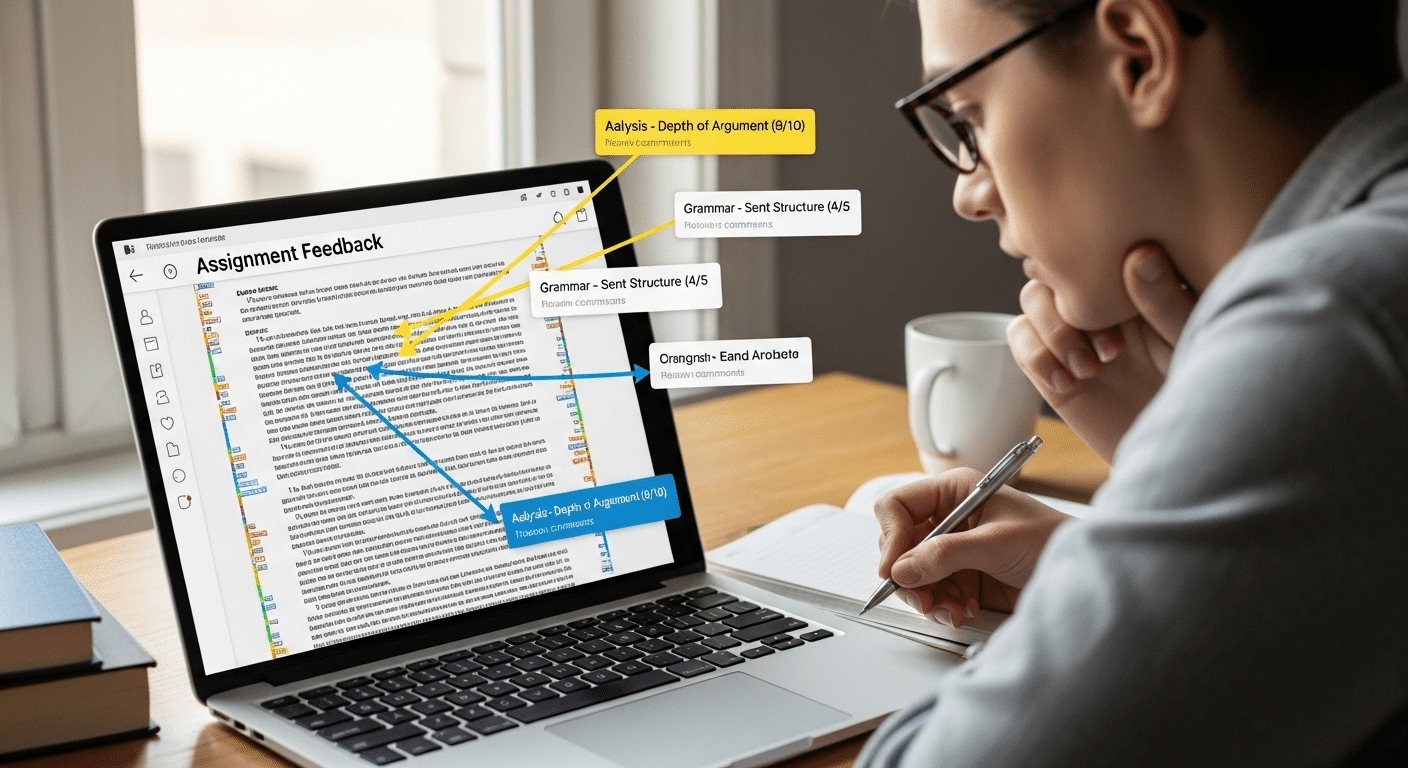

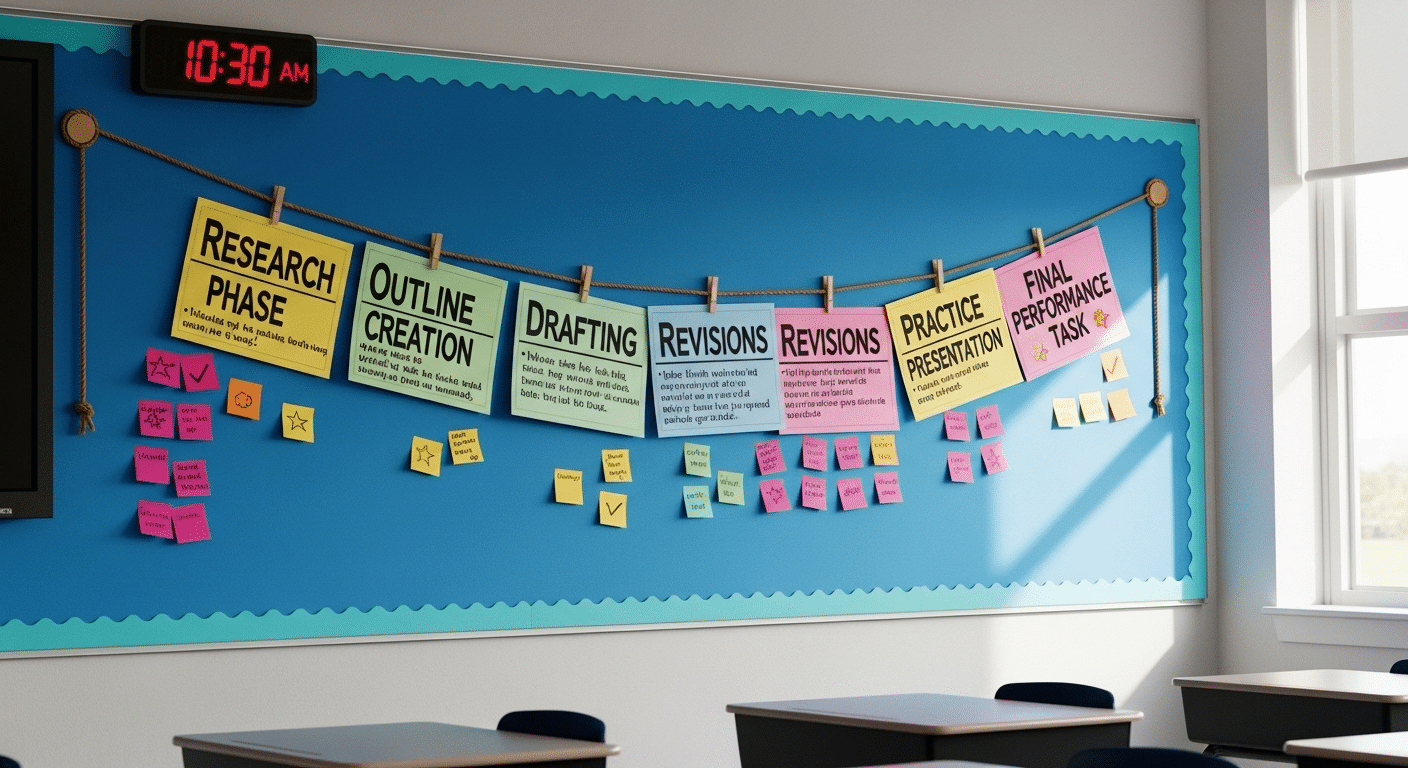

Authentic learning rarely unfolds in a single attempt. Complex tasks demand time, revision, and reflection. If assessment captures only the final product, it misses the intellectual work that led there. That is why formative assessment and iterative design are essential.

Scaffolding breaks complex tasks into manageable milestones. Instead of asking students to produce a finished project all at once, you structure checkpoints that clarify expectations and reduce cognitive overload.

At each stage, formative feedback loops help refine performances. Students adjust their reasoning, improve their evidence, and strengthen their conclusions before the final submission.

Reflection deepens this process. When students examine their own decisions, they strengthen metacognition: an awareness of how they learn and why certain strategies succeed.

Learning logs make this visible. When you assess learning logs alongside performance tasks, you capture growth in thinking, not just outcomes.

Iterative assessment includes:

- Milestone deadlines that structure progress

- Feedback cycles that guide improvement

- Revision opportunities before final evaluation

- Reflection journals that document learning decisions

This approach reinforces that authentic assessment is developmental. It supports improvement over time, not just judgment at the end.

How Do Portfolios and Performance Assessment Portfolios Provide Deeper Evidence?

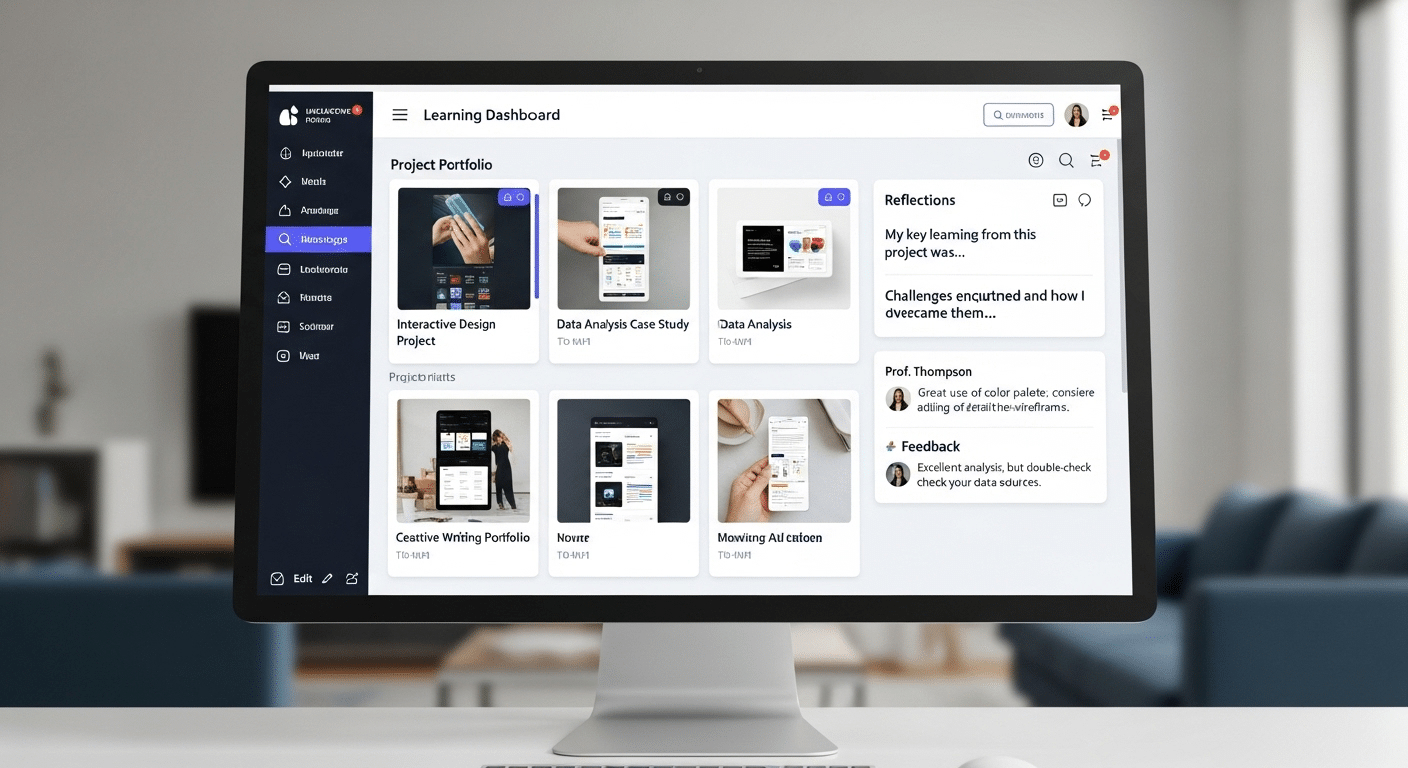

Single assessments capture moments. Portfolios capture movement. When you examine portfolio development over time, you begin to see patterns of growth, revision, and refinement that isolated tasks cannot reveal. This longitudinal evidence changes how you interpret student learning.

Performance assessment portfolios gather direct evidence across multiple tasks and contexts. Early drafts sit beside later revisions. Reflections accompany completed projects. Feedback and response become part of the record.

Instead of asking whether a student performed well once, you ask how their understanding evolved. That difference matters.

Portfolios also influence motivation. When students see their improvement documented clearly, their self-efficacy increases. Growth becomes visible rather than assumed.

Confidence builds because progress is tangible. Student achievement is no longer defined only by a single score but by demonstrated development across time.

For educators, performance assessment portfolios strengthen evaluation decisions. You can compare work against standards more accurately when you see consistency, revision, and increasing sophistication. Direct evidence accumulates. It becomes difficult to reduce learning to a narrow metric.

In this way, portfolios transform assessment from snapshot to narrative. They honor process while still holding students accountable for quality and rigor.

What Challenges Do Educators Face When Assessing Authentic Learning?

Authentic learning promises depth, but depth requires effort. When you design classroom assessments that ask students to solve complex problems or create meaningful products, you also increase the demands on yourself. Time becomes a real constraint. Planning performance tasks, developing rubrics, and reviewing detailed work requires sustained attention.

High-stakes testing pressures add another layer. Accountability requirements often prioritize measurable outcomes tied to mandated standards. You must ensure that authentic assessment aligns clearly with those expectations. Without alignment, authentic work can be dismissed as enrichment rather than evidence.

Reliable scoring is another challenge. Open-ended tasks introduce variability. Maintaining fairness across students requires careful calibration and transparent criteria. The assessment process becomes more sophisticated, and educational leadership must support that complexity rather than oversimplify it.

Common challenges include:

- Designing clear criteria that translate complex tasks into measurable standards

- Managing grading complexity across large groups

- Aligning assessments with mandated standards and accountability demands

- Maintaining consistency in scoring across multiple evaluators

These challenges are not reasons to abandon authentic assessment. They are signals that structure and intentional design are necessary.
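One way to check scoring consistency across evaluators is to have two of them score the same set of work independently and then compare the results. The Python sketch below computes simple percent agreement and Cohen's kappa, a standard statistic that adjusts agreement for chance. Neither measure is prescribed by this article, and the function names and sample scores are hypothetical.

```python
from collections import Counter


def percent_agreement(rater_a, rater_b):
    """Share of submissions on which both raters assigned the same level."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of work")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)


def cohens_kappa(rater_a, rater_b):
    """Agreement between two raters, adjusted for agreement expected by chance."""
    n = len(rater_a)
    observed = percent_agreement(rater_a, rater_b)
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    # Chance agreement: probability both raters pick the same level at random,
    # given how often each rater used each level.
    expected = sum(counts_a[level] * counts_b[level] for level in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical level for every submission
    return (observed - expected) / (1 - expected)


# Hypothetical rubric levels (1 to 4) given by two evaluators to eight submissions.
scores_a = [3, 4, 2, 3, 1, 4, 3, 2]
scores_b = [3, 4, 2, 2, 1, 4, 3, 3]

print(percent_agreement(scores_a, scores_b))       # 0.75
print(round(cohens_kappa(scores_a, scores_b), 2))  # 0.65
```

A low value could be a signal to revisit the rubric wording or hold a calibration session before the scores inform any decision.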

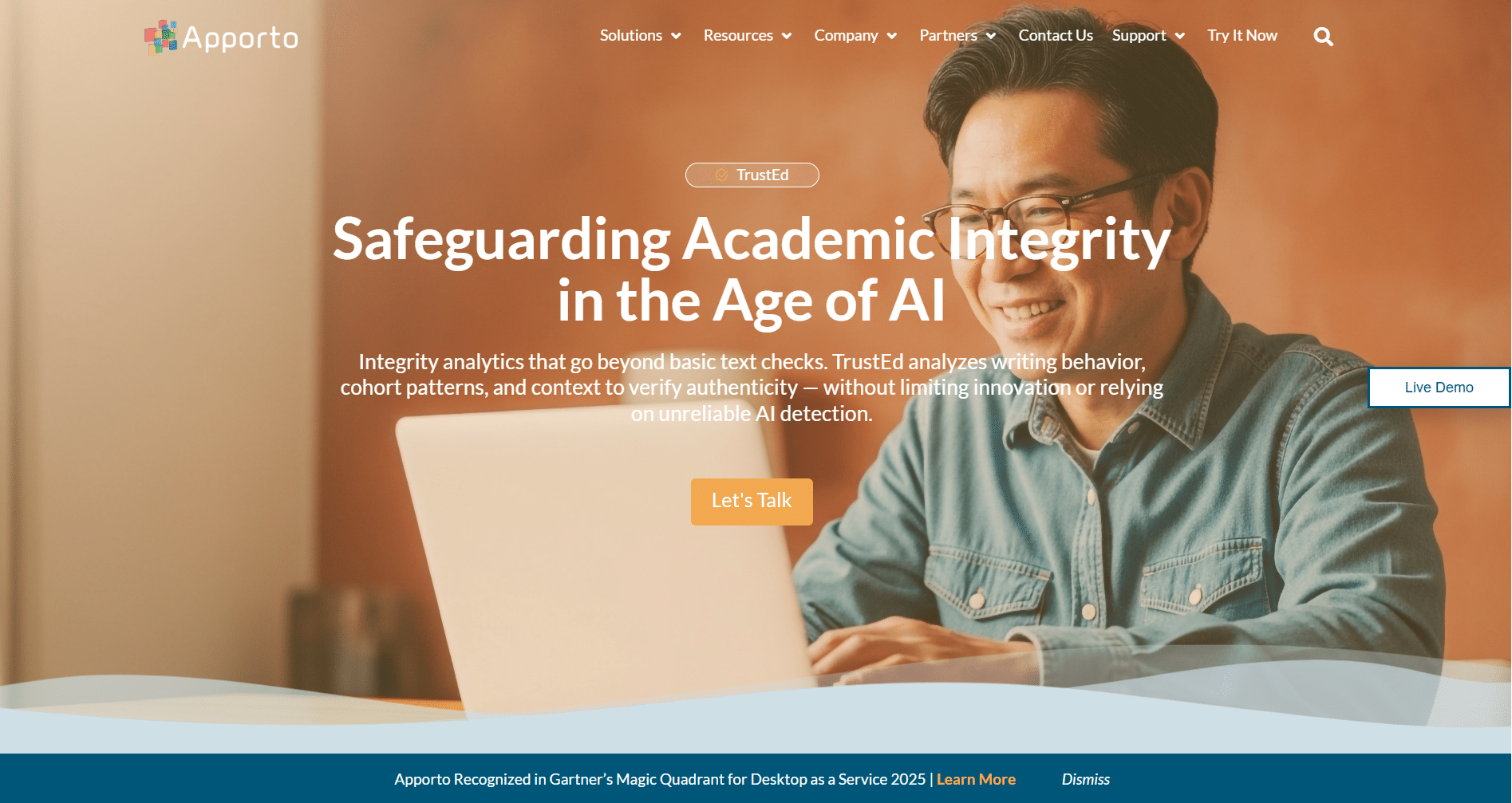

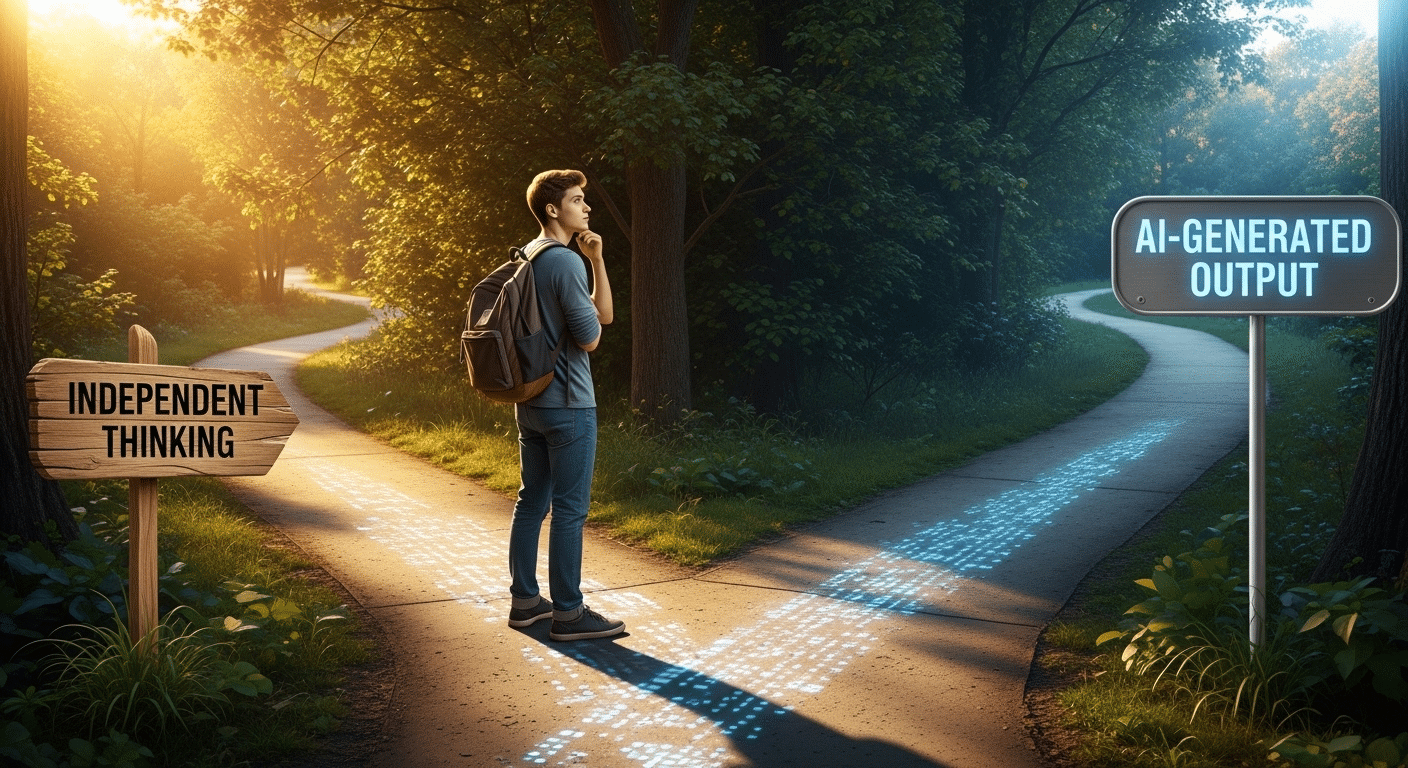

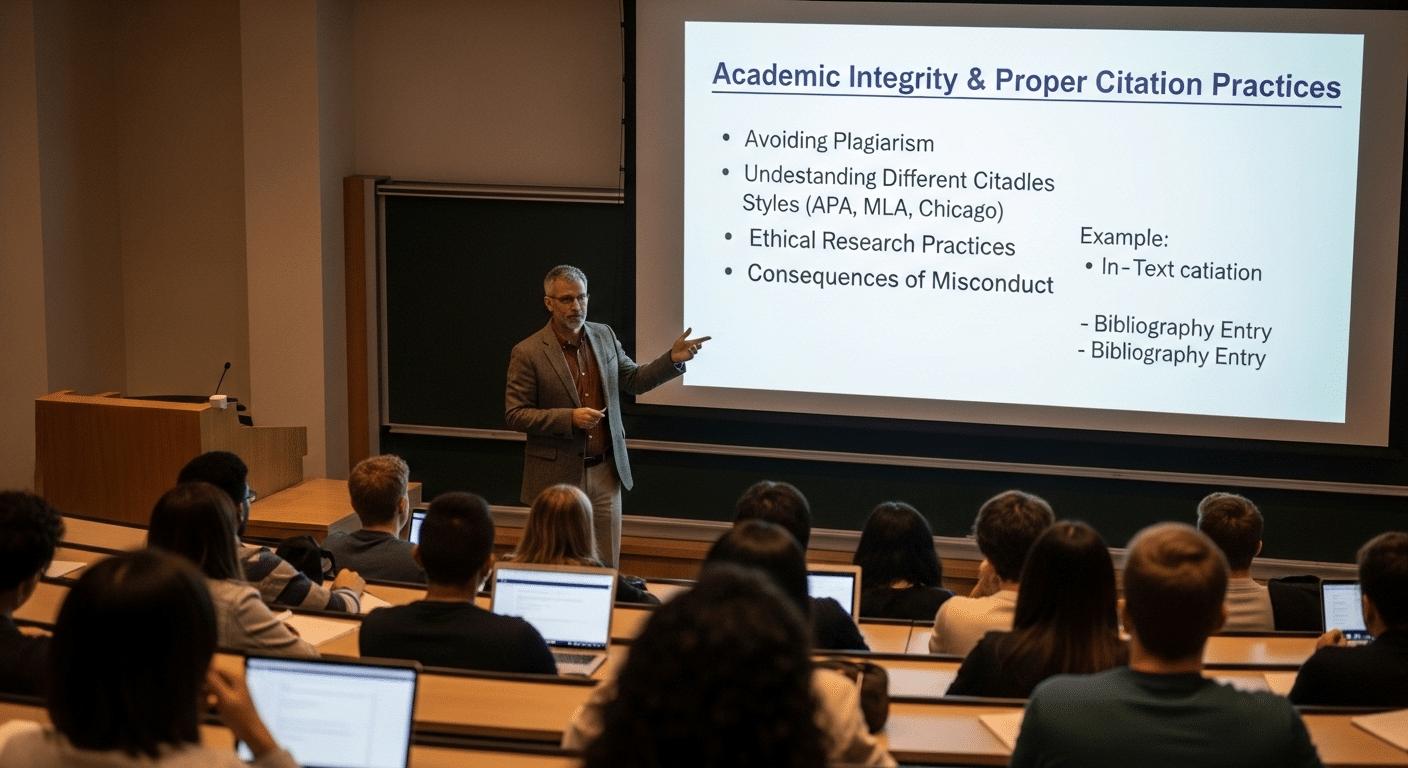

How Does Academic Integrity Complicate the Assessment of Authentic Learning?

Authentic learning depends on authentic performance. When students engage in tasks set in real-world contexts, the value lies in their reasoning, their decision-making, and their ability to apply knowledge independently. Assessment assumes that the student work submitted reflects that effort.

The rise of generative AI complicates this assumption. Essays, research papers, and even complex projects can now be produced by automated systems. On the surface, the work may appear polished and coherent.

Yet polished output does not guarantee demonstrated proficiency. If the intellectual labor did not originate with the student, the assessment no longer measures learning. It measures access to tools.

This challenge does not invalidate authentic assessment. It raises the stakes. When you assess authentic learning, you must also verify authenticity. Otherwise, conclusions about student achievement become unstable. Ensuring authentic performance preserves validity in the assessment process. It protects fairness for students who complete their work independently. It also protects the credibility of the educational process itself.

In an era where tools can generate convincing artifacts, evidence must extend beyond appearance. Authentic learning requires evidence that the student truly understands, applies, and reflects, not merely submits.

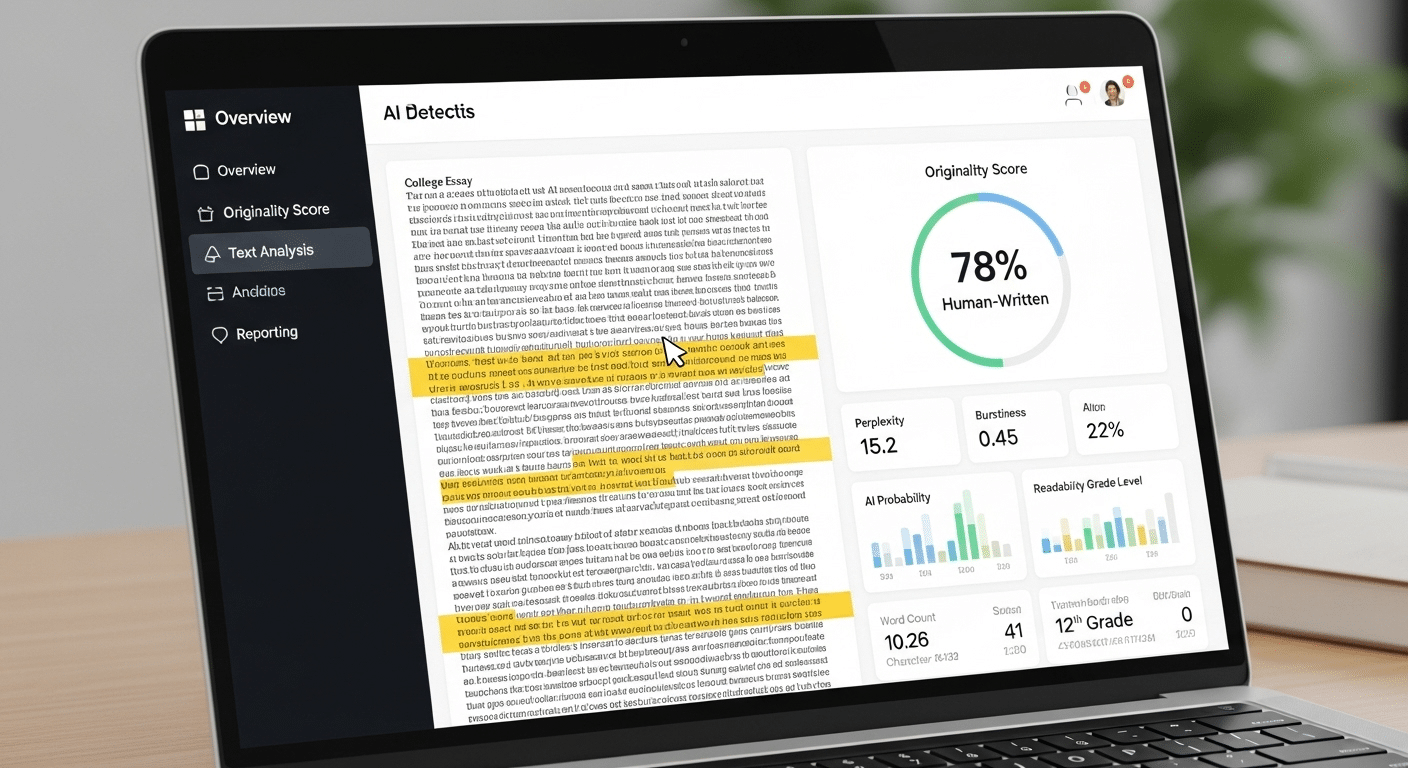

How Can TrustEd Protect the Validity of Authentic Learning Assessment?

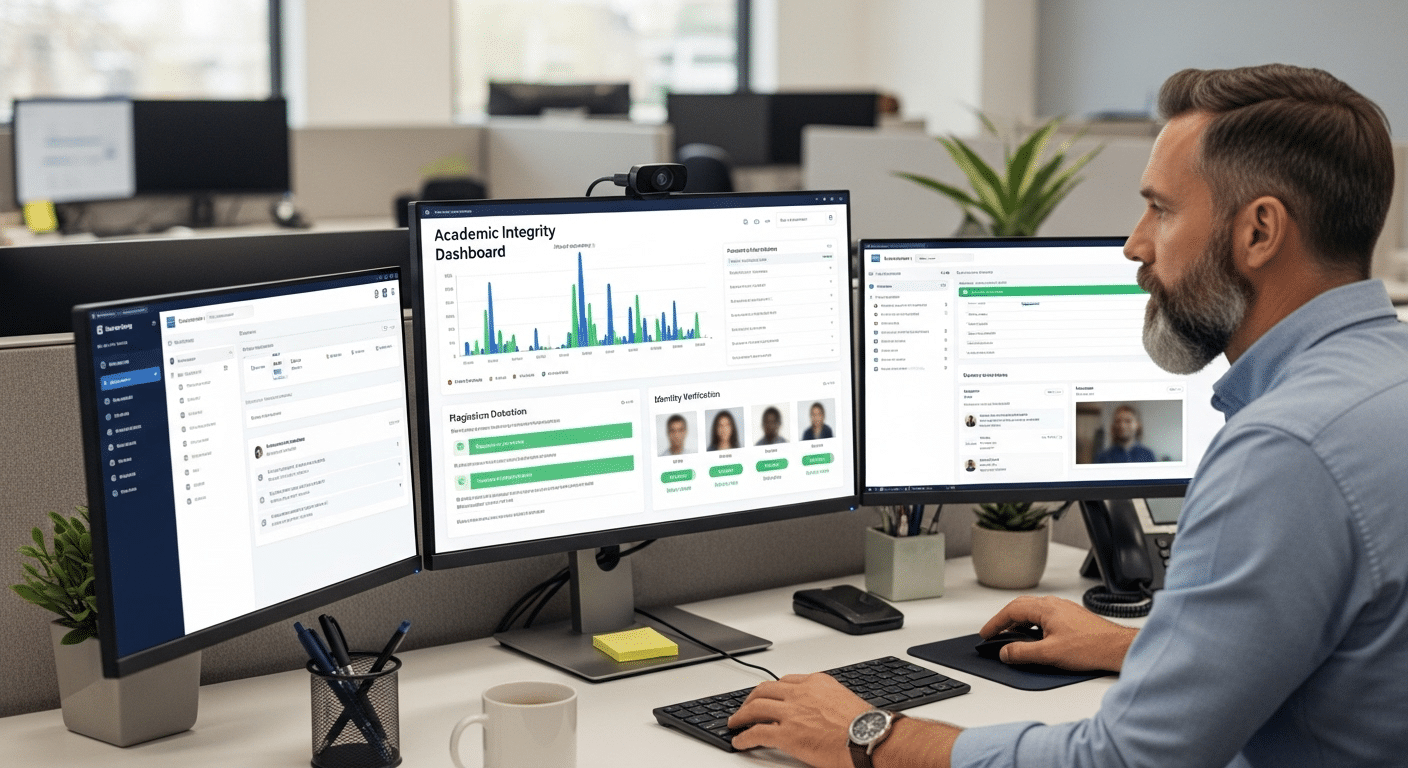

When authentic learning depends on authentic performance, integrity becomes part of the assessment process itself. If authorship is uncertain, evaluation loses clarity. Reliable scoring becomes difficult because you cannot be fully confident that the student work reflects genuine understanding.

TrustEd addresses this concern directly. It supports educators by verifying authorship before evaluation begins, strengthening confidence in the evidence you review. This is not about suspicion. It is about preserving fairness. When you know that submissions represent real effort, you can focus on quality, reasoning, and demonstrated proficiency.

Authentic learning assessment must remain equitable. Students who complete complex tasks independently deserve evaluation based on their own thinking. TrustEd reinforces that standard without disrupting instruction or undermining trust.

By safeguarding student work at the point of submission, TrustEd helps ensure that authentic assessment remains valid, rigorous, and credible.

Conclusion

Authentic learning asks students to apply knowledge, solve complex problems, and produce meaningful work. If that is the goal, then authentic assessment must follow. You cannot measure transfer with recall alone. You must design assessments that capture application, reasoning, and growth across contexts.

This requires structure. Clear criteria, performance tasks, formative feedback, and portfolios provide the evidence needed to evaluate student learning with rigor. Without thoughtful design, authentic tasks risk becoming activity without proof. Evidence must be visible, measurable, and aligned to standards.

Integrity sustains validity. When authentic performance is verified and student work reflects genuine effort, reliable scoring becomes possible. Fairness is preserved. Confidence in the assessment process remains intact.

Frequently Asked Questions (FAQs)

1. What does it mean to assess authentic learning?

Assessing authentic learning means evaluating how well students apply knowledge and skills in meaningful, real-world contexts. Instead of measuring recall, you measure transfer, reasoning, problem-solving, and demonstrated proficiency across complex tasks.

2. How do performance tasks measure authentic learning?

Performance tasks require students to demonstrate understanding through action. They may conduct experiments, analyze case studies, or design solutions to real problems. These tasks reveal how effectively students apply concepts under realistic conditions.

3. Can authentic learning be assessed reliably?

Yes, authentic learning can be assessed reliably when clear rubrics, aligned criteria, and structured feedback are used. Reliable scoring depends on defined performance levels, consistent standards, and transparent evaluation practices.

4. How do portfolios support assessment?

Portfolios provide longitudinal evidence of student learning. By documenting drafts, revisions, and reflections, they show growth over time. This allows educators to evaluate development rather than relying on a single performance snapshot.

5. Does authentic assessment improve student achievement?

Research indicates that effective assessment is a major factor in raising student achievement. Authentic assessment improves engagement, strengthens higher-order thinking skills, and supports deeper understanding, all of which contribute to stronger outcomes.