Artificial intelligence is now part of the academic setting. AI writing tools can generate essays, research summaries, and even full academic papers in seconds. As a result, AI-generated content is increasingly present in student submissions across schools and universities.

This shift creates real challenges. Educators must now determine how to check whether an essay is AI generated without relying on guesswork. At the same time, students must understand how AI use affects academic integrity. Using artificial intelligence in writing raises important questions about originality, authorship, and fairness.

Not every institution defines cheating in the same way. Some allow limited AI assistance. Others prohibit AI-generated writing entirely. That variation makes transparency essential. Disclosing AI use and following institutional policies helps protect both students and educators.

Technology alone cannot solve this issue. No AI detector is 100% accurate. AI detection tools can misinterpret human-written text or overlook sophisticated AI-generated content.

For this reason, they should never be the sole decision-maker. Instead, they should support a broader evaluation process that includes context, writing history, and human judgment.

Understanding how AI detection works and where it falls short is the first step toward responsible academic oversight.

What Does It Mean for an Essay to Be AI Generated?

An essay is considered AI generated when most or all of the written content is produced by artificial intelligence instead of a human author. Today’s AI writing tools, including ChatGPT, Claude, and Google Gemini, are built on large language models trained on massive text datasets.

These systems do not think or reason like a person. They predict likely word sequences based on patterns found in existing writing.

Because of this design, AI generated text often appears polished, structured, and grammatically correct. Paragraphs flow logically. Sentences are clean and consistent. At first glance, AI written content can resemble strong academic writing.

However, this surface quality can hide limitations. AI content often lacks deep personal nuance or original thought. It may rely on broad generalizations instead of detailed analysis.

In research papers and academic writing, this can result in arguments that sound complete but offer limited depth. AI models may also fabricate references, statistics, or citations that appear credible but do not exist.

There is also an important difference between AI-assisted writing and fully AI-generated writing. AI-assisted work might involve using AI tools to brainstorm ideas, refine wording, or correct grammar.

Fully AI-generated writing means the essay was largely written by AI with minimal human contribution.

The ethical gray area lies between these two uses. Institutional policies vary. Some allow limited AI support, while others restrict AI use entirely.

In all cases, disclosure is recommended. Clearly stating when AI was used in academic writing helps maintain academic integrity and protects both students and educators from misunderstanding.

What Are the Most Common Signs of AI-Generated Writing?

Detecting AI generated writing requires careful attention to patterns. No single sign proves that text was written by AI. However, certain indicators appear frequently in AI generated text. Reviewing these signs can help you detect AI written content more accurately.

1. Overly Generic or Broad Content

AI often relies on vague generalizations. The writing may discuss big ideas without offering specific analysis. Arguments can sound correct but remain surface level.

You may notice statements such as, “Throughout history, society has faced many challenges,” without detailed examples. Human written text usually includes concrete evidence, course references, or specific case studies. AI generated writing often stays broad and avoids narrow analysis.

2. Lack of Personal Voice or Nuance

AI text rarely includes emotional depth or lived experience. There are no personal reflections, no classroom references, and no subtle opinions formed from direct engagement.

In academic settings, students often connect theory to lectures, discussions, or assigned readings. AI content may miss these in-class references. When trying to identify AI generated text, the absence of an authentic voice can be a useful clue, though it is not proof.

3. Perfect Grammar and Hyper-Polished Tone

AI rarely makes typos or minor grammar mistakes. Sentences are clean and technically correct. The tone can feel overly formal or detached.

Human writing usually contains small inconsistencies. AI written content may appear too smooth. Uniform sentence structures and consistent punctuation patterns can signal automated production.

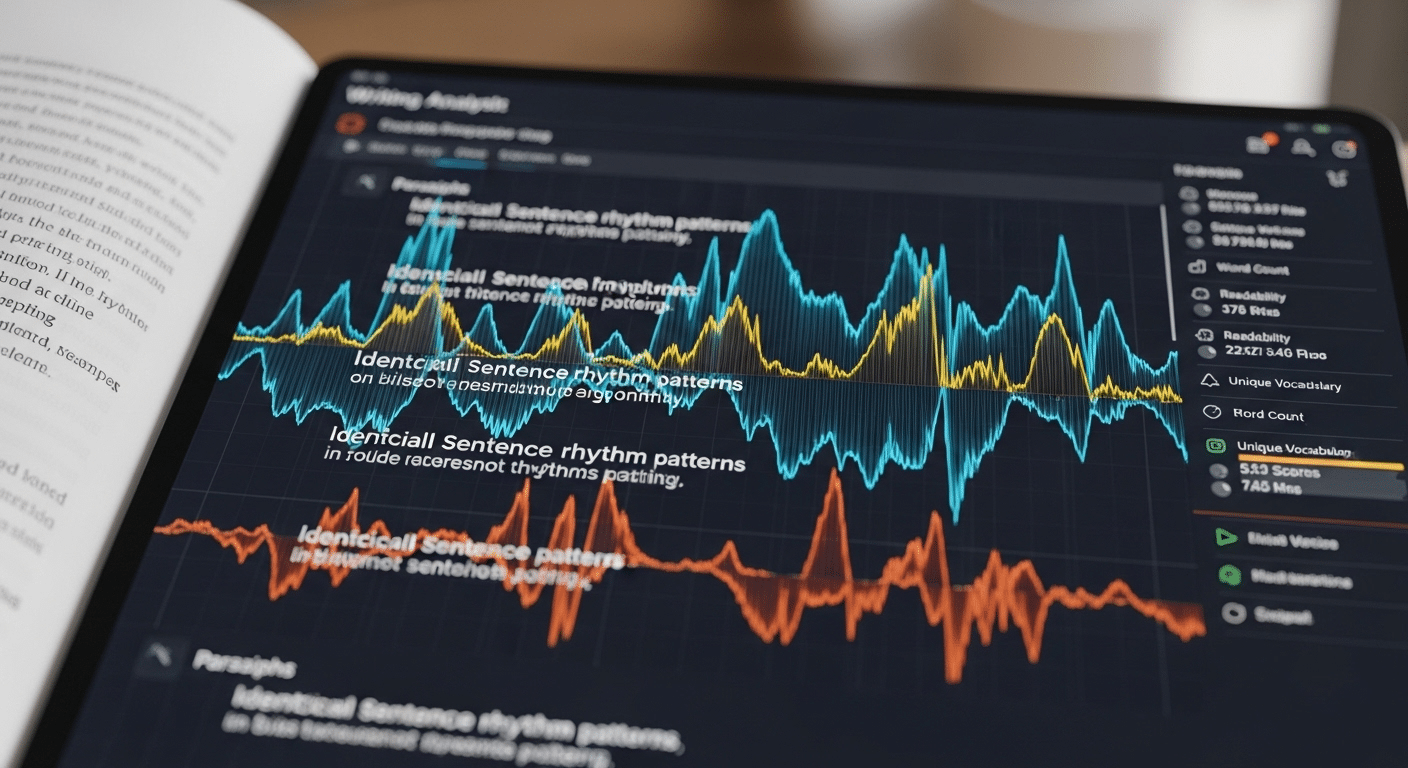

4. Monotonous or Formulaic Sentence Structure

AI generated essays often follow predictable paragraph patterns. Sentences may have similar lengths and rhythms.

There may be limited variation in structure. Human writers naturally mix short and long sentences. When text feels mechanically balanced throughout, it may warrant closer review.

5. Fabricated or Hallucinated Citations

AI models sometimes invent plausible-sounding but non-existent references. Citations may look legitimate but fail verification.

When checking research papers, always verify sources. Fabricated statistics and citations to academic articles that do not exist are a known sign of AI generated content.

6. Sudden Writing Style Improvement

Comparing writing samples can reveal major vocabulary shifts or tone changes. A sudden, dramatic improvement in clarity or sophistication may raise questions.

This does not prove AI use, but it helps detect writing inconsistencies across student submissions.

7. Repetition or Circular Reasoning

AI may repeat similar phrases or expand ideas without adding substance. Paragraphs can feel padded. Repetitive explanations and circular reasoning are common in AI generated text.
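One rough way to surface padded or repetitive passages is to count word sequences that recur within an essay. The sketch below is illustrative only: the function name `repeated_ngrams` and its defaults are assumptions made for this example, and repeated phrases also occur in ordinary human writing, so the output is a prompt for closer reading rather than evidence of AI use.

```python
from collections import Counter


def repeated_ngrams(text: str, n: int = 3, min_count: int = 2) -> dict[str, int]:
    """Return every n-word sequence that appears at least `min_count` times.

    Words are split on whitespace, so punctuation stays attached to words.
    """
    words = text.lower().split()
    grams = Counter(
        " ".join(words[i:i + n]) for i in range(len(words) - n + 1)
    )
    return {gram: count for gram, count in grams.items() if count >= min_count}


sample = "it is important to note that it is important to remember"
print(repeated_ngrams(sample))
```

A reviewer could run this on a single essay and read the flagged phrases in context before drawing any conclusion.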

8. Placeholder Errors

Occasionally, AI leaves behind template artifacts such as “[insert name here]” or incomplete prompts.

These errors make it easier to detect AI generated content.
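Leftover template text of this kind can be found with a simple pattern search. The following is a minimal sketch: the patterns in `PLACEHOLDER_PATTERNS` are assumptions chosen for illustration, not a catalogue of real artifacts, and a match only means the passage deserves a second look.

```python
import re

# Illustrative patterns only (an assumption for this example).
PLACEHOLDER_PATTERNS = [
    r"\[insert [^\]]+\]",  # e.g. "[insert name here]"
    r"\[your [^\]]+\]",    # e.g. "[your name]"
]


def find_placeholders(text: str) -> list[str]:
    """Return every bracketed placeholder found in the text, case-insensitively."""
    hits = []
    for pattern in PLACEHOLDER_PATTERNS:
        hits.extend(
            match.group(0)
            for match in re.finditer(pattern, text, flags=re.IGNORECASE)
        )
    return hits


print(find_placeholders("Dear [Insert Name Here], thank you for your time."))
```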

Taken together, these signs help you identify AI generated text. Still, they should be used carefully. No single indicator confirms authorship. Human review and contextual judgment remain essential when evaluating written work.

How Do AI Detection Tools Actually Work?

AI detection tools are designed to analyze text and estimate whether it was written by artificial intelligence. They do not confirm authorship with certainty. Instead, they rely on statistical analysis.

An AI detection tool examines linguistic patterns in written content. It compares sentence structure, word choice, and phrasing against patterns commonly found in AI generated text. Detection models are trained on large datasets that include both human written text and AI generated samples. The system then compares the submitted writing to these known patterns.

Most AI detectors return a probability-based AI score. This score estimates how likely the text is to have been written by AI. It does not provide a final judgment. AI detection is statistical, not definitive.

Several technical mechanisms support this process.

Perplexity scoring measures how predictable a piece of text is to a language model. Lower perplexity means more predictable text. AI models tend to produce highly predictable word sequences, while human writing often includes more variation and unexpected phrasing.
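To make the idea concrete, here is a toy illustration of perplexity. It is a sketch under strong assumptions: real detectors score text with large neural language models, whereas this example uses a simple word-frequency (unigram) model with add-one smoothing, and the function name `unigram_perplexity` is hypothetical. The principle is the same: text made of words the model expects gets a lower score.

```python
import math
from collections import Counter


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under a unigram model fit on `reference`.

    Add-one smoothing over the combined vocabulary gives unseen words
    a small nonzero probability.
    """
    ref_tokens = reference.lower().split()
    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one token")

    counts = Counter(ref_tokens)
    vocab_size = len(set(ref_tokens) | set(tokens))
    total = len(ref_tokens)

    log_prob = 0.0
    for token in tokens:
        probability = (counts[token] + 1) / (total + vocab_size)
        log_prob += math.log(probability)

    # Perplexity is the exponential of the average negative log-probability.
    return math.exp(-log_prob / len(tokens))


reference = "the cat sat on the mat the cat sat"
print(unigram_perplexity("the cat sat", reference))           # familiar words, lower score
print(unigram_perplexity("quantum zebra sonata", reference))  # unseen words, higher score
```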

Burstiness analysis looks at variation in sentence length and complexity. Human writers naturally vary rhythm. AI generated writing may show more uniform patterns.
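A simple proxy for burstiness is how much sentence length varies across a passage. The sketch below assumes one particular measure, the coefficient of variation (standard deviation divided by mean) of words per sentence. The function names are hypothetical, and commercial detectors may define burstiness differently.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., !, or ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length; 0.0 means perfectly uniform."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "One two three four. Five six seven eight. Nine ten eleven twelve."
varied = "Stop. This sentence is quite a bit longer than the first one. Why?"
print(burstiness(uniform), burstiness(varied))
```

Higher values indicate more variation in rhythm. The naive sentence splitter will miscount abbreviations such as "e.g.", which is acceptable for an illustration but not for real use.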

Pattern recognition helps detection models identify repeated phrasing or structural similarities that appear in AI output.

Probability modeling combines these signals to generate an overall likelihood score.
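One common way to combine several signals into a single likelihood is a logistic function over a weighted sum. The sketch below shows that idea only. The signal names, `WEIGHTS`, and `BIAS` are invented for illustration, and no vendor discussed in this article has confirmed using this exact approach.

```python
import math

# Hypothetical weights chosen for illustration; a real detector would
# learn such values from labeled human and AI writing samples.
WEIGHTS = {
    "low_perplexity": 2.0,
    "low_burstiness": 1.5,
    "repeated_phrasing": 1.0,
}
BIAS = -2.5


def ai_likelihood(signals: dict[str, float]) -> float:
    """Map signal strengths in [0, 1] to a score strictly between 0 and 1."""
    z = BIAS + sum(
        weight * signals.get(name, 0.0) for name, weight in WEIGHTS.items()
    )
    return 1.0 / (1.0 + math.exp(-z))


print(ai_likelihood({"low_perplexity": 0.2, "low_burstiness": 0.1}))
print(ai_likelihood({"low_perplexity": 1.0, "low_burstiness": 1.0, "repeated_phrasing": 1.0}))
```

The output is a likelihood estimate, not a verdict, which is why the score always needs interpretation.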

It is important to understand the limits of AI content detection. No AI detector is fully accurate. Accuracy depends on the detection model, the context in which it is used, and the type of writing being analyzed. Academic writing, for example, can appear structured and formal, which may resemble AI output.

AI detection tools can also misclassify human written content. False positives occur when authentic writing is flagged as AI generated. The false positive rate directly impacts reliability, especially in academic settings where decisions carry consequences.

For this reason, AI detector tools should be used as part of a broader evaluation process. They provide signals, not proof. Human judgment remains essential when interpreting any AI score.

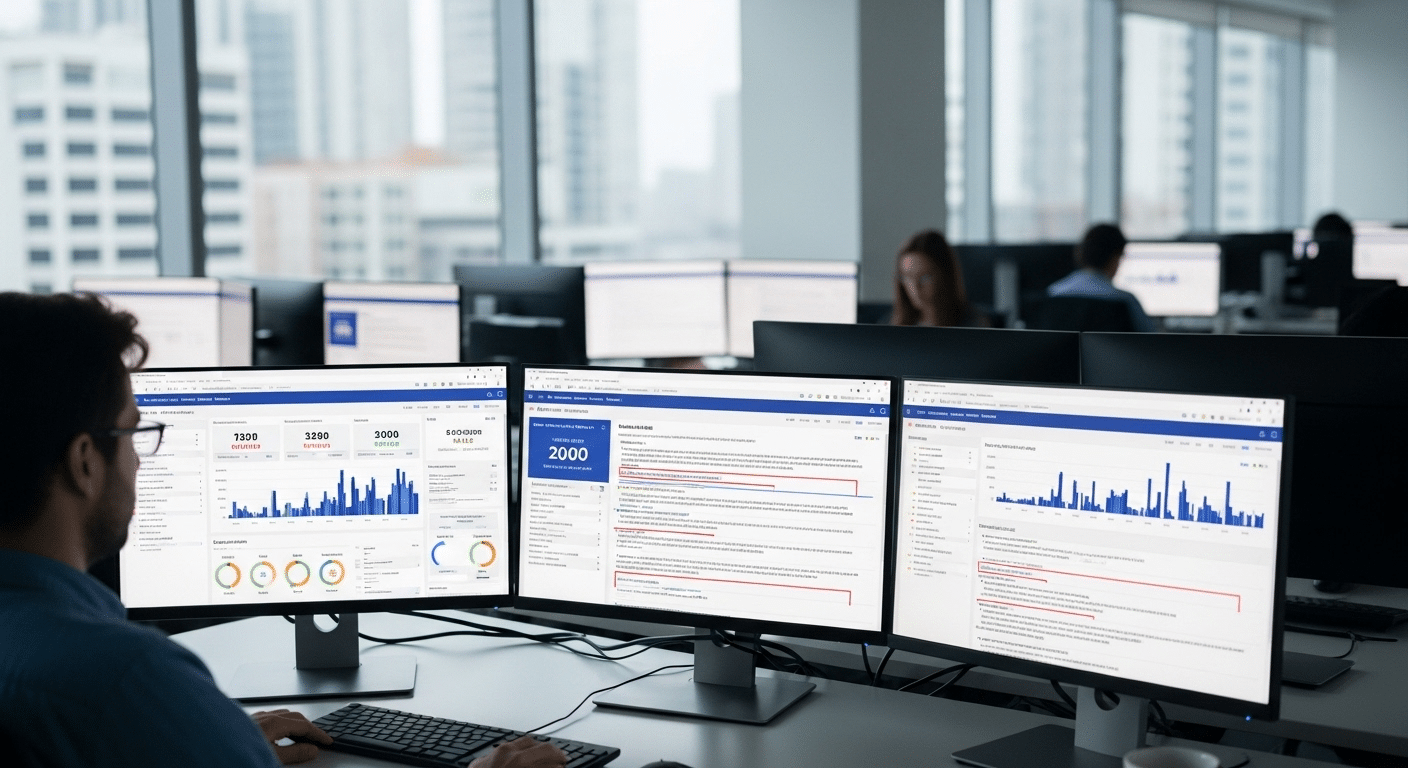

What Are the Most Accurate AI Detection Tools Available Today?

Many institutions now rely on AI detector tools to help review written content. These systems are designed to analyze text and return a probability score indicating whether AI generated writing may be present. No tool is perfect, but several platforms are widely used in academic settings.

Below is a structured overview of some of the most discussed AI detection tools today.

Turnitin AI Content Checker

Turnitin is already well known for plagiarism detection. Its AI content checker extends that capability to AI content detection.

Turnitin’s AI detection model is built around a transformer deep-learning architecture. This architecture analyzes patterns found in AI generated text and compares them to student submissions. The tool is designed specifically for academic institutions and is integrated directly into existing plagiarism checks.

Key features include:

- Built for academic settings

- Integrated with plagiarism detection workflows

- Designed to help educators detect AI generated text in student submissions

Turnitin does not claim perfect accuracy. Like all AI detector tools, it provides probability-based insights rather than final judgments.

Grammarly AI Detector

Grammarly offers an AI checker that analyzes written content and displays a percentage indicating how much of the text appears AI generated. This AI score is based on its detection model, which has been trained on the latest AI models.

The tool is currently available to premium users. Grammarly also provides features that help users cite AI usage properly, which supports academic integrity.

Key features include:

- Displays AI-generated likelihood percentage

- Trained on newer AI models

- Includes citation support for AI-assisted writing

Grammarly’s AI detector functions as an additional review layer, not as a disciplinary tool.

Copyleaks AI Content Detector

Copyleaks is positioned as a multilingual AI content detector. It supports over 30 languages, which is important for institutions with diverse student populations.

The company states that it continually retrains its detection model to adapt to evolving AI technology. Copyleaks also claims relatively low false-positive rates for non-native English writing, an area where many AI detector tools struggle.

Key features include:

- Supports 30+ languages

- Continuous model retraining

- Focus on reducing false positives for multilingual users

As with other tools, Copyleaks should be used alongside human review.

Pangram AI Detection Tool

Pangram was built by a team of machine learning engineers with experience in AI systems. It detects writing generated by models such as ChatGPT, Claude, and Google Gemini.

Pangram claims up to 99 percent accuracy in identifying AI generated text. It reports a false positive rate of 1 in 10,000 based on large public datasets. The tool returns a likelihood score rather than a binary result.

Key features include:

- Detects content from major AI models

- Claimed high accuracy

- Reported low false positive rate

- Provides probability-based scoring

Even with strong performance claims, no AI content detector can guarantee complete accuracy.

Comparison of Major AI Detection Tools

| Tool | Languages Supported | Accuracy Claim | False Positive Rate | Academic Integration |
|---|---|---|---|---|
| Turnitin | Primarily English | Not publicly specified | Not publicly specified | Strong integration with plagiarism checks |
| Grammarly | Primarily English | Not publicly specified | Not publicly specified | Limited, premium access |
| Copyleaks | 30+ languages | Not publicly specified | Claims low rate | Supports institutional use |
| Pangram | Primarily English | Up to 99 percent claim | 1 in 10,000 claim | Used for structured analysis |

Critical Reminder

There is no single best AI detector for every context. The most accurate AI detector depends on the detection model, the writing type, and the academic environment in which it is used.

No AI detection tool is 100 percent accurate. AI detector tools can misclassify human written text and generate false positives.

For this reason, AI content detection should always be paired with human judgment, writing history review, and institutional policy guidelines.

Why Do AI Detectors Produce False Positives?

AI detection tools are designed to estimate the likelihood that text was generated by artificial intelligence. However, false positives remain a significant concern. A false positive occurs when human written text is incorrectly flagged as AI generated. This issue directly affects trust in any AI detection tool.

The false positive rate plays a critical role in determining reliability. Even a small false positive rate can affect many students when a tool is used at scale. In academic settings, a false positive can lead to serious consequences, including academic review or disciplinary action.
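The effect of scale can be shown with simple arithmetic. The sketch below uses hypothetical numbers throughout (20,000 human-written essays, a 1 percent false positive rate, 10 percent of essays being AI generated, and a 90 percent detection rate). None of these figures come from a real tool or institution.

```python
def expected_false_flags(human_essays: int, false_positive_rate: float) -> float:
    """Expected number of human-written essays wrongly flagged."""
    return human_essays * false_positive_rate


def precision_of_flag(
    prevalence: float, true_positive_rate: float, false_positive_rate: float
) -> float:
    """Share of flagged essays that really are AI generated (Bayes' rule)."""
    flagged_ai = prevalence * true_positive_rate
    flagged_human = (1.0 - prevalence) * false_positive_rate
    total_flagged = flagged_ai + flagged_human
    if total_flagged == 0:
        raise ValueError("no essays would be flagged with these rates")
    return flagged_ai / total_flagged


# Hypothetical inputs, for illustration only.
print(expected_false_flags(20_000, 0.01))   # about 200 students wrongly flagged
print(precision_of_flag(0.10, 0.90, 0.01))  # roughly 0.91
```

Under these assumed numbers, about 1 in 11 flagged essays would still be human written, which is why a flag alone should not trigger a penalty.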

One major factor is bias in AI detection models. These systems are trained on datasets that may not reflect the full diversity of writing styles. As a result, non-native English writers may be disproportionately flagged. Structured academic writing, which tends to be formal and consistent, can also resemble AI generated text. This overlap increases the likelihood of misclassification.

Context dependency further complicates AI detection. A detection model may perform differently across disciplines, assignment types, or educational levels. An accurate AI detector in one context may produce misleading results in another.

It is also important to recognize that AI detection is probabilistic. A single AI score should never serve as final proof of misconduct. Detection tools analyze patterns, not intent. They do not understand authorship in a human sense.

Over-reliance on automated scoring creates ethical risks. Institutions that treat AI detection results as definitive may undermine fairness and academic integrity. To reduce false positives, AI detection tools must be used as part of a holistic evaluation process. This includes reviewing writing samples, assessing consistency, and applying human judgment before drawing conclusions.

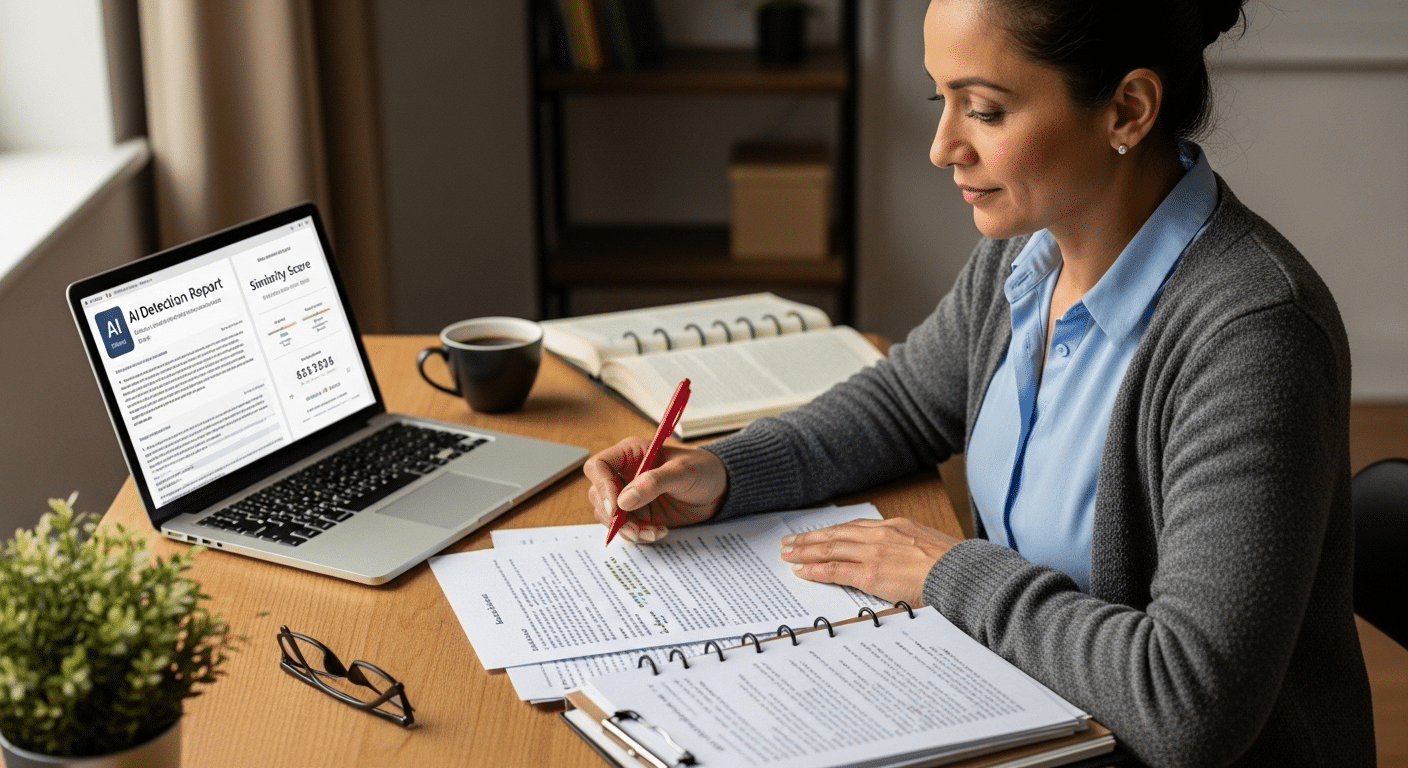

How Can You Manually Check If an Essay Was Written by AI?

AI detection tools can help, but manual review remains essential. If you want to identify AI generated text accurately, you need a structured evaluation process. The following framework helps you detect AI content without relying only on automated tools.

Step 1: Compare Against Past Writing Samples

Start by reviewing previous writing samples from the same student. Look closely at vocabulary shifts. A sudden jump in complexity or sophistication may raise questions.

Pay attention to tone changes. If earlier student submissions were informal or uneven, and the new essay is highly polished and consistent, the difference may be significant. Also review grammar patterns. Human writers tend to repeat small habits, including sentence rhythm and punctuation style. AI generated writing often removes those quirks.

This comparison provides context that no plagiarism detector or AI checker can fully capture.
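Part of this comparison can be roughed out numerically. The sketch below computes a few basic style measures for two samples and reports the relative change between them. The metric choices and the function names `style_profile` and `style_shift` are assumptions for illustration. A large shift is a reason to look more closely, not a finding, and the vocabulary measure is sensitive to text length, so samples of similar length compare best.

```python
import re


def style_profile(text: str) -> dict[str, float]:
    """Compute simple style measures for one writing sample."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text.lower())
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence")
    return {
        "avg_sentence_length": len(words) / len(sentences),
        "type_token_ratio": len(set(words)) / len(words),  # vocabulary variety
        "avg_word_length": sum(len(w) for w in words) / len(words),
    }


def style_shift(past: str, new: str) -> dict[str, float]:
    """Relative change per metric from past to new (1.0 means it doubled)."""
    past_profile = style_profile(past)
    new_profile = style_profile(new)
    return {
        name: (new_profile[name] - past_profile[name]) / past_profile[name]
        for name in past_profile
    }


past = "I like dogs. I like cats."
new = "Canine companionship demonstrably enriches contemporary households."
print(style_shift(past, new))
```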

Step 2: Evaluate Intellectual Depth

AI often relies on broad generalizations. Essays may summarize common ideas without offering specific analysis. In academic papers, this can appear as surface-level explanations rather than detailed argumentation.

Look for weak original insight. Does the essay engage deeply with course material, or does it repeat widely known points? Human written text usually reflects personal interpretation, even in structured academic writing.

Step 3: Verify Sources and Citations

AI models sometimes fabricate references. Citations may look legitimate but fail verification. Check references against web pages and academic databases. Confirm that quoted material exists and matches the source.

Fabricated or inaccurate citations are a common signal when trying to detect AI content.

Step 4: Ask for Clarification

If concerns remain, ask the student to explain complex sections of the essay. Request clarification on arguments or specific examples.

A student who wrote the paper should be able to discuss their reasoning. This step helps assess ownership of ideas beyond what plagiarism checks can reveal.

Step 5: Analyze Consistency

Examine overall writing quality and argument structure. A sudden improvement in writing quality, combined with changes in organization or reasoning style, may indicate outside assistance.

No single indicator proves AI use. However, when multiple patterns appear together, manual review becomes a powerful complement to AI detection tools.

How Does AI Use Impact Academic Integrity?

AI use in academic writing raises important questions about authorship, fairness, and responsibility. Academic integrity depends on transparency. When students submit work, educators expect that the ideas and analysis reflect the student’s own understanding unless stated otherwise.

Institutions vary in their AI policies. Some academic institutions allow limited AI assistance for brainstorming or grammar support. Others restrict AI generated content entirely. Because policies differ, students must review institutional guidelines carefully before using AI services in assignments.

Disclosure of AI use is widely recommended. When AI tools contribute to academic writing, citing that assistance supports transparency. Using AI generated content without citation may be considered unethical in many academic settings. In some cases, it may violate institutional rules.

Responsible AI use focuses on learning, not substitution. AI tools can support the writing process when used carefully. For example, they may help clarify sentence structure, summarize background material, or suggest organizational improvements. However, replacing original thinking with AI written content weakens academic integrity.

Educators are developing guidelines to help students navigate these challenges. These guidelines often address how to cite AI tools, how much AI assistance is acceptable, and how to distinguish between support and authorship. Clear policies protect both instructors and students.

Transparency requirements are becoming central to modern academic writing. If AI contributed to an assignment, acknowledging that contribution reduces confusion and builds trust.

Academic integrity is not only about detecting misconduct. It is also about creating clear expectations and encouraging responsible AI use that supports learning rather than undermines it.

Should AI Detection Be the Only Method of Evaluation?

AI detection tools can help analyze text and identify patterns that resemble AI generated content. However, they should never be the only method of evaluation. AI detection is based on probability, not certainty. A detection model estimates likelihood. It does not confirm authorship.

Because AI detection tools are probabilistic, over-reliance can harm fairness. An AI score reflects statistical analysis, not intent or context. If institutions treat that score as final proof, they risk misjudging student submissions.

A holistic approach is far more reliable.

First, combine AI detection tools with instructor judgment. Educators understand course expectations, student performance history, and assignment context. That insight cannot be replicated by automated systems.

Second, compare the essay with previous writing history. Writing samples reveal patterns in vocabulary, structure, and tone. Sudden inconsistencies deserve attention, but they require interpretation.

Third, consider oral defense or clarification. Asking a student to explain their argument or reasoning provides direct evidence of understanding. This step can confirm authorship more effectively than automated analysis alone.

Finally, institutional policy should guide every decision. Clear guidelines help define acceptable AI use and appropriate review processes.

AI tools can analyze text efficiently, but they are only one part of responsible evaluation. Human oversight remains essential to ensure accuracy, fairness, and academic integrity.

How Can Institutions Build a Fair and Responsible AI Detection Framework?

Institutions cannot rely on a single plagiarism detector or AI detection tool and expect fairness. A responsible framework begins with clear AI use policies. Students and faculty must understand what forms of AI assistance are allowed, what must be disclosed, and what violates academic standards.

Transparent communication is equally important. Policies should be written in clear language and shared across the academic setting. When expectations are visible, confusion decreases and trust increases.

Human override options must be built into the process. AI content detection systems should never deliver automatic penalties. Every flagged student submission should be reviewed by a qualified instructor or committee. This safeguard protects against misclassification and supports due process.

Bias mitigation also requires attention. Detection models can misinterpret certain writing styles, especially for multilingual students. Institutions should evaluate the false positive rate of any detection tool they adopt and seek ways to reduce false positives through layered review.

A structured review process strengthens consistency. This may include initial AI analysis, instructor evaluation, comparison with prior writing samples, and student clarification if needed.
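The rule that a score can trigger review but never a penalty can be written directly into a workflow. The following is a minimal sketch, assuming a hypothetical `Submission` record and an illustrative review threshold of 0.5. It does not describe any specific product.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    NO_ACTION = "no_action"
    NEEDS_HUMAN_REVIEW = "needs_human_review"


@dataclass
class Submission:
    student_id: str
    ai_score: float  # likelihood estimate between 0 and 1 from a detection tool


def triage(submission: Submission, review_threshold: float = 0.5) -> Status:
    """Route a submission. The only possible outcomes are 'no action' and
    'send to a human reviewer'; no penalty status exists at this stage."""
    if not 0.0 <= submission.ai_score <= 1.0:
        raise ValueError("ai_score must be between 0 and 1")
    if submission.ai_score >= review_threshold:
        return Status.NEEDS_HUMAN_REVIEW
    return Status.NO_ACTION


print(triage(Submission("s-001", 0.92)))
print(triage(Submission("s-002", 0.10)))
```

Keeping penalties out of the automated stage means any consequence has to come from the instructor evaluation, writing sample comparison, and student clarification steps.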

Finally, student education matters. Institutions should teach students how to use AI responsibly, how to cite AI assistance, and how to maintain academic integrity. When AI use is guided by policy and transparency, detection becomes part of a broader integrity strategy rather than a reactive enforcement tool.

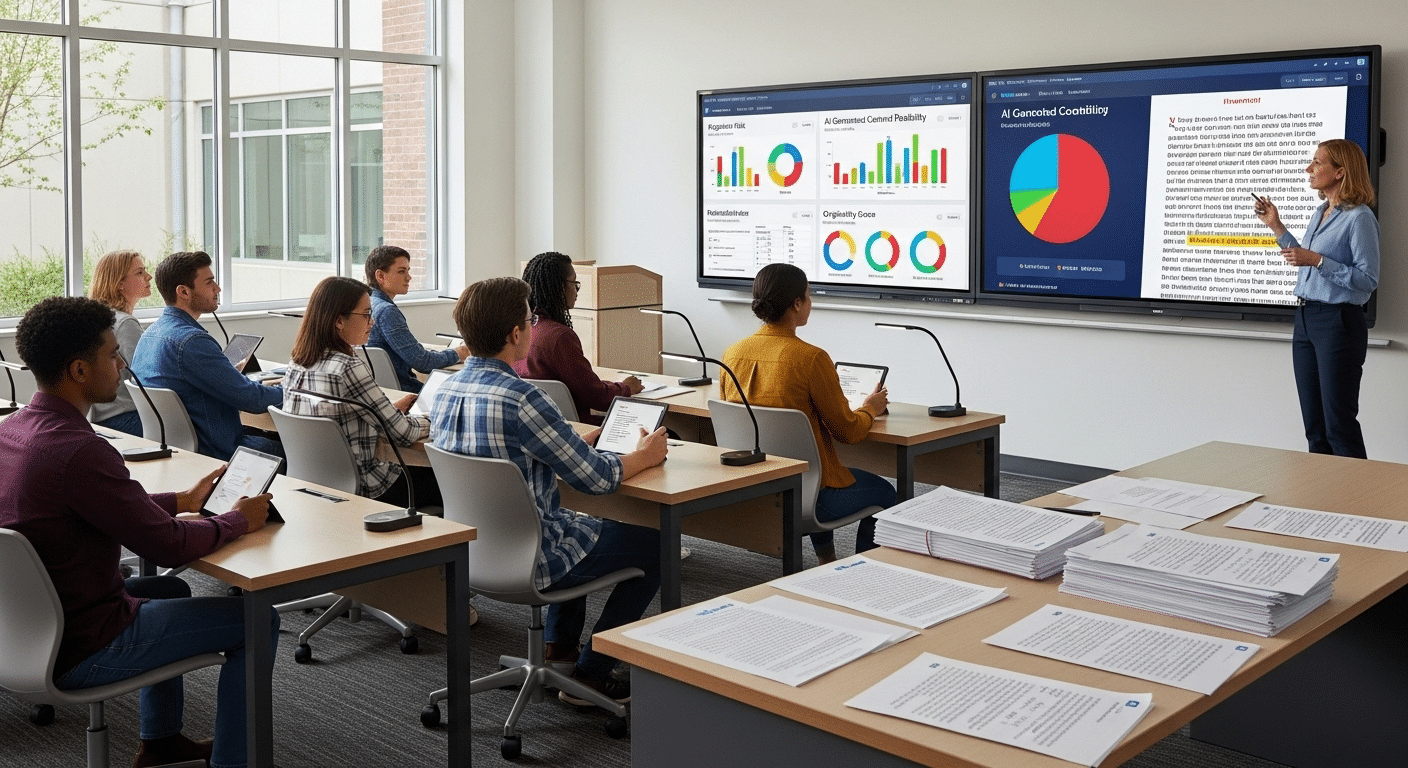

How Can Apporto Help Educational Institutions Detect AI-Generated Content Responsibly?

AI detection should support fairness, not replace judgment. That principle guides TrustEd, Apporto’s AI integrity solution designed specifically for academic institutions. Detection results are presented as structured insights, not automatic conclusions.

Instructors remain in control of final decisions. This protects students from being judged by a single AI score and reinforces due process within the academic setting.

The platform uses a context-aware AI detection model that analyzes student submissions with attention to writing patterns, institutional guidelines, and evaluation context.

Instead of treating AI content detection as a standalone verdict, TrustEd integrates it into a broader review framework.

Key priorities include:

- Reducing false positives through layered analysis

- Supporting institutional AI policies and review procedures

- Providing transparent reporting for educators

- Aligning detection workflows with academic integrity standards

TrustEd is built for real academic environments. It acknowledges that plagiarism detection and AI detection tools are probabilistic. For that reason, it emphasizes transparency, structured review, and human oversight.

Institutions that adopt TrustEd gain a balanced system that helps detect AI-generated content responsibly while protecting legitimate human written text. The goal is not punishment. The goal is clarity, fairness, and consistency.

If your institution is developing or refining its AI integrity framework, TrustEd provides the structure needed to support responsible oversight without sacrificing trust.

Conclusion

Detecting AI generated essays is no longer a rare task in academic settings. AI detection tools can help analyze text, highlight patterns, and estimate the likelihood of AI generated content. They offer speed and consistency, but they are imperfect. No detection model can determine authorship with complete certainty. AI scores are statistical estimates that require interpretation. Without context, those scores can mislead.

Human evaluation remains critical. Instructors understand course expectations, writing history, and academic standards. Comparing student submissions to past work, reviewing citation accuracy, and asking for clarification all provide insight that automated systems cannot fully capture.

Transparency also protects everyone involved. When institutions create clear AI policies and communicate expectations openly, confusion decreases. Students who disclose AI use responsibly reduce the risk of misunderstanding. Educators who apply consistent review processes strengthen trust.

Responsible AI integration is the long-term solution. Technology should support academic integrity, not undermine it. When AI detection tools are combined with human judgment and clear institutional guidelines, institutions can respond to AI use fairly and confidently.

Frequently Asked Questions (FAQs)

1. Can AI detectors accurately identify AI-generated essays?

AI detectors can estimate the likelihood that text was AI generated, but they are not fully accurate. Results are probabilistic and require human review.

2. What is the most accurate AI detector available?

There is no single most accurate AI detector for every situation. Accuracy depends on the detection model, context, and writing type being analyzed.

3. Why do AI detection tools produce false positives?

False positives occur when human written text is flagged as AI generated. Structured academic writing and non-native English styles can increase this risk.

4. How can students avoid being falsely flagged by an AI detector?

Students should follow institutional AI policies, cite AI assistance when used, and maintain consistency with past writing samples.

5. Is using AI for academic writing always considered cheating?

Not always. Policies vary by institution. Some allow limited AI use if disclosed, while others restrict AI generated content entirely.

6. Can AI detection tools analyze text from Google Docs or Microsoft Word?

Yes. Most AI detection tools can analyze exported text from Google Docs or Microsoft Word documents.

7. Do plagiarism checkers also detect AI-generated text?

Some plagiarism checkers now include AI content detection features, but traditional plagiarism detection alone does not identify AI generated writing.