What Are the Best Honorlock Alternatives for Secure Online Exams?

Honorlock alternatives help institutions secure online exams through AI monitoring, live proctoring, identity verification, or controlled testing environments. Solutions such as Apporto Exam Space, Proctorio, Respondus Monitor, and ProctorU offer different approaches based on privacy requirements, exam security needs, and assessment workflows.

Online proctoring plays an important role in online exams, but concerns about privacy, AI monitoring, and data collection have led many institutions to explore Honorlock alternatives. Honorlock’s requirement for a Chrome extension, along with student complaints about surveillance and recorded sessions, has raised questions about balancing exam integrity with a positive student experience.

As academic integrity remains a priority, institutions are looking for solutions that offer effective online proctoring without unnecessary friction. This guide evaluates the best Honorlock alternatives based on security, privacy, flexibility, learning management system (LMS) integration, pricing, and overall usability.

How Did We Select the Best Honorlock Alternatives?

Choosing an online proctoring platform involves more than comparing monitoring features. Institutions must balance security requirements with student privacy, accessibility, administrative workload, and cost. A proctoring solution that works well for a certification provider may not be the best fit for a university managing thousands of online assessments across multiple departments.

To build this list, each platform was evaluated based on its ability to maintain exam integrity, support different proctoring models, integrate with LMS platforms, protect student data, and scale across various testing environments. Accessibility and pricing flexibility were also important considerations, particularly for institutions looking to support diverse learner populations while managing budgets effectively.

The goal was to identify solutions that help test administrators uphold academic integrity without creating unnecessary barriers for students or increasing operational complexity.

Exam Integrity: Platforms must maintain exam integrity without creating unnecessary friction for test takers.

Monitoring Options: Solutions offering both automated proctoring and live human proctoring scored higher.

Privacy Considerations: Preference was given to platforms with transparent data handling policies and clear student privacy protections.

Institutional Flexibility: Platforms serving higher education, certification providers, and workforce testing environments received priority.

Quick Comparison Table: Which Honorlock Alternative Fits Your Needs Best?

Not every institution needs the same type of proctoring platform. Some prioritize AI-based monitoring to support large-scale online exams, while others place greater emphasis on student privacy, human oversight, or seamless LMS integration. In many cases, the decision comes down to balancing exam integrity, administrative effort, and the overall testing experience.

The table below provides a high-level comparison of the leading Honorlock alternatives covered in this guide. Use it as a starting point before exploring the detailed reviews of each platform.

| Platform | Best For | Proctoring Type | Pricing Model | Standout Feature |
|---|---|---|---|---|
| Apporto Exam Space | Secure exam environments | Environment-Based | Custom | Controlled exam workspace |
| Proctorio | Large-scale testing | AI Proctoring | Custom | Advanced automation |
| Integrity Advocate | Privacy-conscious institutions | Flexible Monitoring | Flexible | Non-invasive approach |
| ProctorU | High-stakes exams | Live Human Proctoring | Custom | Human oversight |
| Respondus Monitor | LMS-focused institutions | Automated + Review | Custom | LMS integration |
| TestnHire | Recruitment assessments | AI Monitoring | Custom | Hiring-focused workflows |
| Examity | Flexible security levels | Human + AI | Custom | Tiered proctoring |
| Mercer Mettl | Enterprise assessments | AI + Human | Custom | Hiring and certification |
| Talview | Remote interviewing | AI + Human | Custom | Video assessment tools |
| ProctorTrack | Academic testing | AI Monitoring | Custom | Identity verification |
| AutoProctor | Budget-conscious institutions | Automated | Lower Cost | Simple deployment |
| WeCP | Technical hiring | Skills Testing | Custom | Coding assessments |
| TestInvite | Exam management | Flexible Monitoring | Custom | Exam customization |
| RPNow (Meazure Learning) | High-stakes testing | Automated + Review | Custom | Strong compliance focus |
| Kaltura Exam Proctoring | Video-centric institutions | AI + Human | Custom | Kaltura ecosystem |

1. Apporto Exam Space – Best Honorlock Alternative for Secure Exam Environments

Overview

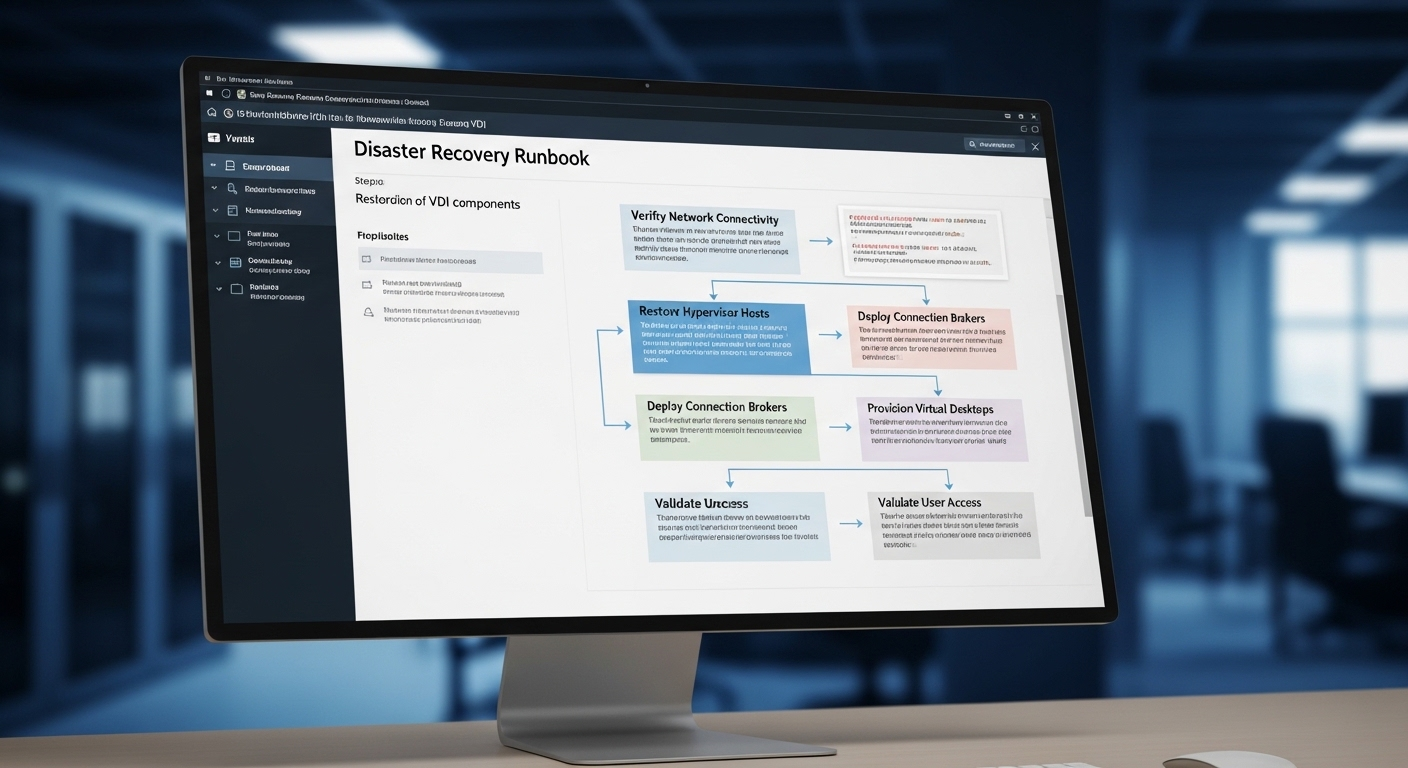

Many online proctoring platforms focus heavily on monitoring student behavior. Apporto Exam Space approaches the problem from a different angle. Instead of relying primarily on surveillance, the platform creates a controlled testing environment where students can access only the tools, applications, and resources approved for the exam.

This distinction matters. One of the recurring criticisms of traditional online proctoring software is that extensive monitoring can create privacy concerns, increase student anxiety, and generate false positives that require additional review. Apporto Exam Space reduces some of that burden by securing the exam environment itself rather than focusing exclusively on watching the test taker.

The platform is designed for institutions that want to maintain exam integrity while delivering a more streamlined testing experience. Because exams run within a managed workspace, administrators can control what students access during an assessment without requiring invasive monitoring practices.

Key Features

Apporto Exam Space focuses on creating a secure and controlled environment for online assessments.

Controlled Exam Workspace: Provides secure access to approved applications, websites, files, and resources during exams.

No Browser Extensions: Eliminates dependency on Chrome extensions and additional student-side software installations.

Centralized Administration: Simplifies exam setup, access management, and monitoring through a single administrative interface.

Flexible Delivery Models: Supports a variety of assessment formats, including quizzes, practical exams, lab-based assessments, and high-stakes testing.

Best For

Apporto Exam Space is best suited for higher education institutions, certification providers, and testing programs that want to strengthen exam security while minimizing student privacy concerns. It is particularly effective for exams that require access to specialized software, virtual labs, or controlled digital resources.

Limitations

Organizations looking for continuous live human proctoring or highly surveillance-driven monitoring may find that Apporto’s environment-first approach differs from what traditional proctoring platforms provide. Some institutions may still choose to combine it with additional proctoring measures for certain high-stakes exams.

Pricing

Apporto Exam Space offers custom pricing based on institutional requirements, exam volume, deployment scope, and support needs. Interested organizations must contact Apporto directly for a tailored quote.

2. Proctorio – Best Honorlock Alternative for Large-Scale Automated Testing

Overview

For institutions that prefer automation over continuous human oversight, Proctorio is one of the most recognized Honorlock alternatives on the market. The platform relies heavily on AI proctoring and automated monitoring to help maintain exam integrity across large volumes of online exams. This approach makes it particularly attractive to universities, certification providers, and testing organizations that need to scale online testing without significantly increasing staffing requirements.

Like Honorlock, Proctorio combines browser lockdown capabilities with behavioral monitoring tools designed to identify suspicious activity during exams. However, it places a stronger emphasis on automation, allowing institutions to review flagged incidents after an assessment rather than relying solely on live proctors.

The platform is often chosen by organizations administering thousands of online assessments because its automated systems can monitor large numbers of test takers simultaneously. That scalability remains one of Proctorio’s biggest strengths.

Key Features

Proctorio focuses on automated exam monitoring and large-scale assessment delivery.

AI Monitoring: Uses automated systems to analyze behavior patterns and identify potential exam integrity concerns.

Facial Detection: Uses facial detection to confirm that a test taker is present during the exam and to support identity verification.

Browser Lockdown: Restricts access to unauthorized websites, applications, and browser functions during online testing.

Machine Learning Models: Continuously analyze exam activity to support scalable monitoring across large testing populations.

Best For

Proctorio is best suited for large institutions, certification providers, and organizations conducting high-volume online exams where automation and scalability are top priorities.

Limitations

Like many AI-based proctoring platforms, Proctorio can generate false positives that require human review. Some students and institutions also raise concerns about privacy, behavioral monitoring, and the accuracy of automated flagging systems. As a result, careful policy development and communication are often necessary.

Pricing

Proctorio offers custom pricing based on exam volume, institutional size, and deployment requirements. Organizations must contact the company directly to obtain detailed pricing information and implementation options.

3. Integrity Advocate – Best Honorlock Alternative for Student Privacy and Flexible Monitoring

Overview

As concerns around student privacy continue to grow, many institutions are rethinking how online proctoring should work. The challenge is obvious. You need to maintain exam integrity, but you also need to avoid creating an environment that feels unnecessarily intrusive. Integrity Advocate was built with that balance in mind.

Unlike some proctoring platforms that rely heavily on constant surveillance, extensive video recording, or aggressive AI monitoring, Integrity Advocate emphasizes a more measured approach. The platform focuses on preserving academic integrity while reducing many of the privacy concerns commonly associated with online proctoring software.

Another factor that sets Integrity Advocate apart is flexibility. Institutions can choose monitoring approaches that align with their assessment policies, risk levels, and student expectations. This makes the platform appealing to colleges, universities, and certification providers looking for a solution that adapts to different exam types rather than forcing every assessment into the same monitoring model.

Key Features

Integrity Advocate prioritizes flexibility, transparency, and a less invasive testing experience.

Non-Invasive Monitoring: Focuses on maintaining exam security without relying on excessive surveillance or intrusive monitoring practices.

Flexible Pricing: Allows institutions to align costs with actual exam requirements instead of paying premium rates across all assessment types.

Privacy-Focused Design: Emphasizes responsible data collection, transparent policies, and reduced privacy concerns for students.

Institutional Customization: Supports different security levels and monitoring approaches based on exam risk, program requirements, and institutional preferences.

Best For

Integrity Advocate is best suited for higher education institutions, certification providers, and testing programs that want to protect academic integrity while placing a strong emphasis on student privacy and trust. It is particularly appealing for organizations seeking alternatives to highly surveillance-driven proctoring tools.

Limitations

Institutions requiring extensive live monitoring or highly aggressive AI-driven detection may find that the platform offers fewer advanced automation capabilities than some competing platforms. Its more balanced approach may not align with organizations seeking maximum monitoring at all times.

Pricing

Integrity Advocate offers flexible pricing models that can be tailored to different exam types, security requirements, and institutional needs. This approach often provides greater cost control than platforms that apply the same pricing structure across all assessments.

4. ProctorU – Best Honorlock Alternative for High-Stakes Exams Requiring Human Oversight

Overview

When exam results carry significant consequences, many institutions prefer human judgment over fully automated monitoring. That’s where ProctorU, now part of Meazure Learning, continues to stand out. Unlike platforms that rely primarily on AI proctoring, ProctorU centers its approach around live human proctoring, allowing trained professionals to monitor exams in real time.

This model remains particularly popular for high-stakes testing environments such as professional certifications, licensure exams, admissions testing, and regulated assessments. In these situations, institutions often want immediate intervention when suspicious activity occurs rather than reviewing automated flags after the exam has ended.

The platform combines identity verification, live monitoring, and detailed session records to help maintain exam integrity throughout the testing process. While AI tools can identify patterns and anomalies, ProctorU’s approach adds a real person to the equation, providing context and judgment that automated systems sometimes struggle to replicate.

Key Features

ProctorU focuses on direct human oversight throughout the testing experience.

Live Human Proctoring: Trained live proctors monitor exam takers in real time and can respond immediately to potential issues.

Identity Verification: Uses ID checks and authentication procedures to confirm student identity before an exam begins.

Real-Time Intervention: Allows proctors to address suspicious behavior as it occurs rather than relying solely on post-exam reviews.

Session Recording: Maintains detailed video and activity records for audit purposes, investigations, and compliance requirements.

Best For

ProctorU is best suited for certification providers, licensing organizations, professional testing programs, and higher education institutions conducting high-stakes assessments where direct human oversight is a priority.

Limitations

Live proctoring can create a more stressful testing experience for some students. Scheduling requirements, higher operational costs, and increased reliance on human resources may also make ProctorU less practical for large-scale, lower-risk online exams.

Pricing

ProctorU offers custom pricing based on exam volume, monitoring requirements, and service levels. Costs generally vary depending on the level of human involvement, making it important for institutions to evaluate pricing against the risk profile of their assessments.

5. Respondus Monitor – Best Honorlock Alternative for LMS Integration

Overview

For institutions that want online proctoring to fit naturally into their existing learning environment, Respondus Monitor is one of the strongest Honorlock alternatives available. The platform is widely used in higher education and is particularly popular among colleges and universities that already rely on learning management systems such as Canvas and Blackboard.

Rather than requiring institutions to build separate testing workflows, Respondus Monitor integrates directly into existing course and assessment processes. This reduces administrative complexity for instructors and test administrators while creating a more familiar experience for students.

Respondus Monitor combines automated proctoring with recorded exam sessions that can be reviewed later. Instead of assigning live proctors to every exam, the platform uses AI-based monitoring to identify potential concerns, allowing instructors to focus on flagged incidents rather than watching entire recordings. For many institutions, this strikes a practical balance between scalability and exam integrity.

Key Features

Respondus Monitor is designed to simplify online testing within existing LMS environments.

Canvas & Blackboard Integration: Connects directly with popular LMS platforms, making exam deployment and management more streamlined.

Automated Monitoring: Uses AI tools to monitor student behavior during online exams and identify potential integrity concerns.

Post-Exam Review: Generates recordings and incident reports that instructors can review after an assessment is completed.

Browser Lockdown: Works alongside LockDown Browser to restrict access to unauthorized websites, applications, and digital resources during exams.

Best For

Respondus Monitor is best suited for higher education institutions seeking strong LMS integration, scalable automated proctoring, and simplified exam administration across large student populations.

Limitations

Like many automated proctoring tools, Respondus Monitor can generate false positives that require manual review. Some students may also express concerns about video monitoring, browser restrictions, and the overall testing experience associated with online proctoring.

Pricing

Respondus Monitor uses institution-based pricing that varies according to enrollment size, deployment scope, and licensing agreements. Institutions typically work directly with Respondus to receive customized pricing and implementation details.

6. TestnHire – Best Honorlock Alternative for Recruitment and Candidate Assessments

Overview

Not every assessment is academic. Many organizations use online testing to evaluate job candidates, verify skills, and streamline hiring decisions. In those situations, traditional online proctoring platforms designed for higher education may not provide the right mix of functionality. TestnHire takes a different approach by focusing on recruitment assessments and candidate evaluation rather than classroom exams.

The platform combines assessment delivery, monitoring capabilities, and candidate screening tools within a single environment. This allows hiring teams to evaluate candidates more efficiently while reducing the risk of dishonest behavior during remote testing. For organizations managing large applicant pools, that efficiency can be a significant advantage.

Unlike some proctoring tools that concentrate solely on preventing cheating, TestnHire also emphasizes assessment workflows and hiring outcomes. This makes it particularly useful for employers looking to identify qualified candidates rather than simply monitor test takers.

Key Features

TestnHire is designed to support remote candidate evaluation and recruitment workflows.

AI-Based Monitoring: Helps identify suspicious behavior during candidate assessments without requiring continuous live oversight.

Candidate Verification: Supports identity validation processes to confirm that the correct individual is completing the assessment.

Assessment Management: Enables organizations to create, schedule, and administer online tests from a centralized platform.

Recruitment-Focused Workflows: Integrates assessment results into broader hiring and candidate evaluation processes.

Best For

TestnHire is best suited for hiring teams, staffing firms, corporate recruiters, and organizations conducting pre-employment assessments at scale. It is particularly valuable for businesses that want to evaluate candidates remotely while maintaining consistency and fairness.

Limitations

The platform is designed primarily for recruitment and workforce assessments rather than higher education testing. Institutions looking for deep LMS integration, academic integrity tools, or classroom-focused exam workflows may find other solutions better suited to their needs.

Pricing

TestnHire offers custom pricing based on assessment volume, hiring requirements, and organizational size. Prospective customers typically need to contact the company directly to receive detailed pricing information and deployment options.

7. Examity – Best Honorlock Alternative for Flexible Security Levels

Overview

One of the biggest challenges institutions face when selecting an online proctoring platform is that not every exam requires the same level of security. A low-stakes quiz and a professional certification exam carry very different risks. Examity addresses this issue by offering multiple monitoring options that can be tailored to the importance of the assessment.

Now part of Meazure Learning, Examity has built its reputation around flexibility. Instead of forcing institutions into a single proctoring model, the platform allows them to choose between different levels of oversight, ranging from automated monitoring to live human proctoring. This gives test administrators more control over how they balance exam integrity, student experience, and operational costs.

For organizations managing a mix of assessments, that flexibility can be particularly valuable. You can apply stronger controls to high-stakes exams while using lighter monitoring for lower-risk tests.
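
For teams that want to write such a policy down before configuring a platform, the idea of matching oversight to exam risk can be sketched as a simple lookup table. The example below is a minimal illustration only: the `Stakes` levels, the tier names, and the `monitoring_for` helper are hypothetical and do not reflect Examity's actual product tiers, settings, or API. The middle tier in particular is an assumption added for illustration.

```python
from enum import Enum


class Stakes(Enum):
    """Hypothetical risk levels an institution might assign to exams."""
    LOW = "low"        # e.g. practice quizzes
    MEDIUM = "medium"  # e.g. course midterms
    HIGH = "high"      # e.g. certification or licensure exams


# Hypothetical policy table. The tier names are illustrative labels,
# not the names of any vendor's actual offerings.
MONITORING_POLICY = {
    Stakes.LOW: "automated_monitoring",
    Stakes.MEDIUM: "automated_monitoring_with_human_review",
    Stakes.HIGH: "live_human_proctoring",
}


def monitoring_for(stakes: Stakes) -> str:
    """Return the monitoring level the policy assigns to a risk level."""
    return MONITORING_POLICY[stakes]


# Example exam catalog, also hypothetical.
exams = {
    "Weekly quiz": Stakes.LOW,
    "Midterm": Stakes.MEDIUM,
    "Licensure exam": Stakes.HIGH,
}

for name, stakes in exams.items():
    print(f"{name}: {monitoring_for(stakes)}")
```

Keeping the policy in one table makes it easier to review with faculty and administrators, and to estimate costs, since live monitoring is reserved for the exams that need it.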

Key Features

Examity is designed to adapt to different testing requirements and security needs.

Multiple Security Tiers: Offers varying levels of monitoring so institutions can align security measures with the importance of each exam.

Live Monitoring: Provides access to live proctors who can observe exam sessions and intervene when necessary.

AI Assistance: Uses automated tools to identify unusual behavior and support proctoring workflows.

Identity Checks: Verifies test taker identity before exams begin through authentication and validation procedures.

Best For

Examity is best suited for higher education institutions, certification providers, and testing organizations that administer a variety of assessments with different security requirements. Its tiered approach works especially well for organizations seeking greater flexibility than a one-size-fits-all proctoring solution.

Limitations

Institutions may need to carefully configure policies and workflows to get the most value from the platform’s multiple monitoring options. Live monitoring services can also increase costs for exams requiring higher levels of oversight.

Pricing

Examity uses custom pricing based on exam volume, monitoring levels, and institutional requirements. Costs typically vary according to the selected security tier and the degree of human involvement required during testing.

8. Mercer Mettl – Best Honorlock Alternative for Certification and Enterprise Testing

Overview

Mercer Mettl occupies a slightly different position than many traditional online proctoring platforms. While it offers robust exam proctoring capabilities, its broader focus is on large-scale assessments, certification programs, workforce evaluations, and enterprise testing initiatives. This makes it a strong Honorlock alternative for organizations that need more than just online exam monitoring.

The platform combines assessment delivery, AI proctoring, live human review options, and reporting tools within a single ecosystem. For certification providers and enterprises conducting thousands of assessments annually, this integrated approach can simplify administration and reduce the need for multiple tools.

Mercer Mettl is also widely used in corporate environments where hiring teams need to evaluate candidates through skill-based assessments, aptitude tests, and certification exams. As a result, it appeals to a broader audience than platforms designed primarily for higher education.

Key Features

Mercer Mettl combines online assessment capabilities with flexible proctoring and analytics tools.

AI and Human Proctoring: Supports both automated monitoring and human review, allowing organizations to select the level of oversight that best fits each assessment.

Comprehensive Assessment Platform: Enables the creation, delivery, and management of certification exams, hiring assessments, and workforce evaluations.

Identity Verification: Includes authentication tools that help confirm test taker identity before and during exams.

Detailed Reporting and Analytics: Generates performance reports, incident summaries, and assessment insights for administrators and decision-makers.

Best For

Mercer Mettl is best suited for certification providers, enterprise testing programs, workforce development initiatives, and organizations conducting large-scale assessments across multiple locations. It is particularly valuable when testing extends beyond traditional academic exams.

Limitations

The platform’s extensive feature set may be more than some institutions require, particularly those seeking a simple proctoring solution for classroom assessments. Smaller organizations may also face a learning curve when configuring advanced testing workflows.

Pricing

Mercer Mettl offers custom pricing based on assessment volume, proctoring requirements, user counts, and deployment scope. Organizations typically work directly with Mercer Mettl to develop a pricing package aligned with their testing needs.

9. Talview – Best Honorlock Alternative for Video Assessments and Interviews

Overview

While many Honorlock alternatives focus primarily on academic testing, Talview takes a broader approach. The platform combines online proctoring, video interviewing, candidate evaluation, and assessment management into a single solution. This makes it particularly attractive for organizations that need to assess knowledge, skills, and communication abilities within the same workflow.

Talview is widely used by employers, certification providers, and training organizations that conduct remote assessments at scale. Instead of treating proctoring as a standalone function, the platform integrates it with recruitment and evaluation processes. For many organizations, this creates a more streamlined experience from initial assessment through final decision-making.

The platform also supports both AI-assisted monitoring and human review, giving administrators flexibility in how they approach exam integrity and candidate verification. That balance has helped Talview gain traction among organizations looking for more than a traditional online proctoring solution.

Key Features

Talview combines assessment delivery, monitoring, and video-based evaluation tools.

Video Assessments: Enables organizations to conduct structured video interviews alongside exams and assessments.

AI-Assisted Monitoring: Uses AI monitoring to identify suspicious activity during online testing and assessment sessions.

Identity Verification: Supports ID checks and authentication processes to help confirm participant identity.

Human Review Workflows: Allows flagged incidents and recorded sessions to be reviewed by human reviewers when necessary.

Best For

Talview is best suited for hiring teams, certification providers, workforce development programs, and organizations that combine online testing with video interviews or candidate evaluations. It works especially well when communication skills are as important as assessment performance.

Limitations

Institutions focused exclusively on higher education exam proctoring may find some of Talview’s recruitment and interview features unnecessary. Organizations seeking a simpler proctoring platform may prefer solutions designed specifically for academic assessments.

Pricing

Talview offers custom pricing based on assessment volume, interview requirements, monitoring features, and deployment size. Interested organizations typically need to contact the company directly to receive a customized quote tailored to their use case.

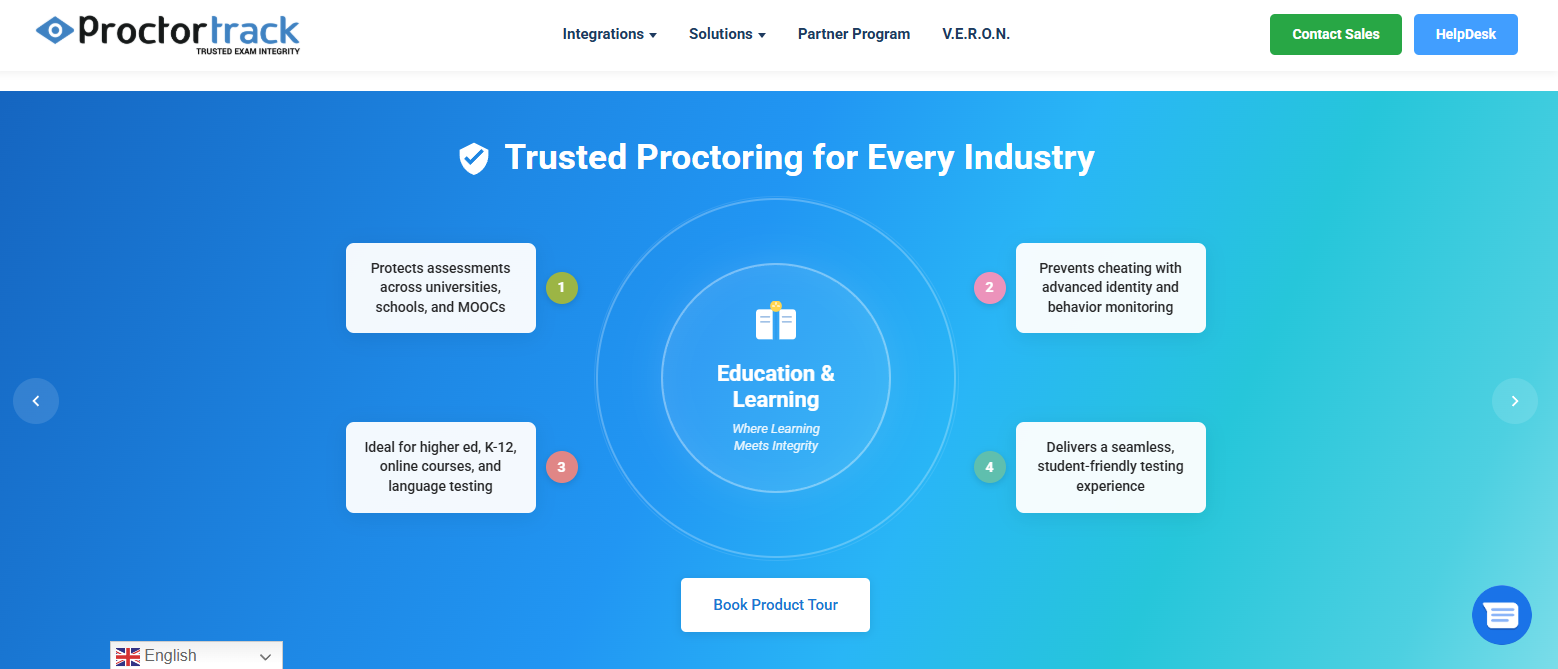

10. ProctorTrack – Best Honorlock Alternative for Identity Verification and Monitoring

Overview

For institutions that place a strong emphasis on identity verification and exam security, ProctorTrack has long been a recognized name in the online proctoring market. Developed by Verificient, the platform combines automated monitoring, authentication tools, and detailed exam oversight to help organizations maintain exam integrity across remote testing environments.

Unlike some proctoring platforms that focus primarily on browser restrictions or post-exam analysis, ProctorTrack places identity verification at the center of its approach. The platform uses multiple authentication methods before and during an assessment to help ensure that the registered test taker is the person completing the exam.

This focus has made ProctorTrack a popular choice among universities, certification providers, and organizations conducting high-stakes assessments. At the same time, the platform offers a range of monitoring tools designed to detect suspicious behavior and provide administrators with detailed review data when incidents occur.

Key Features

ProctorTrack combines identity validation with automated monitoring and exam security controls.

Advanced Identity Verification: Uses authentication processes, biometric checks, and ID validation to confirm participant identity before testing begins.

AI Monitoring: Tracks exam activity and flags potential integrity concerns for review by administrators or human reviewers.

Screen and Environment Monitoring: Records screen activity and monitors the testing environment to identify potential policy violations.

Incident Reports and Session Recording: Generates detailed reports and recorded sessions that help institutions investigate flagged events and maintain compliance records.

Best For

ProctorTrack is best suited for higher education institutions, certification providers, licensing organizations, and testing programs where identity verification is a critical requirement. It is particularly valuable for remote exams that require strong candidate authentication and audit trails.

Limitations

Some students may view the platform’s monitoring and verification processes as intrusive, particularly in environments where privacy concerns are already a topic of discussion. Institutions should carefully evaluate data policies, accessibility requirements, and student communication strategies before implementation.

Pricing

ProctorTrack offers custom pricing based on exam volume, monitoring requirements, institutional size, and deployment needs. Organizations typically work directly with the vendor to determine a pricing structure that aligns with their testing programs.

11. AutoProctor – Best Honorlock Alternative for Lower-Cost Automated Proctoring

Overview

Not every institution has the budget or operational requirements for premium online proctoring services. In many cases, schools, training organizations, and certification providers simply need a reliable way to monitor online exams without investing in extensive live proctoring programs. AutoProctor is designed to address that need.

The platform focuses on automated proctoring, using AI-based monitoring and exam security controls to help maintain exam integrity at a lower cost than many enterprise-focused alternatives. This approach allows institutions to scale online testing without the logistical challenges associated with scheduling live proctors for every assessment.

AutoProctor emphasizes simplicity. Deployment is relatively straightforward, and the platform is designed to help administrators launch online assessments quickly without extensive configuration. For organizations that prioritize ease of use and affordability, that can be a significant advantage.

Key Features

AutoProctor delivers core proctoring functionality through an automation-first approach.

Automated Monitoring: Uses AI-based proctoring tools to detect suspicious behavior and flag potential exam integrity concerns.

Identity Verification: Supports authentication and ID validation processes before an exam begins.

Browser Security Controls: Helps restrict unauthorized activity during online testing sessions.

Automated Reporting: Generates incident reports and session data that can be reviewed by test administrators after the assessment.

Best For

AutoProctor is best suited for smaller institutions, workforce training programs, certification providers, and organizations looking for a lower-cost online proctoring solution. It is particularly useful for low- to medium-stakes exams where full human oversight may not be necessary.

Limitations

As with many automated proctoring platforms, AutoProctor may generate false positives that require manual review. Institutions conducting high-stakes assessments may also find that the lack of continuous live human monitoring falls short of their security requirements.

Pricing

AutoProctor is generally positioned as a more budget-friendly alternative to larger online proctoring companies. Pricing varies based on exam volume, feature requirements, and deployment scope, with customized plans available for institutions and organizations of different sizes.

12. WeCP – Best Honorlock Alternative for Technical Hiring and Skill-Based Assessments

Overview

Traditional online proctoring platforms are primarily designed to prevent cheating during exams. Technical hiring, however, presents a different challenge. Employers need to verify not only who is taking an assessment, but also whether candidates can actually perform the tasks required for the role. That’s where WeCP stands apart.

WeCP focuses on skill-based assessments, technical evaluations, and hiring workflows rather than academic testing alone. The platform allows organizations to assess coding ability, problem-solving skills, and job-specific competencies within a controlled environment. This makes it particularly useful for companies recruiting software developers, data analysts, engineers, and other technical professionals.

Instead of relying exclusively on proctoring, WeCP combines assessment delivery with practical skill validation. For hiring teams, that often provides a more complete picture of candidate capabilities than traditional multiple-choice testing.

Key Features

WeCP is built around technical assessments and candidate evaluation.

Coding Assessments: Supports real-world programming challenges across multiple languages and development environments.

Technical Hiring Workflows: Streamlines the assessment process from candidate invitation to final evaluation and reporting.

Skill Validation: Measures practical abilities through hands-on tasks rather than relying solely on theoretical knowledge tests.

Candidate Evaluation Tools: Provides detailed scoring, performance insights, and benchmarking to help hiring teams make informed decisions.

Best For

WeCP is best suited for hiring teams, staffing agencies, technology companies, and organizations conducting technical recruitment at scale. It is particularly valuable for evaluating software developers, engineers, data professionals, and other specialized talent where practical skills matter as much as credentials.

Limitations

The platform is not primarily designed for higher education exams or traditional academic assessments. Institutions seeking extensive LMS integration, classroom-focused proctoring features, or certification testing workflows may find more suitable alternatives elsewhere.

Pricing

WeCP offers custom pricing based on assessment volume, candidate numbers, feature requirements, and organizational needs. Companies typically work directly with the vendor to build a pricing package that aligns with their hiring and assessment goals.

13. TestInvite – Best Honorlock Alternative for Customizable Online Assessments

Overview

Many online proctoring platforms are built around a fixed testing model. That can work well for standardized exams, but organizations with unique assessment requirements often need greater flexibility. TestInvite addresses this challenge by focusing on customizable online assessments that can be tailored to different testing objectives, security requirements, and participant groups.

The platform combines exam creation, assessment delivery, and proctoring capabilities within a configurable environment. Rather than forcing institutions and organizations into predefined workflows, TestInvite allows administrators to customize exam settings, monitoring rules, and assessment structures based on their specific needs.

This flexibility has made TestInvite a popular option among educational institutions, certification providers, and corporate training programs. It is particularly useful when different exams require different levels of security, timing controls, or monitoring methods.

Key Features

TestInvite is designed to provide greater control over how assessments are created and delivered.

Customizable Exam Design: Supports a wide range of question types, exam structures, and assessment formats.

Flexible Monitoring Options: Allows administrators to configure proctoring settings based on the risk level and purpose of each assessment.

Secure Testing Environment: Includes tools that help protect exam content and maintain assessment integrity during online testing.

Detailed Reporting and Analytics: Provides insights into participant performance, exam activity, and assessment outcomes.

Best For

TestInvite is best suited for educational institutions, certification organizations, training providers, and businesses that require highly customizable online assessments. It works particularly well for organizations managing diverse testing programs with varying security requirements.

Limitations

While TestInvite offers significant flexibility, institutions seeking highly specialized proctoring features or extensive live human monitoring may find some competitors better suited to those specific needs. The platform's broad customization options may also require more setup time than simpler solutions.

Pricing

TestInvite offers custom pricing based on assessment volume, feature requirements, user counts, and deployment scope. Organizations typically need to contact the vendor directly to receive pricing information tailored to their testing programs and operational needs.

14. RPNow (Meazure Learning) – Best Honorlock Alternative for Compliance-Focused Testing Programs

Overview

For organizations operating in regulated industries or administering high-stakes assessments, compliance requirements often carry as much weight as exam security. RPNow, part of the Meazure Learning portfolio, was designed with that reality in mind. The platform focuses on secure remote testing while providing the documentation, review processes, and oversight needed to support compliance-driven testing programs.

Unlike platforms that rely exclusively on live monitoring, RPNow uses automated proctoring combined with post-exam human review. This approach allows institutions to scale online testing while maintaining a detailed audit trail of exam activity. For certification providers, licensing bodies, and professional testing organizations, that balance can be particularly valuable.

RPNow has gained traction among organizations that need to demonstrate consistent testing standards across large candidate populations. By combining automation with structured review processes, the platform helps maintain exam integrity without requiring a live proctor for every session.

Key Features

RPNow is designed to support secure, scalable, and compliance-focused testing environments.

Automated Monitoring: Uses AI-assisted monitoring tools to identify suspicious behavior and potential exam integrity concerns during testing sessions.

Human Review: Recorded sessions and flagged incidents are reviewed by trained professionals, providing additional context and reducing reliance on automated decisions alone.

Compliance Controls: Supports audit requirements through detailed reporting, documentation, and secure record management processes.

High-Stakes Testing Support: Designed to accommodate certification exams, licensure assessments, and other high-stakes testing programs that require consistent oversight.

Best For

RPNow is best suited for certification providers, professional licensing organizations, workforce credentialing programs, and institutions that must meet strict compliance and documentation requirements while delivering remote exams.

Limitations

Because the platform relies on post-exam review rather than continuous live human proctoring, institutions requiring immediate intervention during exams may prefer alternative solutions. Some organizations may also need additional review workflows for highly sensitive assessments.

Pricing

RPNow offers custom pricing based on testing volume, review requirements, compliance needs, and deployment scope. Organizations typically work with Meazure Learning directly to develop a pricing structure that aligns with their assessment and regulatory requirements.

15. Kaltura Exam Proctoring – Best Honorlock Alternative for Institutions Using Kaltura

Overview

For institutions that already rely on Kaltura for video learning, lecture capture, and virtual classroom experiences, Kaltura Exam Proctoring offers a natural extension of their existing educational technology ecosystem. Rather than introducing a completely separate testing platform, Kaltura integrates proctoring capabilities into a familiar environment that many students and instructors already use.

The platform is designed to support online exams while helping institutions maintain academic integrity across remote and hybrid learning programs. By leveraging Kaltura’s expertise in video technology, the solution combines exam monitoring, session recording, and assessment oversight within a unified framework.

This integrated approach can reduce administrative complexity and simplify implementation. Instead of managing multiple vendors and disconnected systems, institutions can centralize more of their teaching, learning, and assessment activities under a single platform. For universities with significant investments in Kaltura, that operational efficiency can be a meaningful advantage.

Key Features

Kaltura Exam Proctoring combines assessment security with video-based monitoring and administrative controls.

Integrated Video Monitoring: Uses Kaltura’s video infrastructure to support exam recording and monitoring throughout assessment sessions.

Session Recording: Captures exam activity for review, investigation, and academic integrity verification purposes.

Assessment Management Support: Works alongside existing educational workflows to streamline exam administration.

Kaltura Ecosystem Integration: Connects with other Kaltura learning tools, reducing the need for additional platforms and separate user experiences.

Best For

Kaltura Exam Proctoring is best suited for colleges, universities, and educational organizations already using Kaltura for video learning and content delivery. It is particularly valuable for institutions seeking tighter integration between instructional technology and exam proctoring processes.

Limitations

Organizations that do not currently use Kaltura may not realize the same integration benefits. Institutions looking for highly specialized proctoring features, extensive live human monitoring, or advanced AI proctoring capabilities may find stronger options elsewhere on this list.

Pricing

Kaltura Exam Proctoring uses custom pricing based on institutional size, deployment requirements, assessment volume, and existing Kaltura services. Institutions typically need to work directly with Kaltura to receive a tailored quote and implementation plan.

How Do You Choose the Right Honorlock Alternative for Your Institution?

Selecting an online proctoring platform is no longer just a technology decision. It affects student trust, faculty workflows, exam security, compliance requirements, and institutional reputation. A platform that works well for a professional certification provider may create unnecessary friction in a university setting. Likewise, a solution designed for low-stakes assessments may not provide the oversight needed for licensure exams or accreditation requirements.

The most effective approach is to evaluate alternatives through the lens of your institution’s priorities rather than focusing on features alone. Student privacy, monitoring requirements, LMS compatibility, and assessment risk levels should all play a role in the decision.

How Important Is Student Privacy in Your Proctoring Strategy?

Student privacy has become one of the most debated topics in online proctoring. Over the past few years, many institutions have faced questions about data collection, video recordings, AI monitoring, and how student information is stored. As a result, privacy considerations are now central to many procurement decisions.

A platform’s security capabilities matter, but so does the student experience. Excessive monitoring can increase anxiety and lead to concerns about fairness, particularly when students are required to grant access to cameras, microphones, screens, and personal devices.

Before selecting a proctoring platform, consider the following:

Data Collection Policies: Understand what information is collected, how long it is stored, who can access it, and whether students have visibility into those practices.

Monitoring Methods: Compare invasive and non-invasive approaches. Some platforms rely heavily on video surveillance and AI monitoring, while others focus on creating secure testing environments with fewer privacy concerns.

Recording Requirements: Determine whether continuous audio and video recording is necessary for your assessment strategy.

Institutional Transparency: Look for vendors that clearly communicate privacy policies and data handling procedures.

Ultimately, the strongest proctoring strategy is usually one that protects academic integrity without eroding student trust, and institutions that strike that balance are better positioned to sustain both.

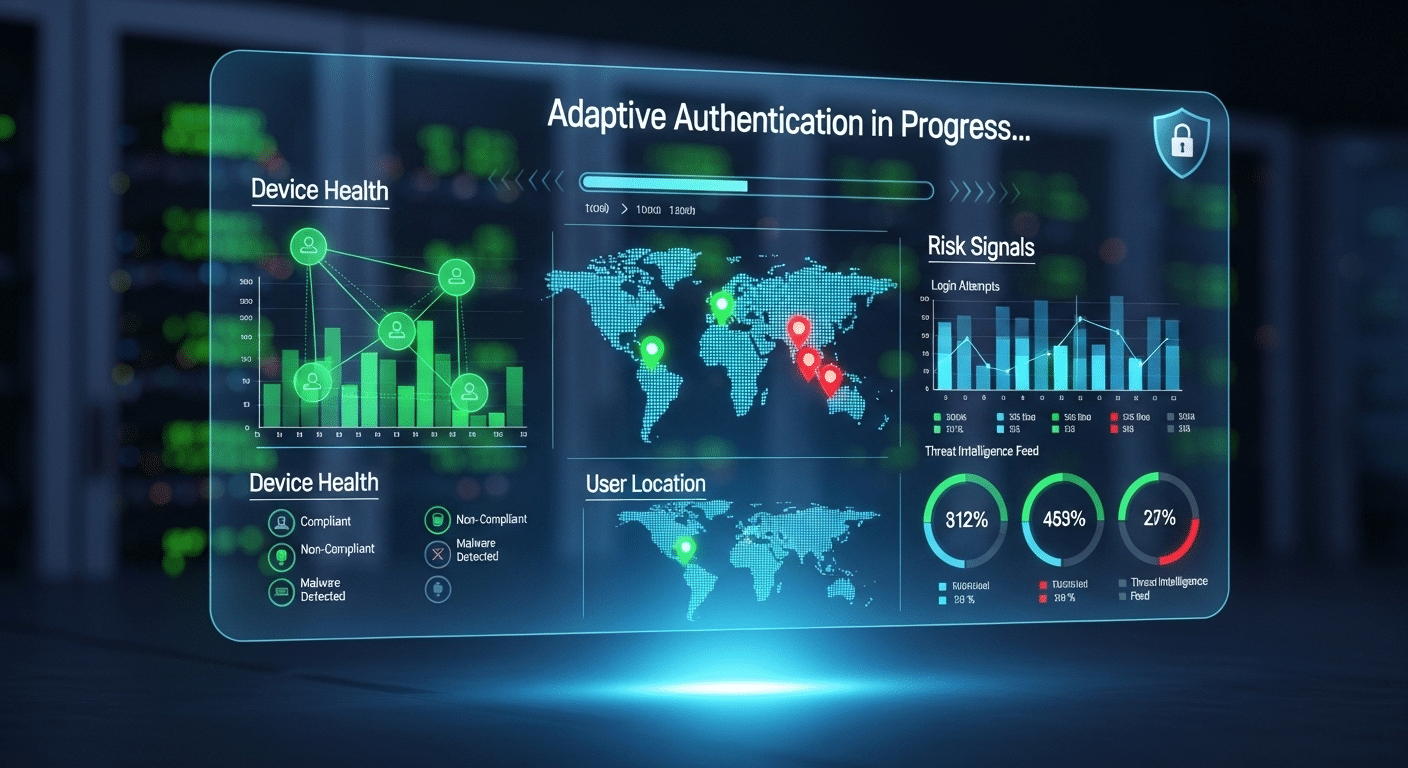

Do You Need AI Monitoring, Human Proctors, or Both?

One of the first decisions you’ll need to make is how exams should be monitored. There is no universal answer because different assessments carry different levels of risk. A weekly quiz may not require the same oversight as a licensing exam or professional certification test.

Modern proctoring platforms generally fall into three categories:

Automated Proctoring: AI monitoring systems analyze screen activity, eye movement, audio cues, browser behavior, and other signals. This approach is scalable and often more cost-effective for large institutions managing thousands of online exams.

Live Human Proctoring: A real person monitors the testing session in real time and can intervene immediately if suspicious activity occurs. This model provides greater oversight and is commonly used for high-stakes assessments.

Hybrid Models: Combine automated proctoring with human review. AI identifies potential concerns, while trained reviewers evaluate incident reports and recordings before making final determinations.

The right choice depends on your risk tolerance, budget, student population, and assessment requirements. In many cases, hybrid models provide the best balance between efficiency and accuracy.

Which Platforms Integrate Best with Your LMS?

Even the most advanced proctoring platform can create administrative headaches if it doesn’t work well with your existing systems. Strong LMS integration reduces manual work, simplifies exam delivery, and creates a more seamless experience for instructors and students.

Before selecting a platform, evaluate how it fits into your current academic workflows.

Canvas Integration: Platforms such as Respondus Monitor and Honorlock are often chosen because they integrate directly into Canvas course environments.

Blackboard Integration: Institutions using Blackboard should verify compatibility for exam deployment, reporting, and student authentication processes.

Single Sign-On Support: SSO capabilities help reduce login friction and simplify user management for students, instructors, and administrators.

Administrative Workflows: Consider how exams are created, scheduled, monitored, and reviewed. Platforms that streamline these tasks can save significant administrative time throughout the academic year.

A proctoring solution should feel like an extension of your LMS, not a separate system that requires constant workarounds.

What Type of Exams Are You Delivering?

The nature of your assessments should heavily influence your platform selection. A solution that excels in one environment may be less effective in another.

Different testing programs often have very different priorities:

Higher Education: Universities typically need a balance of academic integrity, student privacy, LMS integration, and scalability.

Certification Providers: Professional certification exams often require stronger identity verification, detailed audit trails, and stricter security controls.

Technical Hiring: Platforms such as WeCP, Talview, and TestnHire focus on candidate evaluation, coding assessments, and practical skill validation rather than traditional exam proctoring.

High-Stakes Assessments: Licensing exams, admissions tests, and credentialing programs often benefit from live human proctoring, advanced identity verification, and comprehensive compliance documentation.

The closer a platform aligns with your testing goals, the more likely it is to deliver a secure, efficient, and positive testing experience.

What Are the Biggest Limitations of Traditional Online Proctoring Platforms?

Online proctoring has helped institutions expand access to remote exams, support distance learning, and maintain academic integrity at scale. Yet even the most advanced proctoring platforms come with tradeoffs. As institutions evaluate Honorlock alternatives, many are looking beyond feature lists and asking a broader question: what impact does proctoring have on students, instructors, and the overall testing experience?

The answer is rarely straightforward. Strong security controls can improve exam integrity, but they can also introduce challenges that affect adoption, satisfaction, and trust. Some of the most common concerns include:

Privacy Concerns: Continuous video recording, audio monitoring, screen monitoring, and identity verification processes can make students uncomfortable. Many institutions are now paying closer attention to how proctoring platforms collect, store, and manage sensitive data.

False Positives: AI monitoring systems can sometimes incorrectly flag normal behavior as suspicious. Looking away from the screen, adjusting posture, background noise, or natural eye movement may trigger incident reports that require additional review.

Student Anxiety: The presence of live proctors or extensive monitoring tools can increase stress levels during exams. For some students, the feeling of constant observation affects concentration and performance.

Technical Issues: Internet disruptions, browser lockdown requirements, software compatibility problems, and device limitations can create friction during online exams. Even minor technical issues can have a significant impact during high-stakes assessments.

Accessibility Challenges: Not all proctoring solutions accommodate every learner equally. Students using assistive technologies, alternative input devices, or accessibility accommodations may face additional barriers if platforms are not designed with inclusivity in mind.

For many institutions, the goal is no longer simply preventing cheating. It is finding a balance between exam security, student trust, accessibility, and a positive testing experience.

Final Thoughts

The right Honorlock alternative depends largely on your institution’s priorities. If student privacy is at the center of your decision-making process, Integrity Advocate stands out for its non-invasive approach and flexible monitoring options.

Institutions that rely heavily on Canvas or Blackboard will likely find Respondus Monitor to be the strongest choice due to its seamless LMS integration and familiar administrative workflows. For high-stakes assessments that require direct oversight, ProctorU remains one of the most trusted options thanks to its live human proctoring model.

Organizations seeking scalable automated monitoring should consider Proctorio, which combines AI monitoring, browser lockdown capabilities, and advanced automation tools for large-scale online testing.

For institutions looking beyond traditional proctoring, Apporto Exam Space offers a different path. Rather than focusing primarily on surveillance, it secures the testing environment itself through controlled access to applications, resources, and exam tools.

This approach can help maintain exam integrity while reducing many of the privacy and usability concerns associated with conventional online proctoring platforms.

Ultimately, the best solution is the one that aligns with your assessment strategy, student experience goals, and administrative requirements.

Frequently Asked Questions (FAQs)

1. What is the best Honorlock alternative in 2026?

The best Honorlock alternative depends on your goals. Apporto Exam Space is ideal for secure exam environments, Integrity Advocate prioritizes student privacy, ProctorU excels in high-stakes testing, and Respondus Monitor remains a strong choice for LMS-integrated online exams.

2. Why are institutions looking for alternatives to Honorlock?

Many institutions are reevaluating Honorlock due to concerns about privacy, AI monitoring, Chrome extension requirements, and student feedback. Others are seeking more flexible pricing, alternative monitoring approaches, or proctoring solutions that better align with their assessment strategies.

3. Is live human proctoring better than AI proctoring?

Neither approach is universally better. Live human proctoring provides direct oversight and immediate intervention, while AI proctoring offers greater scalability and lower operational costs. Many institutions prefer hybrid models that combine automated monitoring with human review.

4. Which Honorlock alternative works best with Canvas and Blackboard?

Respondus Monitor is widely regarded as one of the strongest options for Canvas and Blackboard users. Its direct LMS integration simplifies exam setup, administration, monitoring, and post-exam review while minimizing disruptions to existing academic workflows.

5. Are Honorlock alternatives more privacy-friendly?

Some are. Platforms such as Integrity Advocate and Apporto Exam Space are designed to reduce reliance on invasive monitoring techniques. However, privacy practices vary significantly between vendors, making it important to review data collection and storage policies carefully.

6. Which proctoring platform is best for high-stakes exams?

ProctorU is often considered one of the strongest choices for high-stakes assessments because it offers live human proctoring, identity verification, real-time intervention, and detailed session records. Certification providers and licensing organizations commonly use it for secure remote testing.

7. Who are Honorlock's competitors?

Several companies compete with Honorlock in the online proctoring market, including Apporto Exam Space, Proctorio, Respondus Monitor, ProctorU, and Integrity Advocate. These platforms offer different approaches to exam security, ranging from AI monitoring and live proctoring to controlled testing environments.

8. Which app is best for online exams?

The best app for online exams depends on your requirements. Apporto Exam Space is well suited for secure exam environments, ProctorU specializes in live proctoring, and Proctorio focuses on automated monitoring. Institutions should evaluate security, privacy, scalability, and LMS integration before choosing.

9. What is the best proctoring software?

There is no single best proctoring software for every institution. Popular options include Apporto Exam Space, Honorlock, Proctorio, Respondus Monitor, and ProctorU. The right choice depends on whether you prioritize privacy, live oversight, automated monitoring, or assessment flexibility.

10. Do professors actually check Honorlock?

Yes, professors and administrators often review Honorlock reports, flagged events, and recorded sessions when investigating potential academic integrity concerns. However, flagged activity is typically reviewed alongside other evidence before any conclusions or disciplinary decisions are made.

11. Can Honorlock really detect phones?

Honorlock can identify behaviors that may suggest phone use during an exam through webcam monitoring, screen activity analysis, and other proctoring tools. However, detection capabilities vary by exam settings, and institutions generally review flagged incidents before taking action.