Quick Answer

How to Choose the Right DaaS Provider?

A DaaS provider delivers secure cloud-hosted virtual desktops and applications that users can access from any device. The right provider should offer strong security, centralized management, reliable performance, and flexible scalability. Fully managed platforms like Apporto simplify desktop delivery while reducing infrastructure complexity and operational overhead.

Choosing a Desktop as a Service provider used to feel like a narrow IT task. That assumption no longer holds. As remote teams grow and virtual desktops become a standard way to deliver work environments, the decision carries weight far beyond infrastructure.

Organizations now rely on DaaS providers to deliver secure access, consistent performance, and a user experience that does not slow people down. At the same time, cost pressure is real. Subscription models, usage based pricing, and hidden infrastructure expenses can quietly affect budgets over time.

Security expectations have also risen. Protecting sensitive data, meeting compliance requirements, and maintaining visibility across user sessions are no longer optional.

This is why knowing how to choose the right DaaS provider matters. The provider you select influences productivity, risk exposure, and long term flexibility. Some platforms fit short term needs but strain as teams grow. Others support sustainable outcomes but require careful evaluation upfront.

This guide breaks down what to look for, what to question, and how to decide with clarity rather than guesswork.

What Is a DaaS Provider, and What Do They Actually Deliver?

A DaaS provider delivers complete desktop environments without requiring you to own or manage physical machines. Instead of installing operating systems and applications on individual computers, the provider delivers virtual desktops and virtual apps from cloud infrastructure. You log in, your workspace appears, and the heavy lifting happens elsewhere.

This is where confusion often starts. Desktop as a Service is not the same as traditional VDI running in a server room, and it is not the same as SaaS tools accessed through a browser.

A DaaS provider manages the backend infrastructure, including servers, storage, updates, and availability. Your team focuses on using the desktop, not maintaining it.

These providers also make access flexible. Users connect to the same desktop from laptops, tablets, or shared machines, as long as there is an internet connection. The desktop follows the user, not the device. That consistency matters as teams spread across locations and devices.

At its core, a DaaS provider delivers:

- A virtual desktop environment hosted in the cloud

- Centralized management for updates, images, and policies

- Secure remote access to desktops and applications

Understanding this foundation makes it easier to compare providers later. Once you see what is actually delivered, you can start asking better questions about security, performance, and fit.

Why the “Right” DaaS Provider Depends on Your Business Needs

There is no single DaaS provider that works best for every organization. The right provider depends on how your business operates, who your users are, and what outcomes matter most over time. Treating the decision as a generic technology purchase usually leads to frustration later.

User needs vary widely. A call center agent, a developer, and a healthcare administrator all interact with desktops in different ways. Some roles demand high performance and low latency. Others prioritize secure access to sensitive data or compatibility with specialized software. A provider that works well for one group may create friction for another.

Industry context also shapes the decision. Compliance requirements, cost controls, and security expectations differ across sectors. What feels cost effective in one environment can become expensive or restrictive in another. The right provider balances performance, compliance, and pricing in a way that supports how your teams actually work.

Provider fit shows up quickly in productivity and user experience. When desktops load slowly, sessions drop, or tools feel constrained, people notice. Aligning provider capabilities with real business needs helps avoid these issues and creates better long term business outcomes rather than short term convenience.

Types of DaaS Providers You’ll Encounter

Before comparing features or pricing, it helps to understand the main categories of DaaS providers on the market. These providers take different approaches to delivering virtual desktops, and those differences affect control, complexity, and long term flexibility.

Some organizations start with hyperscalers. These platforms are built directly on large cloud providers and integrate tightly with broader cloud services. They offer strong scalability and appeal to teams already invested in a specific cloud ecosystem. Setup and management often require more internal expertise, especially as environments grow.

Citrix based platforms sit on top of cloud infrastructure but add a mature layer for desktop virtualization and virtual apps. They are known for performance optimization and granular control, though they can introduce licensing complexity and higher management overhead.

VMware based platforms follow a similar model. They appeal to organizations with existing VMware experience and offer consistency across on premises and cloud environments. Operational complexity can increase if teams are not already familiar with the tooling.

Fully managed third party providers aim to simplify everything. They handle infrastructure, updates, security, and scaling, allowing IT teams to focus on higher value work.

Knowing which category aligns with your capabilities makes deeper evaluation far more practical.

Features to Evaluate in Any DaaS Provider

When evaluating DaaS providers, having a clear baseline checklist keeps comparisons grounded. Features look similar on paper, but small differences in how they are delivered can affect daily operations in a big way.

- Virtual desktop and virtual app delivery

A strong provider supports both full desktops and individual virtual apps. This flexibility allows teams to choose what users actually need instead of forcing a one size approach.

- Windows and Linux desktop support

Operating system support matters more than it seems. Some environments rely heavily on Windows desktops, while others need Linux desktops for engineering or specialized workloads.

- Image management and custom images

The ability to create, update, and reuse custom images saves time and reduces configuration drift. Poor image management quickly turns into operational overhead.

- Centralized management tools

Centralized management simplifies updates, policy changes, and troubleshooting. Without it, IT teams end up juggling multiple consoles and manual processes.

- User session controls

Granular session controls help manage resource usage, idle sessions, and access behavior. These controls directly affect performance and cost efficiency.

- Application compatibility

Virtual desktops must support existing software without workarounds. Compatibility issues often surface late and disrupt user workflows if not tested early.

This checklist creates a practical foundation. Once these key features are clear, deeper evaluation around security, pricing, and scalability becomes far easier and more reliable.

Security and Compliance: What You Cannot Afford to Overlook

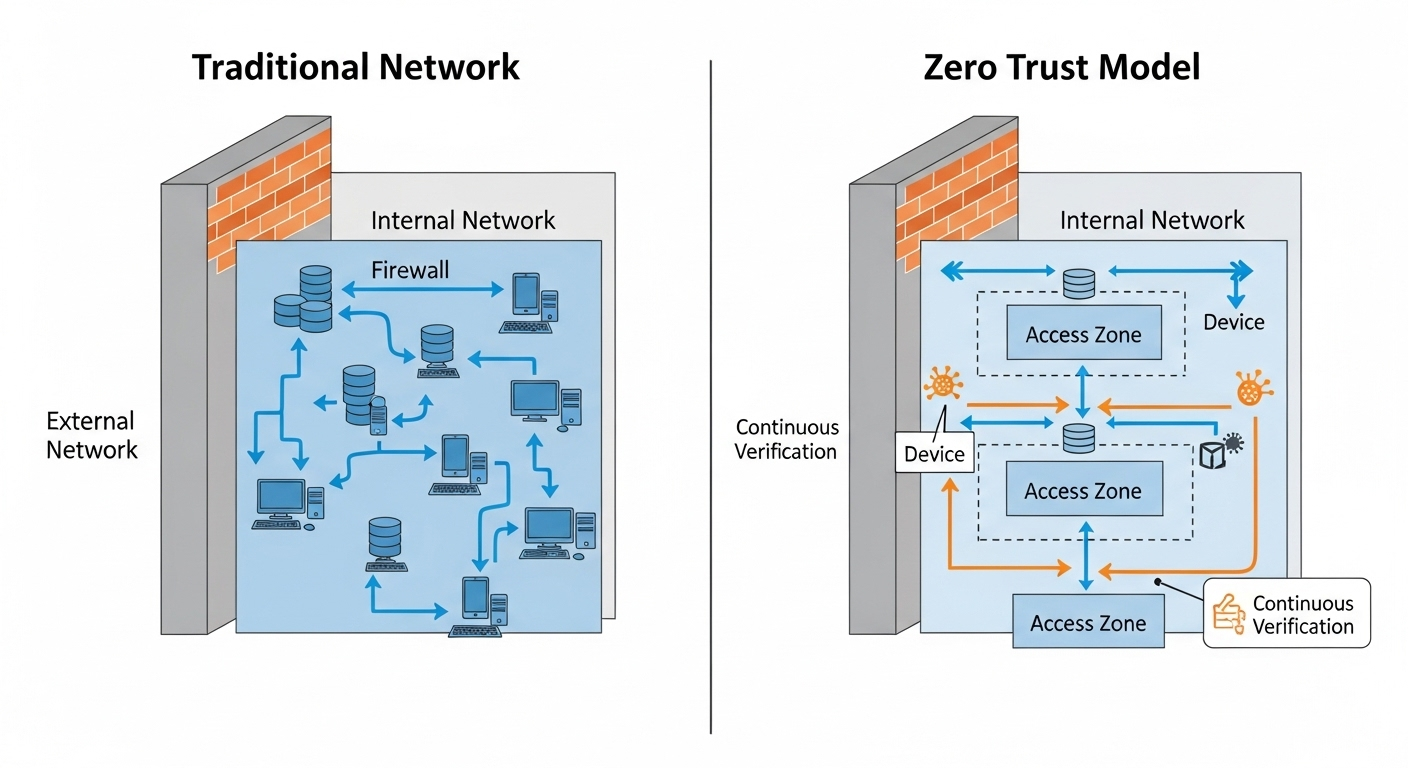

Security and compliance cannot sit at the end of a DaaS evaluation checklist. They belong near the top, because the way a provider protects data and enforces controls directly affects risk, trust, and long term viability. Once virtual desktops are in place, reversing a weak security decision becomes expensive and disruptive.

Centralized data storage is one of the biggest advantages of desktop as a service. Sensitive data stays in controlled cloud environments rather than scattered across local devices. When laptops are lost or employees change roles, data exposure drops sharply. That alone changes the security posture for many organizations.

Compliance requirements add another layer. Industries handling patient records, payment data, or regulated information must meet strict standards. A provider’s certifications, controls, and audit practices matter as much as performance benchmarks. Security cannot rely on promises alone. It needs evidence.

Ongoing monitoring also plays a critical role. Threats evolve, configurations drift, and usage patterns change. Providers that invest in regular audits and visibility tools help organizations detect issues early instead of reacting after damage occurs.

Key security capabilities to verify include:

- Multi-factor authentication to strengthen user access

- Encryption in transit and at rest to protect data flows and storage

- Compliance controls for HIPAA, PCI DSS, and similar regulations

- Regular security audits that validate controls over time

When security and compliance are treated as core decision drivers, the result is a more resilient DaaS deployment that protects sensitive data without slowing users down.

Performance and User Experience: What Actually Affects End Users

Performance decisions made on the backend surface very quickly for end users. When a virtual desktop feels slow, disconnects often, or struggles to load applications, productivity drops and frustration rises. This is where many DaaS evaluations succeed or fail.

Latency is one of the first factors users notice. Internet connection quality, geographic proximity to data centers, and how traffic is routed all influence response time. Even small delays add up when users spend hours inside a desktop session. Low latency is not a luxury; it is a baseline expectation.

Resource allocation matters just as much. Providers differ in how they assign CPU, memory, and storage to each user session. Poor allocation leads to contention, where one user’s workload affects another’s experience. Strong platforms monitor usage patterns and adjust resources to maintain optimal performance throughout the day.

Performance tuning also affects consistency. Some providers optimize for peak loads, while others struggle during busy periods. These differences show up in application responsiveness, login times, and session stability.

The impact on productivity is direct. Smooth performance allows people to focus on work rather than workarounds. When desktops respond quickly and behave predictably, users trust the environment. That trust translates into better adoption, fewer support tickets, and a user experience that supports real work instead of getting in the way.

Integration With Your Existing Infrastructure

Integration often decides how smooth a DaaS rollout feels after the first login. A provider may look strong on paper, but if it does not fit cleanly with your existing infrastructure, friction appears quickly. Users notice it, and IT teams feel it even sooner.

Identity systems sit at the center of this discussion. Most organizations already rely on Active Directory or similar identity services to manage access. A DaaS provider should integrate directly with these systems so users authenticate once and move between tools without confusion. When identity flows cleanly, access stays secure and administration stays manageable.

The same applies to the Microsoft ecosystem. Many teams depend on Microsoft 365, Windows desktops, and related services. Tight alignment here reduces duplication, avoids conflicting policies, and keeps workflows familiar. When desktops, files, and collaboration tools work together, adoption happens faster.

Existing tools and workflows also matter. Monitoring platforms, security controls, and management processes should continue working without heavy redesign. Integration gaps create manual work and increase the chance of errors.

When evaluating providers, confirm support for:

- Seamless integration with current systems and identity platforms

- Hybrid cloud deployments that connect cloud desktops with on premises resources

- Compatibility with existing infrastructure, tools, and workflows

Strong integration shortens deployment timelines, lowers operational risk, and helps virtual desktops feel like a natural extension of what teams already use.

Pricing Models and Total Cost of Ownership

Pricing is where many DaaS decisions quietly go wrong. What looks affordable at first can become expensive once usage grows, features expand, or contracts renew. Understanding pricing models and total cost of ownership early helps avoid those surprises.

Most DaaS platforms operate under an operating expense model rather than a capital expense one. Instead of large upfront hardware purchases, costs are spread over time through subscriptions. This can improve cash flow, but it also requires closer attention to usage patterns. Idle desktops, oversized resource allocations, or unused licenses still cost money month after month.

Subscription types vary. Some providers charge per user, others charge based on compute usage, storage, or session hours. Long term cost efficiency depends on how closely pricing aligns with how your teams actually work. A model that fits a small pilot may not scale well across hundreds or thousands of users.

Hidden costs deserve careful scrutiny. Licensing for operating systems, virtual apps, or third party software can add up quickly. Network egress fees, premium support tiers, and advanced security features may not be included by default.

Key pricing elements to evaluate include:

- Pay as you go pricing tied to actual usage

- Flat rate pricing for predictable workloads

- Hidden fees and licensing costs buried in contracts

- Infrastructure and maintenance savings from offloading hardware

Looking beyond monthly rates and focusing on total cost over time leads to better decisions and fewer budget surprises.
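The comparison between flat rate and pay as you go pricing described above can be sketched in a few lines of Python. This is a minimal illustration, not a pricing tool: the plan classes, rates, and fee amounts are hypothetical assumptions, not real vendor figures, and actual contracts will have more line items.

```python
from dataclasses import dataclass


@dataclass
class FlatRatePlan:
    """Hypothetical per-user subscription: fixed monthly fee per named user."""
    monthly_fee_per_user: float

    def monthly_cost(self, users: int, hours_per_user: float) -> float:
        # Hours are ignored: an idle desktop costs the same as a busy one.
        return users * self.monthly_fee_per_user


@dataclass
class UsagePlan:
    """Hypothetical pay as you go plan: billed per session hour plus storage."""
    rate_per_session_hour: float
    storage_fee_per_user: float

    def monthly_cost(self, users: int, hours_per_user: float) -> float:
        compute = users * hours_per_user * self.rate_per_session_hour
        storage = users * self.storage_fee_per_user
        return compute + storage


def total_cost(plan, users, hours_per_user, months, extra_monthly_fees=0.0):
    """Total cost over the term, including recurring add-ons (licensing,
    egress, premium support) that sit outside the base rate."""
    monthly = plan.monthly_cost(users, hours_per_user) + extra_monthly_fees
    return months * monthly


# Illustrative numbers only (assumptions, not vendor pricing).
flat = FlatRatePlan(monthly_fee_per_user=40.0)
usage = UsagePlan(rate_per_session_hour=0.25, storage_fee_per_user=5.0)

for hours in (40, 160):
    flat_total = total_cost(flat, users=200, hours_per_user=hours, months=36)
    usage_total = total_cost(usage, users=200, hours_per_user=hours,
                             months=36, extra_monthly_fees=500.0)
    print(f"{hours} h/user/month: flat={flat_total:,.0f} usage={usage_total:,.0f}")
```

With these assumed numbers, the usage plan is cheaper for part-time users (40 hours a month) and more expensive for full-time users (160 hours a month), which is why the model has to be run against realistic usage rather than a pilot average.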

Scalability, Flexibility, and Growth Readiness

Scalability becomes important the moment conditions change, and they always do. Teams grow, projects end, seasonal demand rises, and priorities shift. A DaaS provider should handle these changes without forcing major reconfiguration or long approval cycles.

Scaling users up or down needs to be simple. Adding new users quickly supports hiring and onboarding, while removing users just as easily prevents paying for unused resources. Providers that lock organizations into rigid commitments make growth harder than it needs to be.

Flexible work models add another layer. Remote teams, contractors, and temporary workers often require access for limited periods. A scalable platform supports these scenarios without creating security gaps or operational overhead. Desktops should appear when needed and disappear when the work ends.

Resource elasticity also matters. Workloads fluctuate throughout the day and across the year. Platforms that adjust compute and storage dynamically avoid overprovisioning while still delivering reliable performance. This balance supports growth without inflating costs.

Evaluating scalability means looking past current headcount. The right provider supports growth, contraction, and experimentation. When desktops scale smoothly alongside the business, technology becomes an enabler rather than a constraint, and teams stay focused on outcomes instead of infrastructure limits.

Vendor Lock-In and Multi Cloud Support Considerations

Vendor lock in rarely causes problems on day one. It shows up later, when costs rise, service levels change, or business priorities move in a new direction. At that point, switching providers can feel far more difficult than expected.

Provider dependency becomes a risk when desktops, images, identity systems, and data are tightly bound to a single platform. Custom configurations that cannot be exported, proprietary tools, or restrictive contracts limit flexibility. Over time, this can reduce negotiating power and slow down change.

Multi cloud support helps reduce that risk. Providers that operate across multiple cloud environments give organizations more options. This flexibility matters for performance optimization, regulatory requirements, and long term cost control. It also makes future transitions less disruptive if priorities evolve.

Data portability plays a critical role here. Virtual desktops generate profiles, settings, and user data that must remain accessible. If exporting that data is difficult or poorly documented, lock in becomes very real.

When evaluating providers, look closely at:

- Exit strategies that allow you to move workloads cleanly

- Support for multi cloud environments rather than a single dependency

- Data portability for desktops, images, and user profiles

Avoiding vendor lock in is not about planning to leave immediately. It is about preserving options so the platform continues to serve the organization as needs change.

Service Level Agreements and Support Expectations

Service level agreements define how reliable a DaaS provider actually is once the platform is in daily use. Marketing claims fade quickly when desktops go down or performance drops, so written commitments matter. SLAs set expectations for uptime, response times, and accountability when things go wrong.

Uptime guarantees are the first place to look. Providers often promise high availability, but the details matter. How uptime is measured, what counts as downtime, and what remedies exist if targets are missed all affect real reliability. A strong SLA is clear, specific, and enforceable.
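To make an uptime percentage concrete, it helps to convert it into minutes of permitted downtime and to see how exclusions change the result. The sketch below assumes a 30-day measurement period and a hypothetical SLA that excludes scheduled maintenance; the function names are illustrative, and real SLAs define their own measurement windows and exclusions.

```python
def allowed_downtime_minutes(uptime_percent: float, period_days: int = 30) -> float:
    """Minutes of downtime permitted in the period at a given uptime target."""
    total_minutes = period_days * 24 * 60
    return total_minutes * (100.0 - uptime_percent) / 100.0


def measured_uptime_percent(downtime_minutes: float,
                            period_days: int = 30,
                            excluded_minutes: float = 0.0) -> float:
    """Uptime as the provider would report it, after subtracting downtime
    the contract excludes (for example, scheduled maintenance)."""
    total_minutes = period_days * 24 * 60
    counted = max(downtime_minutes - excluded_minutes, 0.0)
    return 100.0 * (1.0 - counted / total_minutes)


for target in (99.5, 99.9, 99.99):
    print(f"{target}% uptime allows {allowed_downtime_minutes(target):.1f} min per 30 days")

# Same outage, two readings: 120 minutes down, 90 of them in a maintenance window.
print(f"all downtime counted: {measured_uptime_percent(120):.3f}%")
print(f"maintenance excluded: {measured_uptime_percent(120, excluded_minutes=90):.3f}%")
```

A 99.9% target over 30 days allows about 43 minutes of downtime. In the second example, the same two-hour outage either misses or meets that target depending on whether maintenance windows count, which is exactly the kind of detail to read for in the contract.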

Support responsiveness is just as important. When users cannot access their desktops, delays ripple across teams. Fast response times, clear escalation paths, and knowledgeable support staff reduce disruption. This becomes even more critical for organizations running time sensitive operations or supporting global teams.

Incident handling deserves close attention. Problems will happen. What matters is how quickly they are detected, communicated, and resolved. Providers that invest in monitoring and transparent incident reporting build trust over time.

Key areas to verify include:

- SLAs and uptime commitments with defined remedies

- Support availability across hours and regions

- Troubleshooting processes and escalation paths

Clear service levels turn a provider relationship into a dependable partnership rather than a source of uncertainty.

How to Evaluate and Compare DaaS Providers Step by Step

Comparing DaaS providers works best when the process is structured. Skipping steps or relying on demos alone often leads to decisions that look good initially but fail under real workloads. A step by step approach keeps evaluation grounded in reality.

- Define user personas and workloads

Start by mapping who will use the desktops and how. Identify roles, applications, performance needs, and usage patterns. This prevents overbuying or underestimating requirements.

- Identify compliance and security requirements

Document industry regulations, data protection standards, and internal policies. These requirements narrow the field quickly and prevent late stage surprises.

- Test performance with pilot users

Run a pilot with real users and real work. Measure login times, application responsiveness, and session stability. Feedback from this phase is often more valuable than specifications.

- Review pricing and contracts

Examine subscription models, licensing terms, and usage limits. Look beyond monthly rates and calculate total cost under realistic scenarios.

- Validate support and SLAs

Review service level agreements, escalation processes, and support coverage. Confirm how issues are handled when performance or availability drops.

Following these steps turns provider selection into a deliberate decision rather than a guess. The result is a clearer path to choosing the right provider with fewer tradeoffs hidden beneath the surface.
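One way to keep the comparison consistent across providers is a simple weighted scoring matrix. The sketch below is only an illustration of that idea: the criteria weights, the 1 to 5 scores, and the provider names are all hypothetical and should be replaced with values agreed on by your own stakeholders after the pilot.

```python
# Hypothetical weights; they should sum to 1.0 and reflect your priorities.
WEIGHTS = {
    "security": 0.30,
    "performance": 0.25,
    "cost": 0.20,
    "integration": 0.15,
    "support": 0.10,
}


def weighted_score(scores: dict, weights: dict = WEIGHTS) -> float:
    """Combine per-criterion scores (1 = poor, 5 = excellent) into one number."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[name] * scores[name] for name in weights)


def rank_providers(candidates: dict) -> list:
    """Return (provider, score) pairs sorted from best to worst."""
    scored = [(name, weighted_score(s)) for name, s in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Placeholder providers and scores, for illustration only.
candidates = {
    "Provider A": {"security": 5, "performance": 3, "cost": 3,
                   "integration": 4, "support": 4},
    "Provider B": {"security": 3, "performance": 5, "cost": 4,
                   "integration": 3, "support": 3},
}

for name, score in rank_providers(candidates):
    print(f"{name}: {score:.2f}")
```

The value of the exercise is less in the final number than in forcing the weights to be stated explicitly. If changing one weight slightly flips the ranking, the providers are close and the decision deserves a longer pilot.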

Why the Right DaaS Provider Should Feel Like a Partner

Choosing a DaaS provider is not a one time transaction. It becomes an ongoing relationship that affects daily operations, long term plans, and how smoothly teams work over time. This is why the right provider should feel less like a vendor and more like a true partner.

Strategic alignment matters here. A provider that understands your strategic goals is better positioned to recommend configurations, pricing models, and performance options that support growth rather than short term convenience. When priorities change, a partner adapts with you instead of forcing rigid constraints.

Ongoing optimization is another signal. The best providers do not disappear after deployment. They help analyze usage patterns, suggest improvements, and adjust resources as needs evolve. This continuous involvement helps maintain performance and cost efficiency without constant internal effort.

Shared success metrics bring accountability into the relationship. When uptime, user experience, and security outcomes are measured together, incentives align naturally. Both sides focus on long term value rather than short term fixes.

A provider that acts as a partner contributes stability, insight, and flexibility. That relationship often becomes the difference between a platform that merely functions and one that consistently supports business success.

Conclusion

Choosing the right DaaS provider is a decision that reaches far beyond infrastructure. It shapes how securely data is handled, how predictable costs remain over time, and how users experience their daily work. When desktops perform well and access feels seamless, teams stay productive. When they do not, friction spreads quickly.

Careful planning reduces that risk. Evaluating providers against real business needs, security requirements, and growth plans helps avoid compromises that surface later. A thoughtful approach also makes it easier to balance flexibility with control, and innovation with stability.

The right DaaS provider supports long term success rather than creating future obstacles. It scales as teams grow, adapts as priorities change, and maintains a consistent user experience without adding unnecessary complexity.

Before making a final decision, take time to assess readiness, requirements, and expectations across the organization. Explore modern, secure DaaS platforms with a clear understanding of what matters most to your teams. Confidence comes from clarity, and clarity leads to a provider that truly fits.

Frequently Asked Questions (FAQs)

1. What should you look for in a DaaS provider?

When choosing a DaaS provider, look for strong security, reliable performance, centralized management, scalability, and compatibility with existing applications and devices. Providers should also offer flexible pricing, responsive support, and compliance features that align with your organization’s operational and security requirements.

2. How do you compare DaaS providers fairly?

To compare DaaS providers fairly, evaluate them using the same criteria for performance, security, pricing, scalability, and support. Testing real workloads with pilot users helps identify differences in user experience, application compatibility, and reliability that may not appear in product specifications alone.

3. What costs should you look at beyond the monthly price?

Monthly pricing rarely tells the full story. Look closely at licensing fees, storage charges, network usage costs, and premium support tiers. These items often appear later and affect total cost. Also consider operational savings. Reduced hardware purchases, lower maintenance effort, and fewer support tickets can offset subscription costs over time.

4. How important are security certifications when choosing a provider?

Security certifications matter because they show how controls are implemented and audited. Attestations against standards like PCI DSS, or documented alignment with regulations like HIPAA, indicate structured processes rather than informal promises. Ask how often audits occur and what monitoring tools are in place. Certifications should be paired with ongoing visibility and clear incident response practices.

5. How secure are DaaS platforms?

DaaS platforms are designed with centralized security controls, including encryption, multi-factor authentication, and role-based access management. Because data remains in the cloud instead of on local devices, DaaS can reduce data exposure risks while supporting compliance requirements such as HIPAA, PCI DSS, and GDPR.

6. How long does it usually take to migrate to a DaaS platform?

Timelines vary based on complexity. Small pilots can launch in days, while full deployments often take weeks. Factors include application compatibility, identity integration, and user training. A phased rollout reduces risk. Starting with a limited group helps surface issues early and improves adoption before wider deployment.

7. Can DaaS work with existing devices and hardware?

Yes, most DaaS platforms work with existing laptops, desktops, thin clients, and mobile devices through a web browser or lightweight client. This allows organizations to extend hardware lifespan while providing secure access to cloud-hosted desktops and applications from multiple locations.

8. How do you avoid vendor lock in with DaaS providers?

Look for providers that support data portability and standard image formats. Understand exit terms in contracts and confirm how desktops and user data can be exported. Multi cloud support also reduces dependency. Providers that operate across environments give organizations more options as needs evolve.

9. What performance tests should you run before deciding?

Test login speed, application load times, and session stability during peak usage. Simulate real workloads rather than ideal conditions. User feedback matters as much as metrics. If desktops feel responsive and predictable, adoption improves and support demands stay manageable.
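Pilot measurements are easier to compare when they are summarized the same way for every provider. The sketch below assumes you have collected login times in seconds by hand or from your own logging; the sample values are made up, and the nearest-rank percentile is just one common way to capture the slow tail that averages hide.

```python
import math
import statistics


def summarize_login_times(samples_seconds: list) -> dict:
    """Summarize login times with the median, a nearest-rank 95th
    percentile, and the worst observed value."""
    if not samples_seconds:
        raise ValueError("no samples collected")
    ordered = sorted(samples_seconds)
    # Nearest-rank method: smallest value with at least 95% of samples at or below it.
    rank = max(1, math.ceil(0.95 * len(ordered)))
    return {
        "median": statistics.median(ordered),
        "p95": ordered[rank - 1],
        "worst": ordered[-1],
    }


# Made-up pilot data: the same ten users, off-peak versus peak hours.
off_peak = [8, 9, 10, 9, 11, 10, 8, 9, 10, 9]
peak = [9, 12, 30, 11, 14, 45, 10, 13, 12, 16]

print("off-peak:", summarize_login_times(off_peak))
print("peak:    ", summarize_login_times(peak))
```

In this invented data set the median barely moves between off-peak and peak, while the 95th percentile jumps sharply. That gap is what users experience as an unpredictable desktop, so it is worth tracking alongside their feedback.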